Wei Hao Khoong

BUSU-Net: An Ensemble U-Net Framework for Medical Image Segmentation

Mar 08, 2020

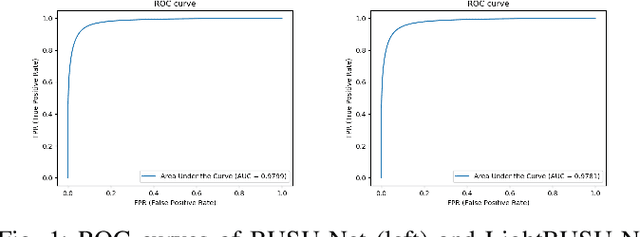

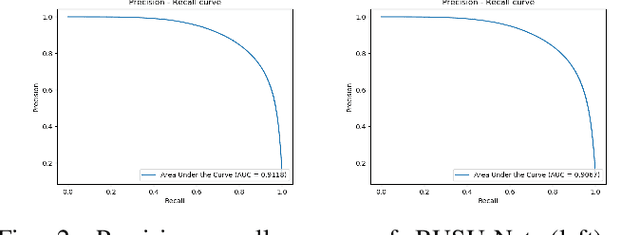

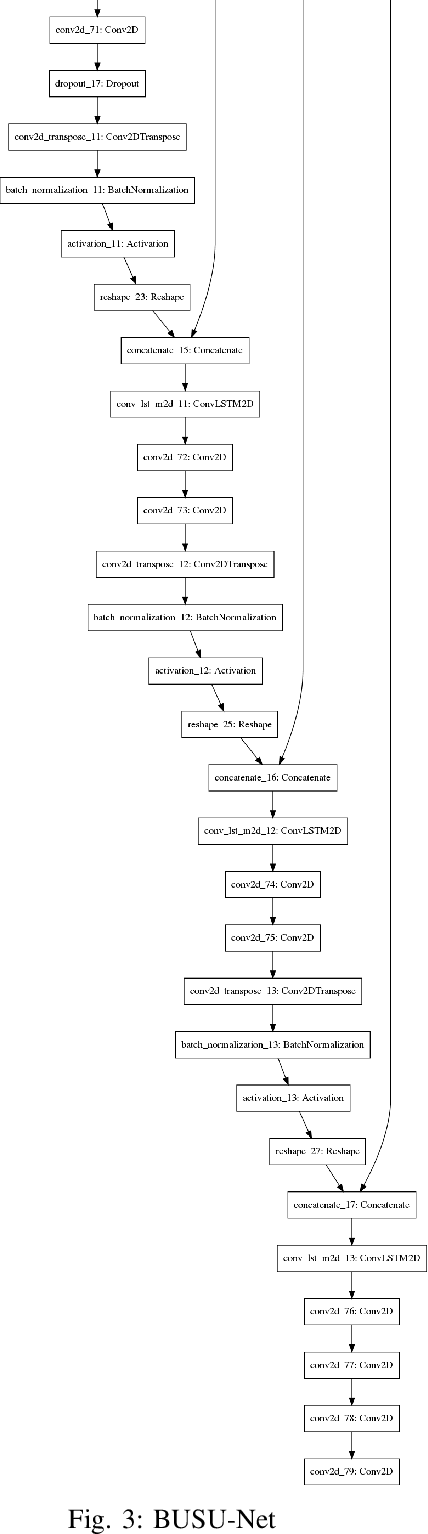

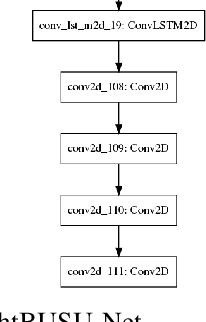

Abstract: In recent years, convolutional neural networks (CNNs) have revolutionized medical image analysis. One of the best-known CNN architectures for semantic segmentation is the U-Net, which has been successful in several medical image segmentation applications. More recently, with the rise of autoML and advancements in neural architecture search (NAS), methods such as NAS-Unet have been proposed for NAS in medical image segmentation. In this paper, with inspiration from LadderNet, U-Net, autoML and NAS, we propose an ensemble deep neural network with an underlying U-Net framework consisting of bi-directional convolutional LSTMs and dense connections, where the first (from left) U-Net-like network is deeper than the second (from left). We show that this ensemble network outperforms recent state-of-the-art networks in several evaluation metrics. We also evaluate a lightweight version of the ensemble network, which outperforms recent state-of-the-art networks in some evaluation metrics.

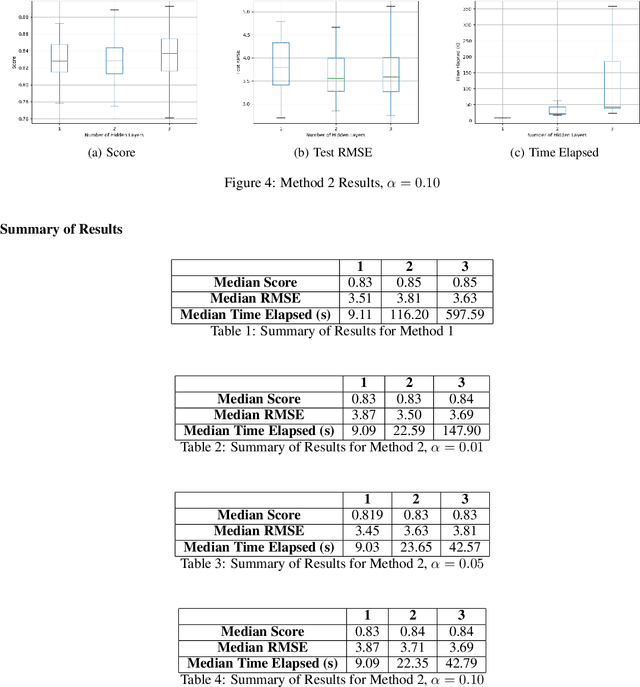

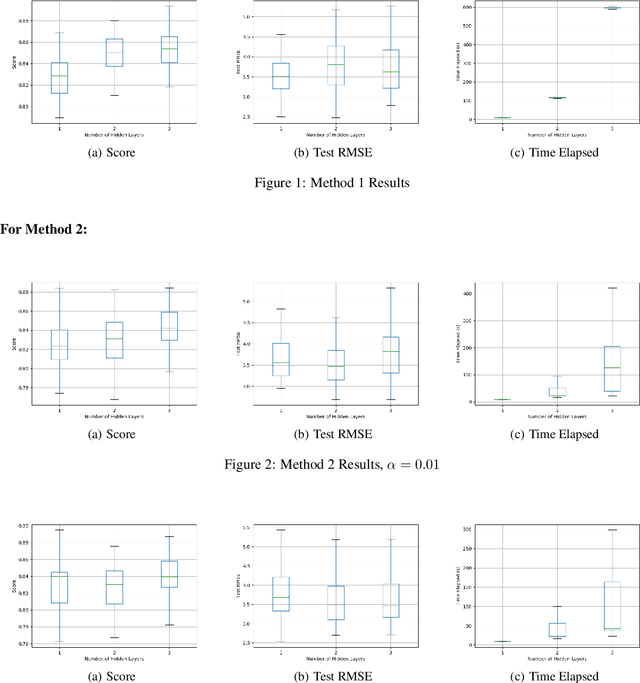

A Heuristic for Efficient Reduction in Hidden Layer Combinations For Feedforward Neural Networks

Sep 25, 2019

Abstract: In this paper, we describe the hyper-parameter search problem in machine learning and present a heuristic approach to tackle it. In most learning algorithms, a set of hyper-parameters must be determined before training commences. The choice of hyper-parameters can significantly affect the final model's performance, yet determining a good choice is in most cases complex and consumes a large amount of computing resources. We show the differences between an exhaustive search of hyper-parameters and a heuristic search, and show that the heuristic significantly reduces the time taken to obtain the resulting model, with marginal differences in evaluation metrics compared to the benchmark case.
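
To make the cost gap between exhaustive and heuristic search concrete, the following is a minimal sketch and not the paper's heuristic, which the abstract does not specify. It assumes a hypothetical greedy layer-by-layer rule, a made-up set of candidate layer widths, and a stand-in `toy_score` function in place of actually training and evaluating a network.

```python
from itertools import product


def exhaustive_configs(widths, max_layers):
    """Enumerate every hidden-layer layout with 1..max_layers layers."""
    configs = []
    for depth in range(1, max_layers + 1):
        configs.extend(product(widths, repeat=depth))
    return configs


def greedy_search(widths, max_layers, score):
    """Hypothetical heuristic (an assumption, not the paper's method):
    grow the network one layer at a time, keep the best-scoring extension,
    and stop as soon as adding a layer no longer improves the score."""
    best, best_score = (), float("-inf")
    evaluated = 0
    for _ in range(max_layers):
        candidates = [best + (w,) for w in widths]
        evaluated += len(candidates)
        top = max(candidates, key=score)
        top_score = score(top)
        if top_score <= best_score:
            break
        best, best_score = top, top_score
    return best, evaluated


def toy_score(config):
    """Stand-in for a validation metric; a real search would train a model."""
    return -abs(sum(config) - 96)


if __name__ == "__main__":
    widths = (16, 32, 64)  # illustrative candidate layer widths
    all_configs = exhaustive_configs(widths, max_layers=3)
    best, evaluated = greedy_search(widths, max_layers=3, score=toy_score)
    print(f"exhaustive search evaluates {len(all_configs)} configurations")
    print(f"greedy search evaluates {evaluated} and selects {best}")
```

The exhaustive count grows geometrically with the number of layers, while the greedy rule evaluates at most the number of candidate widths times the number of layers. The price is that a greedy rule can miss the best configuration.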
