Wang Zihao

Bayesian Logistic Shape Model Inference: application to cochlea image segmentation

May 05, 2021

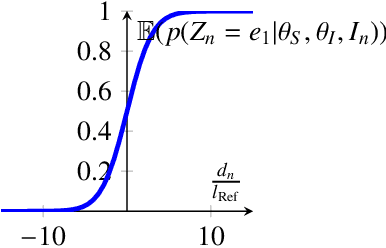

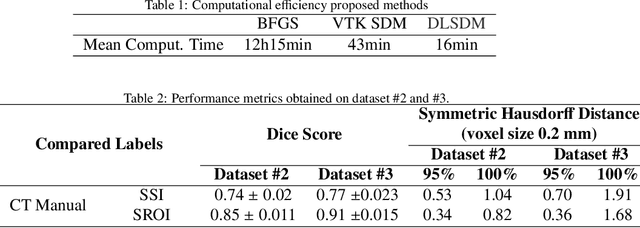

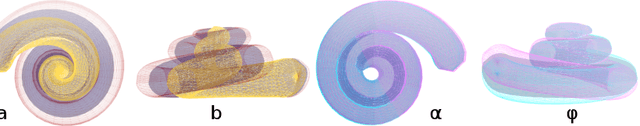

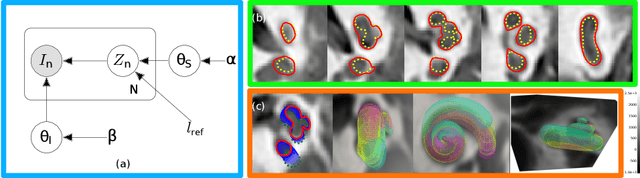

Abstract: Incorporating shape information is essential for the delineation of many organs and anatomical structures in medical images. While previous work has mainly focused on parametric spatial transformations applied to reference template shapes, in this paper we address the Bayesian inference of parametric shape models for segmenting medical images, with the objective of providing interpretable results. The proposed framework defines a likelihood appearance probability and a prior label probability based on a generic shape function through a logistic function. A reference length parameter defined in the sigmoid controls the trade-off between shape and appearance information. The inference of shape parameters is performed within an Expectation-Maximisation approach in which a Gauss-Newton optimization stage provides an approximation of the posterior probability of the shape parameters. This framework is applied to the segmentation of cochlea structures from clinical CT images constrained by a 10-parameter shape model. It is evaluated on three different datasets, one of which includes more than 200 patient images. The results show performance comparable to supervised methods and better than previously proposed unsupervised ones. The framework also enables an analysis of parameter distributions and the quantification of segmentation uncertainty, including the effect of the shape model.
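As a rough illustration of how a logistic function can turn a shape function into a prior label probability, the sketch below passes a signed distance through a sigmoid scaled by a reference length. It is not the paper's implementation: the circle used as the shape, the sign convention (positive inside), and the names `signed_distance_to_circle`, `logistic_shape_prior` and `ref_length` are all assumptions made for this example.

```python
import numpy as np


def signed_distance_to_circle(points, center, radius):
    """Signed distance to a circle: positive inside, zero on the boundary,
    negative outside (sign convention assumed for this sketch)."""
    return radius - np.linalg.norm(points - center, axis=-1)


def logistic_shape_prior(signed_dist, ref_length):
    """Foreground prior probability as sigmoid(signed_dist / ref_length)."""
    return 1.0 / (1.0 + np.exp(-signed_dist / ref_length))


# Three 2D points: inside the circle, on its boundary, and outside it.
points = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]])
dist = signed_distance_to_circle(points, center=np.array([0.0, 0.0]), radius=1.0)

# Small reference length: the prior is nearly binary, so shape dominates.
sharp = logistic_shape_prior(dist, ref_length=0.1)
# Large reference length: the prior stays near 0.5, so appearance dominates.
soft = logistic_shape_prior(dist, ref_length=10.0)
```

In this toy setting the boundary point always receives probability 0.5, while the reference length decides how quickly the prior saturates away from the boundary, which is one way to read the trade-off between shape and appearance described in the abstract.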

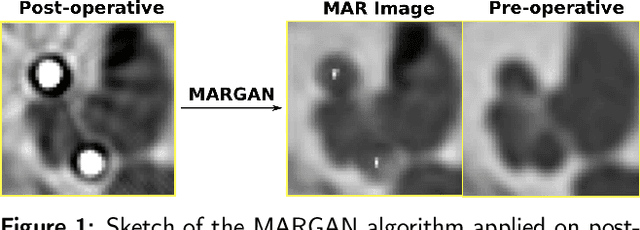

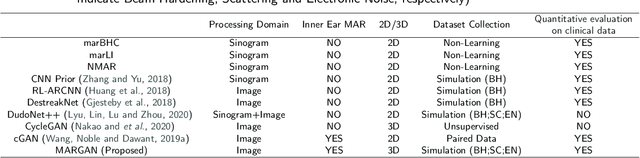

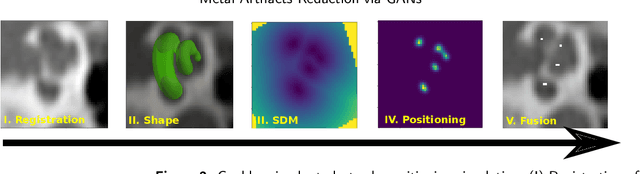

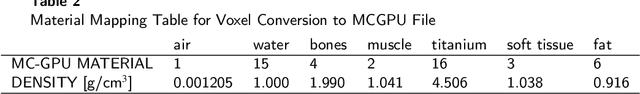

Inner-ear Augmented Metal Artifact Reduction with Simulation-based 3D Generative Adversarial Networks

Apr 26, 2021

Abstract: Metal artifacts often create difficulties for high-quality visual assessment of post-operative imaging in computed tomography (CT). A vast body of methods has been proposed to tackle this issue, but these methods were designed for regular CT scans and their performance is usually insufficient when imaging tiny implants. In the context of post-operative high-resolution CT imaging, we propose a 3D metal artifact reduction algorithm based on a generative adversarial neural network. It is based on the simulation of physically realistic CT metal artifacts created by cochlear implant electrodes on preoperative images. The generated images serve to train a 3D generative adversarial network for artifact reduction. The proposed approach was assessed qualitatively and quantitatively on clinical conventional and cone-beam CT of cochlear implant postoperative images. These experiments show that the proposed method outperforms other general metal artifact reduction approaches.
