Wang Jun

MobileHolo: A Lightweight Complex-Valued Deformable CNN for High-Quality Computer-Generated Hologram

Jun 17, 2025Abstract:Holographic displays have significant potential in virtual reality and augmented reality owing to their ability to provide all the depth cues. Deep learning-based methods play an important role in computer-generated holograms (CGH). During the diffraction process, each pixel exerts an influence on the reconstructed image. However, previous works face challenges in capturing sufficient information to accurately model this process, primarily due to the inadequacy of their effective receptive field (ERF). Here, we designed complex-valued deformable convolution for integration into network, enabling dynamic adjustment of the convolution kernel's shape to increase flexibility of ERF for better feature extraction. This approach allows us to utilize a single model while achieving state-of-the-art performance in both simulated and optical experiment reconstructions, surpassing existing open-source models. Specifically, our method has a peak signal-to-noise ratio that is 2.04 dB, 5.31 dB, and 9.71 dB higher than that of CCNN-CGH, HoloNet, and Holo-encoder, respectively, when the resolution is 1920$\times$1072. The number of parameters of our model is only about one-eighth of that of CCNN-CGH.

Deep Doubly Supervised Transfer Network for Diagnosis of Breast Cancer with Imbalanced Ultrasound Imaging Modalities

Jun 29, 2020

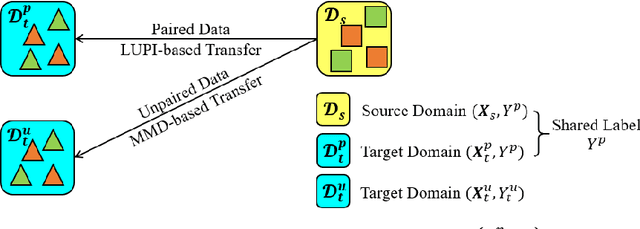

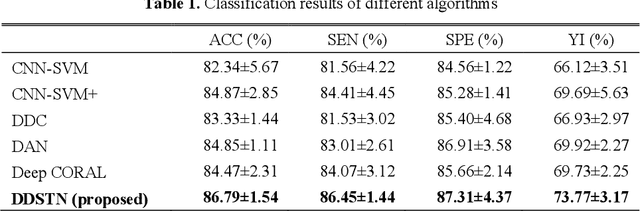

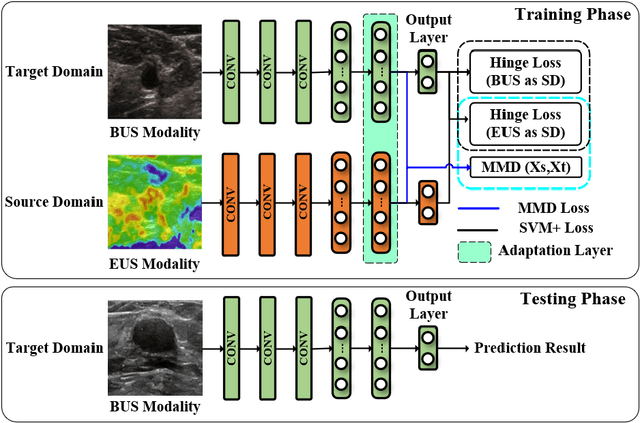

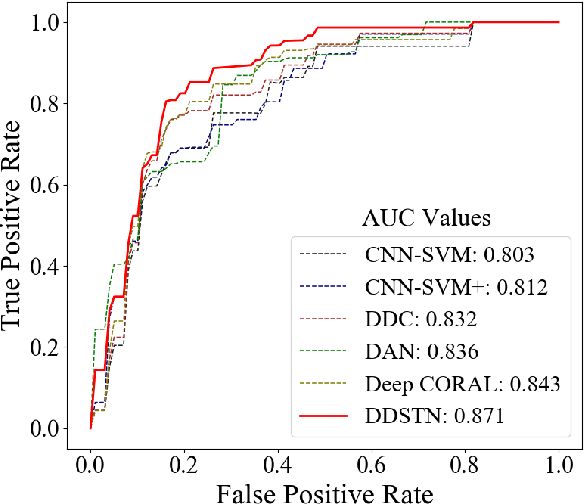

Abstract:Elastography ultrasound (EUS) provides additional bio-mechanical in-formation about lesion for B-mode ultrasound (BUS) in the diagnosis of breast cancers. However, joint utilization of both BUS and EUS is not popular due to the lack of EUS devices in rural hospitals, which arouses a novel modality im-balance problem in computer-aided diagnosis (CAD) for breast cancers. Current transfer learning (TL) pay little attention to this special issue of clinical modality imbalance, that is, the source domain (EUS modality) has fewer labeled samples than those in the target domain (BUS modality). Moreover, these TL methods cannot fully use the label information to explore the intrinsic relation between two modalities and then guide the promoted knowledge transfer. To this end, we propose a novel doubly supervised TL network (DDSTN) that integrates the Learning Using Privileged Information (LUPI) paradigm and the Maximum Mean Discrepancy (MMD) criterion into a unified deep TL framework. The proposed algorithm can not only make full use of the shared labels to effectively guide knowledge transfer by LUPI paradigm, but also perform additional super-vised transfer between unpaired data. We further introduce the MMD criterion to enhance the knowledge transfer. The experimental results on the breast ultra-sound dataset indicate that the proposed DDSTN outperforms all the compared state-of-the-art algorithms for the BUS-based CAD.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge