Wang Jian

Structure-Aware Distributed Backdoor Attacks in Federated Learning

Mar 04, 2026

Abstract: While federated learning protects data privacy, it also makes the model update process vulnerable to long-term stealthy perturbations. Existing studies on backdoor attacks in federated learning mainly focus on trigger design or poisoning strategies, typically assuming that identical perturbations behave similarly across different model architectures. This assumption overlooks the impact of model structure on perturbation effectiveness. From a structure-aware perspective, this paper analyzes the coupling relationship between model architectures and backdoor perturbations. We introduce two metrics, Structural Responsiveness Score (SRS) and Structural Compatibility Coefficient (SCC), to measure a model's sensitivity to perturbations and its preference for fractal perturbations. Based on these metrics, we develop a structure-aware fractal perturbation injection framework (TFI) to study the role of architectural properties in the backdoor injection process. Experimental results show that model architecture significantly influences the propagation and aggregation of perturbations. Networks with multi-path feature fusion can amplify and retain fractal perturbations even under low poisoning ratios, while models with low structural compatibility constrain their effectiveness. Further analysis reveals a strong correlation between SCC and attack success rate, suggesting that SCC can predict perturbation survivability. These findings highlight that backdoor behaviors in federated learning depend not only on perturbation design or poisoning intensity but also on the interaction between model architecture and aggregation mechanisms, offering new insights for structure-aware defense design.

Auto-weighting for Breast Cancer Classification in Multimodal Ultrasound

Aug 08, 2020

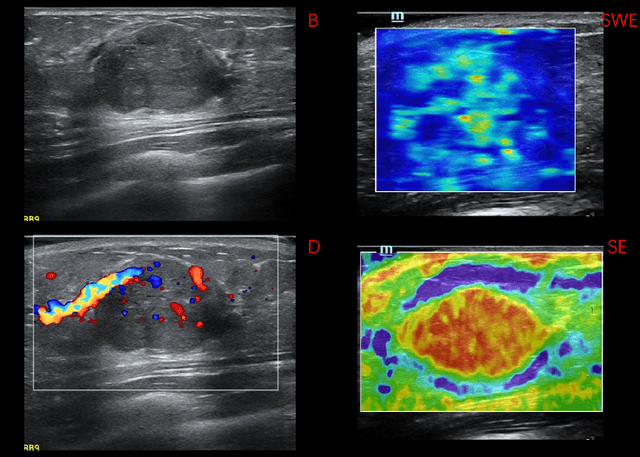

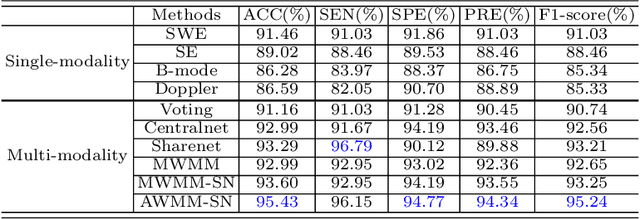

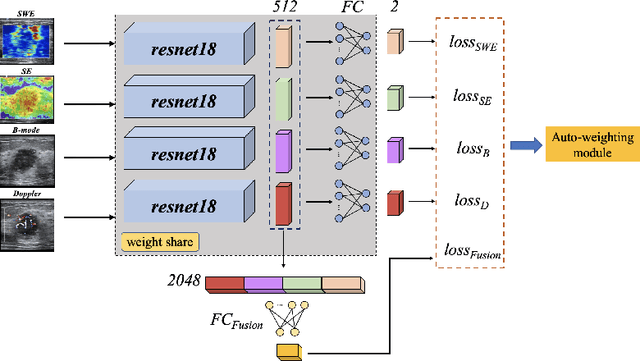

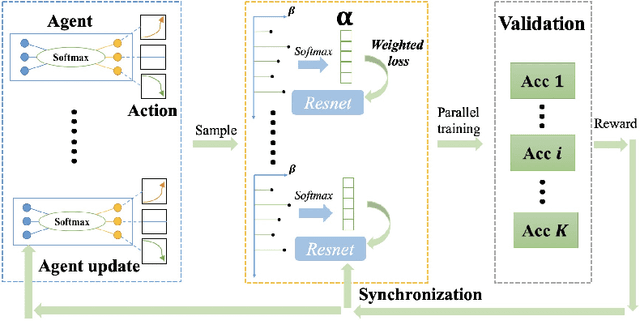

Abstract: Breast cancer is the most common invasive cancer in women. Besides the primary B-mode ultrasound screening, sonographers have explored the inclusion of Doppler, strain and shear-wave elasticity imaging to advance the diagnosis. However, recognizing useful patterns in all types of images and weighing up the significance of each modality can elude less-experienced clinicians. In this paper, we explore, for the first time, an automatic way to combine the four types of ultrasonography to discriminate between benign and malignant breast nodules. A novel multimodal network is proposed, along with promising learnability and simplicity to improve classification accuracy. The key is using a weight-sharing strategy to encourage interactions between modalities and adopting an additional cross-modalities objective to integrate global information. In contrast to hardcoding the weights of each modality in the model, we embed it in a Reinforcement Learning framework to learn this weighting in an end-to-end manner. Thus the model is trained to seek the optimal multimodal combination without handcrafted heuristics. The proposed framework is evaluated on a dataset containing 1616 sets of multimodal images. Results showed that the model achieved a high classification accuracy of 95.4%, which indicates the effectiveness of the proposed method.
