Walid Mahdi

Automatic video scene segmentation based on spatial-temporal clues and rhythm

Dec 15, 2014

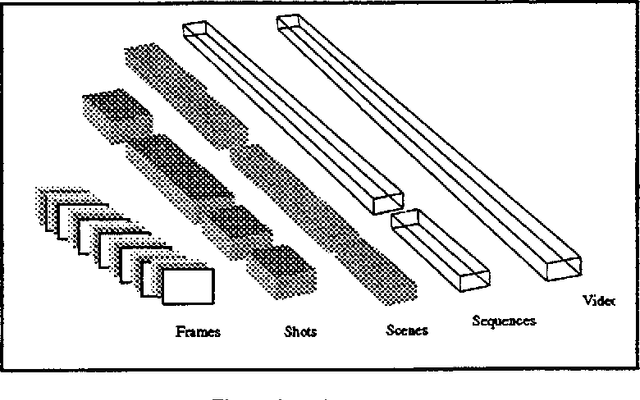

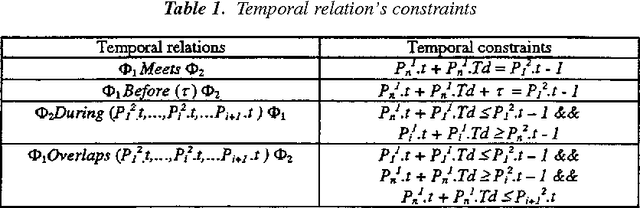

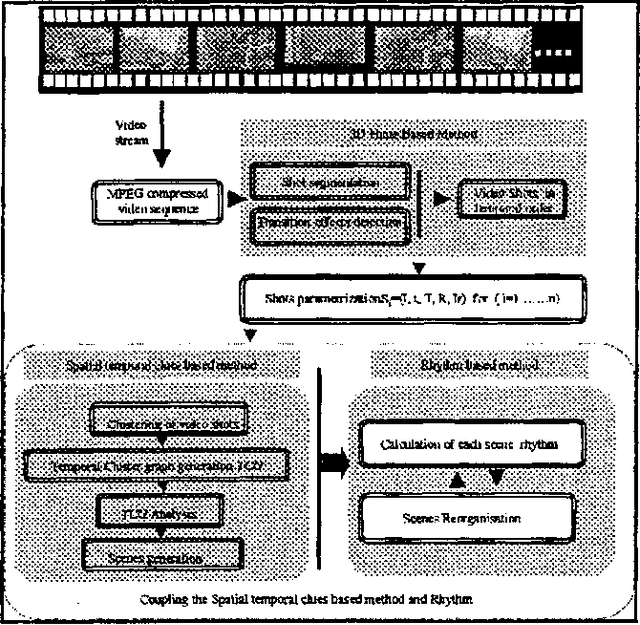

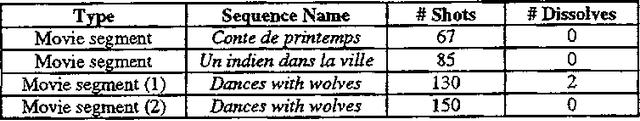

Abstract: With ever-increasing computing power and data storage capacity, the potential for large digital video libraries is growing rapidly. However, widespread use of video is currently limited by its opaque nature. Indeed, a user who must browse a video sequentially needs too much time to find segments of interest within it. Therefore, providing an environment that is both convenient and efficient for video storage and retrieval, especially for content-based searching of the kind that exists in traditional text-based database systems, has been the focus of recent and important efforts by a large research community. In this paper, we propose a new automatic video scene segmentation method that exploits two main video features: the spatial-temporal relationship and the rhythm of shots. The experimental evidence we obtained from an 80-minute video showed that our prototype provides very high accuracy for video segmentation.

* 25 pages, 12 figures

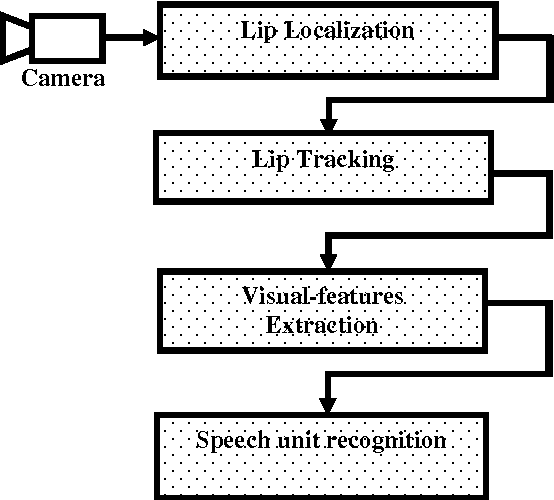

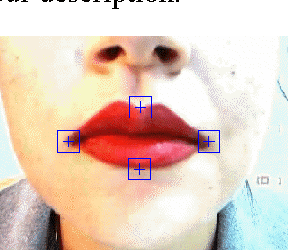

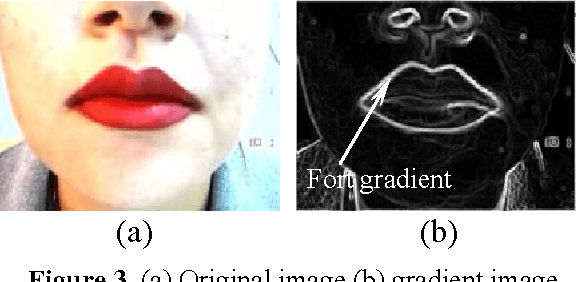

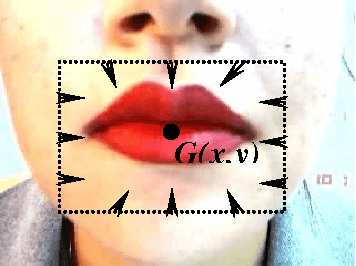

Lip Localization and Viseme Classification for Visual Speech Recognition

Jan 19, 2013

Abstract: The need for an automatic lip-reading system is ever increasing. In fact, the extraction and reliable analysis of facial movements now form an important part of many multimedia systems, such as videoconferencing, low communication systems, and lip-reading systems. In addition, visual information is essential for people with special needs. We can imagine, for example, a dependent person commanding a machine with a simple lip movement or by pronouncing a single syllable. Moreover, people with hearing problems compensate for their special needs by lip-reading as well as listening to the person with whom they are talking.

* 14 pages

A new approach for digit recognition based on hand gesture analysis

Jun 27, 2009

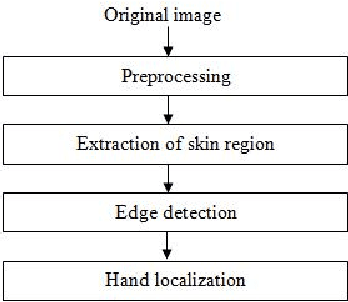

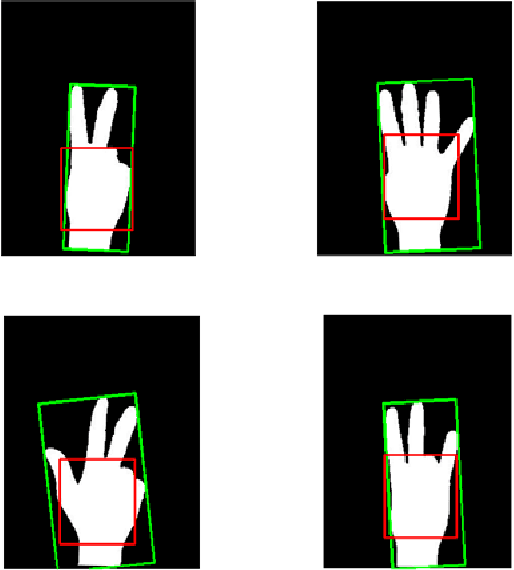

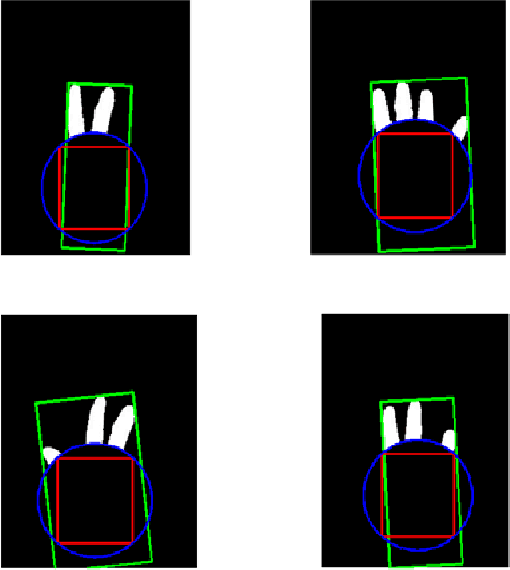

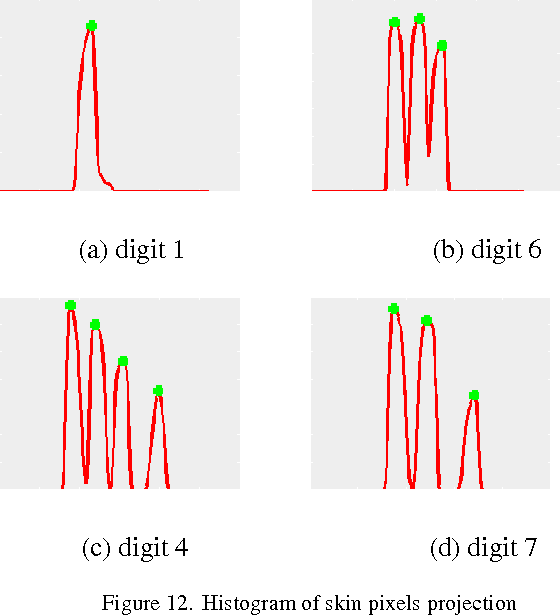

Abstract: We present in this paper a new approach for hand gesture analysis that allows digit recognition. The analysis is based on extracting a set of features from a hand image and then combining them by using an induction graph. The most important features we extract from each image are the finger locations, their heights, and the distance between each pair of fingers. Our approach consists of three steps: (i) hand detection and localization, (ii) finger extraction, and (iii) feature identification and combination for digit recognition. Each input image is assumed to contain only one person, so we apply a fuzzy classifier to identify the skin pixels. In the finger extraction step, we attempt to remove all the hand components except the fingers; this process is based on the anatomical properties of the hand. The final step consists of building a histogram of the detected fingers in order to extract the features that will be used for digit recognition. The approach is invariant to scale, rotation, and translation of the hand. Experiments have been undertaken to show the effectiveness of the proposed approach.

* 8 pages, International Journal of Computer Science and Information Security
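
As a rough illustration of the histogram-based feature step described in the abstract above, the sketch below takes a binary mask that is assumed to already contain only the fingers, builds a column histogram, and derives finger locations, heights, and pairwise distances. The function names, the mask representation, and the use of a vertical projection are assumptions made for this example; the paper's actual histogram construction, its normalization for scale and rotation invariance, and the induction-graph classifier are not reproduced here.

```python
from itertools import combinations


def column_histogram(mask):
    """Count foreground pixels in each column of a binary mask (list of rows)."""
    return [sum(col) for col in zip(*mask)]


def finger_features(mask):
    """Extract per-finger location and height, plus pairwise distances.

    Assumption: each finger shows up as one contiguous run of non-empty
    columns in the histogram. This is a simplification for illustration.
    """
    hist = column_histogram(mask)
    fingers = []  # list of (center_column, height) tuples
    start = None
    # A trailing 0 acts as a sentinel so a run touching the right edge is closed.
    for x, count in enumerate(hist + [0]):
        if count > 0 and start is None:
            start = x
        elif count == 0 and start is not None:
            run = hist[start:x]
            center = (start + x - 1) / 2
            fingers.append((center, max(run)))
            start = None

    locations = [c for c, _ in fingers]
    heights = [h for _, h in fingers]
    distances = [abs(a - b) for a, b in combinations(locations, 2)]
    return {
        "count": len(fingers),
        "locations": locations,
        "heights": heights,
        "distances": distances,
    }


if __name__ == "__main__":
    # Hypothetical toy mask: one thin tall finger and one wider short finger.
    toy_mask = [
        [0, 1, 0, 0, 1, 1, 0],
        [0, 1, 0, 0, 1, 1, 0],
        [0, 1, 0, 0, 0, 0, 0],
    ]
    print(finger_features(toy_mask))
```

In a full pipeline, the resulting feature dictionary would be passed to a classifier; the abstract names an induction graph for that role, which this sketch leaves out.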
