Vishwas M. Shetty

G-IFT: A Gated Linear Unit adapter with Iterative Fine-Tuning for Low-Resource Children's Speaker Verification

Aug 11, 2025

Abstract: Speaker Verification (SV) systems trained on adult speech often underperform on children's speech because of the acoustic mismatch, and the limited amount of children's speech data makes fine-tuning less effective. In this paper, we propose an innovative framework, a Gated Linear Unit adapter with Iterative Fine-Tuning (G-IFT), to enhance knowledge transfer efficiency between the high-resource adult speech domain and the low-resource children's speech domain. In this framework, a Gated Linear Unit adapter is first inserted between the pre-trained speaker embedding model and the classifier. Then the classifier, adapter, and pre-trained speaker embedding model are optimized sequentially in an iterative way. This framework is agnostic to the underlying architecture of the SV system. Our experiments on ECAPA-TDNN, ResNet, and X-vector architectures using the OGI and MyST datasets demonstrate that the G-IFT framework yields consistent reductions in Equal Error Rate compared to baseline methods.
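The two ingredients named in the abstract, a gated adapter placed on top of the speaker embedding and a sequential update order, can be illustrated with a minimal pure-Python sketch. It assumes the common Gated Linear Unit form, (W_v x + b_v) multiplied element-wise by sigmoid(W_g x + b_g); the abstract does not give the adapter's exact layout, the number of rounds, or any stopping rule, so the function names, the toy weights, and `num_rounds` below are all hypothetical.

```python
import math


def sigmoid(z):
    """Logistic function used as the gate non-linearity."""
    return 1.0 / (1.0 + math.exp(-z))


def linear(x, weight, bias):
    """Return weight @ x + bias for a list-of-lists weight matrix."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weight, bias)]


def glu_adapter(embedding, w_value, b_value, w_gate, b_gate):
    """Assumed GLU form: (W_v x + b_v) * sigmoid(W_g x + b_g), element-wise."""
    value = linear(embedding, w_value, b_value)
    gate = linear(embedding, w_gate, b_gate)
    return [v * sigmoid(g) for v, g in zip(value, gate)]


def ift_schedule(num_rounds,
                 order=("classifier", "adapter", "embedding_model")):
    """List which module is trainable at each stage; the others stay frozen.

    The order follows the abstract; num_rounds is a hypothetical knob.
    """
    return [(r, name) for r in range(num_rounds) for name in order]


# Toy 2-dimensional embedding: identity value path, zero gate weights,
# so every gate equals sigmoid(0) = 0.5.
identity = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
adapted = glu_adapter([2.0, -4.0], identity, [0.0, 0.0], zeros, [0.0, 0.0])
schedule = ift_schedule(num_rounds=2)
```

In a real system each entry of `schedule` would correspond to a training stage in which only the named module receives gradient updates; the sketch only records that ordering and does not train anything.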

Investigation of Speaker-adaptation methods in Transformer based ASR

Aug 07, 2020

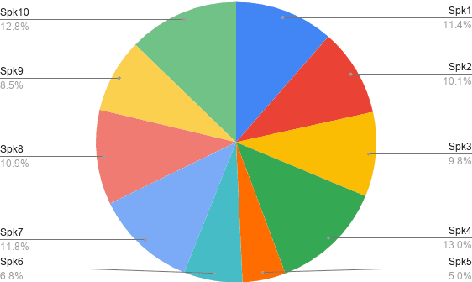

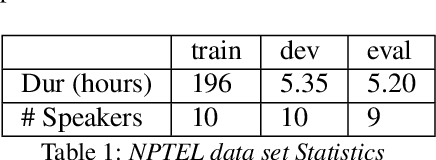

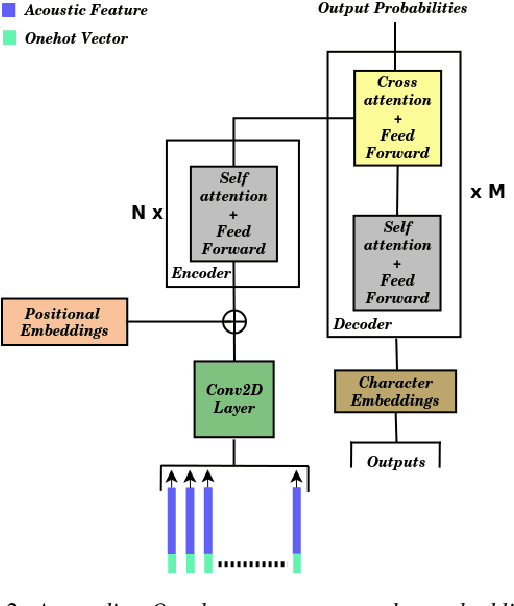

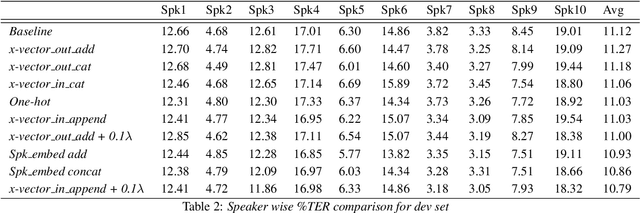

Abstract: End-to-end models are fast replacing conventional hybrid models in automatic speech recognition. A transformer is a sequence-to-sequence framework based solely on attention that was initially applied to the machine translation task. This end-to-end framework has been shown to give promising results when used for automatic speech recognition as well. In this paper, we explore different ways of incorporating speaker information while training a transformer-based model to improve its performance. We present speaker information in the form of speaker embeddings for each of the speakers. Two broad categories of speaker embeddings are used: (i) fixed embeddings, and (ii) learned embeddings. We experiment using speaker embeddings learned along with the model training, as well as one-hot vectors and x-vectors. Using these different speaker embeddings, we obtain an average relative improvement of 1% to 3% in the token error rate. We report results on the NPTEL lecture database. NPTEL is an open-source e-learning portal providing content from top Indian universities.
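The distinction between fixed and learned speaker embeddings can be made concrete with a small sketch. The abstract does not say where the speaker vector enters the transformer, so appending it to every acoustic frame is an assumption made purely for illustration, and the helper names, frame values, and table contents are hypothetical.

```python
def one_hot(speaker_index, num_speakers):
    """Fixed embedding: a one-hot vector identifying the speaker."""
    return [1.0 if i == speaker_index else 0.0 for i in range(num_speakers)]


def append_speaker_embedding(frames, speaker_embedding):
    """Concatenate the same speaker vector to every acoustic frame.

    Assumption: concatenation at the input; the abstract does not
    specify how the speaker information is incorporated.
    """
    return [frame + speaker_embedding for frame in frames]


# Two toy acoustic frames with three features each.
frames = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]

# Fixed embedding: one-hot vector for speaker 1 out of 3 speakers.
fixed = one_hot(1, num_speakers=3)

# Learned embedding: a lookup table whose rows would be updated together
# with the model during training (values here are placeholders).
learned_table = {1: [0.05, -0.02]}

augmented_fixed = append_speaker_embedding(frames, fixed)
augmented_learned = append_speaker_embedding(frames, learned_table[1])
```

An x-vector would be used the same way as the one-hot vector here: it is computed by a separate speaker model and stays fixed while the recognizer trains.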
