Utkarsh Contractor

Zoom, Enhance! Measuring Surveillance GAN Up-sampling

Aug 20, 2021

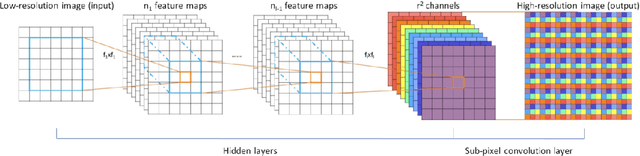

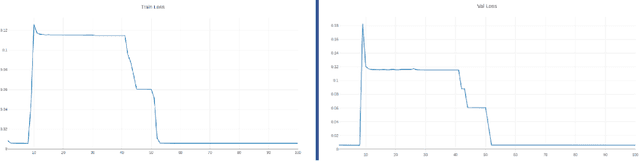

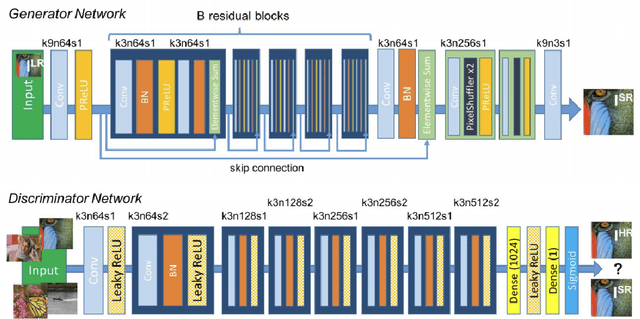

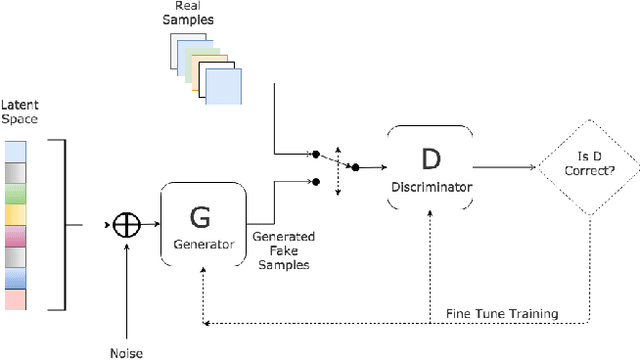

Abstract: Deep neural networks have been used very successfully in many computer vision and pattern recognition applications. While Convolutional Neural Networks (CNNs) have shown the path to state-of-the-art image classification, Generative Adversarial Networks (GANs) have provided state-of-the-art capabilities in image generation. In this paper we extend the applications of CNNs and GANs to experiment with up-sampling techniques in the domains of security and surveillance. Through this work we evaluate, compare, and contrast state-of-the-art techniques in both CNN-based and GAN-based image and video up-sampling in the surveillance domain. As a result of this study we also provide experimental evidence to establish DISTS as a stronger Image Quality Assessment (IQA) metric for comparing GAN-based image up-sampling in the surveillance domain.
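To make the idea of scoring an up-sampled image against a reference concrete, here is a minimal sketch of a full-reference IQA comparison. It is not the paper's method: it substitutes nearest-neighbour up-sampling for the CNN and GAN models and PSNR for DISTS (which requires a pretrained deep network), and the function names `upsample_nearest` and `psnr` are hypothetical.

```python
import math


def upsample_nearest(img, factor):
    """Nearest-neighbour up-sampling of a 2-D grayscale image (list of rows).

    Stand-in for a learned up-sampler; assumption, not the paper's model.
    """
    out = []
    for row in img:
        # Repeat every pixel `factor` times horizontally ...
        wide = [px for px in row for _ in range(factor)]
        # ... and every widened row `factor` times vertically.
        out.extend(list(wide) for _ in range(factor))
    return out


def psnr(reference, candidate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images.

    PSNR is used here only as a simple full-reference metric, not as DISTS.
    """
    if len(reference) != len(candidate) or any(
        len(r) != len(c) for r, c in zip(reference, candidate)
    ):
        raise ValueError("images must have the same shape")
    squared_errors = [
        (r - c) ** 2
        for ref_row, cand_row in zip(reference, candidate)
        for r, c in zip(ref_row, cand_row)
    ]
    mse = sum(squared_errors) / len(squared_errors)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Toy usage: up-sample a 2x2 frame by 2 and score it against a 4x4 reference.
low_res = [[10, 200], [200, 10]]
reference = [
    [10, 12, 198, 200],
    [12, 10, 200, 198],
    [200, 198, 10, 12],
    [198, 200, 12, 10],
]
score_db = psnr(reference, upsample_nearest(low_res, 2))
```

A higher PSNR means a smaller pixel-wise error. Perceptual metrics such as DISTS compare deep feature statistics instead, which is why they can rank GAN outputs differently from pixel-wise scores.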

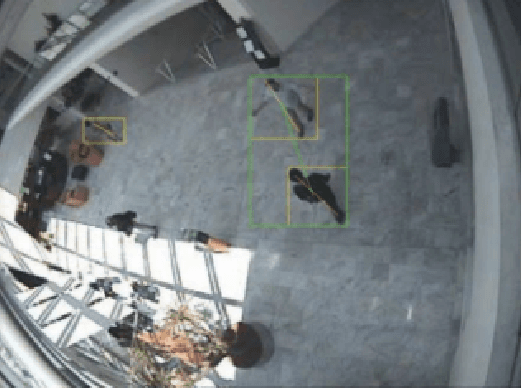

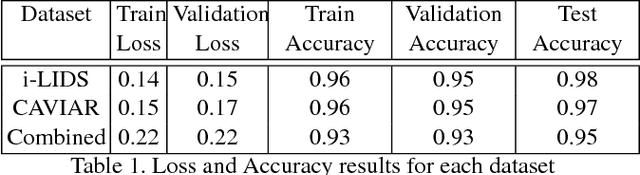

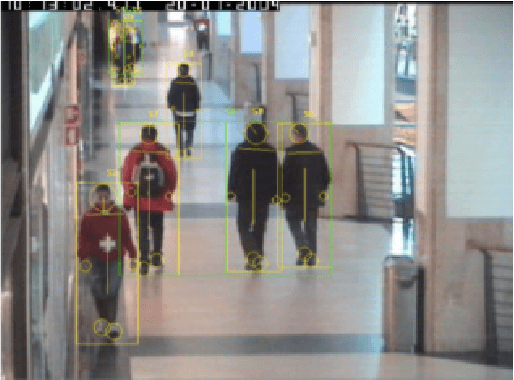

CNNs for Surveillance Footage Scene Classification

Sep 08, 2018

Abstract: In this project, we adapt high-performing CNN architectures to differentiate between scenes with and without abandoned luggage. Using frames from two video datasets, we compare the results of training different architectures on each dataset individually as well as on the combined datasets. We additionally use network visualization techniques to gain insight into what the neural network sees and the basis of its classification decision. We intend our results to benefit further work on applying CNNs to surveillance and security-related tasks.

Language Modeling with Generative Adversarial Networks

Apr 08, 2018

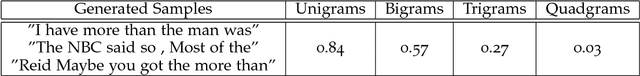

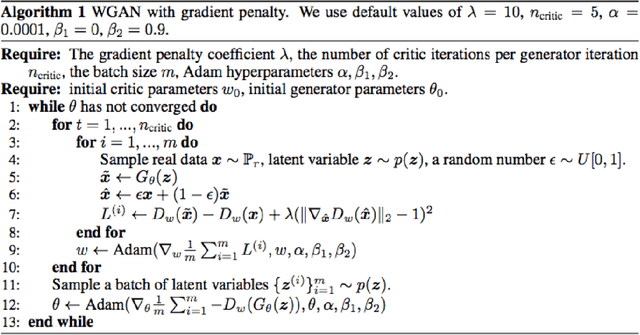

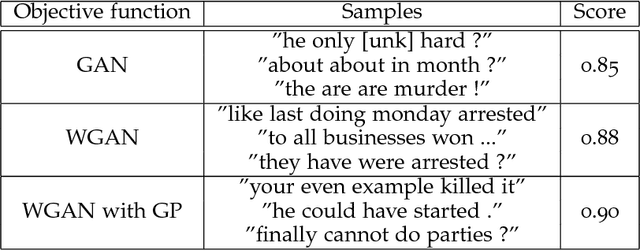

Abstract: Generative Adversarial Networks (GANs) have shown promise in the field of image generation; however, they have been hard to train for language generation. GANs were originally designed to output differentiable values, so discrete language generation is challenging for them and causes high levels of instability in training. Consequently, past work has resorted either to pre-training with maximum likelihood or to training GANs without pre-training, using a WGAN objective with a gradient penalty. In this study, we present a comparison of those approaches. Furthermore, we present the results of experiments that indicate better training and convergence of Wasserstein GANs (WGANs) when a weaker regularization term enforces the Lipschitz constraint.
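As an illustration of what a gradient penalty and a "weaker" regularizer might look like, the sketch below contrasts the standard two-sided WGAN gradient penalty with a one-sided variant that only penalizes gradient norms above 1. The abstract does not say which weaker term the study uses, so the one-sided form is an assumption. The toy `critic`, the finite-difference `grad_norm`, and the coefficient `lam=10.0` are also illustrative choices, not taken from the paper.

```python
import math


def critic(x, w):
    """Toy critic f(x) = tanh(w . x); a hypothetical stand-in for a network."""
    return math.tanh(sum(wi * xi for wi, xi in zip(w, x)))


def grad_norm(x, w, eps=1e-6):
    """Central finite-difference estimate of the norm of d critic / d x."""
    total = 0.0
    for i in range(len(x)):
        hi, lo = list(x), list(x)
        hi[i] += eps
        lo[i] -= eps
        total += ((critic(hi, w) - critic(lo, w)) / (2 * eps)) ** 2
    return math.sqrt(total)


def interpolate(real, fake, t):
    """Point on the line between a real and a generated sample, t in [0, 1]."""
    return [t * r + (1 - t) * f for r, f in zip(real, fake)]


def two_sided_penalty(norm, lam=10.0):
    """Standard gradient penalty: pushes the gradient norm towards exactly 1."""
    return lam * (norm - 1.0) ** 2


def one_sided_penalty(norm, lam=10.0):
    """Weaker variant (assumed): penalizes only gradient norms above 1."""
    return lam * max(0.0, norm - 1.0) ** 2


# Toy usage: evaluate both penalties at a point between a real and a fake sample.
real, fake = [1.0, 0.0], [-1.0, 0.0]
x_hat = interpolate(real, fake, 0.5)          # the midpoint, [0.0, 0.0]
norm_small = grad_norm(x_hat, [0.3, 0.4])     # roughly 0.5, below the target
norm_large = grad_norm(x_hat, [3.0, 4.0])     # roughly 5.0, above the target
```

With a gradient norm below 1, the two-sided term still adds a penalty while the one-sided term is zero. This is the sense in which the latter enforces the Lipschitz constraint more weakly. In a real training loop the gradient would come from automatic differentiation rather than finite differences.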
