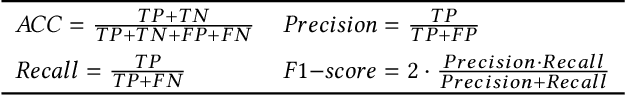

Ulrike Meyer

Towards Robust Domain Generation Algorithm Classification

Apr 09, 2024

Abstract:In this work, we conduct a comprehensive study on the robustness of domain generation algorithm (DGA) classifiers. We implement 32 white-box attacks, 19 of which are very effective and induce a false-negative rate (FNR) of approximately 100% on unhardened classifiers. To defend the classifiers, we evaluate different hardening approaches and propose a novel training scheme that leverages adversarial latent space vectors and discretized adversarial domains to significantly improve robustness. In our study, we highlight a pitfall to avoid when hardening classifiers and uncover training biases that can be easily exploited by attackers to bypass detection, but which can be mitigated by adversarial training (AT). We do not observe any trade-off between robustness and performance; on the contrary, hardening improves a classifier's detection performance for known and unknown DGAs. We implement all attacks and defenses discussed in this paper as a standalone library, which we make publicly available to facilitate hardening of DGA classifiers: https://gitlab.com/rwth-itsec/robust-dga-detection

* Accepted at ACM Asia Conference on Computer and Communications Security (ASIA CCS 2024)

False Sense of Security: Leveraging XAI to Analyze the Reasoning and True Performance of Context-less DGA Classifiers

Jul 10, 2023

Abstract:The problem of revealing botnet activity through Domain Generation Algorithm (DGA) detection seems to be solved, considering that available deep learning classifiers achieve accuracies of over 99.9%. However, these classifiers provide a false sense of security as they are heavily biased and allow for trivial detection bypass. In this work, we leverage explainable artificial intelligence (XAI) methods to analyze the reasoning of deep learning classifiers and to systematically reveal such biases. We show that eliminating these biases from DGA classifiers considerably deteriorates their performance. Nevertheless, we are able to design a context-aware detection system that is free of the identified biases and maintains the detection rate of state-of-the-art deep learning classifiers. In this context, we propose a visual analysis system that helps to better understand a classifier's reasoning, thereby increasing trust in and transparency of detection methods and facilitating decision-making.

Detecting Unknown DGAs without Context Information

May 30, 2022

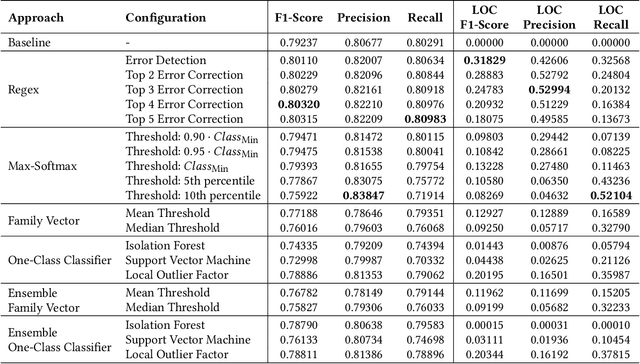

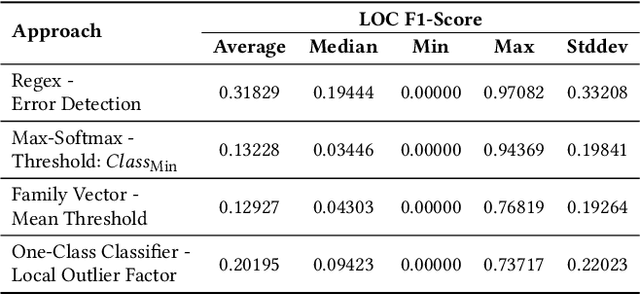

Abstract:New malware emerges at a rapid pace and often incorporates Domain Generation Algorithms (DGAs) to avoid blocking the malware's connection to the command and control (C2) server. Current state-of-the-art classifiers are able to separate benign from malicious domains (binary classification) and attribute them with high probability to the DGAs that generated them (multiclass classification). While binary classifiers can label domains of yet unknown DGAs as malicious, multiclass classifiers can only assign domains to DGAs that are known at the time of training, limiting the ability to uncover new malware families. In this work, we perform a comprehensive study on the detection of new DGAs, which includes an evaluation of 59,690 classifiers. We examine four different approaches in 15 different configurations and propose a simple yet effective approach based on the combination of a softmax classifier and regular expressions (regexes) to detect multiple unknown DGAs with high probability. At the same time, our approach retains state-of-the-art classification performance for known DGAs. Our evaluation is based on a leave-one-group-out cross-validation with a total of 94 DGA families. By using the maximum number of known DGAs, our evaluation scenario is particularly difficult and close to the real world. All of the approaches examined are privacy-preserving, since they operate without context and exclusively on a single domain to be classified. We round off our study with a thorough discussion of class-incremental learning strategies that can adapt an existing classifier to newly discovered classes.

Sharing FANCI Features: A Privacy Analysis of Feature Extraction for DGA Detection

Oct 12, 2021

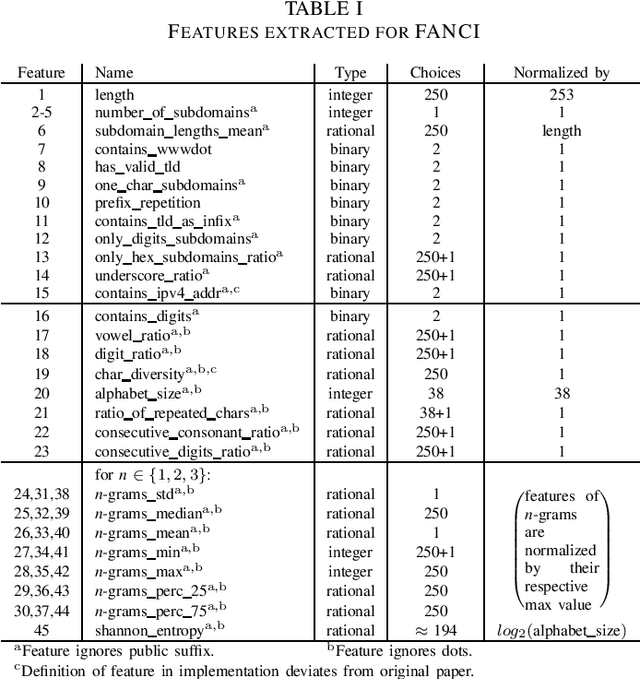

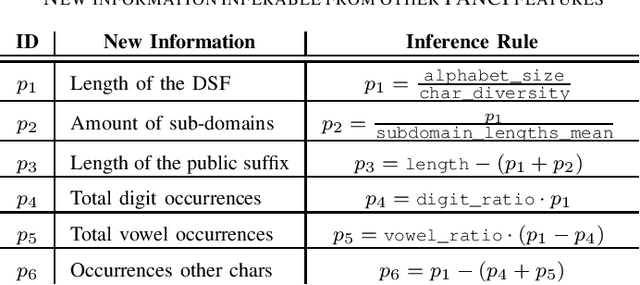

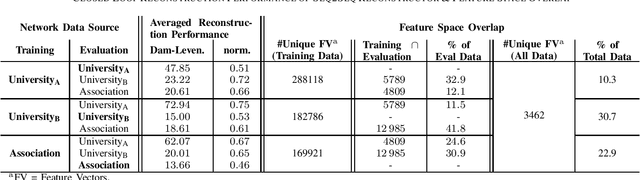

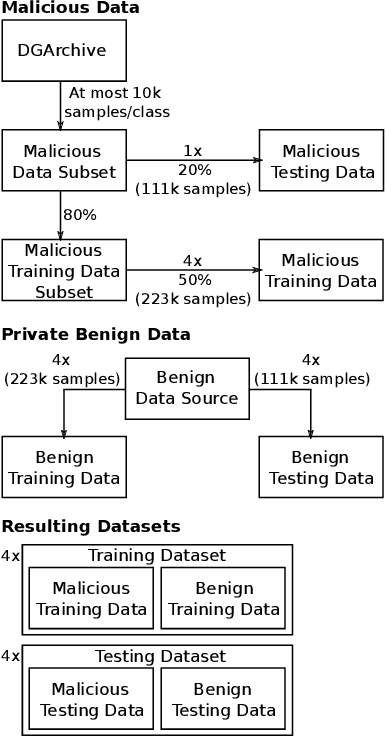

Abstract:The goal of Domain Generation Algorithm (DGA) detection is to recognize infections with bot malware. It is often done with the help of Machine Learning approaches that classify non-resolving Domain Name System (DNS) traffic and are trained on possibly sensitive data. In parallel, the rise of privacy research in the Machine Learning world leads to privacy-preserving measures that are tightly coupled with a deep learning model's architecture or training routine, while non-deep-learning approaches are commonly better suited for the application of privacy-enhancing methods outside the actual classification module. In this work, we aim to measure the privacy capability of the feature extractor of the feature-based DGA detector FANCI (Feature-based Automated Nxdomain Classification and Intelligence). Our goal is to assess whether a data-rich adversary can learn an inverse mapping of FANCI's feature extractor and thereby reconstruct domain names from feature vectors. Attack success would pose a privacy threat to sharing FANCI's feature representation, while the opposite would enable this representation to be shared without privacy concerns. Using three real-world data sets, we train a recurrent Machine Learning model on the reconstruction task. Our approaches result in poor reconstruction performance and we attempt to back our findings with a mathematical review of the feature extraction process. We thus reckon that sharing FANCI's feature representation does not constitute a considerable privacy leakage.

The More, the Better? A Study on Collaborative Machine Learning for DGA Detection

Sep 24, 2021

Abstract:Domain generation algorithms (DGAs) prevent the connection between a botnet and its master from being blocked by generating a large number of domain names. Promising single-data-source approaches have been proposed for separating benign from DGA-generated domains. Collaborative machine learning (ML) can be used to enhance a classifier's detection rate, reduce its false positive rate (FPR), and improve the classifier's generalization capability to different networks. In this paper, we complement the research area of DGA detection by conducting a comprehensive collaborative learning study, including a total of 13,440 evaluation runs. In two real-world scenarios we evaluate a total of eleven different variations of collaborative learning using three different state-of-the-art classifiers. We show that collaborative ML can lead to a reduction in FPR by up to 51.7%. However, while collaborative ML is beneficial for DGA detection, not all approaches and classifier types profit equally. We round off our comprehensive study with a thorough discussion of the privacy threats posed by the different collaborative ML approaches.

Finding Phish in a Haystack: A Pipeline for Phishing Classification on Certificate Transparency Logs

Jun 23, 2021

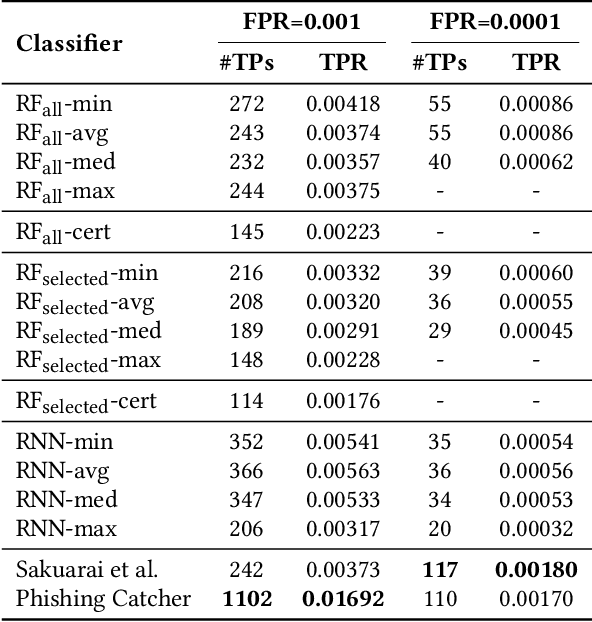

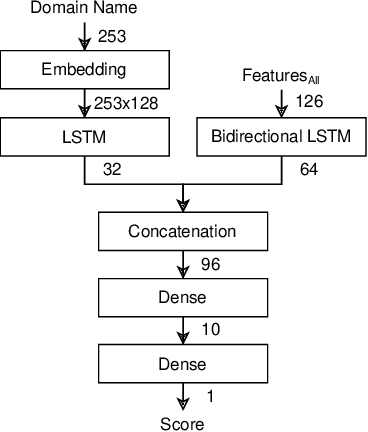

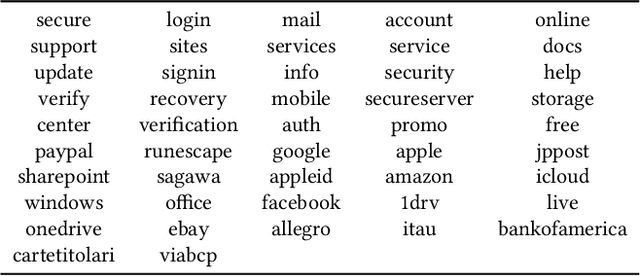

Abstract:Current popular phishing prevention techniques mainly utilize reactive blocklists, which leave a "window of opportunity" for attackers during which victims are unprotected. One possible approach to shorten this window aims to detect phishing attacks earlier, during website preparation, by monitoring Certificate Transparency (CT) logs. Previous attempts to work with CT log data for phishing classification exist; however, they lack evaluations on actual CT log data. In this paper, we present a pipeline that facilitates such evaluations by addressing a number of problems when working with CT log data. The pipeline includes dataset creation, training, and past or live classification of CT logs. Its modular structure makes it possible to easily exchange classifiers or verification sources to support ground truth labeling efforts and classifier comparisons. We test the pipeline on a number of new and existing classifiers, and find a general potential to improve classifiers for this scenario in the future. We publish the source code of the pipeline and the used datasets along with this paper (https://gitlab.com/rwth-itsec/ctl-pipeline), thus making future research in this direction more accessible.

First Step Towards EXPLAINable DGA Multiclass Classification

Jun 23, 2021

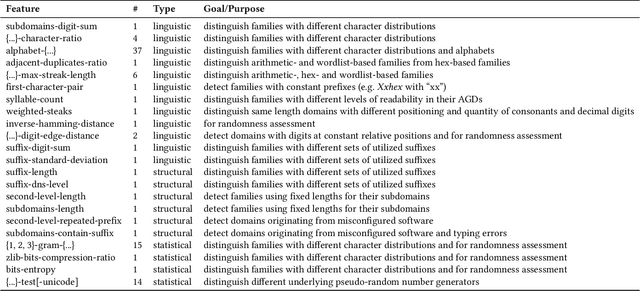

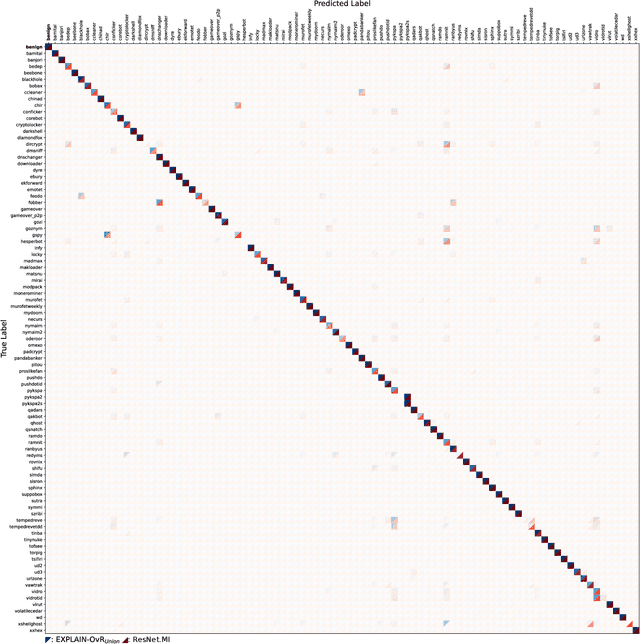

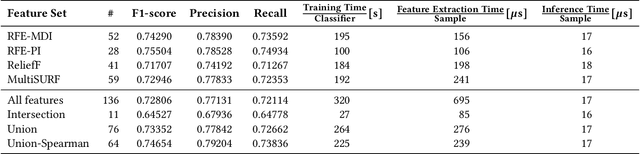

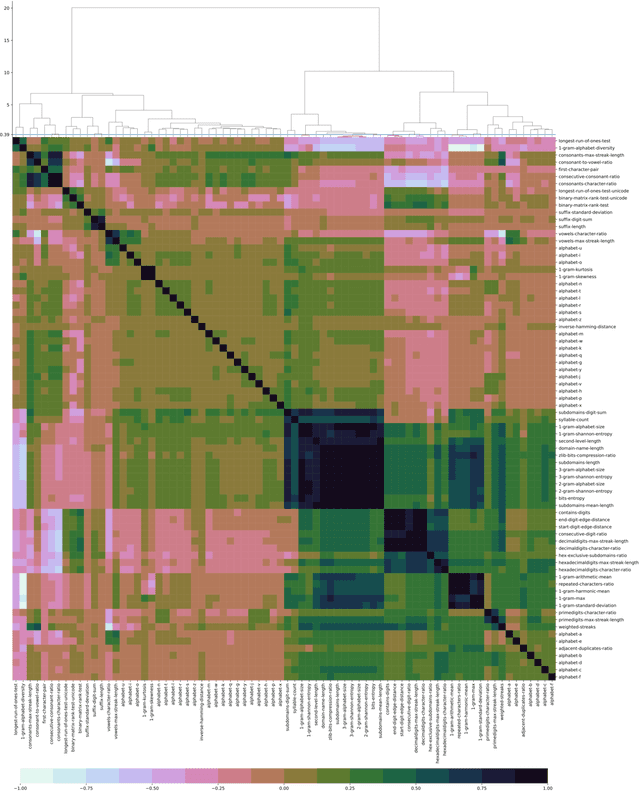

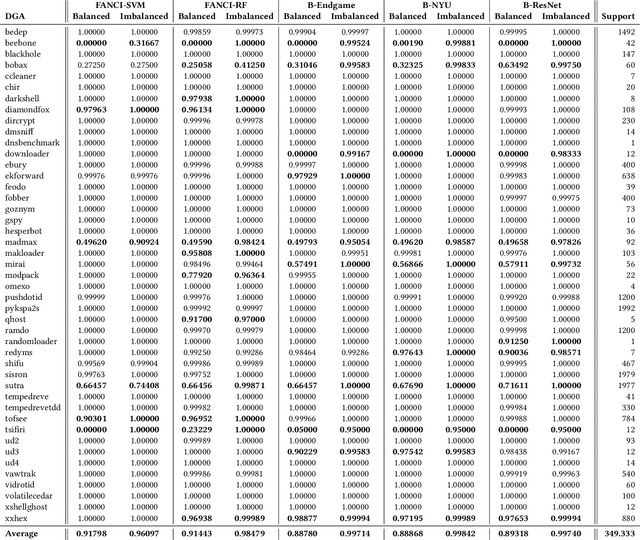

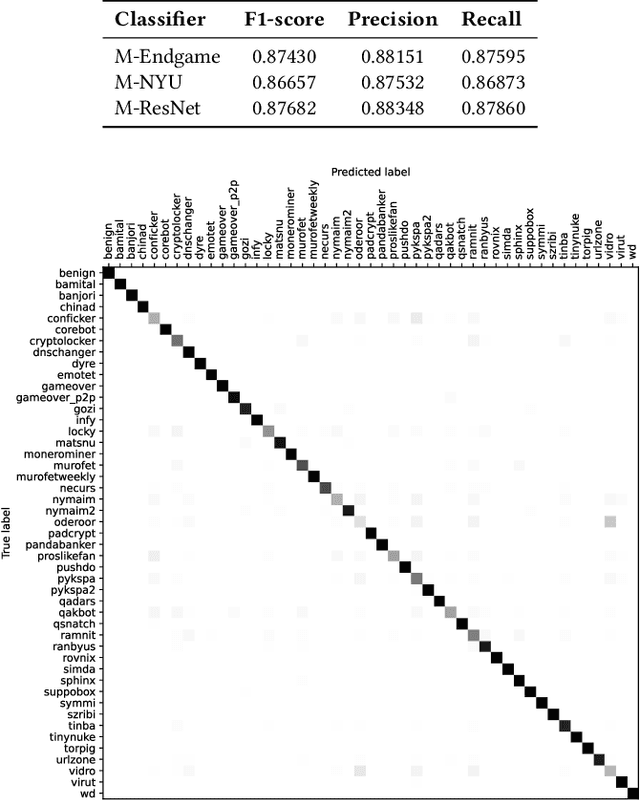

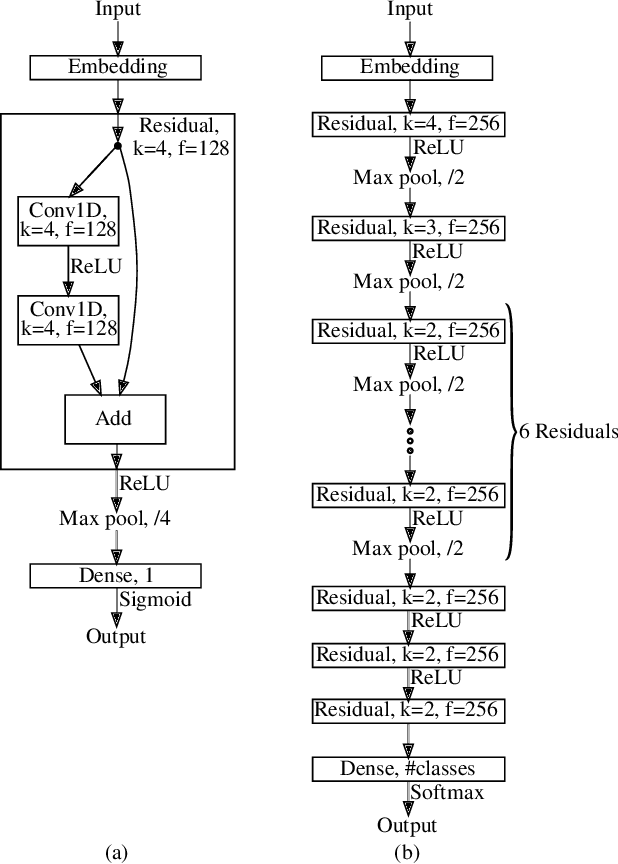

Abstract:Numerous malware families rely on domain generation algorithms (DGAs) to establish a connection to their command and control (C2) server. Counteracting DGAs, several machine learning classifiers have been proposed enabling the identification of the DGA that generated a specific domain name and thus triggering targeted remediation measures. However, the proposed state-of-the-art classifiers are based on deep learning models. The black box nature of these makes it difficult to evaluate their reasoning. The resulting lack of confidence makes the utilization of such models impracticable. In this paper, we propose EXPLAIN, a feature-based and contextless DGA multiclass classifier. We comparatively evaluate several combinations of feature sets and hyperparameters for our approach against several state-of-the-art classifiers in a unified setting on the same real-world data. Our classifier achieves competitive results, is real-time capable, and its predictions are easier to trace back to features than the predictions made by the DGA multiclass classifiers proposed in related work.

Making Use of NXt to Nothing: The Effect of Class Imbalances on DGA Detection Classifiers

Jul 01, 2020

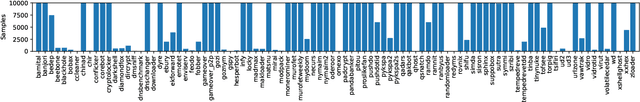

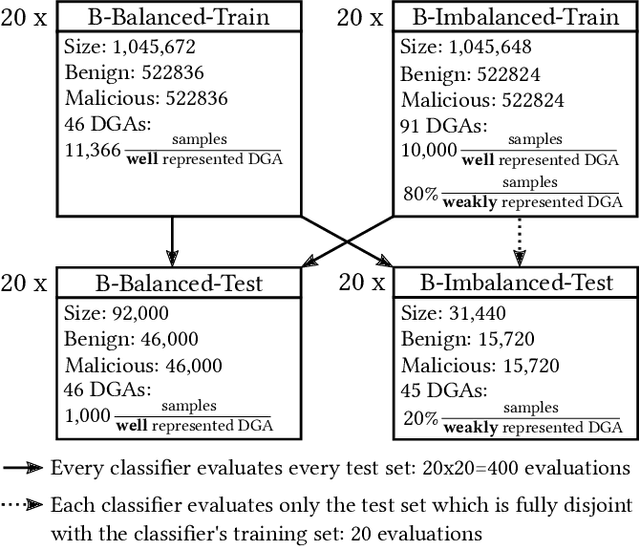

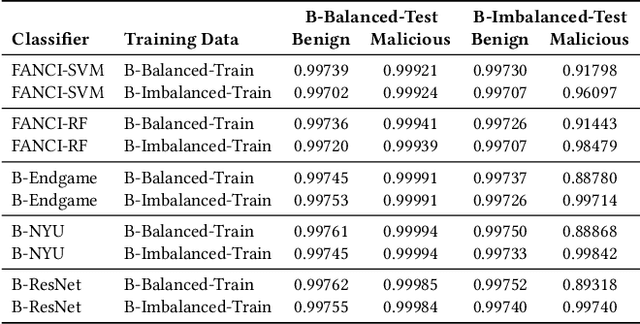

Abstract:Numerous machine learning classifiers have been proposed for binary classification of domain names as either benign or malicious, and even for multiclass classification to identify the domain generation algorithm (DGA) that generated a specific domain name. Both classification tasks have to deal with the class imbalance problem of strongly varying amounts of training samples per DGA. Currently, it is unclear whether the inclusion of DGAs for which only a few samples are known to the training sets is beneficial or harmful to the overall performance of the classifiers. In this paper, we perform a comprehensive analysis of various contextless DGA classifiers, which reveals the high value of a few training samples per class for both classification tasks. We demonstrate that the classifiers are able to detect various DGAs with high probability by including the underrepresented classes which were previously hardly recognizable. Simultaneously, we show that the classifiers' detection capabilities of well represented classes do not decrease.

* Accepted at The 15th International Conference on Availability, Reliability and Security (ARES 2020)

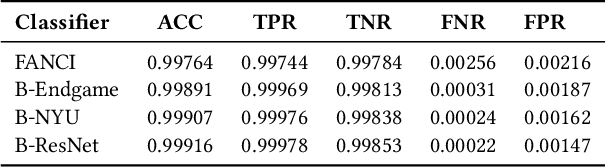

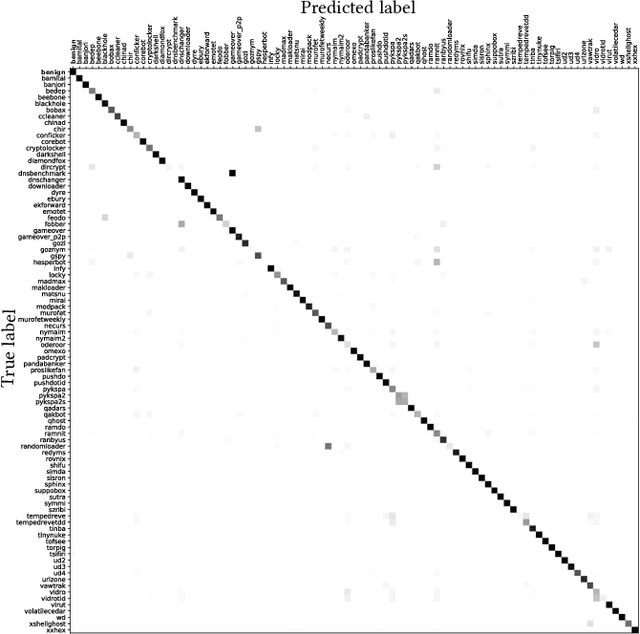

Analyzing the Real-World Applicability of DGA Classifiers

Jun 19, 2020

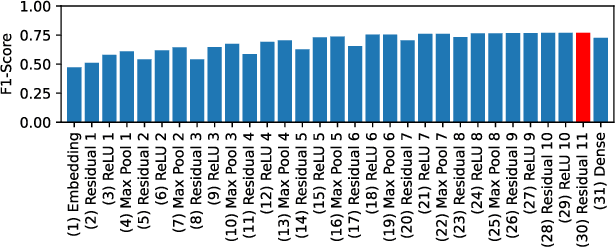

Abstract:Separating benign domains from domains generated by DGAs with the help of a binary classifier is a well-studied problem for which promising performance results have been published. The corresponding multiclass task of determining the exact DGA that generated a domain, enabling targeted remediation measures, is less well studied. Selecting the most promising classifier for these tasks in practice raises a number of questions that have not been addressed in prior work so far. These include the questions of which traffic to train on, in which network, and when, as well as how to assess robustness against adversarial attacks. Moreover, it is unclear which features lead a classifier to a decision and whether the classifiers are real-time capable. In this paper, we address these issues and thus contribute to bringing DGA detection classifiers closer to practical use. In this context, we propose one novel classifier based on residual neural networks for each of the two tasks and extensively evaluate them as well as previously proposed classifiers in a unified setting. We not only evaluate their classification performance but also compare them with respect to explainability, robustness, and training and classification speed. Finally, we show that our newly proposed binary classifier generalizes well to other networks, is time-robust, and able to identify previously unknown DGAs.

* Accepted at The 15th International Conference on Availability, Reliability and Security (ARES 2020)
