Tina Woolf

Simple Classification using Binary Data

Jul 06, 2017

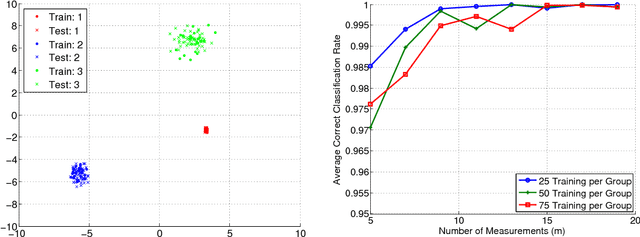

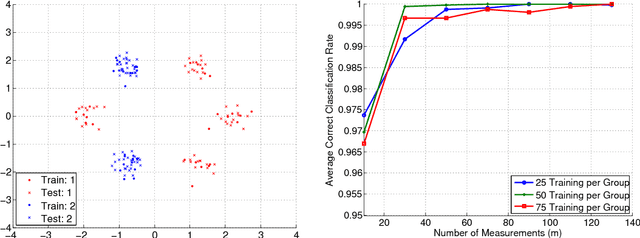

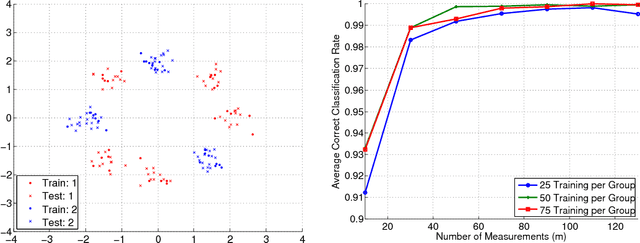

Abstract: Binary, or one-bit, representations of data arise naturally in many applications, and are appealing in both hardware implementations and algorithm design. In this work, we study the problem of data classification from binary data and propose a framework with low computation and resource costs. We illustrate the utility of the proposed approach through stylized and realistic numerical experiments, and provide a theoretical analysis for a simple case. We hope that our framework and analysis will serve as a foundation for studying similar types of approaches.

An Asynchronous Parallel Approach to Sparse Recovery

Jan 12, 2017

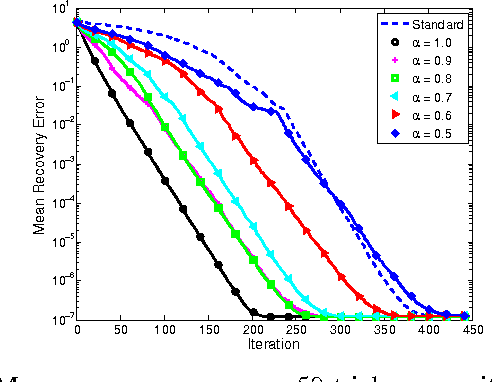

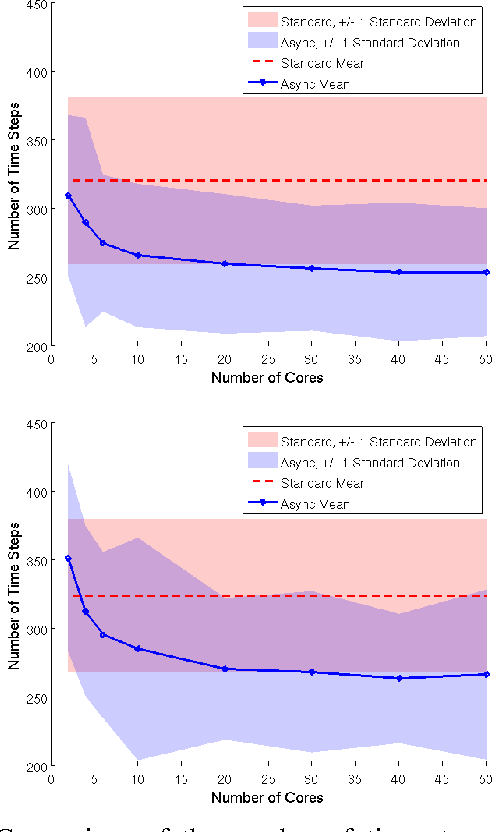

Abstract: Asynchronous parallel computing and sparse recovery are two areas that have received recent interest. Asynchronous algorithms are often studied to solve optimization problems where the cost function takes the form $\sum_{i=1}^M f_i(x)$, with a common assumption that each $f_i$ is sparse; that is, each $f_i$ acts only on a small number of components of $x\in\mathbb{R}^n$. Sparse recovery problems, such as compressed sensing, can also be formulated as optimization problems; however, the cost functions $f_i$ are dense with respect to the components of $x$, and instead the signal $x$ is assumed to be sparse, meaning that it has only $s$ non-zeros, where $s\ll n$. Here we address how one may use an asynchronous parallel architecture when the cost functions $f_i$ are not sparse in $x$, but rather the signal $x$ is sparse. We propose an asynchronous parallel approach to sparse recovery via a stochastic greedy algorithm, where multiple processors asynchronously update a vector in shared memory containing information on the estimated signal support. We include numerical simulations that illustrate the potential benefits of our proposed asynchronous method.
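
To illustrate the contrast between sparse cost functions and a sparse signal, here is one standard way a compressed sensing problem can be written in the form $\sum_{i=1}^M f_i(x)$. This is only a sketch under assumptions not stated in the abstract: the measurement matrix $A\in\mathbb{R}^{M\times n}$ with rows $a_i^\top$, the measurements $y = Ax$, and the least-squares choice of $f_i$ are all illustrative.

$$
\min_{x\in\mathbb{R}^n} \; \sum_{i=1}^M f_i(x)
\quad \text{subject to} \quad \|x\|_0 \le s,
\qquad
f_i(x) = \tfrac{1}{2}\left(y_i - a_i^\top x\right)^2 .
$$

Under these assumptions, when the rows $a_i$ have few or no zero entries, each $f_i$ depends on nearly every component of $x$, so the $f_i$ are dense; the sparsity enters only through the constraint $\|x\|_0 \le s$ on the signal.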
