Thomas Truong

Hybrid Score- and Rank-level Fusion for Person Identification using Face and ECG Data

Aug 07, 2020

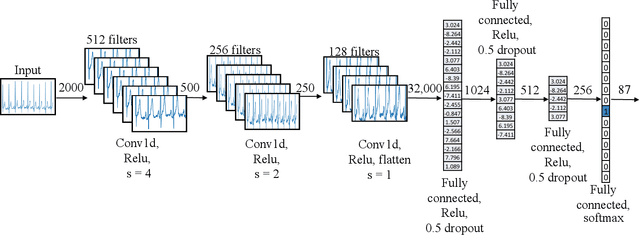

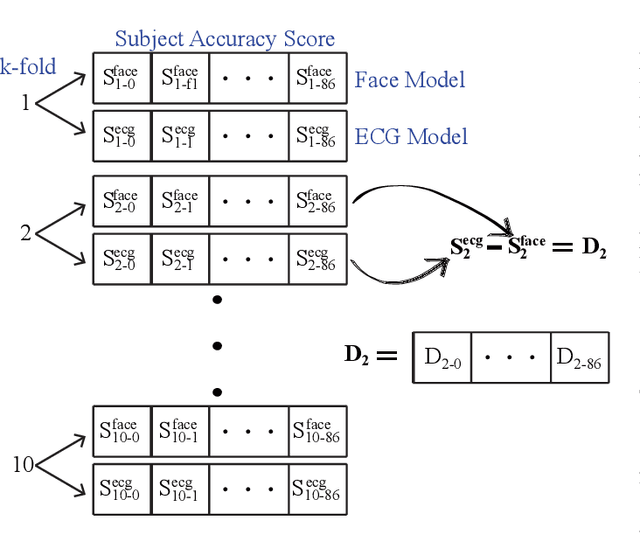

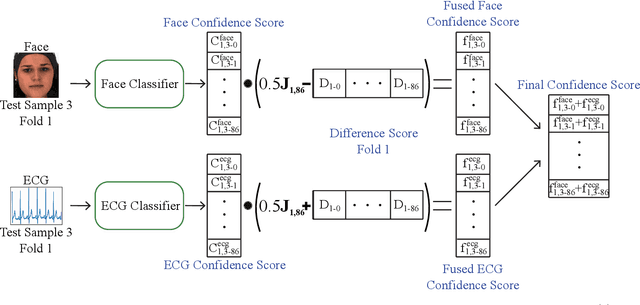

Abstract: Uni-modal identification systems are vulnerable to errors in sensor data collection and are therefore more likely to misidentify subjects. For instance, relying solely on data from an RGB face camera can cause problems in poorly lit environments or when subjects do not face the camera. Other identification methods, such as electrocardiograms (ECG), have issues with improper lead connections to the skin. Errors in identification are minimized through the fusion of information gathered from both of these models. This paper proposes a methodology for combining the identification results of face and ECG data using Part A of the BioVid Heat Pain Database, which contains synchronized RGB video and ECG data on 87 subjects. Using 10-fold cross-validation, face identification was 98.8% accurate, while ECG identification was 96.1% accurate. With the fusion approach, identification accuracy improved to 99.8%. Our proposed methodology allows identification accuracies to be significantly improved by using disparate face and ECG models that have non-overlapping modalities.
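As a rough illustration of what score-level and rank-level fusion mean in general, the sketch below combines per-subject match scores from two modalities using a weighted sum rule and a Borda count. These are generic textbook techniques, not necessarily the hybrid scheme used in the paper; the function names, the equal weighting, and the example scores are all assumptions made for illustration.

```python
from typing import Dict

Scores = Dict[str, float]  # subject ID -> match score (higher is better)


def min_max_normalize(scores: Scores) -> Scores:
    """Rescale scores to [0, 1] so the two modalities are comparable."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {subject: 0.0 for subject in scores}
    return {subject: (s - lo) / (hi - lo) for subject, s in scores.items()}


def score_level_fusion(face: Scores, ecg: Scores, w_face: float = 0.5) -> Scores:
    """Weighted sum rule over normalized scores (w_face is an assumed weight)."""
    face_n, ecg_n = min_max_normalize(face), min_max_normalize(ecg)
    return {s: w_face * face_n[s] + (1.0 - w_face) * ecg_n[s] for s in face}


def borda_rank_fusion(face: Scores, ecg: Scores) -> Scores:
    """Borda count: each modality gives 0 points to its worst-ranked
    subject and n-1 points to its best-ranked subject."""
    def points(scores: Scores) -> Dict[str, int]:
        ascending = sorted(scores, key=scores.get)  # worst subject first
        return {subject: i for i, subject in enumerate(ascending)}

    face_pts, ecg_pts = points(face), points(ecg)
    return {s: float(face_pts[s] + ecg_pts[s]) for s in face}


def identify(fused: Scores) -> str:
    """Return the subject with the highest fused score."""
    return max(fused, key=fused.get)


# Hypothetical scores: the face model is unsure (e.g. poor lighting),
# while the ECG model clearly favors subject "s02".
face_scores = {"s01": 0.40, "s02": 0.35, "s03": 0.25}
ecg_scores = {"s01": 0.05, "s02": 0.80, "s03": 0.15}

print(identify(score_level_fusion(face_scores, ecg_scores)))
print(identify(borda_rank_fusion(face_scores, ecg_scores)))
```

In this made-up example the face model alone would pick `s01`, but both fusion rules select `s02` because the ECG evidence outweighs the narrow face margin.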

Generative Adversarial Network for Radar Signal Generation

Aug 07, 2020

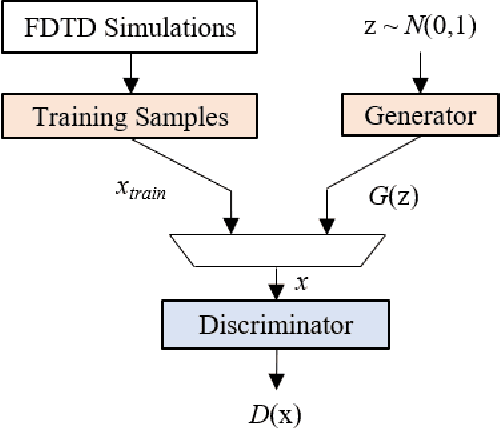

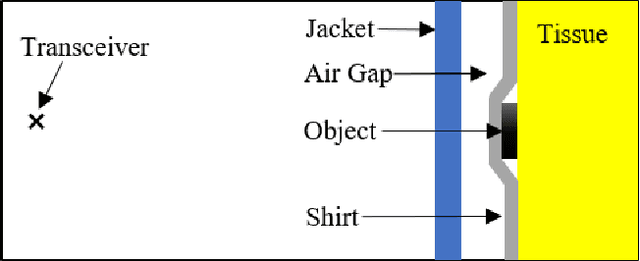

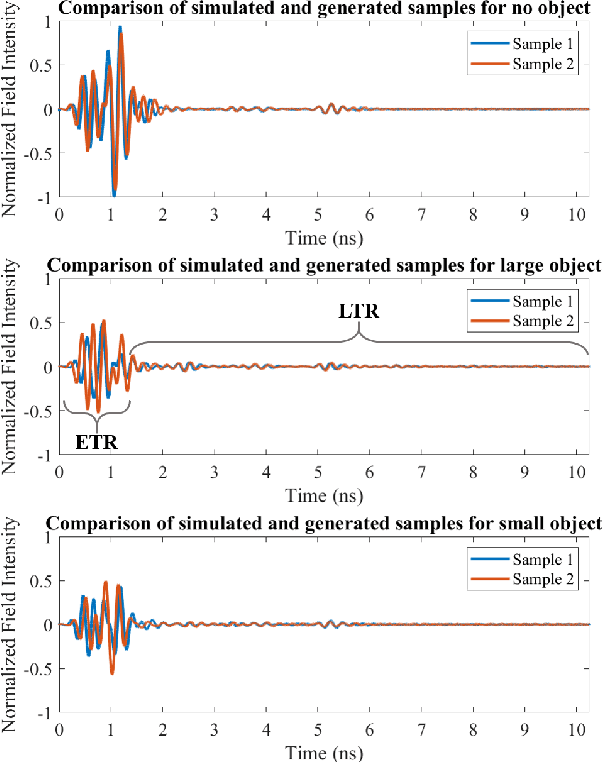

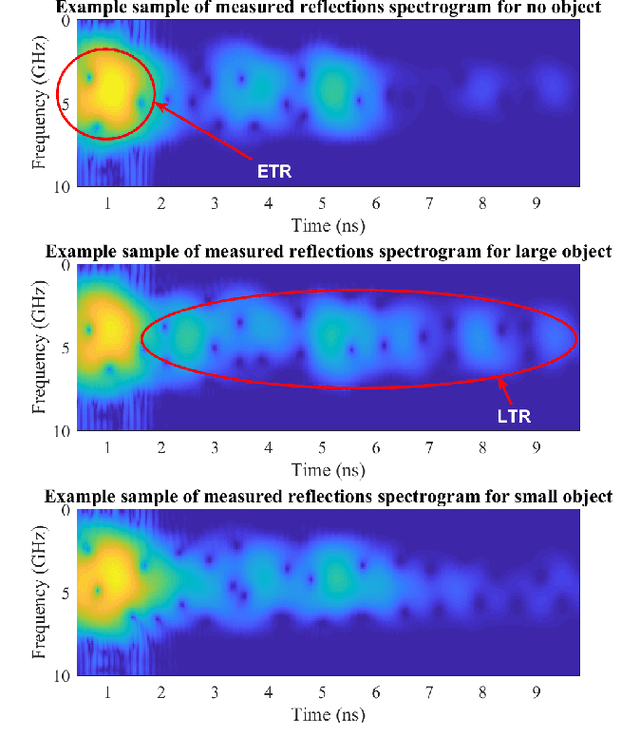

Abstract: A major obstacle to radar-based methods for concealed object detection on humans, and to their seamless integration into security and access control systems, is the difficulty of collecting high-quality radar signal data. Generative adversarial networks (GANs) have shown promise in data generation applications in the fields of image and audio processing. As such, this paper proposes the design of a GAN for radar signal generation. Data collected using the Finite-Difference Time-Domain (FDTD) method on three concealed object classes (no object, large object, and small object) were used to train a GAN to generate radar signal samples for each class. The proposed GAN generated radar signal data that human observers could not qualitatively distinguish from the training data.

Relatable Clothing: Detecting Visual Relationships between People and Clothing

Jul 20, 2020

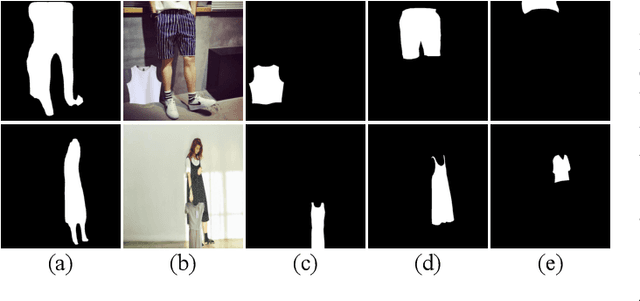

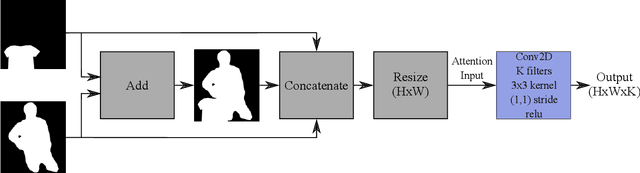

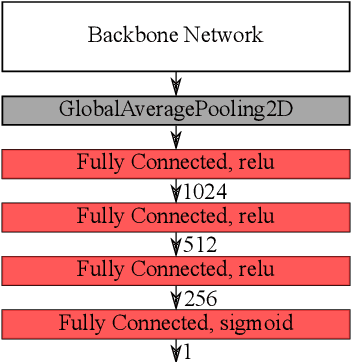

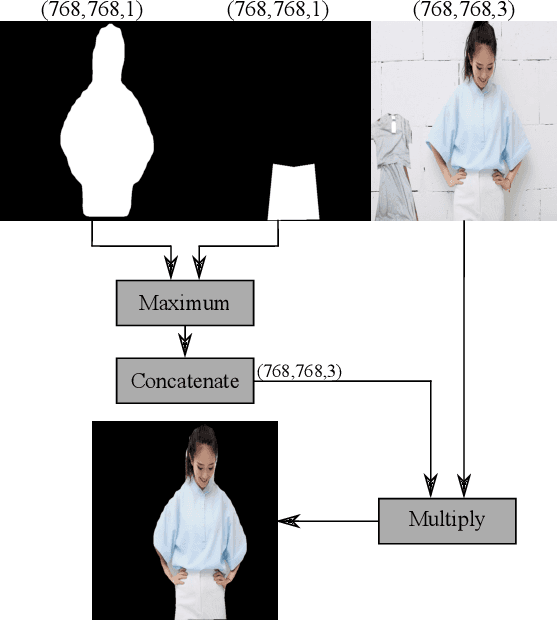

Abstract: Detecting visual relationships between people and clothing in an image has been a relatively unexplored problem in the fields of computer vision and biometrics. The lack of a readily available public dataset for "worn" and "unworn" classification has slowed the development of solutions to this problem. We present the release of the Relatable Clothing Dataset, which contains 35287 person-clothing pairs and segmentation masks for the development of "worn" and "unworn" classification models. Additionally, we propose a novel soft attention unit for performing "worn" and "unworn" classification using deep neural networks. The proposed soft attention models have an accuracy of upward of $98.55\% \pm 0.35\%$ on the Relatable Clothing Dataset and demonstrate high generalizability, allowing us to classify unseen articles of clothing such as high visibility vests as "worn" or "unworn".
