Thomas E. Portegys

Learning causation event conjunction sequences

Feb 17, 2024

Abstract: This is an examination of some methods that learn causations in event sequences. A causation is defined as a conjunction of one or more cause events occurring in an arbitrary order, with possible intervening non-causal events, that lead to an effect. The methods include recurrent and non-recurrent artificial neural networks (ANNs), as well as a histogram-based algorithm. An attention recurrent ANN performed the best of the ANNs, while the histogram algorithm was significantly superior to all the ANNs.
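The definition of a causation in the abstract above can be illustrated with a minimal sketch. This is not the paper's histogram algorithm or any of its networks; it only encodes the stated definition, assuming events are hashable labels and each causation is a set of cause events tied to one effect. The name `effects_triggered` and the example event labels are hypothetical.

```python
from typing import Dict, FrozenSet, Hashable, Iterable, Set


def effects_triggered(
    events: Iterable[Hashable],
    causations: Dict[Hashable, FrozenSet[Hashable]],
) -> Set[Hashable]:
    """Return the effects whose cause events all occur in `events`.

    Order of the cause events is ignored, and unrelated (non-causal)
    events in between do not matter, matching the definition above.
    """
    seen = set(events)
    return {effect for effect, causes in causations.items() if causes <= seen}


# Hypothetical example: E1 needs {a, b}; E2 needs {c, d, e}.
causations = {
    "E1": frozenset({"a", "b"}),
    "E2": frozenset({"c", "d", "e"}),
}
# "b" precedes "a", and "x", "y" are intervening non-causal events.
triggered = effects_triggered(["x", "b", "y", "a", "c"], causations)
```

Here only `E1` is triggered, since `d` and `e` never occur. A learner, by contrast, has to infer the cause sets from labeled sequences rather than being given them.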

A modularity comparison of Long Short-Term Memory and Morphognosis neural networks

Apr 23, 2021

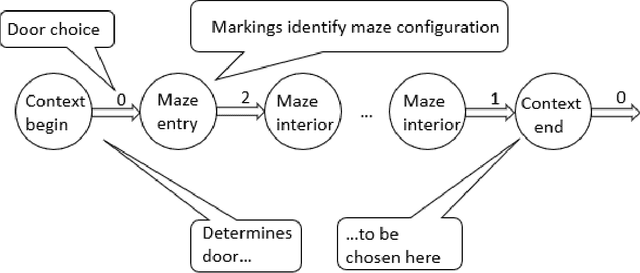

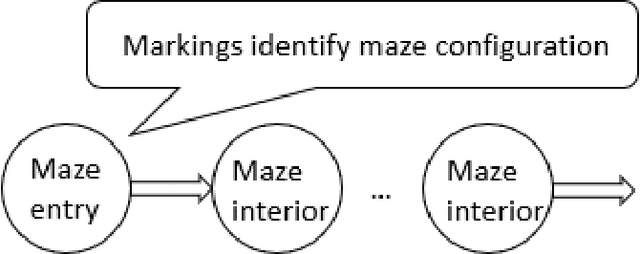

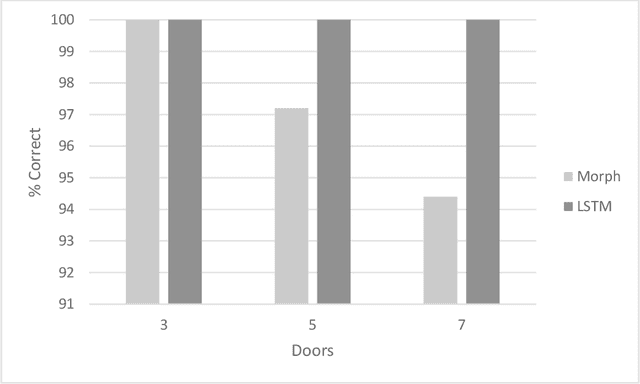

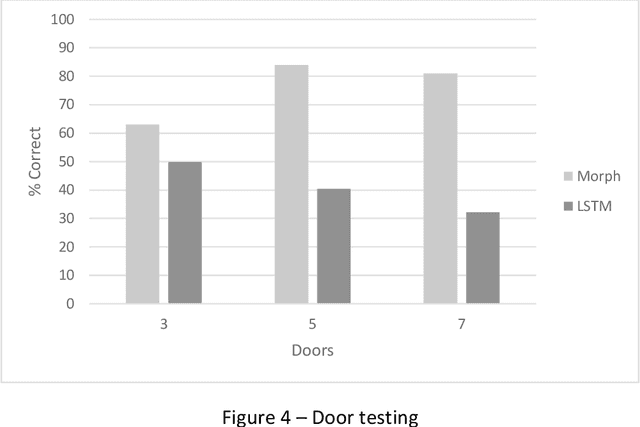

Abstract: This study compares the modularity performance of two artificial neural network architectures: a Long Short-Term Memory (LSTM) recurrent network, and Morphognosis, a neural network based on a hierarchy of spatial and temporal contexts. Mazes are used to measure performance, defined as the ability to utilize independently learned mazes to solve mazes composed of them. A maze is a sequence of rooms connected by doors. The modular task is implemented as follows: at the beginning of the maze, an initial door choice forms a context that must be retained until the end of an intervening maze, where the same door must be chosen again to reach the goal. For testing, the door-association mazes and separately trained intervening mazes are presented together for the first time. While both neural networks perform well during training, the testing performance of Morphognosis is significantly better than LSTM on this modular task.
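The modular task described above can be sketched as a simple success check. This is an illustrative model only, assuming doors are integer indices and an intervening maze is a fixed list of correct doors; the function name `reaches_goal` and this encoding are hypothetical and not taken from the paper.

```python
from typing import Sequence


def reaches_goal(choices: Sequence[int], intervening_doors: Sequence[int]) -> bool:
    """Check one run through a composed maze.

    `choices` holds the agent's door choices: the initial (context) door,
    one door per room of the intervening maze, then the final door.
    The goal is reached only if the intervening maze is solved and the
    final door repeats the initial one.
    """
    if len(choices) != len(intervening_doors) + 2:
        return False
    first, *middle, last = choices
    return middle == list(intervening_doors) and last == first


intervening = [0, 1, 0]                              # separately learned maze
ok = reaches_goal([2, 0, 1, 0, 2], intervening)      # context door 2 retained
forgot = reaches_goal([2, 0, 1, 0, 1], intervening)  # wrong final door
```

The sketch makes the memory demand explicit: the final choice depends on the first one, regardless of how long the intervening maze is.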

Morphognosis: the shape of knowledge in space and time

Mar 04, 2017

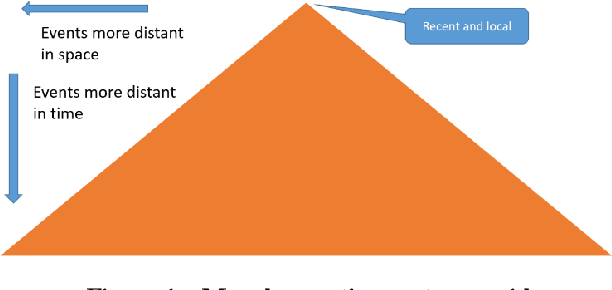

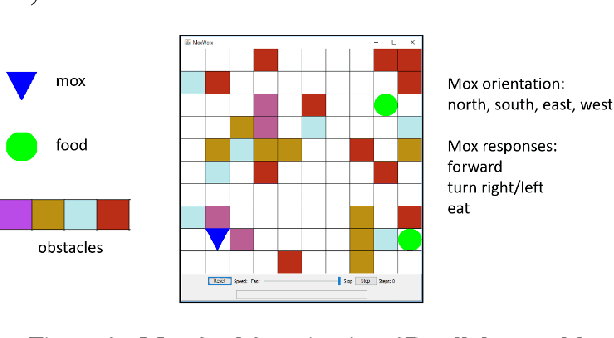

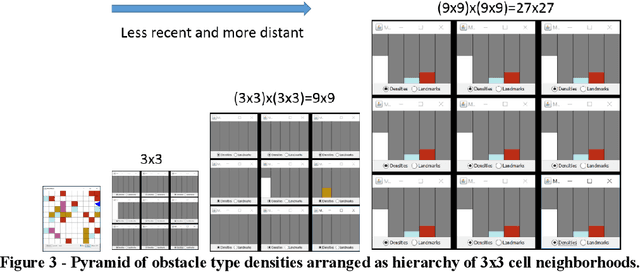

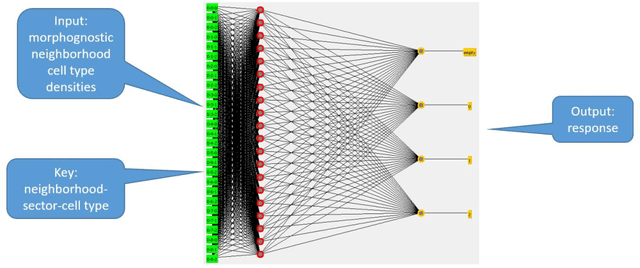

Abstract: Artificial intelligence research to a great degree focuses on the brain and behaviors that the brain generates. But the brain, an extremely complex structure resulting from millions of years of evolution, can be viewed as a solution to problems posed by an environment existing in space and time. The environment generates signals that produce sensory events within an organism. Building an internal spatial and temporal model of the environment allows an organism to navigate and manipulate the environment. Higher intelligence might be the ability to process information coming from a larger extent of space-time. In keeping with nature's penchant for extending rather than replacing, the purpose of the mammalian neocortex might then be to record events from distant reaches of space and time and render them, as though yet near and present, to the older, deeper brain whose instinctual roles have changed little over eons. Here this notion is embodied in a model called morphognosis (morpho = shape and gnosis = knowledge). Its basic structure is a pyramid of event recordings called a morphognostic. At the apex of the pyramid are the most recent and nearby events. Receding from the apex are less recent and possibly more distant events. A morphognostic can thus be viewed as a structure of progressively larger chunks of space-time knowledge. A set of morphognostics forms long-term memories that are learned by exposure to the environment. A cellular automaton is used as the platform to investigate the morphognosis model, using a simulated organism that learns to forage in its world for food, build a nest, and play the game of Pong.

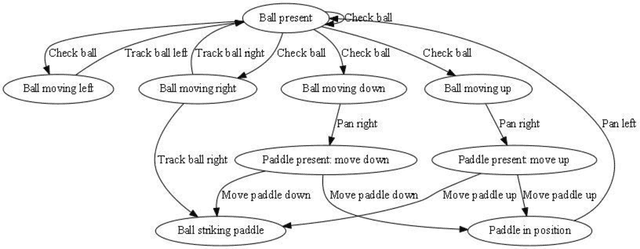

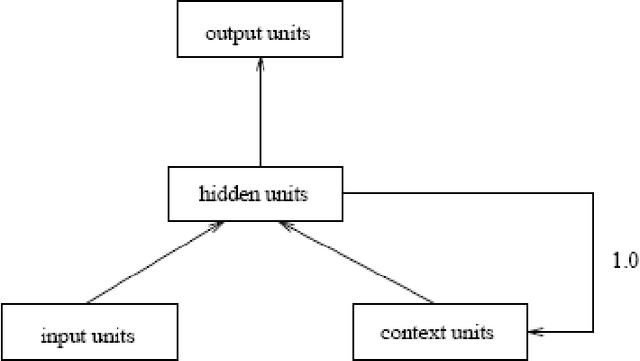

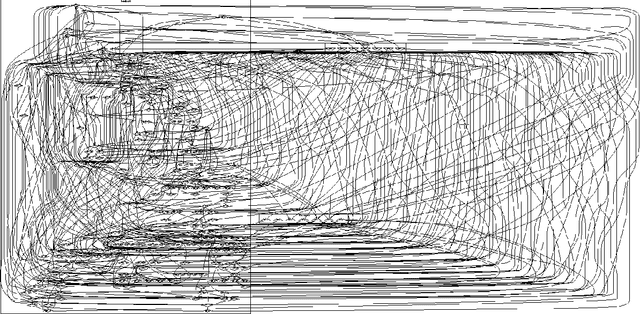

Training artificial neural networks to learn a nondeterministic game

Jul 14, 2015

Abstract: It is well known that artificial neural networks (ANNs) can learn deterministic automata. Learning nondeterministic automata is another matter. This is important because much of the world is nondeterministic, taking the form of unpredictable or probabilistic events that must be acted upon. If ANNs are to engage such phenomena, then they must be able to learn how to deal with nondeterminism. In this project the game of Pong poses a nondeterministic environment. The learner is given an incomplete view of the game state and underlying deterministic physics, resulting in a nondeterministic game. Three models were trained and tested on the game: Mona, Elman, and Numenta's NuPIC.
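How an incomplete view turns deterministic physics into an apparently nondeterministic process can be shown with a toy example. This is a minimal sketch, not the Pong setup used in the paper: it assumes a ball bouncing along one axis whose position is observable while its velocity is hidden. The names `step` and `observed_transitions` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Set, Tuple


def step(pos: int, vel: int, width: int = 5) -> Tuple[int, int]:
    """Deterministic 1-D bounce: move one cell, reversing at the walls."""
    nxt = pos + vel
    if nxt < 0 or nxt >= width:
        vel = -vel
        nxt = pos + vel
    return nxt, vel


def observed_transitions(steps: int = 20, width: int = 5) -> Dict[int, Set[int]]:
    """Record, per observed position, every next position that followed it.

    The observer sees only the position, never the velocity.
    """
    pos, vel = 0, 1
    seen: Dict[int, Set[int]] = defaultdict(set)
    for _ in range(steps):
        nxt, vel = step(pos, vel, width)
        seen[pos].add(nxt)
        pos = nxt
    return dict(seen)


transitions = observed_transitions()
```

With the full state `(pos, vel)` each step has exactly one successor. From position alone, an interior cell such as 2 is followed by either 1 or 3, so the observed process looks nondeterministic to the learner unless it retains some memory of earlier observations.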
