Terje Gobakken

Faculty of Environmental Sciences and Natural Resource Management, Norwegian University of Life Sciences, NMBU, Ås, Norway

Assessing airborne laser scanning and aerial photogrammetry for deep learning-based stand delineation

Feb 25, 2026

Abstract:Accurate forest stand delineation is essential for forest inventory and management but remains a largely manual and subjective process. A recent study has shown that deep learning can produce stand delineations comparable to expert interpreters when combining aerial imagery and airborne laser scanning (ALS) data. However, temporal misalignment between data sources limits operational scalability. Canopy height models (CHMs) derived from digital photogrammetry (DAP) offer better temporal alignment but may smooth the canopy surface and canopy gaps, raising the question of whether they can reliably replace ALS-derived CHMs. Similarly, the inclusion of a digital terrain model (DTM) has been suggested to improve delineation performance, but has remained untested in published literature. Using expert-delineated forest stands as reference data, we assessed a U-Net-based semantic segmentation framework with municipality-level cross-validation across six municipalities in southeastern Norway. We compared multispectral aerial imagery combined with (i) an ALS-derived CHM, (ii) a DAP-derived CHM, and (iii) a DAP-derived CHM in combination with a DTM. Results showed comparable performance across all data combinations, reaching overall accuracy values of 0.90-0.91. Agreement between model predictions was substantially larger than agreement with the reference data, highlighting both model consistency and the inherent subjectivity of stand delineation. The similar performance of DAP-CHMs, despite the reduced structural detail, and the lack of improvement from adding the DTM indicate that the framework is resilient to variations in input data. These findings indicate that large datasets for deep learning-based stand delineations can be assembled using projects including temporally aligned ALS data and DAP point clouds.
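The municipality-level cross-validation mentioned in the abstract can be illustrated with a minimal sketch. It assumes a leave-one-municipality-out scheme, which the abstract does not spell out, and the function name `leave_one_group_out` and the group labels are hypothetical, not taken from the study.

```python
def leave_one_group_out(groups):
    """Yield (held_out, train_idx, test_idx) with one group held out per fold.

    `groups` holds one label per sample (for example, the municipality a
    training tile belongs to). Assumption: each fold holds out exactly one
    group; the abstract only states that validation was municipality-level.
    """
    for held_out in sorted(set(groups)):
        train_idx = [i for i, g in enumerate(groups) if g != held_out]
        test_idx = [i for i, g in enumerate(groups) if g == held_out]
        yield held_out, train_idx, test_idx


# Hypothetical tile-to-municipality assignment for illustration only.
tile_groups = ["A", "A", "B", "C"]
folds = list(leave_one_group_out(tile_groups))
```

Splitting by municipality rather than by individual tile keeps spatially adjacent tiles from appearing in both the training and the test set of the same fold.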

Semantic segmentation of forest stands using deep learning

Apr 03, 2025

Abstract:Forest stands are the fundamental units in forest management inventories, silviculture, and financial analysis within operational forestry. Over the past two decades, a common method for mapping stand borders has involved delineation through manual interpretation of stereographic aerial images. This is a time-consuming and subjective process, limiting operational efficiency and introducing inconsistencies. Substantial effort has been devoted to automating the process, using various algorithms together with aerial images and canopy height models constructed from airborne laser scanning (ALS) data, but manual interpretation remains the preferred method. Deep learning (DL) methods have demonstrated great potential in computer vision, yet their application to forest stand delineation remains unexplored in published research. This study presents a novel approach, framing stand delineation as a multiclass segmentation problem and applying a U-Net-based DL framework. The model was trained and evaluated using multispectral images, ALS data, and an existing stand map created by an expert interpreter. Performance was assessed on independent data using overall accuracy, a standard metric for classification tasks that measures the proportion of correctly classified pixels. The model achieved an overall accuracy of 0.73. These results demonstrate strong potential for DL in automated stand delineation. However, a few key challenges were noted, especially for complex forest environments.
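Overall accuracy, as described in the abstract, is the share of pixels whose predicted class matches the reference class. A minimal sketch with NumPy follows; the function name and the tiny example arrays are illustrative assumptions and not data from the study.

```python
import numpy as np


def overall_accuracy(predicted, reference):
    """Return the proportion of pixels whose predicted class equals the reference."""
    predicted = np.asarray(predicted)
    reference = np.asarray(reference)
    if predicted.shape != reference.shape:
        raise ValueError("predicted and reference maps must have the same shape")
    return float(np.mean(predicted == reference))


# Illustrative 2x2 class maps: three of four pixels agree.
pred_map = [[0, 1], [2, 2]]
ref_map = [[0, 1], [1, 2]]
oa = overall_accuracy(pred_map, ref_map)
```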

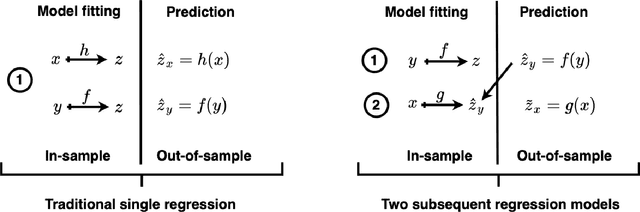

Forest Parameter Prediction by Multiobjective Deep Learning of Regression Models Trained with Pseudo-Target Imputation

Jun 19, 2023

Abstract:In prediction of forest parameters with data from remote sensing (RS), regression models have traditionally been trained on a small sample of ground reference data. This paper proposes to impute this sample of true prediction targets with data from an existing RS-based prediction map that we consider as pseudo-targets. This substantially increases the amount of target training data and leverages the use of deep learning (DL) for semi-supervised regression modelling. We use prediction maps constructed from airborne laser scanning (ALS) data to provide accurate pseudo-targets and free data from Sentinel-1's C-band synthetic aperture radar (SAR) as regressors. A modified U-Net architecture is adapted with a selection of different training objectives. We demonstrate that when a judicious combination of loss functions is used, the semi-supervised imputation strategy produces results that surpass traditional ALS-based regression models, even though Sentinel-1 data are considered inferior for forest monitoring. These results are consistent for experiments on above-ground biomass prediction in Tanzania and stem volume prediction in Norway, representing a diversity in parameters and forest types that emphasises the robustness of the approach.
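The pseudo-target imputation idea can be sketched as follows: pixels with a true ground reference keep it, and all other pixels take the value from the existing prediction map. The weighted squared-error loss below is an assumed stand-in for illustration; the abstract does not specify which loss functions the paper combines, and the function names and weights are hypothetical.

```python
import numpy as np


def impute_targets(true_targets, pseudo_targets):
    """Fill missing (NaN) true targets with pseudo-targets.

    Returns the combined target map and a boolean mask marking true targets.
    """
    true_targets = np.asarray(true_targets, dtype=float)
    pseudo_targets = np.asarray(pseudo_targets, dtype=float)
    is_true = ~np.isnan(true_targets)
    combined = np.where(is_true, true_targets, pseudo_targets)
    return combined, is_true


def weighted_mse(prediction, combined, is_true, w_true=1.0, w_pseudo=0.5):
    """Weighted mean squared error; the weights are illustrative assumptions."""
    prediction = np.asarray(prediction, dtype=float)
    weights = np.where(is_true, w_true, w_pseudo)
    squared_error = (prediction - combined) ** 2
    return float(np.sum(weights * squared_error) / np.sum(weights))


# One pixel without ground reference (NaN) and one with it.
combined, is_true = impute_targets([np.nan, 10.0], [4.0, 8.0])
loss = weighted_mse([6.0, 10.0], combined, is_true)
```

Down-weighting pseudo-target pixels is one plausible way to let the scarce true targets carry more influence per pixel; other weightings are equally conceivable.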

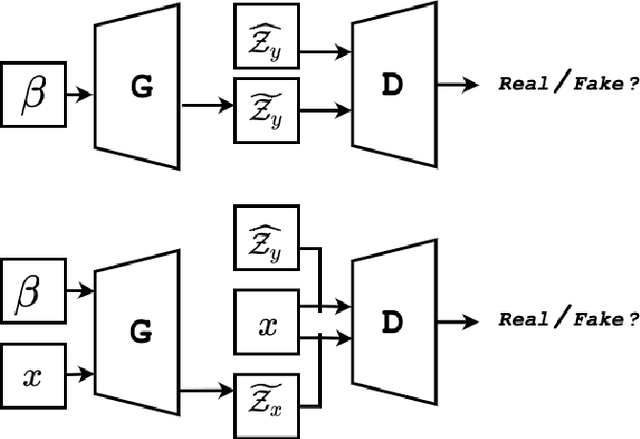

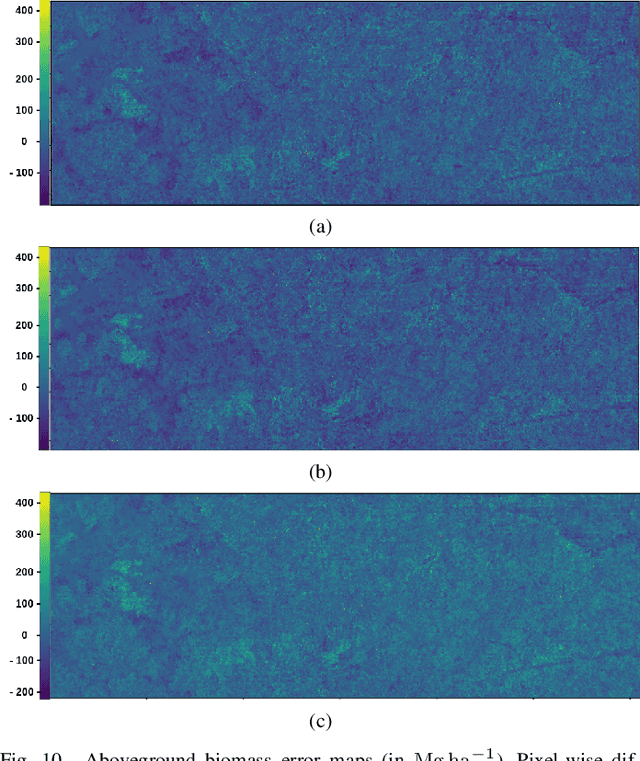

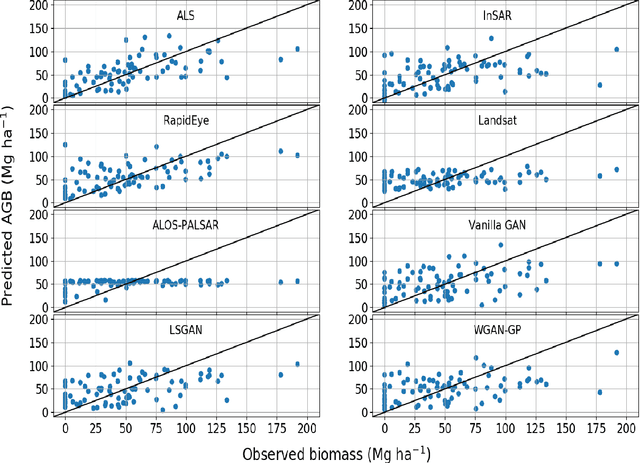

Constructing Forest Biomass Prediction Maps from Radar Backscatter by Sequential Regression with a Conditional Generative Adversarial Network

Jun 21, 2021

Abstract:This paper studies construction of above-ground biomass (AGB) prediction maps from synthetic aperture radar (SAR) intensity images. The purpose is to improve traditional regression models based on SAR intensity, trained with a limited amount of AGB in situ measurements. Although they are costly to collect, data from airborne laser scanning (ALS) sensors are highly correlated with AGB. Therefore, we propose using AGB predictions based on ALS data as surrogate response variables for SAR data in a sequential modelling fashion. This increases the amount of training data dramatically. To model the regression function between SAR intensity and ALS-predicted AGB we propose to utilise a conditional generative adversarial network (cGAN), i.e. the Pix2Pix convolutional neural network. This enables the recreation of existing ALS-based AGB prediction maps. The generated synthesised ALS-based AGB predictions are evaluated qualitatively and quantitatively against ALS-based AGB predictions retrieved from a traditional non-sequential regression model trained in the same area. Results show that the proposed architecture manages to capture characteristics of the actual data. This suggests that the use of ALS-guided generative models is a promising avenue for AGB prediction from SAR intensity. Further research in this area has the potential to provide both large-scale and low-cost predictions of AGB.
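The sequential modelling idea can be sketched in two stages: first regress field-measured AGB on ALS data, then use the ALS-based AGB predictions as surrogate responses for a SAR-based model. The sketch below deliberately swaps the paper's cGAN (Pix2Pix) for ordinary linear least squares to keep the example small, and all variable names and numbers are synthetic assumptions.

```python
import numpy as np


def fit_line(x, y):
    """Fit y = slope * x + intercept by least squares."""
    slope, intercept = np.polyfit(x, y, deg=1)
    return slope, intercept


def sequential_regression(als, sar, field_idx, field_agb):
    """Two-stage fit: ALS -> AGB on field plots, then SAR -> ALS-predicted AGB."""
    als = np.asarray(als, dtype=float)
    sar = np.asarray(sar, dtype=float)
    # Stage 1: few field plots relate an ALS metric to measured AGB.
    s1_slope, s1_intercept = fit_line(als[field_idx], np.asarray(field_agb, dtype=float))
    # Surrogate responses: ALS-based AGB predictions for every pixel.
    surrogate_agb = s1_slope * als + s1_intercept
    # Stage 2: all pixels relate SAR intensity to the surrogate AGB.
    return fit_line(sar, surrogate_agb)


# Synthetic data: five pixels, only two of them with field measurements.
als_metric = [1.0, 2.0, 3.0, 4.0, 5.0]
sar_db = [-10.0, -9.0, -8.0, -7.0, -6.0]
slope, intercept = sequential_regression(als_metric, sar_db, [0, 4], [20.0, 100.0])
agb_at_minus_8_db = slope * -8.0 + intercept
```

The second stage is trained on five samples instead of two, which mirrors, at toy scale, how the surrogate responses enlarge the training set.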
