Tal Feldman

Know Your Scientist: KYC as Biosecurity Infrastructure

Feb 05, 2026

Abstract: Biological AI tools for protein design and structure prediction are advancing rapidly, creating dual-use risks that existing safeguards cannot adequately address. Current model-level restrictions, including keyword filtering, output screening, and content-based access denials, are fundamentally ill-suited to biology, where reliable function prediction remains beyond reach and novel threats evade detection by design. We propose a three-tier Know Your Customer (KYC) framework, inspired by anti-money laundering (AML) practices in the financial sector, that shifts governance from content inspection to user verification and monitoring. Tier I leverages research institutions as trust anchors to vouch for affiliated researchers and assume responsibility for vetting. Tier II applies output screening through sequence homology searches and functional annotation. Tier III monitors behavioral patterns to detect anomalies inconsistent with declared research purposes. This layered approach preserves access for legitimate researchers while raising the cost of misuse through institutional accountability and traceability. The framework can be implemented immediately using existing institutional infrastructure, requiring no new legislation or regulatory mandates.

On the Basis of Sex: A Review of Gender Bias in Machine Learning Applications

Apr 06, 2021

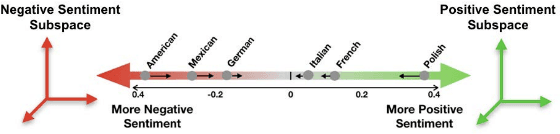

Abstract: Machine Learning models have been deployed across almost every aspect of society, often in situations that affect the social welfare of many individuals. Although these models offer streamlined solutions to large problems, they may contain biases and treat groups or individuals unfairly. To our knowledge, this review is one of the first to focus specifically on gender bias in applications of machine learning. We first introduce several examples of machine learning gender bias in practice. We then detail the most widely used formalizations of fairness in order to address how to make machine learning models fairer. Specifically, we discuss the most influential bias mitigation algorithms as applied to domains in which models have a high propensity for gender discrimination. We group these algorithms into two overarching approaches -- removing bias from the data directly and removing bias from the model through training -- and we present representative examples of each. As society increasingly relies on artificial intelligence to help in decision-making, addressing gender biases present in these models is imperative. To provide readers with the tools to assess the fairness of machine learning models and mitigate the biases present in them, we discuss multiple open-source packages for fairness in AI.
