Sylvester Kaczmarek

Benchmarking the Energy Cost of Assurance in Neuromorphic Edge Robotics

Mar 14, 2026

Abstract:Deploying trustworthy artificial intelligence on edge robotics imposes a difficult trade-off between high-assurance robustness and energy sustainability. Traditional defense mechanisms against adversarial attacks typically incur significant computational overhead, threatening the viability of power-constrained platforms in environments such as cislunar space. This paper quantifies the energy cost of assurance in event-driven neuromorphic systems. We benchmark the Hierarchical Temporal Defense (HTD) framework on the BrainChip Akida AKD1000 processor against a suite of adversarial temporal attacks. We demonstrate that unlike traditional deep learning defenses which often degrade efficiency significantly with increased robustness, the event-driven nature of the proposed architecture achieves a superior trade-off. The system reduces gradient-based adversarial success rates from 82.1% to 18.7% and temporal jitter success rates from 75.8% to 25.1%, while maintaining an energy consumption of approximately 45 microjoules per inference. We report a counter-intuitive reduction in dynamic power consumption in the fully defended configuration, attributed to volatility-gated plasticity mechanisms that induce higher network sparsity. These results provide empirical evidence that neuromorphic sparsity enables sustainable and high-assurance edge autonomy.

Towards Automated Satellite Conjunction Management with Bayesian Deep Learning

Dec 23, 2020

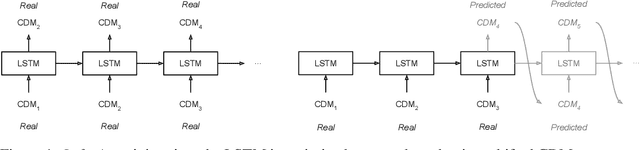

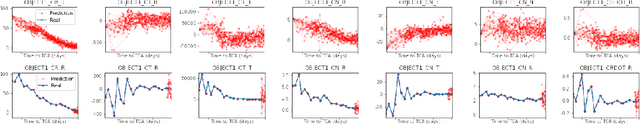

Abstract:After decades of space travel, low Earth orbit is a junkyard of discarded rocket bodies, dead satellites, and millions of pieces of debris from collisions and explosions. Objects in high enough altitudes do not re-enter and burn up in the atmosphere, but stay in orbit around Earth for a long time. With a speed of 28,000 km/h, collisions in these orbits can generate fragments and potentially trigger a cascade of more collisions known as the Kessler syndrome. This could pose a planetary challenge, because the phenomenon could escalate to the point of hindering future space operations and damaging satellite infrastructure critical for space and Earth science applications. As commercial entities place mega-constellations of satellites in orbit, the burden on operators conducting collision avoidance manoeuvres will increase. For this reason, development of automated tools that predict potential collision events (conjunctions) is critical. We introduce a Bayesian deep learning approach to this problem, and develop recurrent neural network architectures (LSTMs) that work with time series of conjunction data messages (CDMs), a standard data format used by the space community. We show that our method can be used to model all CDM features simultaneously, including the time of arrival of future CDMs, providing predictions of conjunction event evolution with associated uncertainties.

Spacecraft Collision Risk Assessment with Probabilistic Programming

Dec 18, 2020

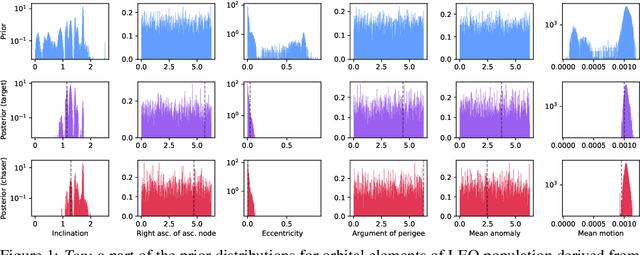

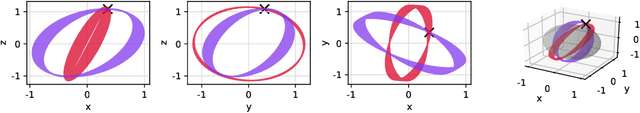

Abstract:Over 34,000 objects bigger than 10 cm in length are known to orbit Earth. Among them, only a small percentage are active satellites, while the rest of the population is made of dead satellites, rocket bodies, and debris that pose a collision threat to operational spacecraft. Furthermore, the predicted growth of the space sector and the planned launch of megaconstellations will add even more complexity, therefore causing the collision risk and the burden on space operators to increase. Managing this complex framework with internationally agreed methods is pivotal and urgent. In this context, we build a novel physics-based probabilistic generative model for synthetically generating conjunction data messages, calibrated using real data. By conditioning on observations, we use the model to obtain posterior distributions via Bayesian inference. We show that the probabilistic programming approach to conjunction assessment can help in making predictions and in finding the parameters that explain the observed data in conjunction data messages, thus shedding more light on key variables and orbital characteristics that more likely lead to conjunction events. Moreover, our technique enables the generation of physically accurate synthetic datasets of collisions, answering a fundamental need of the space and machine learning communities working in this area.
