Soheil Mehrabkhani

Fourier Transform Approach to Machine Learning III: Fourier Classification

Jan 03, 2020

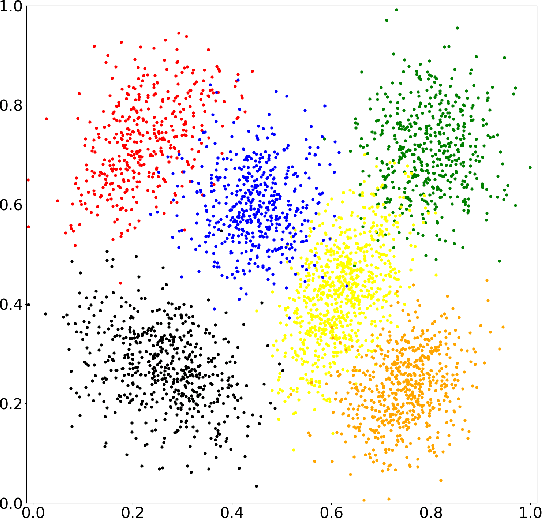

Abstract: We propose a Fourier-based learning algorithm for highly nonlinear multiclass classification. The algorithm uses a smoothing technique to calculate the probability distribution of all classes: the density distribution of each class is smoothed separately by a low-pass filter. The advantage of the Fourier representation is that it captures the nonlinearities of the data distribution without requiring a kernel function. Furthermore, in contrast to support vector machines, it allows a probabilistic interpretation of the classification, and it can also handle overlapping classes. Compared with logistic regression, it does not require feature engineering. In general, its computational performance is also very good for large data sets, and in contrast to other algorithms, the typical overfitting problem does not occur. The capability of the algorithm is demonstrated for multiclass classification with overlapping classes and highly nonlinear class distributions.
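The idea of smoothing each class density separately and then normalising can be illustrated in one dimension. The following is only a minimal sketch under stated assumptions, not the paper's implementation: the abstract does not specify the filter, so a Gaussian low-pass with a hand-picked width `sigma` is assumed here, and the function name `class_probabilities`, the bin count, and the data range are hypothetical.

```python
import numpy as np

def class_probabilities(x, y, n_classes, n_bins=128, sigma=0.05, lo=0.0, hi=1.0):
    """Per-bin class probabilities from separately smoothed class densities.

    Assumptions (not taken from the paper): 1-D features in [lo, hi],
    a Gaussian low-pass filter, and a fixed smoothing width sigma.
    """
    # Frequencies (cycles per unit of x) of the real FFT on the bin grid.
    freqs = np.fft.rfftfreq(n_bins, d=(hi - lo) / n_bins)
    # Fourier transform of a Gaussian with standard deviation sigma.
    lowpass = np.exp(-2.0 * (np.pi * sigma * freqs) ** 2)

    dens = np.empty((n_classes, n_bins))
    for k in range(n_classes):
        # Histogram of class k: a discretised sum of Dirac impulses.
        hist, _ = np.histogram(x[y == k], bins=n_bins, range=(lo, hi))
        # Smooth in the Fourier domain (circular convolution on the grid).
        dens[k] = np.fft.irfft(np.fft.rfft(hist) * lowpass, n=n_bins)

    # Remove tiny negative values caused by floating-point round-off.
    dens = np.clip(dens, 0.0, None)
    total = dens.sum(axis=0)
    # Normalise per bin; fall back to a uniform guess where no density exists.
    probs = np.divide(dens, total,
                      out=np.full_like(dens, 1.0 / n_classes),
                      where=total > 0)
    return probs
```

Each column of the returned array sums to one, so overlapping classes yield intermediate probabilities instead of a hard decision; taking the `argmax` over the class axis gives a label for each bin.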

Clustering Optimization: Finding the Number and Centroids of Clusters by a Fourier-based Algorithm

Jun 03, 2019

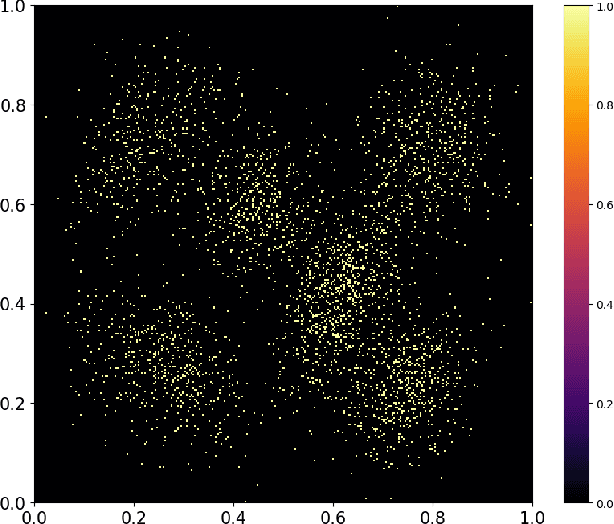

Abstract: We propose a Fourier-based approach for the optimization of several clustering algorithms. Mathematically, clustered data can be described by a density function represented by a Dirac mixture distribution. This density function can be smoothed by applying the Fourier transform and a Gaussian filter. The optimal standard deviation of the Gaussian filter is determined by a convergence criterion based on the correlation between the smoothed and the original density functions. In principle, the optimally smoothed density function exhibits local maxima that correspond to the cluster centroids. Thus, the complex task of finding the cluster centroids reduces to detecting the peaks of the smoothed density function. A multiple sliding windows procedure is used to detect the peaks. The remarkable accuracy of the proposed algorithm demonstrates its capability as a reliable general method for enhancing clustering performance, achieving global optimization, and removing the initialization problem found in many clustering methods.
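A one-dimensional illustration of the smoothing and peak-detection steps might look as follows. This is a simplified sketch, not the paper's algorithm: the filter width `sigma` is fixed by hand instead of being chosen by the correlation-based convergence criterion, and a plain neighbour comparison stands in for the multiple sliding windows procedure. The function names and the `min_rel_height` threshold are hypothetical.

```python
import numpy as np

def smoothed_density(points, n_bins=256, sigma=0.05, lo=0.0, hi=1.0):
    """Histogram the points and apply a Gaussian filter in the Fourier domain."""
    # The histogram is a discretised Dirac mixture: one impulse per data point.
    hist, edges = np.histogram(points, bins=n_bins, range=(lo, hi))
    freqs = np.fft.rfftfreq(n_bins, d=(hi - lo) / n_bins)
    # Fourier transform of a Gaussian with standard deviation sigma.
    gauss = np.exp(-2.0 * (np.pi * sigma * freqs) ** 2)
    smooth = np.fft.irfft(np.fft.rfft(hist) * gauss, n=n_bins)
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers, smooth

def find_centroids(centers, smooth, min_rel_height=0.1):
    """Return bin centres of local maxima above a fraction of the global maximum."""
    inner = smooth[1:-1]
    is_peak = (
        (inner > smooth[:-2])                       # higher than the left neighbour
        & (inner >= smooth[2:])                     # not lower than the right neighbour
        & (inner > min_rel_height * smooth.max())   # ignore round-off ripples
    )
    return centers[1:-1][is_peak]
```

For two well-separated groups of points, `find_centroids(*smoothed_density(points))` should return one value near each group's mean. Too small a `sigma` leaves spurious peaks and too large a `sigma` merges neighbouring clusters, which is the trade-off the convergence criterion in the abstract is meant to resolve.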

Fourier Transform Approach to Machine Learning

Apr 02, 2019

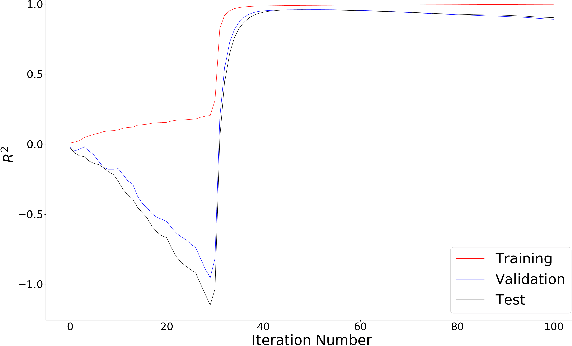

Abstract: We propose a supervised learning algorithm for machine learning applications. In contrast to model development in classical methods, which treat training, validation, and testing as separate steps, the presented approach uses a unified training and evaluation procedure based on iterative band filtering with a fast Fourier transform. The approach does not apply the method of least squares, so the ill-conditioned matrices typical of that method do not arise. The optimal model results from the convergence of the performance metric, which automatically prevents the usual underfitting and overfitting problems. The capability of the algorithm is investigated for noisy data, and the results demonstrate a reliable and powerful machine learning approach beyond the typical limits of classical methods.
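The notion of widening a frequency band until a performance metric stops improving can be sketched for a noisy signal sampled on a uniform grid. This is only a loose illustration: the abstract does not state the filter shape, the metric, or the stopping rule, so the hard low-pass cut-off, the explained-variance score, the tolerance `tol`, and the function name `fit_by_band_filtering` are all assumptions made here.

```python
import numpy as np

def fit_by_band_filtering(y, tol=0.05):
    """Widen a low-pass band step by step and keep the last cut-off that
    still improves the explained variance by more than tol.

    Assumptions (not from the paper): uniformly sampled 1-D data,
    a hard frequency cut-off, and explained variance as the metric.
    """
    n = len(y)
    spectrum = np.fft.rfft(y)
    total = np.sum((y - y.mean()) ** 2)

    def model_for(cutoff):
        kept = spectrum.copy()
        kept[cutoff + 1:] = 0.0          # discard everything above the cut-off
        return np.fft.irfft(kept, n=n)

    # Score of the model for every cut-off, from DC only up to the full band.
    scores = np.array([
        1.0 - np.sum((y - model_for(c)) ** 2) / total
        for c in range(len(spectrum))
    ])
    # gains[i] is the improvement obtained by adding frequency bin i + 1.
    gains = np.diff(scores)
    significant = np.nonzero(gains > tol)[0]
    cutoff = int(significant[-1]) + 1 if significant.size else 0
    return model_for(cutoff), cutoff
```

With a sine wave of three cycles plus moderate white noise, the selected cut-off should be bin 3, because each additional noise-only bin adds far less than `tol` to the score. The tolerance is a tuning choice in this sketch, whereas the abstract describes the convergence of the metric as selecting the model automatically.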
