Simon Diemert

Can Large Language Models assist in Hazard Analysis?

Mar 25, 2023

Abstract: Large Language Models (LLMs), such as GPT-3, have demonstrated remarkable natural language processing and generation capabilities and have been applied to a variety of tasks, such as source code generation. This paper explores the potential of integrating LLMs into hazard analysis for safety-critical systems, a process which we refer to as co-hazard analysis (CoHA). In CoHA, a human analyst interacts with an LLM via a context-aware chat session and uses the responses to support elicitation of possible hazard causes. In this experiment, we explore CoHA with three increasingly complex versions of a simple system, using OpenAI's ChatGPT service. The quality of ChatGPT's responses was systematically assessed to determine the feasibility of CoHA given the current state of LLM technology. The results suggest that LLMs may be useful for supporting human analysts performing hazard analysis.

Safety-Critical Adaptation in Self-Adaptive Systems

Sep 30, 2022

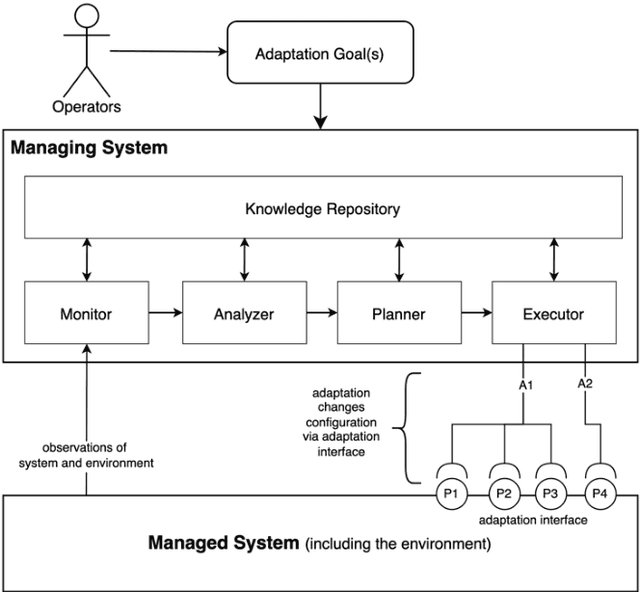

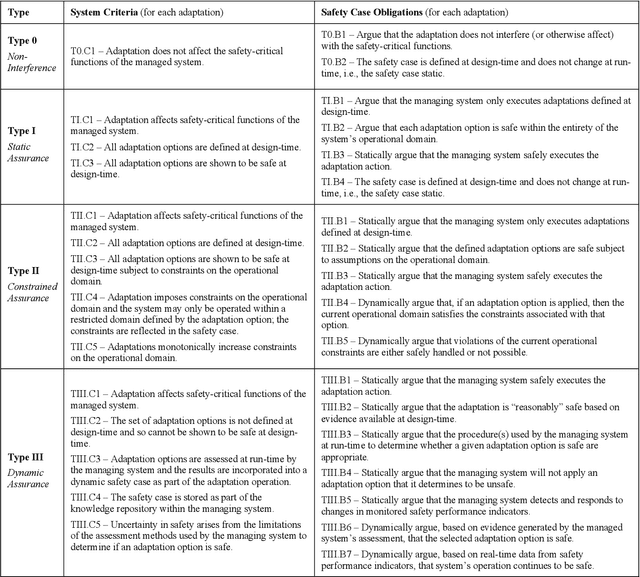

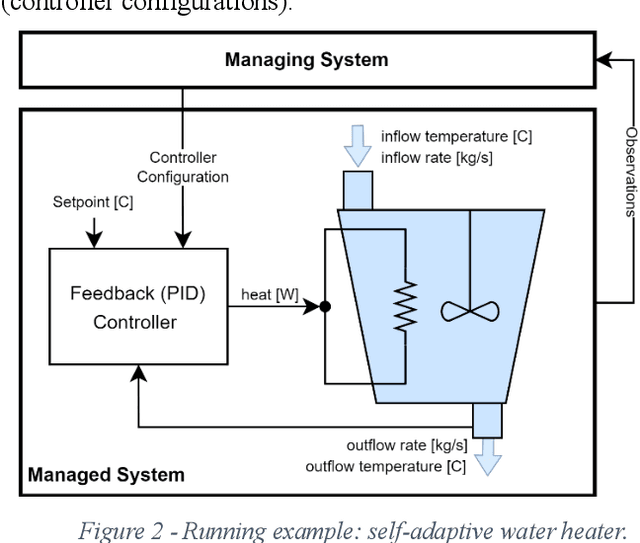

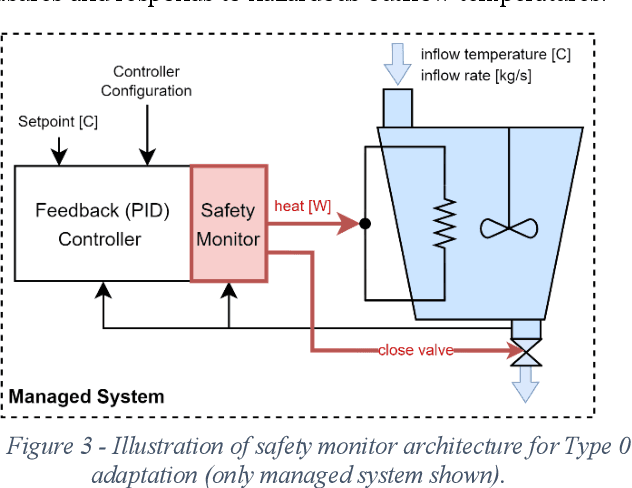

Abstract: Modern systems are designed to operate in increasingly variable and uncertain environments. Not only are these environments complex, in the sense that they contain a tremendous number of variables, but they also change over time. Systems must be able to adjust their behaviour at run-time to manage these uncertainties. These self-adaptive systems have been studied extensively. This paper proposes a definition of a safety-critical self-adaptive system and then describes a taxonomy for classifying adaptations into different types based on their impact on the system's safety and the system's safety case. The taxonomy expresses criteria for classification and then describes specific criteria that the safety case for a self-adaptive system must satisfy, depending on the type of adaptations performed. Each type in the taxonomy is illustrated using the example of a safety-critical self-adaptive water heating system.
