Shridhar Ravikumar

Large-Scale High-Quality 3D Gaussian Head Reconstruction from Multi-View Captures

May 05, 2026

Abstract: We propose HeadsUp, a scalable feed-forward method for reconstructing high-quality 3D Gaussian heads from large-scale multi-camera setups. Our method employs an efficient encoder-decoder architecture that compresses input views into a compact latent representation. This latent representation is then decoded into a set of UV-parameterized 3D Gaussians anchored to a neutral head template. This UV representation decouples the number of 3D Gaussians from the number and resolution of input images, enabling training with many high-resolution input views. We train and evaluate our model on an internal dataset with more than 10,000 subjects, which is an order of magnitude larger than existing multi-view human head datasets. HeadsUp achieves state-of-the-art reconstruction quality and generalizes to novel identities without test-time optimization. We extensively analyze the scaling behavior of our model across identities, views, and model capacity, revealing practical insights for quality-compute trade-offs. Finally, we highlight the strength of our latent space by showcasing two downstream applications: generating novel 3D identities and animating the 3D heads with expression blendshapes.
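The decoupling claim in this abstract can be illustrated with a toy sketch. Everything below is an assumption made for illustration and is not the HeadsUp architecture: the hand-crafted per-view features, the random matrices `W_enc` and `W_dec` standing in for learned weights, the sphere used as a "template", and the 14-value Gaussian parameter layout are all hypothetical. The only point shown is that pooling views into a fixed-size latent and decoding onto a fixed UV grid yields the same number of Gaussians regardless of how many views, or what resolution, go in.

```python
import numpy as np

rng = np.random.default_rng(0)

LATENT_DIM = 32           # assumed size of the compact latent
UV_RES = 16               # assumed UV grid resolution -> UV_RES**2 Gaussians
PARAMS_PER_GAUSSIAN = 14  # assumed layout: 3 offset, 3 log-scale, 4 rotation, 1 opacity, 3 colour

# Hypothetical stand-ins for learned encoder/decoder weights.
W_enc = rng.normal(size=(6, LATENT_DIM)) * 0.1
W_dec = rng.normal(size=(LATENT_DIM, UV_RES * UV_RES * PARAMS_PER_GAUSSIAN)) * 0.1


def template_positions(res):
    """Toy 'neutral template': points on a unit sphere indexed by a UV grid."""
    u, v = np.meshgrid(
        np.linspace(0.0, 2.0 * np.pi, res, endpoint=False),
        np.linspace(0.1, np.pi - 0.1, res),
        indexing="ij",
    )
    return np.stack([np.sin(v) * np.cos(u), np.sin(v) * np.sin(u), np.cos(v)], axis=-1)


TEMPLATE = template_positions(UV_RES)  # (UV_RES, UV_RES, 3)


def encode(views):
    """views: (n_views, H, W, 3). Pools all views into one fixed-size latent."""
    feats = np.concatenate(
        [views.mean(axis=(1, 2)), views.std(axis=(1, 2))], axis=1
    )  # (n_views, 6): per-view colour statistics as toy features
    return np.tanh(feats @ W_enc).mean(axis=0)  # (LATENT_DIM,)


def decode(latent):
    """Decodes the latent into per-texel Gaussian parameters on the UV grid."""
    uv = (latent @ W_dec).reshape(UV_RES, UV_RES, PARAMS_PER_GAUSSIAN)
    flat = uv.reshape(-1, PARAMS_PER_GAUSSIAN)
    return {
        # Gaussian centres are the template positions plus a small decoded offset.
        "position": (TEMPLATE + 0.05 * uv[..., :3]).reshape(-1, 3),
        "log_scale": flat[:, 3:6],
        "rotation": flat[:, 6:10],
        "opacity": flat[:, 10:11],
        "color": flat[:, 11:14],
    }


few = decode(encode(rng.random((4, 64, 64, 3))))
many = decode(encode(rng.random((16, 128, 128, 3))))
print(few["position"].shape, many["position"].shape)
```

Both calls produce `UV_RES**2` Gaussians; only the cost of `encode` grows with the number and size of the input views.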

Lightweight Markerless Monocular Face Capture with 3D Spatial Priors

Jan 16, 2019

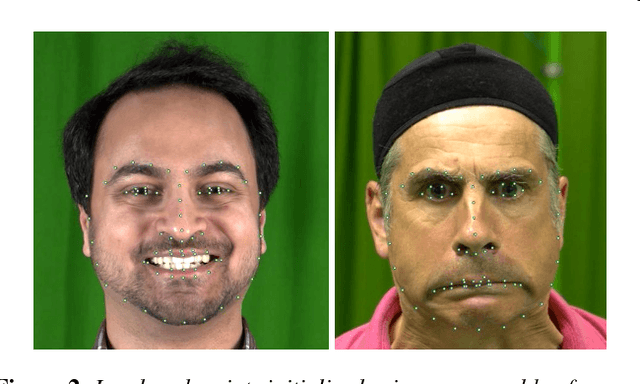

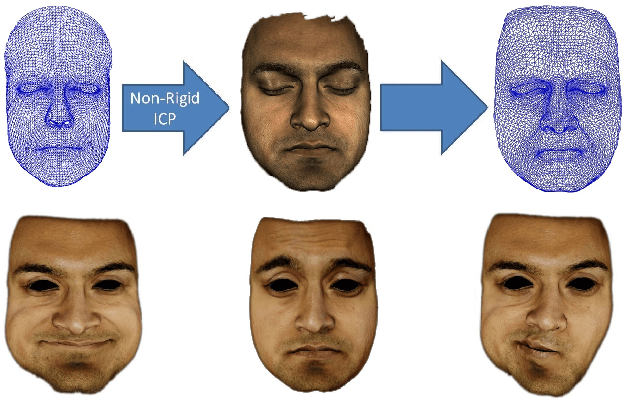

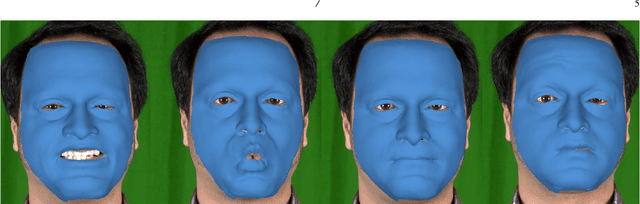

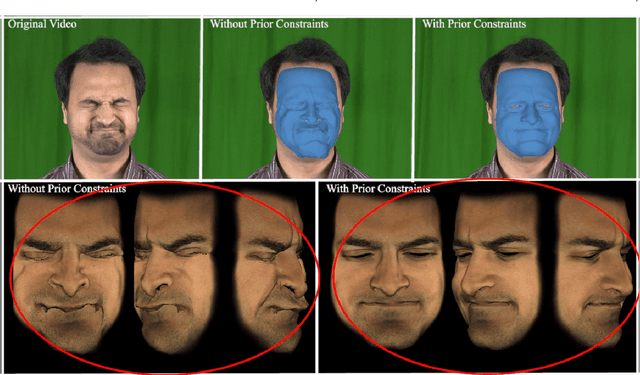

Abstract: We present a simple, lightweight, markerless facial performance capture framework that uses only a monocular video input and combines Active Appearance Models for feature tracking and prior constraints on 3D shapes into an integrated objective function. 2D monocular inputs inherently lack information along the depth axis and can lead to physically implausible solutions. To address this loss of information, we enforce a constraint on our objective function within a probabilistic framework that uses preexisting animations obtained from accurate 3D tracking systems, thus achieving more plausible results. Our system fits a Blendshape model to tracked 2D features while also handling noise in the estimation of features and camera parameters. We learn separate constraints for the upper and lower regions of the face, thus maintaining flexibility. We show that, using this approach, we can obtain a significant improvement in tracking, especially along the depth dimension. Our method uses easily obtainable prior animation data. We show that our method can generate convincing animations using only a monocular video input. We quantitatively evaluate our results by comparing them with an approach that uses a monocular input without our spatial constraints, and show that our results are closer to the ground-truth geometry. Finally, we also evaluate the effect that the choice of the Blendshape set has on the results of the solver by solving for a different set of Blendshapes and quantitatively comparing the results with our previous results and with the ground truth. We show that while the choice of Blendshapes does make a difference, the use of our spatial constraints generates results that are closer to the ground truth.
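The idea of fitting a Blendshape model to 2D features under a prior learned from existing animations can be sketched as a small regularised least-squares problem. This is a minimal illustration under stated assumptions, not the paper's objective: it assumes an orthographic camera, 2D landmarks that are already tracked, and a single Gaussian prior over blendshape weights estimated from synthetic "prior animation" data. The names `deltas`, `prior_w`, `fit_with_prior`, and `noise_var` are hypothetical, and all data is generated synthetically. One blendshape is constructed to move landmarks only along depth, so it is invisible in the 2D projection and can only be recovered through the prior.

```python
import numpy as np

rng = np.random.default_rng(0)
n_landmarks, n_shapes = 20, 3

# Synthetic linear blendshape model: neutral face plus per-shape displacements.
neutral = rng.normal(size=(n_landmarks, 3))
deltas = rng.normal(size=(n_shapes, n_landmarks, 3)) * 0.2
deltas[2, :, :2] = 0.0  # shape 2 moves landmarks only along depth (z)


def project(points):
    """Assumed orthographic camera: keep x and y, drop z."""
    return points[..., :2]


# Synthetic "prior animations": weight vectors in which shape 2 co-occurs with shape 0.
prior_w = rng.uniform(0.0, 1.0, size=(500, n_shapes))
prior_w[:, 2] = prior_w[:, 0] + 0.02 * rng.normal(size=500)
mu = prior_w.mean(axis=0)
cov_inv = np.linalg.inv(np.cov(prior_w, rowvar=False))

# Linear system relating weights to 2D landmark displacements.
A = project(deltas).reshape(n_shapes, -1).T  # (2 * n_landmarks, n_shapes)


def fit_without_prior(landmarks_2d):
    """Plain least squares; the depth-only shape is unobservable here."""
    b = (landmarks_2d - project(neutral)).ravel()
    return np.linalg.lstsq(A, b, rcond=None)[0]


def fit_with_prior(landmarks_2d, noise_var):
    """MAP estimate: minimise |A w - b|^2 + noise_var * (w - mu)^T cov_inv (w - mu)."""
    b = (landmarks_2d - project(neutral)).ravel()
    lhs = A.T @ A + noise_var * cov_inv
    rhs = A.T @ b + noise_var * (cov_inv @ mu)
    return np.linalg.solve(lhs, rhs)


# Simulate noisy 2D observations of a known expression.
w_true = np.array([0.6, 0.3, 0.6])
sigma = 0.005
face_3d = neutral + np.einsum("k,kij->ij", w_true, deltas)
observed = project(face_3d) + sigma * rng.normal(size=(n_landmarks, 2))

w_ls = fit_without_prior(observed)
w_map = fit_with_prior(observed, noise_var=sigma**2)
print("depth-shape error without prior:", abs(w_ls[2] - w_true[2]))
print("depth-shape error with prior:   ", abs(w_map[2] - w_true[2]))
```

In this toy setup the unconstrained fit leaves the depth-only weight at zero, while the prior pulls it toward values consistent with the prior animations. Learning separate priors for the upper and lower face, as the abstract describes, would amount to fitting one such Gaussian per region; that is not shown here.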
