Serap Kırbız

Improving Driver Drowsiness Detection via Personalized EAR/MAR Thresholds and CNN-Based Classification

Apr 24, 2026

Abstract:Driver drowsiness is a major cause of traffic accidents worldwide, posing a serious threat to public safety. Vision-based driver monitoring systems often rely on fixed Eye Aspect Ratio (EAR) and Mouth Aspect Ratio (MAR) thresholds; however, such fixed values frequently fail to generalize across individuals due to variations in facial structure, illumination, and driving conditions. This paper proposes a personalized driver drowsiness detection system that monitors eyelid movements, head position, and yawning behavior in real time and provides warnings when signs of fatigue are detected. The system employs driver-specific EAR and MAR thresholds, calibrated before driving, to improve classical metric-based detection. In addition, deep learning-based Convolutional Neural Network (CNN) models are integrated to enhance accuracy in challenging scenarios. The system is evaluated using publicly available datasets as well as a custom dataset collected under diverse lighting conditions, head poses, and user characteristics. Experimental results show that personalized thresholding improves detection accuracy by 2-3% compared to fixed thresholds, while CNN-based classification achieves 99.1% accuracy for eye state detection and 98.8% for yawning detection, demonstrating the effectiveness of combining classical metrics with deep learning for robust real-time driver monitoring.
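The driver-specific thresholding described in this abstract can be illustrated with a minimal sketch. The EAR formula below is the commonly used six-landmark definition; the calibration rule (midpoint between mean open-eye and closed-eye EAR), the consecutive-frame count, and all function names are assumptions made for illustration, since the abstract does not specify how the thresholds are calibrated.

```python
import math
from statistics import mean


def eye_aspect_ratio(pts):
    """EAR from six (x, y) eye landmarks p1..p6 (common 6-point eye model).

    p1 and p4 are the eye corners; (p2, p6) and (p3, p5) are the
    upper/lower eyelid pairs.
    """
    p1, p2, p3, p4, p5, p6 = pts
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def calibrate_threshold(open_ears, closed_ears):
    """Hypothetical calibration rule (an assumption, not the paper's):
    midpoint between the driver's mean open-eye and closed-eye EAR."""
    return (mean(open_ears) + mean(closed_ears)) / 2.0


def is_drowsy(ear_series, threshold, min_consecutive=3):
    """Flag drowsiness when EAR stays below the threshold for
    min_consecutive frames in a row (frame count is an assumption)."""
    run = 0
    for ear in ear_series:
        run = run + 1 if ear < threshold else 0
        if run >= min_consecutive:
            return True
    return False
```

The same pattern would apply to MAR with mouth landmarks, using a threshold that flags values above rather than below the calibrated level.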

Improving Facial Emotion Recognition through Dataset Merging and Balanced Training Strategies

Apr 22, 2026

Abstract:In this paper, a deep learning framework based on deep convolutional networks is proposed for automatic facial emotion recognition. To increase the generalization ability and robustness of the method, the dataset size is increased by merging three publicly available facial emotion datasets: CK+, FER+ and KDEF. Despite the increase in dataset size, the minority classes still suffer from an insufficient number of training samples, leading to data imbalance. The data imbalance problem is mitigated by online and offline augmentation techniques and random weighted sampling. Experimental results demonstrate that the proposed method can recognize the seven basic emotions with 82% accuracy. The results demonstrate the effectiveness of the proposed approach in tackling the challenges of data imbalance and improving classification performance in facial emotion recognition.
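As a rough illustration of random weighted sampling for class imbalance, the sketch below draws training indices with probability inversely proportional to class frequency, so each class is expected to contribute equally to a batch. The inverse-frequency weighting and the function names are assumptions; the abstract does not state which weighting scheme is used.

```python
import random
from collections import Counter


def inverse_frequency_weights(labels):
    """One weight per sample: 1 / (number of samples in its class)."""
    counts = Counter(labels)
    return [1.0 / counts[y] for y in labels]


def sample_balanced_indices(labels, k, seed=0):
    """Draw k indices with replacement so that every class has the
    same total sampling probability, regardless of its size."""
    rng = random.Random(seed)
    weights = inverse_frequency_weights(labels)
    return rng.choices(range(len(labels)), weights=weights, k=k)
```

With 90 samples of one emotion and 10 of another, roughly half of the drawn indices would be expected to come from the minority class.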

Unsupervised Source Separation via Self-Supervised Training

Feb 08, 2022

Abstract:We introduce two novel unsupervised (blind) source separation methods, which involve self-supervised training from single-channel two-source speech mixtures without any access to the ground truth source signals. Our first method employs permutation invariant training (PIT) to separate artificially-generated mixtures of the original mixtures back into the original mixtures, which we named mixture permutation invariant training (MixPIT). We found this challenging objective to be a valid proxy task for learning to separate the underlying sources. We improve upon this first method by creating mixtures of source estimates and employing PIT to separate these new mixtures in a cyclic fashion. We named this second method cyclic mixture permutation invariant training (MixCycle), where cyclic refers to the fact that we use the same model to produce artificial mixtures and to learn from them continuously. We show that MixPIT outperforms a common baseline (MixIT) on our small dataset (SC09Mix), and they have comparable performance on a standard dataset (LibriMix). Strikingly, we also show that MixCycle surpasses the performance of supervised PIT by being data-efficient, thanks to its inherent data augmentation mechanism. To the best of our knowledge, no other purely unsupervised method is able to match or exceed the performance of supervised training.
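The MixPIT objective described above can be sketched in a few lines: two mixtures are summed into a mixture of mixtures, a model produces two estimates, and a permutation invariant loss compares those estimates with the two original mixtures. This is only a sketch under stated assumptions: signals are plain Python lists, mean squared error stands in for whatever loss the authors use, and `model` is a hypothetical callable returning a list of two estimated signals.

```python
from itertools import permutations


def mse(a, b):
    """Mean squared error between two equal-length signals."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def pit_loss(estimates, targets):
    """Permutation invariant loss: the smallest average MSE over all
    assignments of estimates to targets."""
    n = len(targets)
    return min(
        sum(mse(estimates[p[i]], targets[i]) for i in range(n)) / n
        for p in permutations(range(n))
    )


def mixpit_loss(mix_a, mix_b, model):
    """MixPIT-style proxy task: separate a sum of two mixtures back
    into the two original mixtures. `model` is a hypothetical callable."""
    mixture_of_mixtures = [a + b for a, b in zip(mix_a, mix_b)]
    estimates = model(mixture_of_mixtures)
    return pit_loss(estimates, [mix_a, mix_b])
```

Because the loss takes the minimum over permutations, the model is not penalized for returning the two estimates in either order.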

Weak Label Supervision for Monaural Source Separation Using Non-negative Denoising Variational Autoencoders

Nov 05, 2018

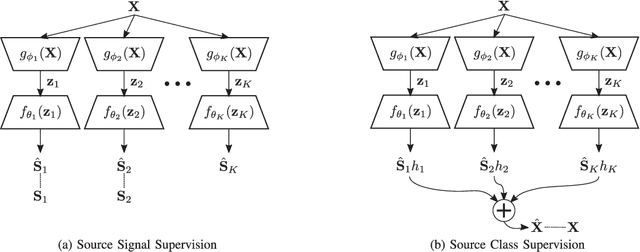

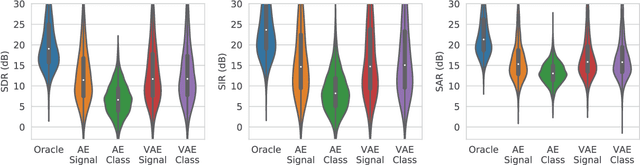

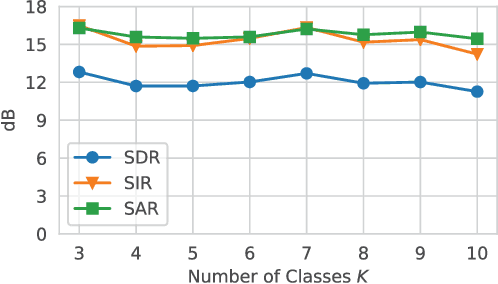

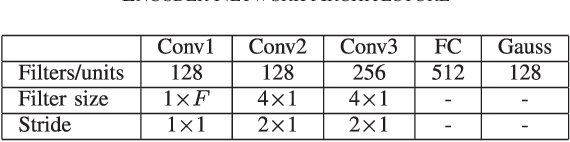

Abstract:Deep learning models are very effective in source separation when large amounts of labeled data are available. However, it is not always possible to have carefully labeled datasets. In this paper, we propose a weak supervision method that only uses class information rather than source signals for learning to separate short utterance mixtures. We associate a variational autoencoder (VAE) with each class within a non-negative model. We demonstrate that deep convolutional VAEs provide a prior model to identify complex signals in a sound mixture without having access to any source signal. We show that the separation results are on par with source signal supervision.