Sasanka Sekhar Chanda

An Algorithm to Effect Prompt Termination of Myopic Local Search on Kauffman's NK Landscape

May 11, 2021

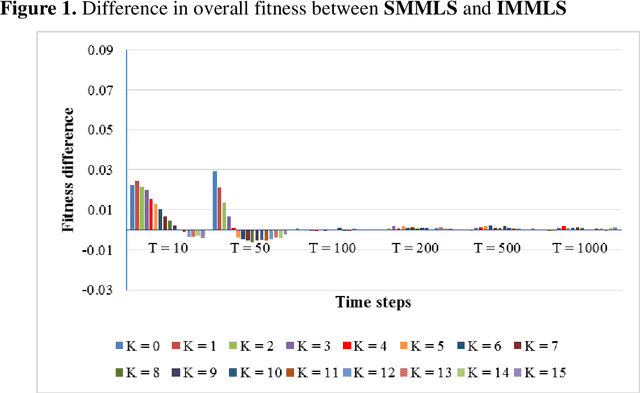

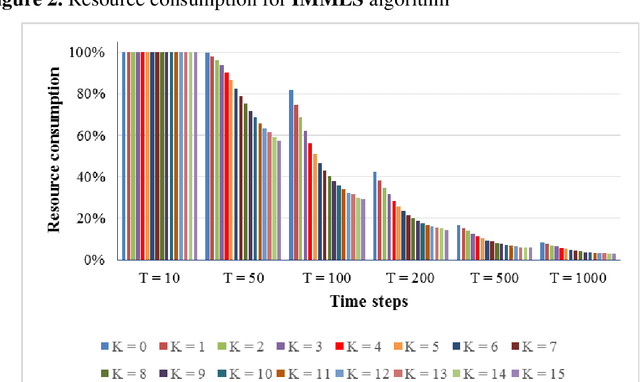

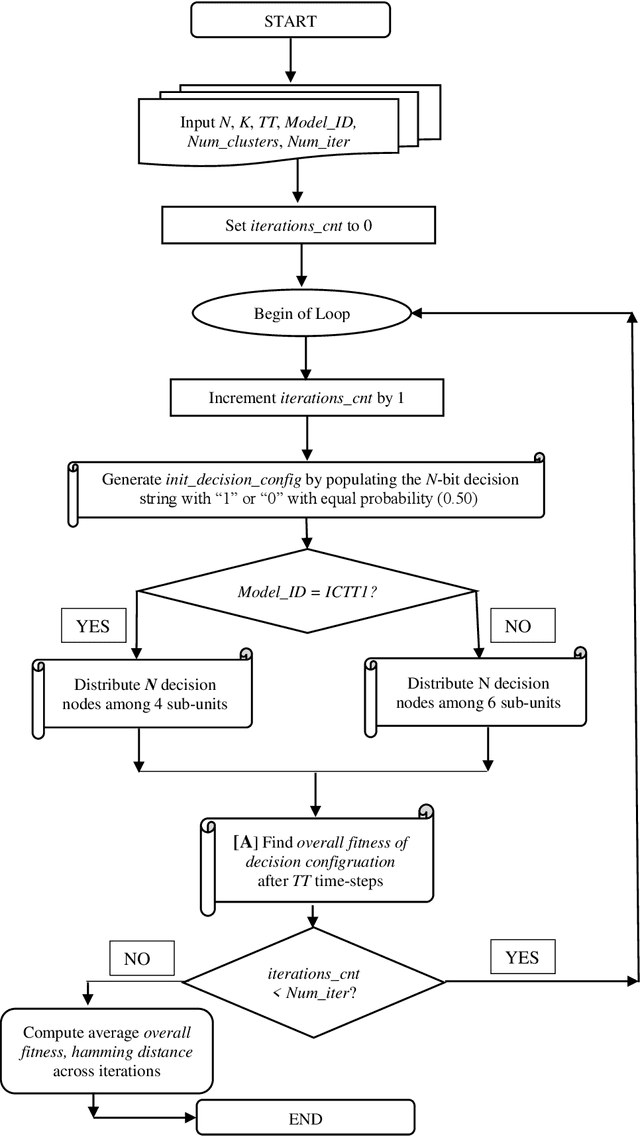

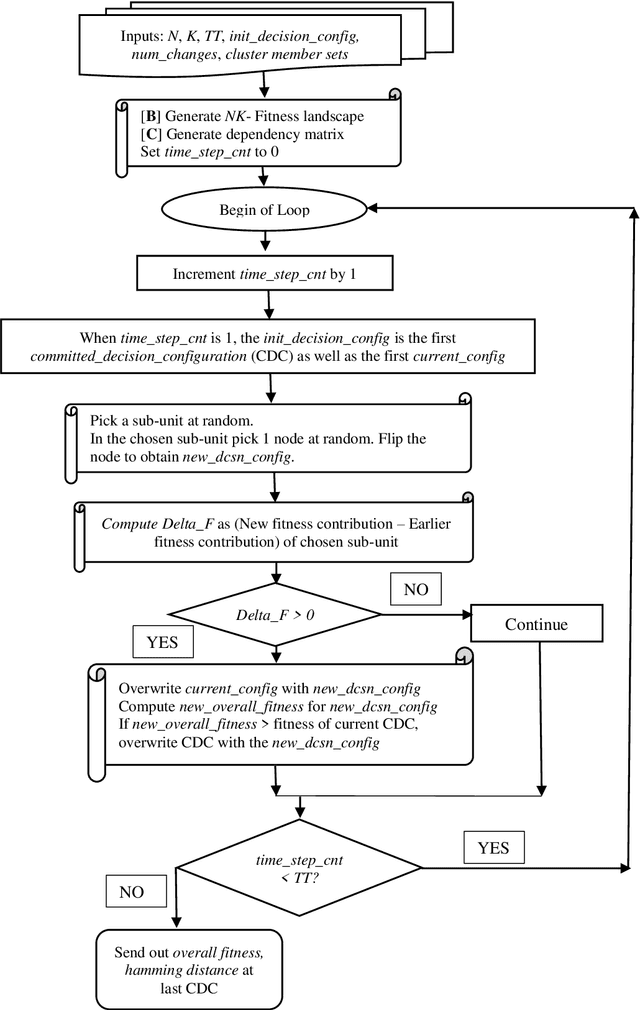

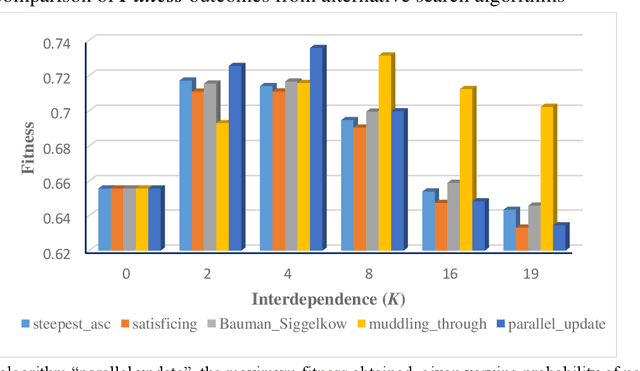

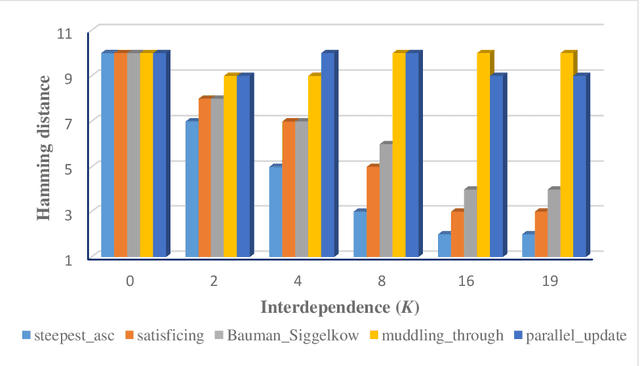

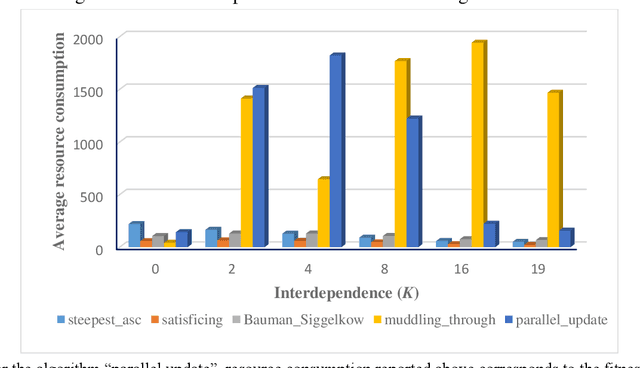

Abstract: In Kauffman's NK model, myopic local search involves flipping one randomly chosen bit of an N-bit decision string in every time step and accepting the new configuration if it has higher fitness. One issue is that this algorithm consumes the full extent of the computational resources allocated - given by the number of alternative configurations inspected - even though search is expected to terminate the moment no neighbor has higher fitness. The obvious remedy, computing the fitness of all N neighbors in every time step to detect that condition, itself consumes a high amount of resources. To get around this problem, I describe an algorithm that allows search to terminate logically at a relatively early stage, without having to evaluate the fitness of all N neighbors at every time step. I further suggest that when the efficacy of two algorithms needs to be compared head to head, imposing a common limit on the number of alternatives evaluated - metering - provides the necessary level playing field.
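The abstract does not spell out the termination rule, so the following Python sketch is only an illustration under an assumed rule, not the paper's algorithm: the search remembers which bit flips have already been rejected at the current configuration and stops once all N have failed, or once a metered budget of fitness evaluations is spent. The names `make_nk_landscape`, `myopic_search`, and `budget`, and the cyclic-neighbor interaction pattern, are hypothetical choices made for this example.

```python
import random


def make_nk_landscape(n, k, rng):
    """Return a fitness function for a random NK landscape.

    Assumption: bit i interacts with itself and its k cyclic right-hand
    neighbors. Contributions are drawn lazily from U(0, 1) and cached,
    so the fitness of a given configuration never changes once computed.
    """
    tables = [dict() for _ in range(n)]

    def fitness(bits):
        total = 0.0
        for i in range(n):
            key = tuple(bits[(i + j) % n] for j in range(k + 1))
            if key not in tables[i]:
                tables[i][key] = rng.random()
            total += tables[i][key]
        return total / n

    return fitness


def myopic_search(fitness, start, budget, rng):
    """One-bit-flip hill climbing with early termination and metering.

    Returns (final_bits, final_fitness, evaluations_used).
    """
    n = len(start)
    bits = list(start)  # copy, so the caller's list is left untouched
    current = fitness(bits)
    evaluations = 1
    # Bits whose flip has not yet been rejected at the current configuration.
    untried = set(range(n))
    while untried and evaluations < budget:
        i = rng.choice(sorted(untried))
        bits[i] ^= 1
        candidate = fitness(bits)
        evaluations += 1
        if candidate > current:
            current = candidate
            # New configuration: every neighbor is untested again, except
            # the one reached by undoing this flip, which is known to be worse.
            untried = set(range(n)) - {i}
        else:
            bits[i] ^= 1  # revert the rejected flip
            untried.discard(i)
    return bits, current, evaluations


if __name__ == "__main__":
    f = make_nk_landscape(n=8, k=2, rng=random.Random(0))
    final_bits, final_fitness, used = myopic_search(
        f, [0] * 8, budget=10_000, rng=random.Random(1)
    )
    print(final_bits, round(final_fitness, 4), used)
```

When the loop exits with `untried` empty, the configuration is a local optimum and the unused part of the budget is never spent. Passing the same `budget` to two different search routines is one way to realize the metering idea described in the abstract.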

Overcoming Complexity Catastrophe: An Algorithm for Beneficial Far-Reaching Adaptation under High Complexity

May 10, 2021

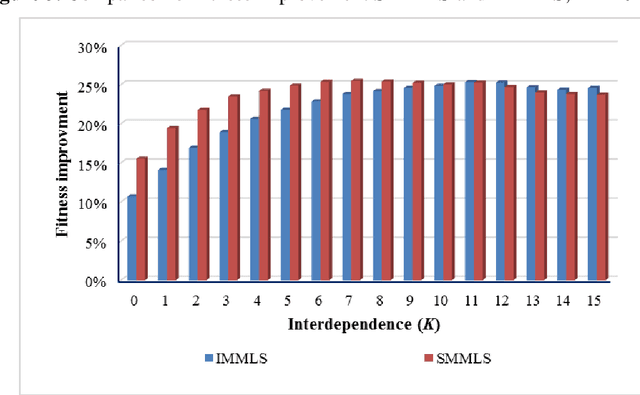

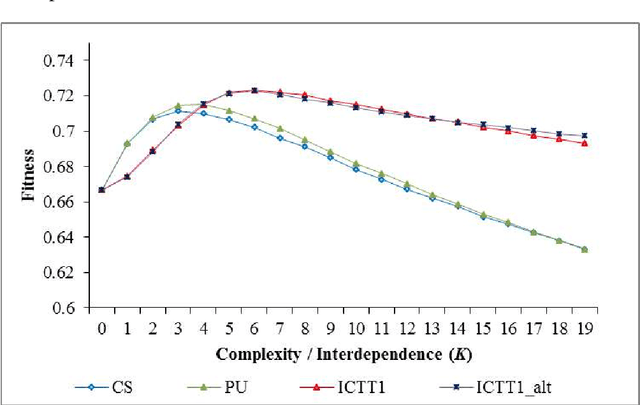

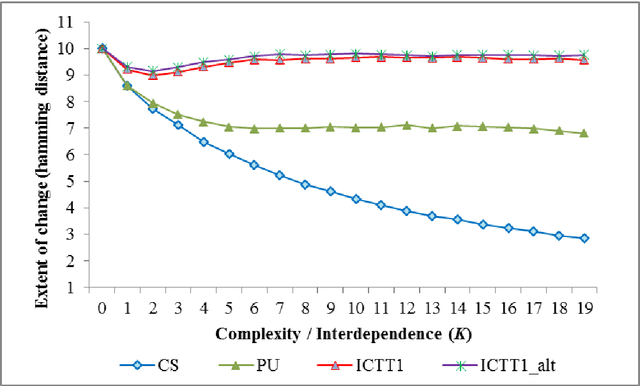

Abstract: In his seminal work with NK algorithms, Kauffman noted that fitness outcomes from algorithms navigating an NK landscape show a sharp decline at high complexity arising from pervasive interdependence among problem dimensions. This phenomenon - where complexity effects dominate (Darwinian) adaptation efforts - is called complexity catastrophe. We present an algorithm - incremental change taking turns (ICTT) - that finds distant configurations having fitness superior to that reported in extant research, under high complexity. Thus, complexity catastrophe is not inevitable: a series of incremental changes can lead to excellent outcomes.

AI Failures: A Review of Underlying Issues

Jul 18, 2020

Abstract: Instances of Artificial Intelligence (AI) systems failing to deliver consistent, satisfactory performance are legion. We investigate why AI failures occur. We address only a narrow subset of the broader field of AI Safety. We focus on AI failures on account of flaws in conceptualization, design and deployment. Other AI Safety issues, like trade-offs between privacy and security or convenience, bad actors hacking into AI systems to create mayhem, or bad actors deploying AI for purposes harmful to humanity, are out of scope of our discussion. We find that AI systems fail on account of omission and commission errors in the design of the AI system, as well as failure to develop an appropriate interpretation of input information. Moreover, even when there is no significant flaw in the AI software, an AI system may fail because the hardware is incapable of robust performance across environments. Finally, an AI system is quite likely to fail in situations where, in effect, it is called upon to deliver moral judgments - a capability AI does not possess. We observe certain trade-offs in measures to mitigate a subset of AI failures and provide some recommendations.

An Algorithm to find Superior Fitness on NK Landscapes under High Complexity: Muddling Through

Jun 06, 2020

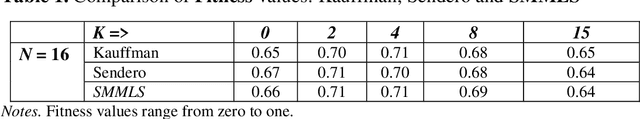

Abstract: Under high complexity - given by pervasive interdependence between constituent elements of a decision in an NK landscape - our algorithm obtains fitness superior to that reported in extant research. We distribute the decision elements comprising a decision into clusters. When a change in value of a decision element is considered, a forward move is explored if the aggregate fitness of the cluster members residing alongside the decision element is higher. The decision configuration with the highest fitness accomplished in the path is selected. Our algorithm obtains superior outcomes by enabling more extensive search, and allowing inspection of more distant decision configurations. We name this algorithm the muddling through algorithm, in memory of Charles Lindblom, who spotted the efficacy of the process long before sophisticated computer simulations came into being.
