Samy Sá

On the Equivalence between Logic Programming and SETAF

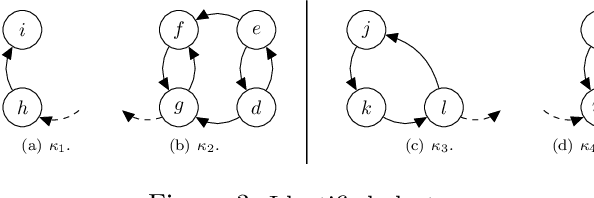

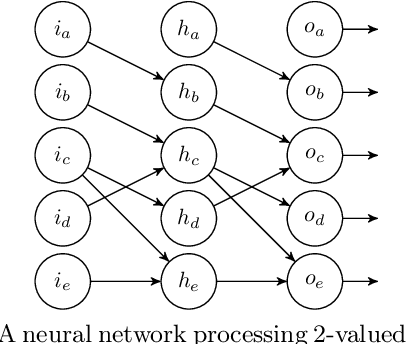

Jul 08, 2024

Abstract: A framework with sets of attacking arguments (SETAF) is an extension of Dung's well-known Abstract Argumentation Frameworks (AAFs) that allows joint attacks on arguments. In this paper, we provide a translation from Normal Logic Programs (NLPs) to SETAFs and, conversely, from SETAFs to NLPs. We show that there is pairwise equivalence between their semantics, including the equivalence between L-stable and semi-stable semantics. Furthermore, for a class of NLPs called Redundancy-Free Atomic Logic Programs (RFALPs), there is also a structural equivalence, as these back-and-forth translations are each other's inverse. Then, we show that RFALPs are as expressive as NLPs by transforming any NLP into an equivalent RFALP through a series of program transformations already known in the literature. We also show that these program transformations are confluent, meaning that every NLP will be transformed into a unique RFALP. The results presented in this paper enhance our understanding that NLPs and SETAFs are essentially the same formalism. Under consideration in Theory and Practice of Logic Programming (TPLP).

Online Handbook of Argumentation for AI: Volume 1

Jun 22, 2020

Abstract: This volume contains revised versions of the papers selected for the first volume of the Online Handbook of Argumentation for AI (OHAAI). Previously, formal theories of argument and argument interaction have been proposed and studied, and this has led to the more recent study of computational models of argument. Argumentation, as a field within artificial intelligence (AI), is highly relevant for researchers interested in symbolic representations of knowledge and defeasible reasoning. The purpose of this handbook is to provide an open access and curated anthology for the argumentation research community. OHAAI is designed to serve as a research hub to keep track of the latest and upcoming PhD-driven research on the theory and application of argumentation in all areas related to AI.

On the Equivalence Between Abstract Dialectical Frameworks and Logic Programs

Jul 22, 2019

Abstract: Abstract Dialectical Frameworks (ADFs) are argumentation frameworks where each node is associated with an acceptance condition. This allows us to model different types of dependencies, such as supports and attacks. Previous studies provided a translation from Normal Logic Programs (NLPs) to ADFs and proved that the stable models semantics for a normal logic program is equivalent to that of the corresponding ADF. However, these studies failed to identify a semantics for ADFs equivalent to a three-valued semantics (such as partial stable models and well-founded models) for NLPs. In this work, we focus on a fragment of ADFs, called Attacking Dialectical Frameworks (ADF$^+$s), and provide a translation from NLPs to ADF$^+$s robust enough to guarantee the equivalence between partial stable models, well-founded models, regular models, and stable models semantics for NLPs and, respectively, complete models, grounded models, preferred models, and stable models for ADFs. In addition, we define a new semantics for ADF$^+$s, called L-stable, and show it is equivalent to the L-stable semantics for NLPs. This paper is under consideration for acceptance in TPLP.
