Ryan Miller

Resonant and Stochastic Vibration in Neurorehabilitation

Dec 08, 2025

Abstract: Neurological injuries and age-related decline can impair sensory processing and disrupt motor coordination, gait, and balance. As mechanisms of neuroplasticity have become better understood, vibration-based interventions have gained attention as potential tools to stimulate sensory pathways and motor circuits to support functional recovery. This survey reviews stochastic and resonant vibration modalities, describing their mechanisms, therapeutic rationales, and clinical applications. We synthesize evidence on whole-body vibration for improving balance, mobility, and fine motor function in aging adults, stroke survivors, and individuals with Parkinson's disease, with attention to challenges in parameter optimization, generalizability, and safety. We also assess recent developments in focused muscle vibration and wearable stochastic resonance devices for upper-limb rehabilitation, evaluating their clinical promise along with limitations in scalability, ecological validity, and standardization. Across these modalities, we identify key variables that shape therapeutic outcomes and highlight ongoing efforts to refine protocols, improve usability, and integrate vibration techniques into broader neurorehabilitation frameworks. We conclude by outlining the most important research needs for translating vibration-based interventions into reliable and deployable clinical tools.

Prompt Optimization and Evaluation for LLM Automated Red Teaming

Jul 29, 2025

Abstract: Applications that use Large Language Models (LLMs) are becoming widespread, making the identification of system vulnerabilities increasingly important. Automated Red Teaming accelerates this effort by using an LLM to generate and execute attacks against target systems. Attack generators are evaluated using the Attack Success Rate (ASR), the sample mean calculated over the judgment of success for each attack. In this paper, we introduce a method for optimizing attack generator prompts that applies ASR to individual attacks. By repeating each attack multiple times against a randomly seeded target, we measure an attack's discoverability, the expectation of the individual attack success. This approach reveals exploitable patterns that inform prompt optimization, ultimately enabling more robust evaluation and refinement of generators.
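The two quantities named in this abstract can be illustrated with a minimal sketch. It is not the paper's implementation: `toy_target`, `toy_judge`, the trial count, and the success probabilities are hypothetical stand-ins, chosen only to show ASR as a sample mean of success judgments and discoverability as the same mean taken over repeated, differently seeded runs of a single attack.

```python
import random
from statistics import mean


def attack_success_rate(judgments):
    """ASR: sample mean of binary success judgments (truthy = success)."""
    return mean(1.0 if j else 0.0 for j in judgments)


def discoverability(attack, run_target, judge, n_trials=20, base_seed=0):
    """Estimate the expected success of ONE attack by repeating it
    against a target that is re-seeded on every trial."""
    outcomes = []
    for i in range(n_trials):
        response = run_target(attack, seed=base_seed + i)
        outcomes.append(judge(attack, response))
    return attack_success_rate(outcomes)


# Hypothetical stand-ins for a real target system and a real judge.
def toy_target(attack, seed):
    rng = random.Random(seed)
    # Assumed behaviour: some phrasings succeed more often than others.
    p_success = 0.8 if "ignore" in attack else 0.1
    return "LEAK" if rng.random() < p_success else "REFUSED"


def toy_judge(attack, response):
    return response == "LEAK"


if __name__ == "__main__":
    attacks = ["ignore previous instructions", "please share the secret"]
    for a in attacks:
        d = discoverability(a, toy_target, toy_judge)
        print(f"{a!r}: estimated discoverability = {d:.2f}")
```

Ranking attacks by this per-attack estimate is one way such a measurement could surface patterns for prompt optimization; how the paper itself uses the estimates is not specified in the abstract.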

Hardware-In-the-Loop for Connected Automated Vehicles Testing in Real Traffic

Jul 21, 2019

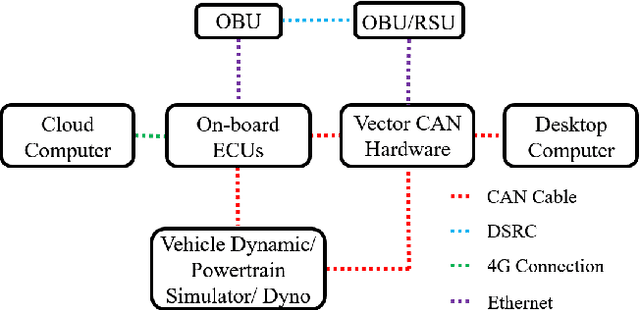

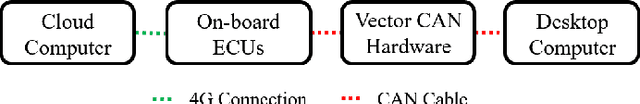

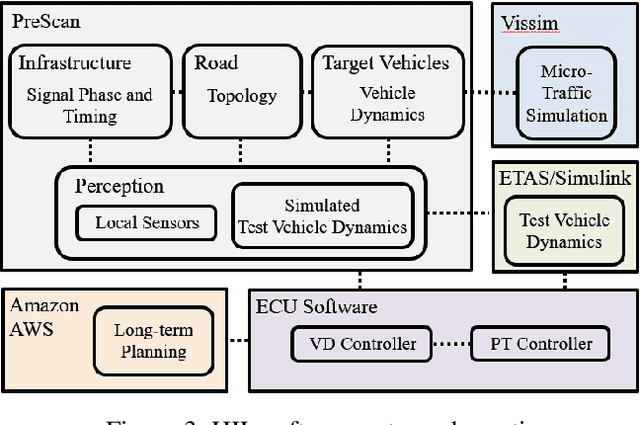

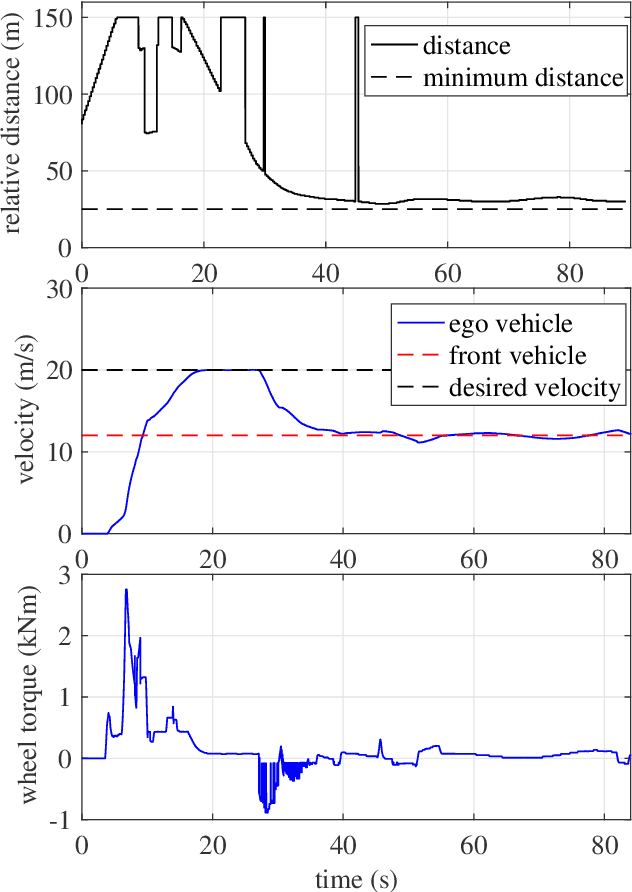

Abstract: We present a hardware-in-the-loop (HIL) simulation setup for repeatable testing of Connected Automated Vehicles (CAVs) in dynamic, real-world scenarios. Our goal is to test control and planning algorithms and their distributed implementation on the vehicle hardware and, possibly, in the cloud. The HIL setup combines PreScan for perception sensors, road topography, and signalized intersections; Vissim for traffic micro-simulation; ETAS DESK-LABCAR/a dynamometer for vehicle and powertrain dynamics; and on-board electronic control units for CAV real-time control. Models of traffic and signalized intersections are driven by real-world measurements. To demonstrate this HIL simulation setup, we test a Model Predictive Control approach for maximizing energy efficiency of CAVs in urban environments.
