Ruanui Nicholson

Shape Derivative-Informed Neural Operators with Application to Risk-Averse Shape Optimization

Mar 03, 2026

Abstract:Shape optimization under uncertainty (OUU) is computationally intensive for classical PDE-based methods due to the high cost of repeated sampling-based risk evaluation across many uncertainty realizations and varying geometries, while standard neural surrogates often fail to provide accurate and efficient sensitivities for optimization. We introduce Shape-DINO, a derivative-informed neural operator framework for learning PDE solution operators on families of varying geometries, with a particular focus on accelerating PDE-constrained shape OUU. Shape-DINOs encode geometric variability through diffeomorphic mappings to a fixed reference domain and employ a derivative-informed operator learning objective that jointly learns the PDE solution and its Fréchet derivatives with respect to design variables and uncertain parameters, enabling accurate state predictions and reliable gradients for large-scale OUU. We establish a priori error bounds linking surrogate accuracy to optimization error and prove universal approximation results for multi-input reduced basis neural operators in suitable $C^1$ norms. We demonstrate efficiency and scalability on three representative shape OUU problems, including boundary design for a Poisson equation and shape design governed by steady-state Navier-Stokes exterior flows in two and three dimensions. Across these examples, Shape-DINOs produce more reliable optimization results than operator surrogates trained without derivative information. In our examples, Shape-DINOs achieve 3-8 orders-of-magnitude speedups in state and gradient evaluations. Counting training data generation, Shape-DINOs reduce necessary PDE solves by 1-2 orders-of-magnitude compared to a strictly PDE-based approach for a single OUU problem. Moreover, Shape-DINO construction costs can be amortized across many objectives and risk measures, enabling large-scale shape OUU for complex systems.

What can be estimated? Identifiability, estimability, causal inference and ill-posed inverse problems

Apr 11, 2019

Abstract:Here we consider, in the context of causal inference, the basic question: 'what can be estimated from data?'. We call this the question of estimability. We consider the usual definition adopted in the causal inference literature -- identifiability -- in a general mathematical setting and show why it is an inadequate formal translation of the concept of estimability. Despite showing that identifiability implies the existence of a Fisher-consistent estimator, we show that this estimator may be discontinuous, and hence unstable, in general. The difficulty arises because the causal inference problem is in general an ill-posed inverse problem. Inverse problems have three conditions which must be satisfied in order to be considered well-posed: existence, uniqueness, and stability of solutions. We illustrate how identifiability corresponds to the question of uniqueness; in contrast, we take estimability to mean satisfaction of all three conditions, i.e. well-posedness. It follows that mere identifiability does not guarantee well-posedness of a causal inference procedure, i.e. estimability, and apparent solutions to causal inference problems can be essentially useless with even the smallest amount of imperfection. These concerns apply, in particular, to causal inference approaches that focus on identifiability while ignoring the additional stability requirements needed for estimability.

An Additive Approximation to Multiplicative Noise

May 07, 2018

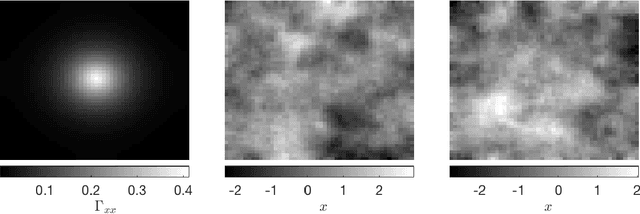

Abstract:Multiplicative noise models are often used instead of additive noise models in cases in which the noise variance depends on the state. Furthermore, when Poisson distributions with relatively small counts are approximated with normal distributions, multiplicative noise approximations are straightforward to implement. There are a number of limitations in existing approaches to marginalize over multiplicative errors, such as positivity of the multiplicative noise term. The focus of this paper is on large-dimensional (inverse) problems for which sampling-type approaches are too computationally expensive. In this paper, we propose an alternative approach to carry out approximate marginalization over the multiplicative error by embedding the statistics in an additive error term. The approach is essentially a Bayesian one in that the statistics of the additive error are induced by the statistics of the other unknowns. As an example, we consider a deconvolution problem on random fields with different statistics of the multiplicative noise. Furthermore, the approach allows for correlated multiplicative noise. We show that the proposed approach provides feasible error estimates in the sense that the posterior models support the actual image.
