Rinat Khaziev

ATOD: An Evaluation Framework and Benchmark for Agentic Task-Oriented Dialogue System

Jan 17, 2026

Abstract: Recent advances in task-oriented dialogue (TOD) systems, driven by large language models (LLMs) with extensive API and tool integration, have enabled conversational agents to coordinate interleaved goals, maintain long-horizon context, and act proactively through asynchronous execution. These capabilities extend beyond traditional TOD systems, yet existing benchmarks lack systematic support for evaluating such agentic behaviors. To address this gap, we introduce ATOD, a benchmark and synthetic dialogue generation pipeline that produces richly annotated conversations requiring long-term reasoning. ATOD captures key characteristics of advanced TOD, including multi-goal coordination, dependency management, memory, adaptability, and proactivity. Building on ATOD, we propose ATOD-Eval, a holistic evaluation framework that translates these dimensions into fine-grained metrics and supports reproducible offline and online evaluation. We further present a strong agentic memory-based evaluator for benchmarking on ATOD. Experiments show that ATOD-Eval enables comprehensive assessment across task completion, agentic capability, and response quality, and that the proposed evaluator offers a better accuracy-efficiency tradeoff compared to existing memory- and LLM-based approaches under this evaluation setting.
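ATOD lists dependency management among the agentic behaviors it evaluates. As a purely hypothetical illustration of how one such dimension could become a fine-grained score (the `Goal` record, `dependency_scores`, and both scores are our own assumptions, not ATOD-Eval's published schema or metrics), a single dialogue can be scored by the fraction of annotated goals the agent completed and the fraction of completed goals whose prerequisites were completed earlier:

```python
from dataclasses import dataclass, field


@dataclass
class Goal:
    # Hypothetical goal annotation; not ATOD's actual data format.
    name: str
    depends_on: list[str] = field(default_factory=list)


def dependency_scores(goals, completed_order):
    """Return (completion_rate, dependency_consistency) for one dialogue.

    completion_rate: fraction of annotated goals that the agent completed.
    dependency_consistency: fraction of completed goals whose prerequisites
    were all completed strictly earlier in the dialogue.
    """
    by_name = {g.name: g for g in goals}
    # Record the turn index of each goal's first completion.
    position = {}
    for i, name in enumerate(completed_order):
        position.setdefault(name, i)
    # Ignore completions that do not match any annotated goal.
    completed = [n for n in position if n in by_name]
    completion_rate = len(completed) / len(goals) if goals else 1.0

    consistent = 0
    for n in completed:
        deps = by_name[n].depends_on
        if all(d in position and position[d] < position[n] for d in deps):
            consistent += 1
    dependency_consistency = consistent / len(completed) if completed else 1.0
    return completion_rate, dependency_consistency


goals = [
    Goal("search_flight"),
    Goal("book_flight", ["search_flight"]),
    Goal("book_hotel", ["book_flight"]),
]
# Booking the hotel before the flight violates one dependency.
print(dependency_scores(goals, ["search_flight", "book_hotel", "book_flight"]))
```

Keeping completion and dependency consistency as separate numbers lets an agent that finishes every goal in the wrong order be distinguished from one that stops early but respects every prerequisite.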

Constrained Entropic Unlearning: A Primal-Dual Framework for Large Language Models

Jun 05, 2025

Abstract: Large Language Models (LLMs) deployed in real-world settings increasingly face the need to unlearn sensitive, outdated, or proprietary information. Existing unlearning methods typically formulate forgetting and retention as a regularized trade-off, combining both objectives into a single scalarized loss. This often leads to unstable optimization and degraded performance on retained data, especially under aggressive forgetting. We propose a new formulation of LLM unlearning as a constrained optimization problem: forgetting is enforced via a novel logit-margin flattening loss that explicitly drives the output distribution toward uniformity on a designated forget set, while retention is preserved through a hard constraint on a separate retain set. Compared to entropy-based objectives, our loss is softmax-free, numerically stable, and maintains non-vanishing gradients, enabling more efficient and robust optimization. We solve the constrained problem using a scalable primal-dual algorithm that exposes the trade-off between forgetting and retention through the dynamics of the dual variable. Evaluations on the TOFU and MUSE benchmarks across diverse LLM architectures demonstrate that our approach consistently matches or exceeds state-of-the-art baselines, effectively removing targeted information while preserving downstream utility.
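The constrained formulation and its primal-dual solution can be illustrated on a toy problem. This is a minimal sketch under our own assumptions, not the paper's algorithm or loss: the "model" is a single 3-class logit vector `z` shared by the forget and retain examples; the hypothetical `flatten_loss` (mean squared deviation of the logits from their mean, zero exactly when the softmax output is uniform) is a softmax-free stand-in for the paper's logit-margin flattening loss; and the hypothetical `retain_loss` is a squared hinge asking class 0 to beat the other classes by a margin. The loop performs gradient descent on `z` and projected gradient ascent on the dual variable lambda >= 0 for the Lagrangian L(z, lambda) = f(z) + lambda * (g(z) - eps).

```python
# Toy primal-dual unlearning sketch. All losses here are illustrative
# assumptions, not the losses used in the paper.


def flatten_loss(z):
    """Hypothetical softmax-free flattening surrogate: mean squared deviation
    of the logits from their mean (zero iff all logits are equal)."""
    m = sum(z) / len(z)
    return sum((zi - m) ** 2 for zi in z) / len(z)


def flatten_grad(z):
    # d/dz_j of mean((z - mean(z))^2) = 2 * (z_j - mean(z)) / K
    m = sum(z) / len(z)
    return [2.0 * (zi - m) / len(z) for zi in z]


def retain_loss(z, margin=2.0):
    """Hypothetical retain loss: squared hinge requiring class 0 to exceed
    every other logit by `margin`."""
    return sum(max(0.0, margin - (z[0] - zj)) ** 2 for zj in z[1:])


def retain_grad(z, margin=2.0):
    g = [0.0] * len(z)
    for j in range(1, len(z)):
        s = max(0.0, margin - (z[0] - z[j]))
        g[0] -= 2.0 * s
        g[j] += 2.0 * s
    return g


def primal_dual_unlearn(z, eps=0.1, lr=0.05, lr_dual=0.2, steps=3000):
    """Minimize flatten_loss(z) subject to retain_loss(z) <= eps."""
    z = list(z)
    lam = 0.0
    for _ in range(steps):
        gf = flatten_grad(z)
        gr = retain_grad(z)
        # Primal step: descend the Lagrangian in z.
        z = [zi - lr * (a + lam * b) for zi, a, b in zip(z, gf, gr)]
        # Dual step: lambda grows while the retain constraint is violated
        # and is projected back onto lambda >= 0.
        lam = max(0.0, lam + lr_dual * (retain_loss(z) - eps))
    return z, lam


z, lam = primal_dual_unlearn([3.0, 0.0, 0.0])
print(z, lam, flatten_loss(z), retain_loss(z))
```

In this toy, the loop settles where the retain constraint is active (the retain loss sits at `eps`) and lambda stops changing; the size of lambda at that point measures how strongly retention resists further flattening, which is the kind of forgetting-versus-retention signal the abstract attributes to the dual variable.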

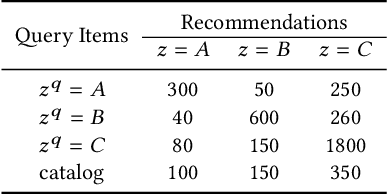

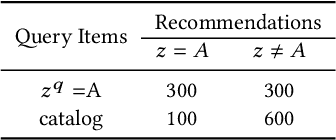

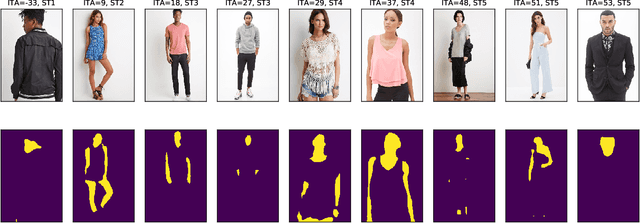

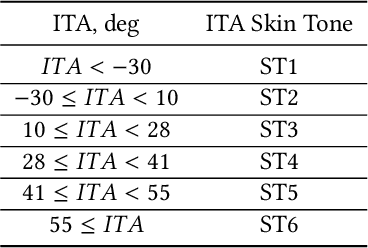

Recommendation or Discrimination?: Quantifying Distribution Parity in Information Retrieval Systems

Sep 13, 2019

Abstract: Information retrieval (IR) systems often leverage query data to suggest relevant items to users. This introduces the possibility of unfairness if the query (i.e., input) and the resulting recommendations unintentionally correlate with latent factors that are protected variables (e.g., race, gender, and age). For instance, a visual search system for fashion recommendations may pick up on features of the human models rather than fashion garments when generating recommendations. In this work, we introduce a statistical test for "distribution parity" in the top-K IR results, which assesses whether a given set of recommendations is fair with respect to a specific protected variable. We evaluate our test using both simulated and empirical results. First, using artificially biased recommendations, we demonstrate the trade-off between statistically detectable bias and the size of the search catalog. Second, we apply our test to a visual search system for fashion garments, specifically testing for recommendation bias based on the skin tone of fashion models. Our distribution parity test can help ensure that IR systems' results are fair and produce a good experience for all users.
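The abstract does not specify the test statistic, so the following is only one plausible construction, not the paper's test: a Monte Carlo goodness-of-fit test of the null hypothesis that the top-K results look like a uniform random sample, drawn without replacement, from the catalog with respect to the protected attribute. The statistic (total variation distance between the top-K and catalog attribute distributions) and the names `tvd` and `parity_test` are our assumptions.

```python
import random
from collections import Counter


def tvd(labels, reference_dist):
    """Total variation distance between the empirical distribution of
    `labels` and a reference distribution {group: probability}."""
    counts = Counter(labels)
    n = len(labels)
    groups = set(reference_dist) | set(counts)
    return 0.5 * sum(abs(counts[g] / n - reference_dist.get(g, 0.0)) for g in groups)


def parity_test(top_k_labels, catalog_labels, n_draws=2000, seed=0):
    """Monte Carlo parity test (assumed construction).

    H0: the top-K protected-attribute labels are distributed like a uniform
    random sample of size K drawn without replacement from the catalog.
    Returns (observed_tvd, p_value).
    """
    n_catalog = len(catalog_labels)
    reference = {g: c / n_catalog for g, c in Counter(catalog_labels).items()}
    k = len(top_k_labels)
    observed = tvd(top_k_labels, reference)

    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_draws):
        null_sample = rng.sample(catalog_labels, k)
        # Small tolerance guards against floating-point ties.
        if tvd(null_sample, reference) >= observed - 1e-12:
            extreme += 1
    # Add-one correction keeps the Monte Carlo p-value strictly positive.
    p_value = (extreme + 1) / (n_draws + 1)
    return observed, p_value


catalog = ["light"] * 50 + ["dark"] * 50
print(parity_test(["light"] * 10, catalog))             # skewed top-10
print(parity_test(["light"] * 5 + ["dark"] * 5, catalog))  # balanced top-10
```

Drawing the null samples without replacement makes the null distribution depend on the catalog size through the finite-population effect, and a small K limits how large an imbalance must be before it becomes statistically detectable.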
