Ratko Grbić

NM-Hebb: Coupling Local Hebbian Plasticity with Metric Learning for More Accurate and Interpretable CNNs

Aug 27, 2025

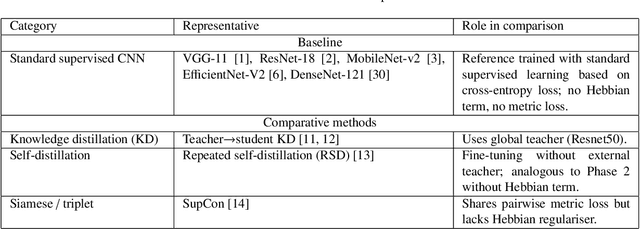

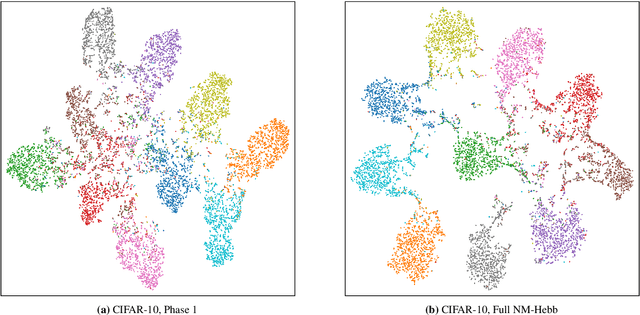

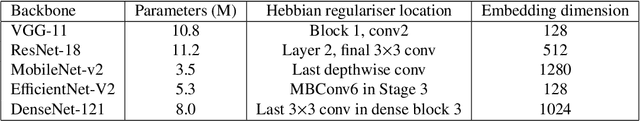

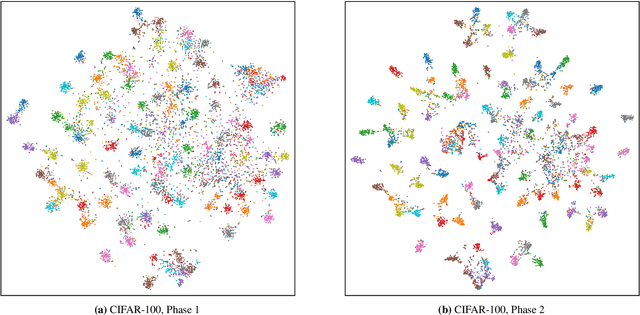

Abstract:Deep Convolutional Neural Networks (CNNs) achieve high accuracy but often rely on purely global, gradient-based optimisation, which can lead to overfitting, redundant filters, and reduced interpretability. To address these limitations, we propose NM-Hebb, a two-phase training framework that integrates neuro-inspired local plasticity with distance-aware supervision. Phase 1 extends standard supervised training by jointly optimising a cross-entropy objective with two biologically inspired mechanisms: (i) a Hebbian regulariser that aligns the spatial mean of activations with the mean of the corresponding convolutional filter weights, encouraging structured, reusable primitives; and (ii) a learnable neuromodulator that gates an elastic-weight-style consolidation loss, preserving beneficial parameters without freezing the network. Phase 2 fine-tunes the backbone with a pairwise metric-learning loss, explicitly compressing intra-class distances and enlarging inter-class margins in the embedding space. Evaluated on CIFAR-10, CIFAR-100, and TinyImageNet across five backbones (ResNet-18, VGG-11, MobileNet-v2, EfficientNet-V2, DenseNet-121), NM-Hebb achieves consistent gains over baseline and other methods: Top-1 accuracy improves by +2.0-10.0 pp (CIFAR-10), +2.0-9.0 pp (CIFAR-100), and up to +4.3-8.9 pp (TinyImageNet), with Normalised Mutual Information (NMI) increased by up to +0.15. Qualitative visualisations and filter-level analyses further confirm that NM-Hebb produces more structured and selective features, yielding tighter and more interpretable class clusters. Overall, coupling local Hebbian plasticity with metric-based fine-tuning yields CNNs that are not only more accurate but also more interpretable, offering practical benefits for resource-constrained and safety-critical AI deployments.

Automatic Vision-Based Parking Slot Detection and Occupancy Classification

Aug 16, 2023Abstract:Parking guidance information (PGI) systems are used to provide information to drivers about the nearest parking lots and the number of vacant parking slots. Recently, vision-based solutions started to appear as a cost-effective alternative to standard PGI systems based on hardware sensors mounted on each parking slot. Vision-based systems provide information about parking occupancy based on images taken by a camera that is recording a parking lot. However, such systems are challenging to develop due to various possible viewpoints, weather conditions, and object occlusions. Most notably, they require manual labeling of parking slot locations in the input image which is sensitive to camera angle change, replacement, or maintenance. In this paper, the algorithm that performs Automatic Parking Slot Detection and Occupancy Classification (APSD-OC) solely on input images is proposed. Automatic parking slot detection is based on vehicle detections in a series of parking lot images upon which clustering is applied in bird's eye view to detect parking slots. Once the parking slots positions are determined in the input image, each detected parking slot is classified as occupied or vacant using a specifically trained ResNet34 deep classifier. The proposed approach is extensively evaluated on well-known publicly available datasets (PKLot and CNRPark+EXT), showing high efficiency in parking slot detection and robustness to the presence of illegal parking or passing vehicles. Trained classifier achieves high accuracy in parking slot occupancy classification.

* 39 pages, 8 figures, 9 tables

One-shot lip-based biometric authentication: extending behavioral features with authentication phrase information

Aug 14, 2023Abstract:Lip-based biometric authentication (LBBA) is an authentication method based on a person's lip movements during speech in the form of video data captured by a camera sensor. LBBA can utilize both physical and behavioral characteristics of lip movements without requiring any additional sensory equipment apart from an RGB camera. State-of-the-art (SOTA) approaches use one-shot learning to train deep siamese neural networks which produce an embedding vector out of these features. Embeddings are further used to compute the similarity between an enrolled user and a user being authenticated. A flaw of these approaches is that they model behavioral features as style-of-speech without relation to what is being said. This makes the system vulnerable to video replay attacks of the client speaking any phrase. To solve this problem we propose a one-shot approach which models behavioral features to discriminate against what is being said in addition to style-of-speech. We achieve this by customizing the GRID dataset to obtain required triplets and training a siamese neural network based on 3D convolutions and recurrent neural network layers. A custom triplet loss for batch-wise hard-negative mining is proposed. Obtained results using an open-set protocol are 3.2% FAR and 3.8% FRR on the test set of the customized GRID dataset. Additional analysis of the results was done to quantify the influence and discriminatory power of behavioral and physical features for LBBA.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge