Rajagopal Venkatesaramani

Therefore I am. I Think

Apr 02, 2026

Abstract: We consider the question: when a large language reasoning model makes a choice, did it think first and then decide to, or decide first and then think? In this paper, we present evidence that detectable, early-encoded decisions shape chain-of-thought in reasoning models. Specifically, we show that a simple linear probe successfully decodes tool-calling decisions from pre-generation activations with very high confidence, and in some cases, even before a single reasoning token is produced. Activation steering supports this causally: perturbing the decision direction leads to inflated deliberation, and flips behavior in many examples (between 7% and 79%, depending on model and benchmark). We also show through behavioral analysis that, when steering changes the decision, the chain-of-thought process often rationalizes the flip rather than resisting it. Together, these results suggest that reasoning models can encode action choices before they begin to deliberate in text.
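To make the two ingredients named in the abstract concrete, here is a minimal sketch of a linear probe and of steering along its direction. It is not the authors' implementation: the abstract does not say how the probe is trained, so this sketch swaps in a difference-of-means probe, and it runs on synthetic vectors rather than real model activations. Every name (`fit_probe`, `steer`, `make_synthetic`) and every constant is a hypothetical chosen for illustration.

```python
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_vector(rows):
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def fit_probe(acts, labels):
    """Difference-of-means linear probe (an assumed stand-in for the paper's probe)."""
    pos = mean_vector([a for a, y in zip(acts, labels) if y == 1])
    neg = mean_vector([a for a, y in zip(acts, labels) if y == 0])
    direction = [p - q for p, q in zip(pos, neg)]
    midpoint = [(p + q) / 2 for p, q in zip(pos, neg)]
    bias = -dot(direction, midpoint)  # decision boundary passes through the midpoint
    return direction, bias

def score(direction, bias, act):
    return dot(direction, act) + bias

def predict(direction, bias, act):
    # 1 = "call the tool", 0 = "do not call" (labels are illustrative)
    return 1 if score(direction, bias, act) > 0 else 0

def steer(act, direction, alpha):
    """Shift an activation along the probe direction by alpha."""
    return [a + alpha * d for a, d in zip(act, direction)]

def make_synthetic(n, dim, seed=0):
    """Synthetic 'activations': the decision signal lives in coordinate 0 (assumption)."""
    rng = random.Random(seed)
    acts, labels = [], []
    for i in range(n):
        y = i % 2
        act = [rng.gauss(0, 1) for _ in range(dim)]
        act[0] += 1.5 if y == 1 else -1.5
        acts.append(act)
        labels.append(y)
    return acts, labels

acts, labels = make_synthetic(400, 8)
direction, bias = fit_probe(acts, labels)
accuracy = sum(predict(direction, bias, a) == y for a, y in zip(acts, labels)) / len(acts)
print(f"probe accuracy on synthetic data: {accuracy:.2f}")
```

In this toy setting, a sufficiently negative `alpha` passed to `steer` moves a positively classified vector across the probe's decision boundary. That mirrors the idea of a steering-induced flip only at the level of the probe's readout; it does not model the downstream generation behavior the abstract reports.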

Re-identification of Individuals in Genomic Datasets Using Public Face Images

Feb 17, 2021

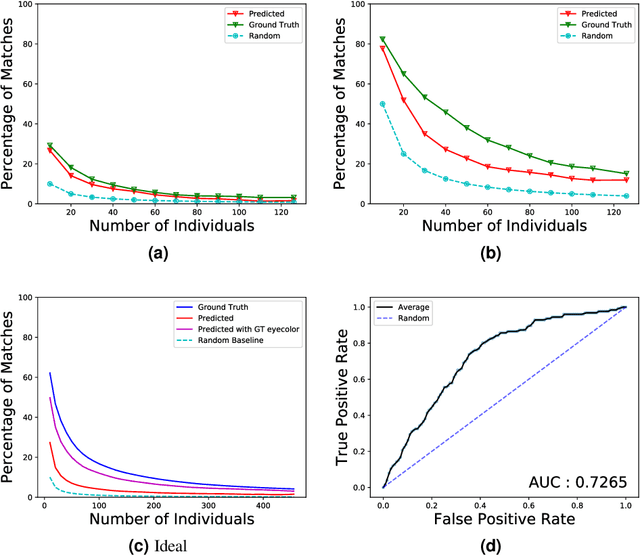

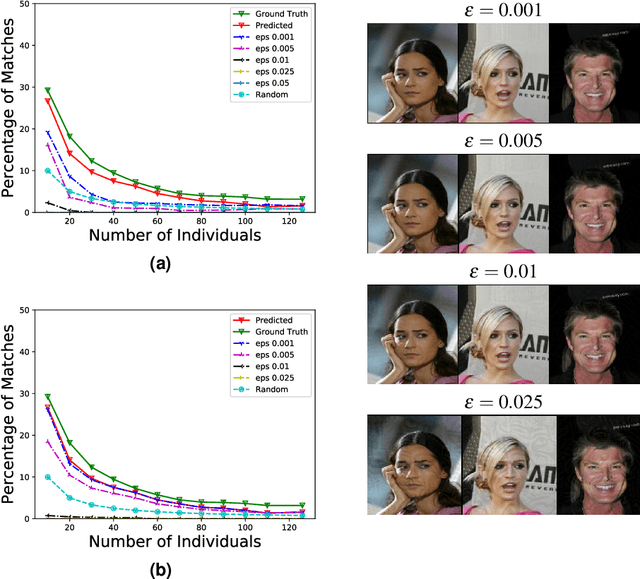

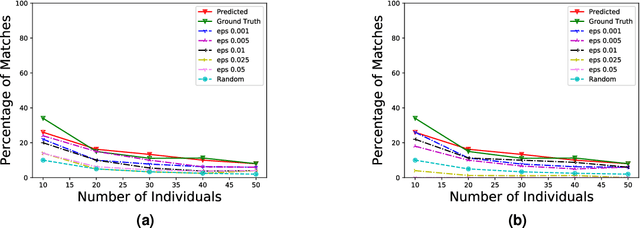

Abstract: DNA sequencing is becoming increasingly commonplace, both in medical and direct-to-consumer settings. To promote discovery, collected genomic data is often de-identified and shared, either in public repositories, such as OpenSNP, or with researchers through access-controlled repositories. However, recent studies have suggested that genomic data can be effectively matched to high-resolution three-dimensional face images, which raises a concern that the increasingly ubiquitous public face images can be linked to shared genomic data, thereby re-identifying individuals in the genomic data. While these investigations illustrate the possibility of such an attack, they assume that those performing the linkage have access to extremely well-curated data. Given that this is unlikely to be the case in practice, it calls into question the pragmatic nature of the attack. As such, we systematically study this re-identification risk from two perspectives: first, we investigate how successful such linkage attacks can be when real face images are used, and second, we consider how we can empower individuals to have better control over the associated re-identification risk. We observe that the true risk of re-identification is likely substantially smaller for most individuals than prior literature suggests. In addition, we demonstrate that the addition of a small amount of carefully crafted noise to images can enable a controlled trade-off between re-identification success and the quality of shared images, with risk typically significantly lowered even with noise that is imperceptible to humans.
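The trade-off between noise size and matching success can be illustrated with a toy example. The abstract does not describe how the noise is crafted, so the sketch below assumes a sign-of-gradient step against a hypothetical linear matching score, with the per-pixel change capped at `epsilon`. The function names, weights, and pixel values are all invented for illustration and do not come from the paper.

```python
def match_score(weights, pixels):
    """Hypothetical linear score for how strongly an image matches a genome."""
    return sum(w * p for w, p in zip(weights, pixels))

def sign(x):
    return (x > 0) - (x < 0)

def perturb(pixels, weights, epsilon):
    """Lower the score with noise bounded by epsilon per pixel.

    For a linear score the gradient with respect to the pixels is `weights`,
    so stepping against its sign gives the largest decrease for a given
    epsilon. Pixels are clipped to the valid range [0, 1].
    """
    return [min(1.0, max(0.0, p - epsilon * sign(w)))
            for p, w in zip(pixels, weights)]

weights = [0.5, -1.0, 0.25, 0.0]   # illustrative values only
pixels = [0.4, 0.6, 0.5, 0.3]      # illustrative values only
epsilon = 0.01

noisy = perturb(pixels, weights, epsilon)
before = match_score(weights, pixels)
after = match_score(weights, noisy)
print(f"score before: {before:.4f}, after: {after:.4f}")
```

A larger `epsilon` lowers the score further but changes the image more, which is the kind of controlled trade-off the abstract refers to; a real face-to-genome matcher would be nonlinear and would need its gradient computed numerically or by automatic differentiation.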
