Radu Dogaru

LB-CNN: An Open Source Framework for Fast Training of Light Binary Convolutional Neural Networks using Chainer and Cupy

Jun 25, 2021

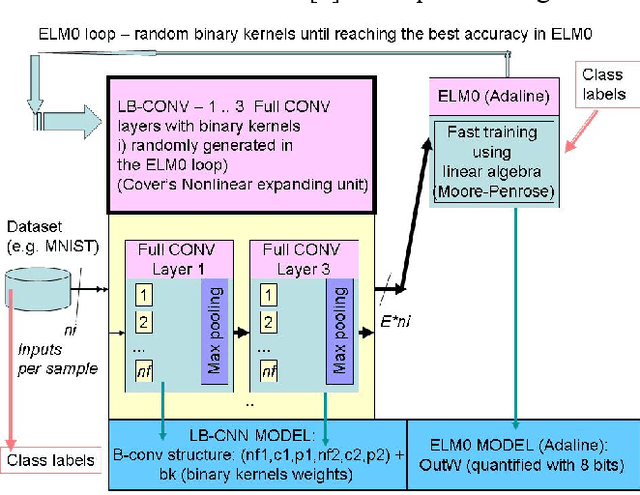

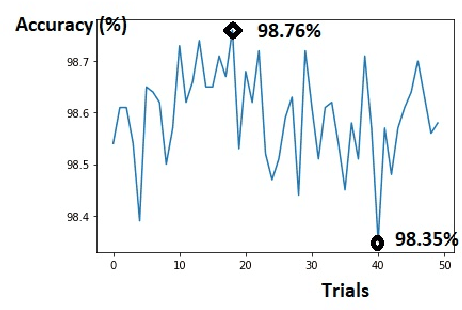

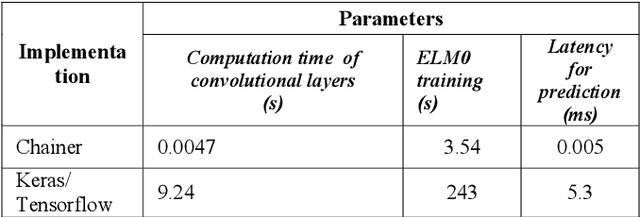

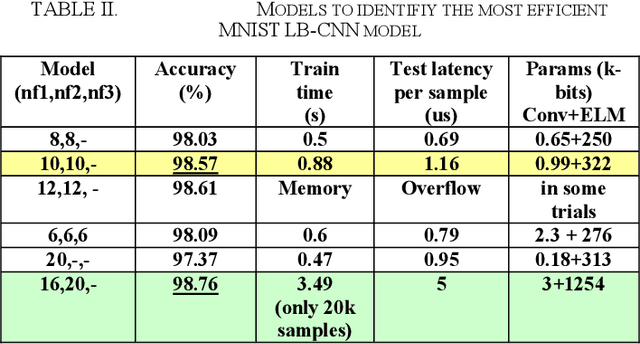

Abstract: Light binary convolutional neural networks (LB-CNN) are particularly useful when implemented on low-energy computing platforms, as required in many industrial applications. Herein, a framework for optimizing compact LB-CNN models is introduced and its effectiveness is evaluated. The framework is freely available and can run on free-access cloud platforms, thus requiring no major investment. The optimized model is saved in the standardized .h5 format and can be used as input to specialized tools for further deployment into specific technologies, thus enabling the rapid development of various intelligent image sensors. The main ingredient in accelerating the optimization of our model, particularly the selection of binary convolution kernels, is the Chainer/Cupy machine learning library, which offers significant speed-ups for training the output layer as an extreme learning machine. Additional training of the output layer using Keras/Tensorflow is included, as it allows an increase in accuracy. Results for widely used datasets including MNIST, GTSRB, ORL, and VGG show a very good compromise between accuracy and complexity. In particular, for face recognition problems a carefully optimized LB-CNN model provides accuracies of up to 100%. Such TinyML solutions are well suited for industrial applications requiring image recognition with low energy consumption.
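
As a rough illustration of the idea in this abstract, the sketch below builds features from fixed binary (+1/-1) convolution kernels and fits only the output layer in closed form, in the style of an extreme learning machine. It is a minimal NumPy stand-in and not the framework's Chainer/Cupy code: the function names, the ReLU plus global average pooling, and the ridge term are all assumptions made for illustration.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def binary_features(images, kernels):
    """Correlate each image with +/-1 kernels, rectify, then average-pool globally.

    images:  (N, H, W) array; kernels: (C, k, k) array with entries in {-1, +1}.
    The ReLU and global pooling are illustrative choices, not taken from the paper.
    """
    k = kernels.shape[-1]
    windows = sliding_window_view(images, (k, k), axis=(1, 2))  # (N, H', W', k, k)
    maps = np.einsum("nhwij,cij->nchw", windows, kernels)       # (N, C, H', W')
    return np.maximum(maps, 0.0).mean(axis=(2, 3))              # (N, C)


def train_elm_output(h, labels, n_classes, ridge=1e-3):
    """Fit output weights by ridge-regularized least squares (no gradient descent)."""
    targets = np.eye(n_classes)[labels]                  # one-hot targets, (N, n_classes)
    gram = h.T @ h + ridge * np.eye(h.shape[1])          # regularized normal equations
    return np.linalg.solve(gram, h.T @ targets)          # (C, n_classes)


def predict(h, weights):
    """Return the index of the highest-scoring class for each feature row."""
    return np.argmax(h @ weights, axis=1)
```

Because the output layer is obtained from a single linear solve, many candidate sets of binary kernels can be scored quickly, which is the kind of search the abstract says benefits from GPU acceleration.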

A Python Framework for Fast Modelling and Simulation of Cellular Nonlinear Networks and other Finite-difference Time-domain Systems

Feb 20, 2021

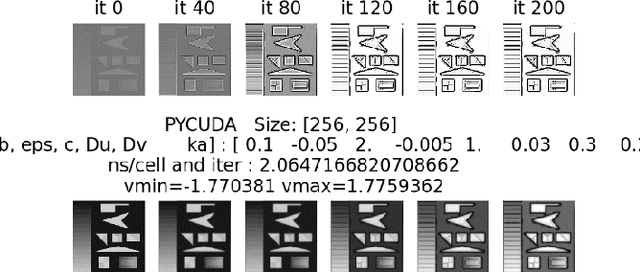

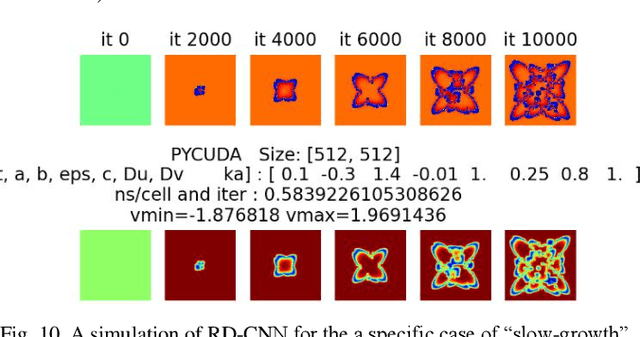

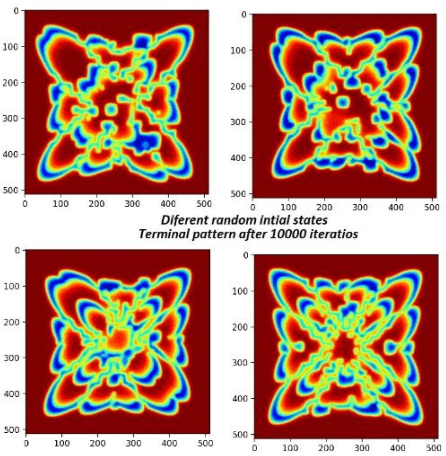

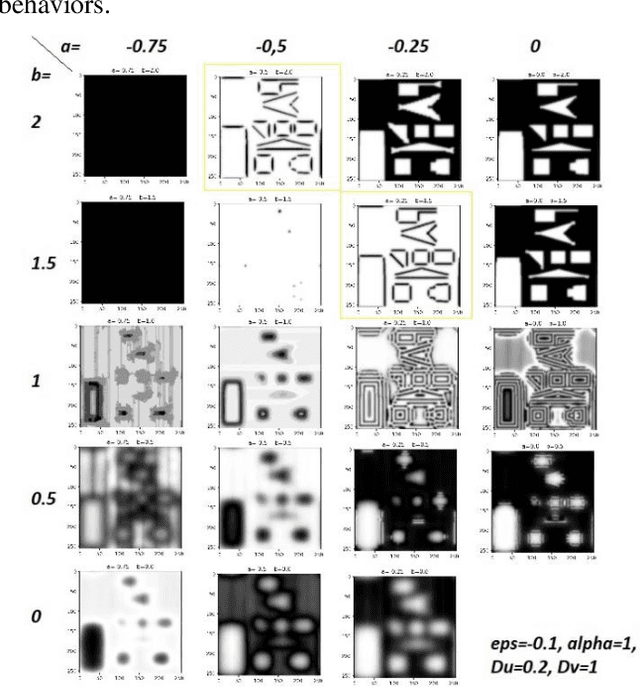

Abstract: This paper introduces and evaluates a freely available cellular nonlinear network simulator optimized for the effective use of GPUs to achieve fast modelling and simulation. Its relevance is demonstrated for several applications in nonlinear complex dynamical systems, such as slow-growth phenomena, as well as for image processing applications such as edge detection. The simulator is designed as a Jupyter notebook written in Python and has been functionally tested and optimized to run on the freely available cloud platform Google Colaboratory. Although the simulator, in its current form, is designed to model the FitzHugh-Nagumo reaction-diffusion cellular nonlinear network, it can be easily adapted to any other type of finite-difference time-domain model. Four implementations are considered: three using the PyCUDA, NUMBA, and CUPY libraries respectively (all supporting GPU computation), and a NUMPY-based implementation to be used when a GPU is not available. The specifics and performance of each of the four implementations are analyzed, concluding that the PyCUDA implementation ensures very good performance, being capable of running up to 14000 Mega cells per second (each cell referring to the basic nonlinear dynamic system composing the cellular nonlinear network).
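
To make the finite-difference time-domain idea concrete, here is a minimal NumPy sketch of one explicit Euler update of a FitzHugh-Nagumo reaction-diffusion grid. It uses the textbook form of the equations; the parameter values, the periodic boundaries, and the function names are assumptions for illustration and are not taken from the simulator described in the abstract.

```python
import numpy as np


def laplacian(z):
    """5-point discrete Laplacian with periodic (wrap-around) boundaries."""
    return (np.roll(z, 1, axis=0) + np.roll(z, -1, axis=0)
            + np.roll(z, 1, axis=1) + np.roll(z, -1, axis=1) - 4.0 * z)


def fhn_step(u, v, dt=0.05, du=1.0, dv=0.0, eps=0.08, a=0.7, b=0.8):
    """One explicit Euler step; every grid cell is updated from its 4 neighbours."""
    u_new = u + dt * (u - u**3 / 3.0 - v + du * laplacian(u))
    v_new = v + dt * (eps * (u + a - b * v) + dv * laplacian(v))
    return u_new, v_new


def simulate(n=64, steps=200, seed=0):
    """Run the grid from a small random initial state (assumed initial condition)."""
    rng = np.random.default_rng(seed)
    u = 0.1 * rng.standard_normal((n, n))
    v = np.zeros((n, n))
    for _ in range(steps):
        u, v = fhn_step(u, v)
    return u, v
```

Adapting the sketch to another finite-difference model would mainly mean replacing the two update expressions in `fhn_step`, since the neighbour access in `laplacian` stays the same.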

NL-CNN: A Resources-Constrained Deep Learning Model based on Nonlinear Convolution

Jan 30, 2021

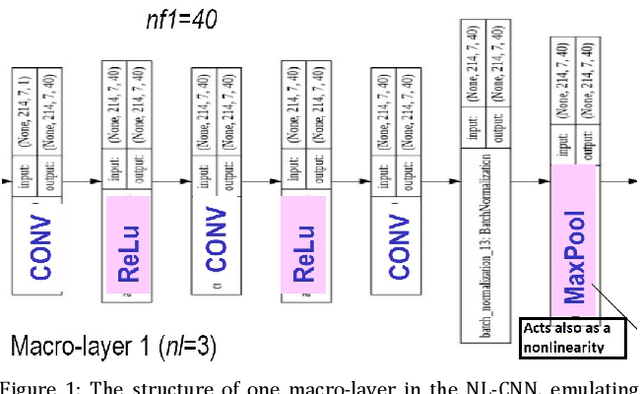

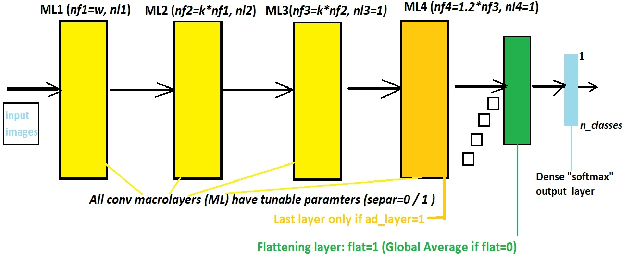

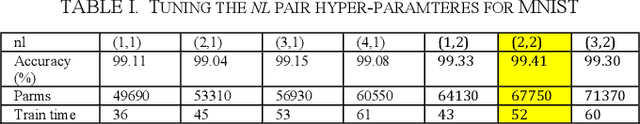

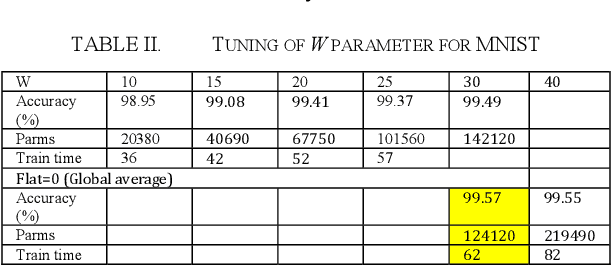

Abstract: A novel convolutional neural network model, abbreviated NL-CNN, is proposed, where nonlinear convolution is emulated in a cascade of convolution + nonlinearity layers. The code for its implementation and some trained models are made publicly available. Performance evaluation on several widely known datasets is provided, showing several relevant features: i) for small / medium input image sizes the proposed network gives very good testing accuracy, given a low implementation complexity and model size; ii) it compares favorably with other widely known resources-constrained models, for instance providing better accuracy than MobileNetv2 with several times shorter training times and up to ten times fewer parameters (memory occupied by the model); iii) it has a relevant set of hyper-parameters which can be easily and rapidly tuned thanks to its fast training. All these features make NL-CNN suitable for IoT, smart sensing, bio-medical portable instrumentation and other applications where artificial intelligence must be deployed in energy-constrained environments.
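
The phrase "cascade of convolution + nonlinearity layers" can be pictured with the small single-channel NumPy sketch below. It is only an illustration under stated assumptions: the ReLU nonlinearity, zero padding, odd square kernels, and the function names are hypothetical choices and do not reproduce the published NL-CNN code.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv2d_same(x, kernel):
    """Single-channel 2D cross-correlation with zero padding (output size = input size).

    Assumes a square kernel with odd side length.
    """
    k = kernel.shape[0]
    padded = np.pad(x, k // 2)                       # zero-pad all four borders
    windows = sliding_window_view(padded, (k, k))    # (H, W, k, k)
    return np.einsum("hwij,ij->hw", windows, kernel)


def nonlinear_conv(x, kernels):
    """Apply convolution followed by ReLU once per kernel, in sequence."""
    for kernel in kernels:
        x = np.maximum(conv2d_same(x, kernel), 0.0)
    return x
```

The number of kernels passed to `nonlinear_conv` sets the depth of the cascade, which would be one of the hyper-parameters to tune in a model of this kind.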
