R. Martin Chavez

KNET: Integrating Hypermedia and Bayesian Modeling

Mar 27, 2013

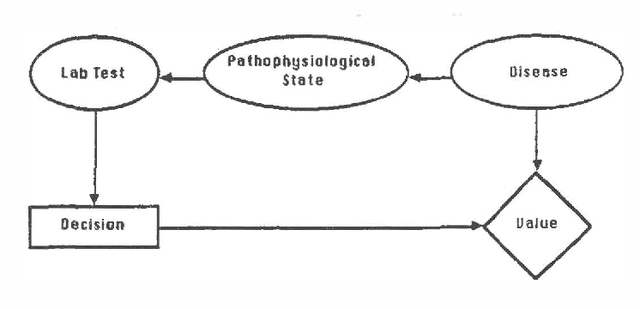

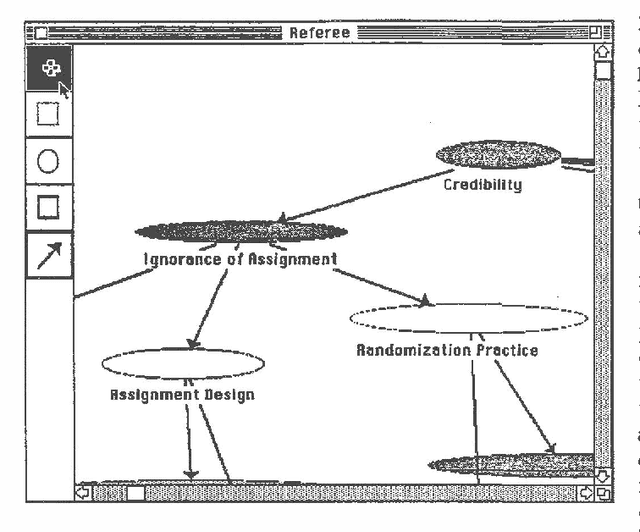

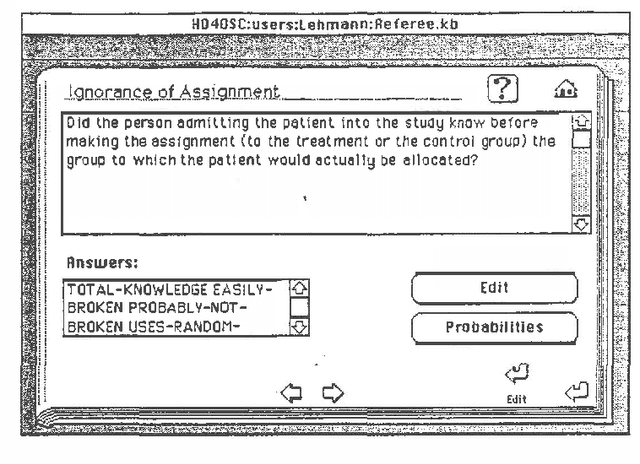

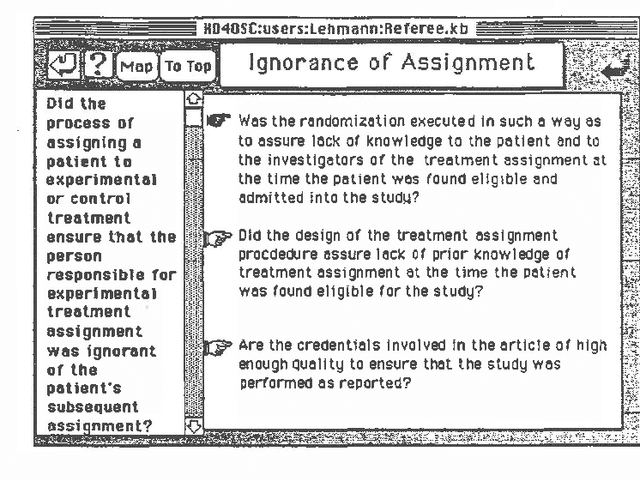

Abstract:KNET is a general-purpose shell for constructing expert systems based on belief networks and decision networks. Such networks serve as graphical representations for decision models, in which the knowledge engineer must define clearly the alternatives, states, preferences, and relationships that constitute a decision basis. KNET contains a knowledge-engineering core written in Object Pascal and an interface that tightly integrates HyperCard, a hypertext authoring tool for the Apple Macintosh computer, into a novel expert-system architecture. Hypertext and hypermedia have become increasingly important in the storage management, and retrieval of information. In broad terms, hypermedia deliver heterogeneous bits of information in dynamic, extensively cross-referenced packages. The resulting KNET system features a coherent probabilistic scheme for managing uncertainty, an objectoriented graphics editor for drawing and manipulating decision networks, and HyperCard's potential for quickly constructing flexible and friendly user interfaces. We envision KNET as a useful prototyping tool for our ongoing research on a variety of Bayesian reasoning problems, including tractable representation, inference, and explanation.

An Empirical Evaluation of a Randomized Algorithm for Probabilistic Inference

Mar 27, 2013

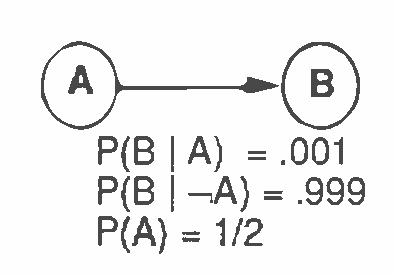

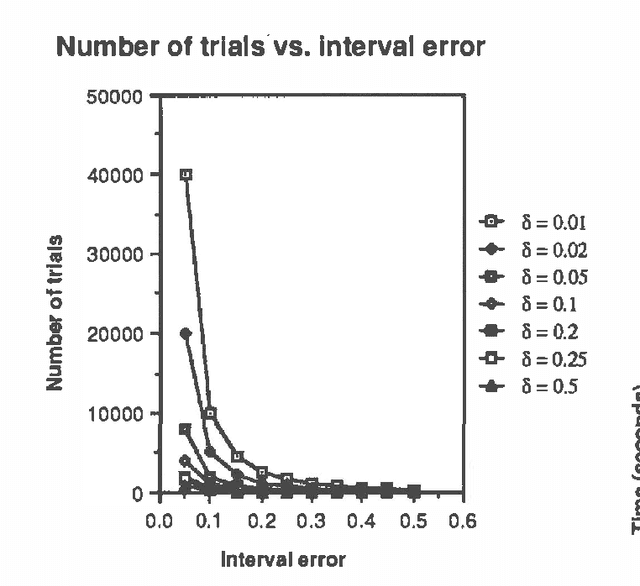

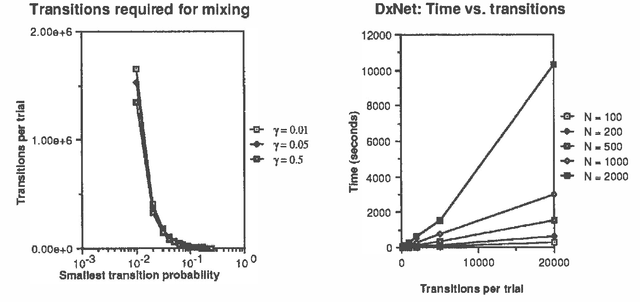

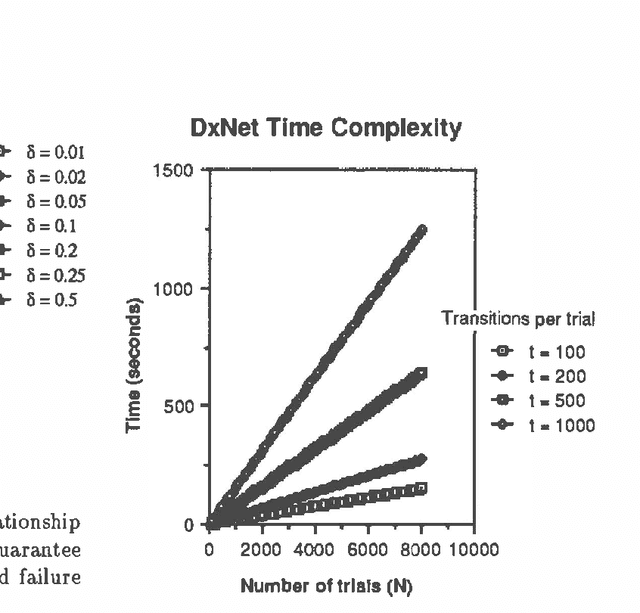

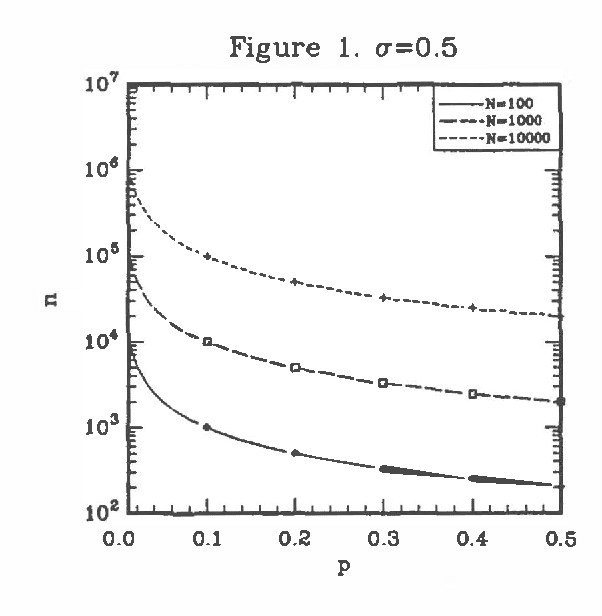

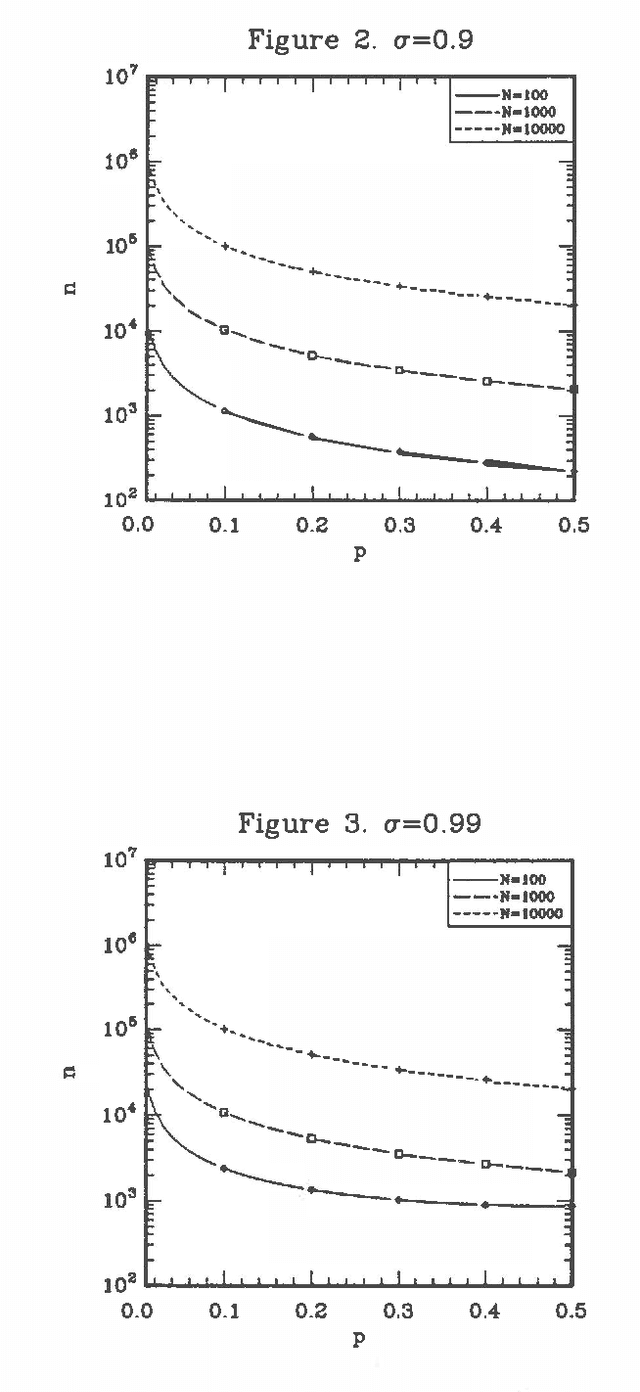

Abstract:In recent years, researchers in decision analysis and artificial intelligence (Al) have used Bayesian belief networks to build models of expert opinion. Using standard methods drawn from the theory of computational complexity, workers in the field have shown that the problem of probabilistic inference in belief networks is difficult and almost certainly intractable. K N ET, a software environment for constructing knowledge-based systems within the axiomatic framework of decision theory, contains a randomized approximation scheme for probabilistic inference. The algorithm can, in many circumstances, perform efficient approximate inference in large and richly interconnected models of medical diagnosis. Unlike previously described stochastic algorithms for probabilistic inference, the randomized approximation scheme computes a priori bounds on running time by analyzing the structure and contents of the belief network. In this article, we describe a randomized algorithm for probabilistic inference and analyze its performance mathematically. Then, we devote the major portion of the paper to a discussion of the algorithm's empirical behavior. The results indicate that the generation of good trials (that is, trials whose distribution closely matches the true distribution), rather than the computation of numerous mediocre trials, dominates the performance of stochastic simulation. Key words: probabilistic inference, belief networks, stochastic simulation, computational complexity theory, randomized algorithms.

A Randomized Approximation Algorithm of Logic Sampling

Mar 27, 2013

Abstract:In recent years, researchers in decision analysis and artificial intelligence (AI) have used Bayesian belief networks to build models of expert opinion. Using standard methods drawn from the theory of computational complexity, workers in the field have shown that the problem of exact probabilistic inference on belief networks almost certainly requires exponential computation in the worst ease [3]. We have previously described a randomized approximation scheme, called BN-RAS, for computation on belief networks [ 1, 2, 4]. We gave precise analytic bounds on the convergence of BN-RAS and showed how to trade running time for accuracy in the evaluation of posterior marginal probabilities. We now extend our previous results and demonstrate the generality of our framework by applying similar mathematical techniques to the analysis of convergence for logic sampling [7], an alternative simulation algorithm for probabilistic inference.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge