Qunfeng Liu

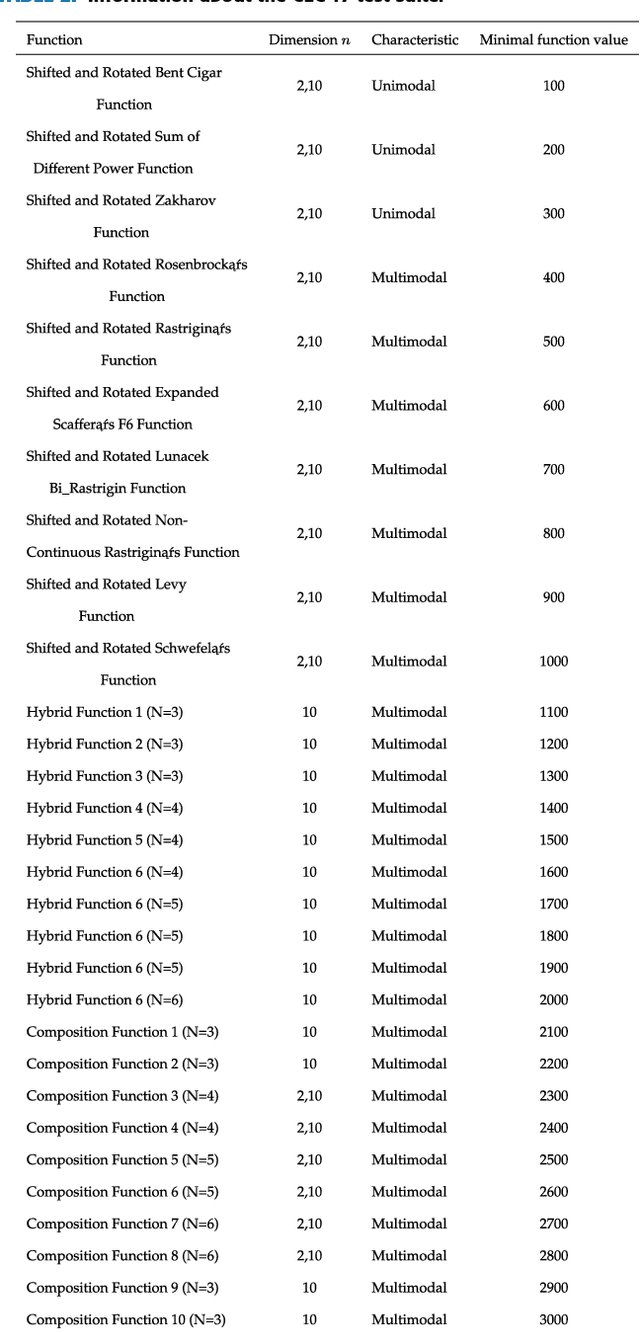

Absolute Ranking: An Essential Normalization for Benchmarking Optimization Algorithms

Sep 06, 2024

Abstract: Evaluating performance across optimization algorithms on many problems presents a complex challenge due to the diversity of numerical scales involved. Traditional data processing methods, such as hypothesis testing and Bayesian inference, often employ ranking-based methods to normalize performance values across these varying scales. However, a significant issue emerges with this ranking-based approach: the introduction of new algorithms can potentially disrupt the original rankings. This paper extensively explores the problem, making a compelling case to underscore the issue and conducting a thorough analysis of its root causes. These efforts pave the way for a comprehensive examination of potential solutions. Building on this research, this paper introduces a new mathematical model called "absolute ranking" and a sampling-based computational method. These contributions come with practical implementation recommendations, aimed at providing a more robust framework for addressing the challenge of numerical scale variation in the assessment of performance across multiple algorithms and problems.
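The ranking disruption described in this abstract can be illustrated with a small, hedged sketch. The numbers below are invented for illustration and are not taken from the paper, and the helper `average_ranks` is a hypothetical name. The code shows only the conventional per-problem (relative) ranking, not the paper's "absolute ranking" model: adding a third algorithm C flips the order of A and B, even though their own results are unchanged.

```python
def average_ranks(results):
    """Average per-problem rank of each algorithm (lower value = better).

    results maps an algorithm name to its objective values, one per problem.
    Ties are not handled; the toy data below contains none.
    """
    names = list(results)
    n_problems = len(next(iter(results.values())))
    totals = {name: 0.0 for name in names}
    for p in range(n_problems):
        # Rank the algorithms on problem p by their objective value.
        ordered = sorted(names, key=lambda name: results[name][p])
        for rank, name in enumerate(ordered, start=1):
            totals[name] += rank
    return {name: totals[name] / n_problems for name in names}


# Hypothetical objective values on five problems (illustrative only).
A = [1.0, 1.0, 5.0, 5.0, 5.0]
B = [3.0, 3.0, 4.0, 4.0, 4.0]
C = [2.0, 2.0, 9.0, 9.0, 9.0]  # the newly introduced algorithm

before = average_ranks({"A": A, "B": B})
after = average_ranks({"A": A, "B": B, "C": C})

print("before adding C:", before)  # B has the lower (better) average rank
print("after adding C: ", after)   # A now has the lower average rank
```

In this toy setup C lands between A and B on the two problems where A wins, which pushes B's rank down there while leaving A's ranks untouched, so the comparison between A and B depends on which other algorithms happen to be in the pool.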

A semantic hierarchical graph neural network for text classification

Sep 15, 2022

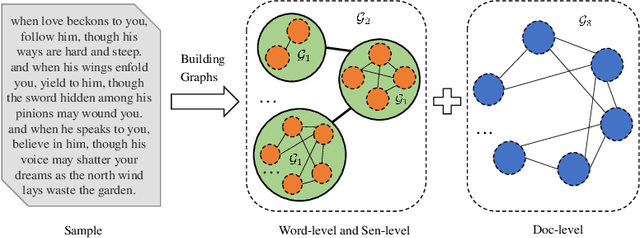

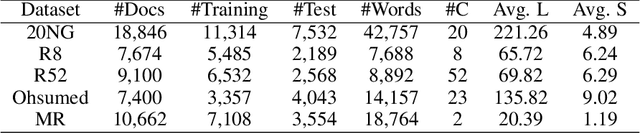

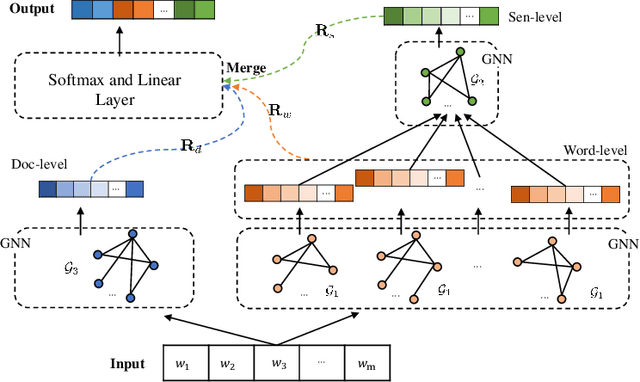

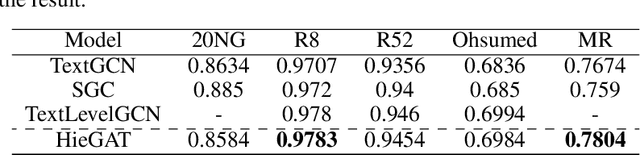

Abstract: The key to the text classification task is language representation and the extraction of important information, and there are many related studies. In recent years, research on graph neural networks (GNNs) for text classification has gradually emerged and shown its advantages, but existing models mainly feed words directly into the GNN as graph nodes, ignoring the different levels of semantic structure information in the samples. To address this issue, we propose a new hierarchical graph neural network (HieGNN) that extracts the corresponding information at the word level, sentence level, and document level. On several benchmark datasets, the model achieves better or similar results compared to several baseline methods, which demonstrates that it is able to obtain more useful information for classification from the samples.

Simplex Search Based Brain Storm Optimization

Jun 06, 2018

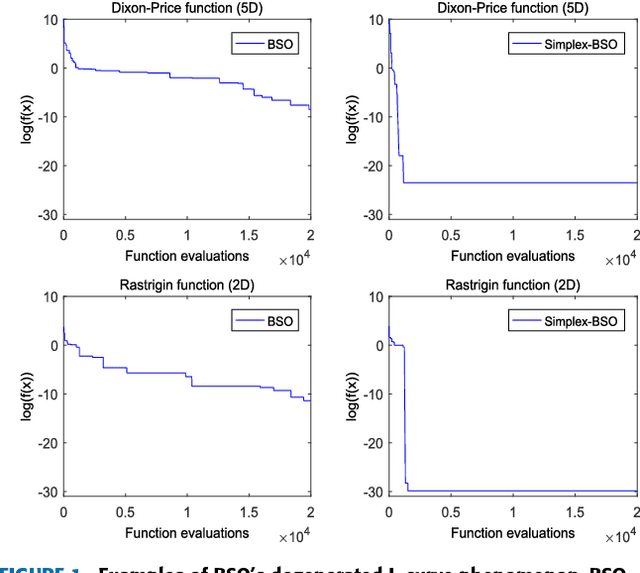

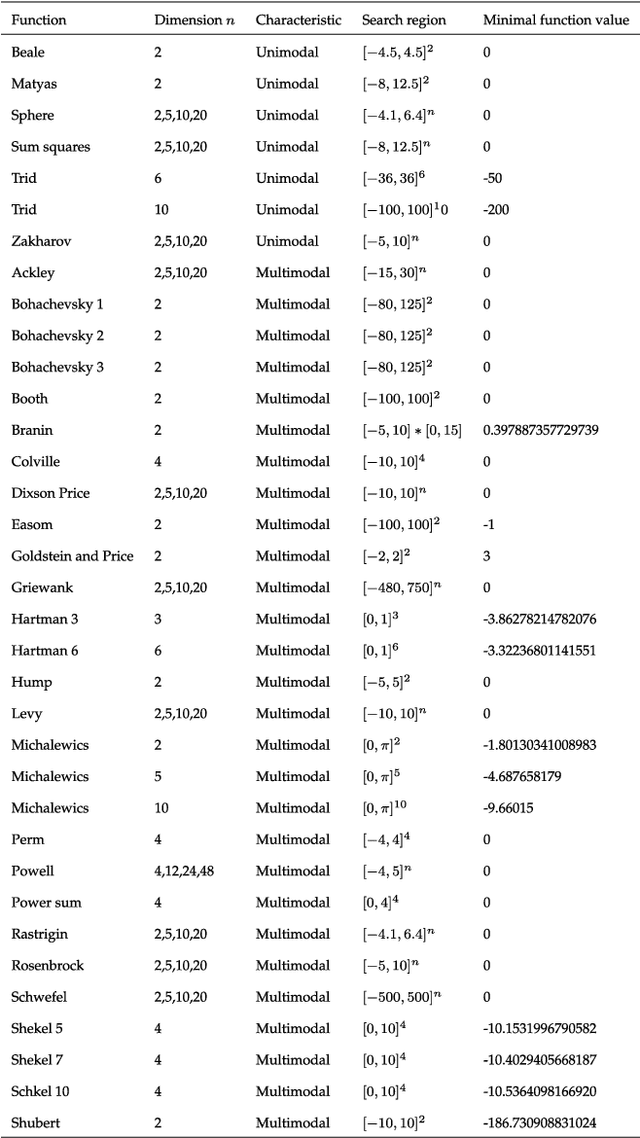

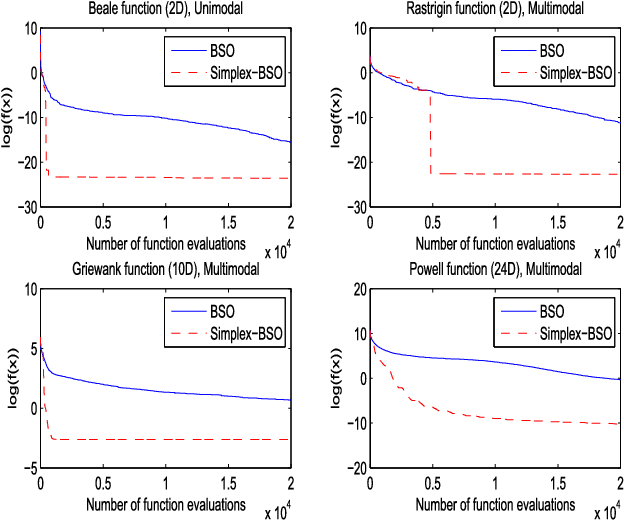

Abstract: By modeling the human brainstorming process, the brain storm optimization (BSO) algorithm has become a promising population-based evolutionary algorithm. However, BSO has been pointed out to exhibit a degenerated L-curve phenomenon, i.e., it often gets near the optimum quickly but needs much more cost to improve the accuracy. To overcome this problem, this paper incorporates an excellent direct-search-based local solver, the Nelder-Mead Simplex (NMS) method, into BSO. By combining BSO's exploration ability with NMS's exploitation ability, a simplex search based BSO (Simplex-BSO) is developed that achieves a better balance between global exploration and local exploitation. Simplex-BSO is shown to eliminate the degenerated L-curve phenomenon on unimodal functions and to alleviate it significantly on multimodal functions. A large number of experimental results show that Simplex-BSO is a promising algorithm for global optimization problems.
