Quansheng Ren

Artificial Open World for Evaluating AGI: a Conceptual Design

Jun 02, 2022

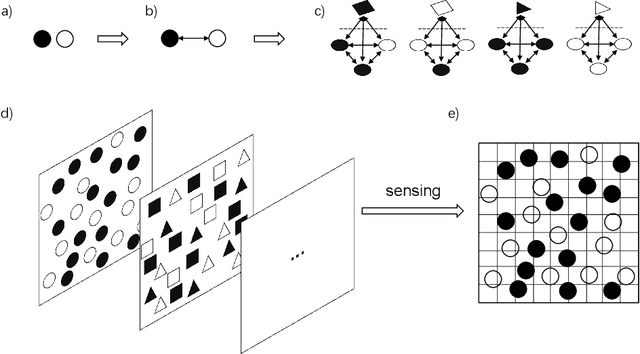

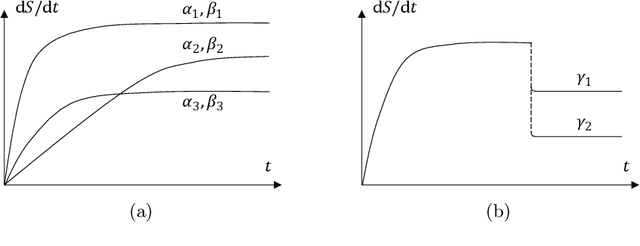

Abstract: How to evaluate Artificial General Intelligence (AGI) is a critical problem that has been discussed for a long time and remains unsolved. In narrow AI research this is not a severe problem, because researchers focus on specific problems and on one or a few aspects of cognition, so the evaluation criteria are explicitly defined. By contrast, an AGI agent should solve problems that neither the agent nor its developers have encountered before. However, once a developer tests and debugs the agent on a problem, that never-encountered problem becomes an encountered one. As a result, the problem is solved to some extent by the developers, who exploit their own experience, rather than by the agent. This conflict, which we call the trap of developers' experience, makes it hard for such problems to become an acknowledged criterion. In this paper, we propose an evaluation method named Artificial Open World, which aims to escape the trap. The intuition is that most experience from the actual world should not need to be applied in the artificial world, and that the world should be open in the sense that developers cannot perceive the world and solve problems themselves before testing, although they may inspect all the data afterwards. The world is generated in a way similar to the actual world, and a general form of problems is proposed. A metric is also proposed to quantify research progress. This paper describes the conceptual design of the Artificial Open World; the formalization and the implementation are left to future work.

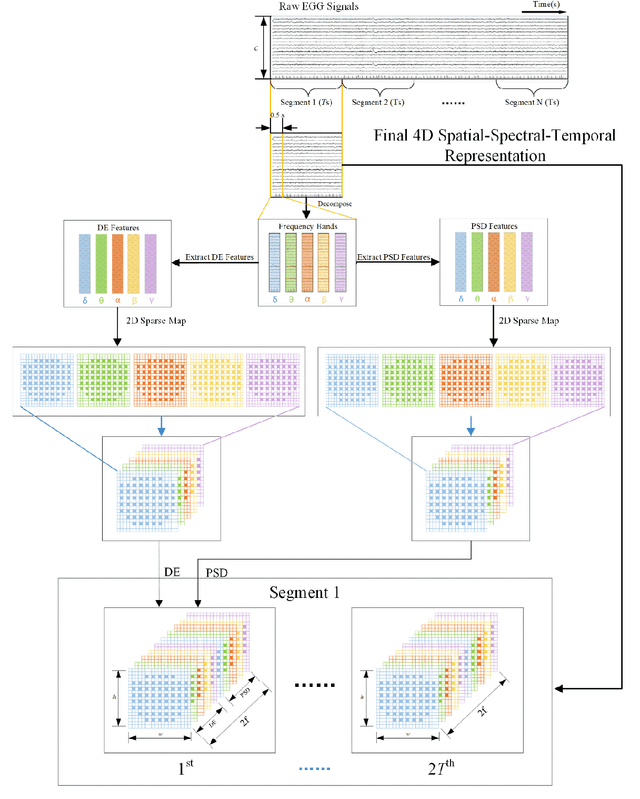

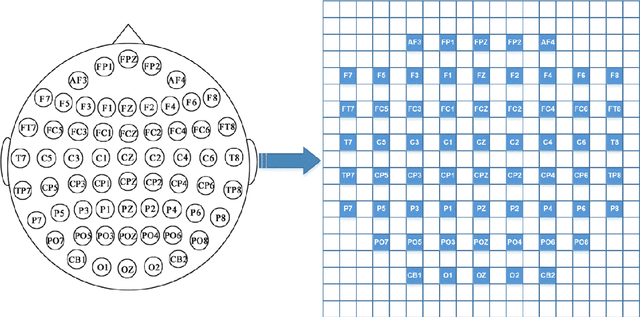

4D Attention-based Neural Network for EEG Emotion Recognition

Jan 14, 2021

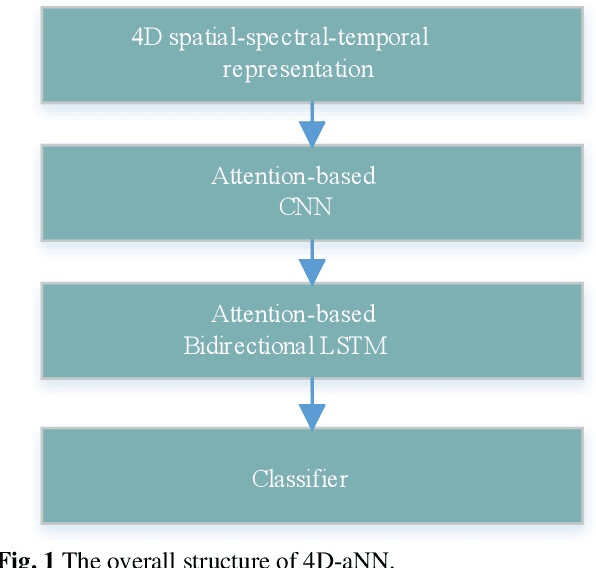

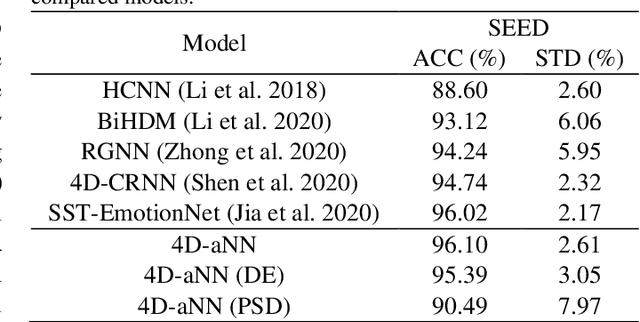

Abstract: Electroencephalography (EEG) emotion recognition is a significant task in the brain-computer interface field. Although many deep learning methods have been proposed recently, it remains challenging to make full use of the information contained in the different domains of EEG signals. In this paper, we present a novel method, called the four-dimensional attention-based neural network (4D-aNN), for EEG emotion recognition. First, raw EEG signals are transformed into 4D spatial-spectral-temporal representations. Then, the proposed 4D-aNN adopts spectral and spatial attention mechanisms to adaptively assign weights to different brain regions and frequency bands, and a convolutional neural network (CNN) processes the spectral and spatial information of the 4D representations. Moreover, a temporal attention mechanism is integrated into a bidirectional Long Short-Term Memory (LSTM) network to explore temporal dependencies in the 4D representations. Our model achieves state-of-the-art performance on the SEED dataset under intra-subject splitting. The experimental results show the effectiveness of the attention mechanisms in the different domains for EEG emotion recognition.
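The attention steps named in this abstract can be illustrated with a rough NumPy sketch. This is not the authors' 4D-aNN: the tensor layout `(T, B, H, W)`, the grid and band sizes, the scoring vectors `w`, `v`, `u`, and all function names are assumptions made for illustration. The CNN and bidirectional LSTM are replaced by a plain reshape, and random values stand in for learned parameters.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def spectral_attention(x, w):
    """x: (T, B, H, W) segment; w: (B, B) hypothetical learned projection.
    Returns one weight per frequency band, shape (B,), summing to 1."""
    band_summary = x.mean(axis=(0, 2, 3))      # average over time and space
    return softmax(w @ band_summary)

def spatial_attention(x, v):
    """v: (B,) hypothetical projection over bands.
    Returns one weight per grid location, shape (H, W), summing to 1."""
    loc_summary = x.mean(axis=0)               # (B, H, W), averaged over time
    scores = np.tensordot(v, loc_summary, axes=(0, 0))   # (H, W)
    return softmax(scores.ravel()).reshape(scores.shape)

def temporal_attention(h, u):
    """h: (T, D) per-time-step features; u: (D,) hypothetical scoring vector.
    Returns the time weights (T,) and the attention-weighted context (D,)."""
    alpha = softmax(h @ u)
    return alpha, alpha @ h

# Assumed sizes: 4 time slices, 5 frequency bands, an 8 x 9 electrode grid.
rng = np.random.default_rng(0)
T, B, H, W = 4, 5, 8, 9
x = rng.standard_normal((T, B, H, W))          # stand-in 4D representation

band_w = spectral_attention(x, rng.standard_normal((B, B)))
spat_w = spatial_attention(x, rng.standard_normal(B))

# Reweight bands and locations by broadcasting over the 4D tensor.
x_att = x * band_w[None, :, None, None] * spat_w[None, None, :, :]

# Stand-in for the CNN + bidirectional LSTM: flatten each time slice.
h = x_att.reshape(T, -1)
alpha, context = temporal_attention(h, rng.standard_normal(h.shape[1]))
```

In a trained model the scoring parameters would be learned jointly with the rest of the network, and `context` would feed a classifier over emotion labels.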
