Pramod Abichandani

Event-based Spiking Neural Networks for Object Detection: A Review of Datasets, Architectures, Learning Rules, and Implementation

Nov 26, 2024

Abstract: Spiking Neural Networks (SNNs) represent a biologically inspired paradigm offering an energy-efficient alternative to conventional artificial neural networks (ANNs) for Computer Vision (CV) applications. This paper presents a systematic review of datasets, architectures, learning methods, implementation techniques, and evaluation methodologies used in CV-based object detection tasks using SNNs. Based on an analysis of 151 journal and conference articles, the review codifies: 1) the effectiveness of fully connected, convolutional, and recurrent architectures; 2) the performance of direct unsupervised, direct supervised, and indirect learning methods; and 3) the trade-offs in energy consumption, latency, and memory in neuromorphic hardware implementations. An open-source repository is provided, along with detailed examples of Python code and resources for building SNN models, event-based data processing, and SNN simulations. Key challenges in SNN training, hardware integration, and future directions for CV applications are also identified.

NU-AIR -- A Neuromorphic Urban Aerial Dataset for Detection and Localization of Pedestrians and Vehicles

Feb 18, 2023

Abstract: Annotated imagery capturing pedestrians and vehicles in an urban environment can be used to train Neural Networks (NNs) for machine vision tasks. This paper presents the first open-source aerial neuromorphic dataset that captures pedestrians and vehicles moving in an urban environment. The dataset, titled NU-AIR, features 70.75 minutes of event footage acquired with a 640 x 480 resolution neuromorphic sensor mounted on a quadrotor operating in an urban environment. Crowds of pedestrians, different types of vehicles, and street scenes at a busy urban intersection are captured at different elevations and illumination conditions. Manual bounding box annotations of vehicles and pedestrians contained in the recordings are provided at a frequency of 30 Hz, yielding 93,204 labels in total. Evaluation of the dataset's fidelity is performed by training three Spiking Neural Networks (SNNs) and ten Deep Neural Networks (DNNs). The mean average precision (mAP) results achieved on the testing set are on par with results reported for similar SNNs and DNNs on established neuromorphic benchmark datasets. All data and Python code to voxelize the data and subsequently train SNNs/DNNs have been open-sourced.
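The abstract mentions voxelizing the event data before training. As a rough illustration of what that step can look like, the sketch below bins events into a fixed number of time slices and accumulates signed polarity per pixel. This is not the NU-AIR release code: the function name `voxelize_events`, the `(t, x, y, p)` event layout, and the nearest-bin accumulation scheme are all assumptions made for this example.

```python
import numpy as np


def voxelize_events(t, x, y, p, num_bins, height, width):
    """Accumulate events into a (num_bins, height, width) voxel grid.

    Hypothetical helper for illustration only. Assumes:
      t: event timestamps (any monotonic unit)
      x, y: integer pixel coordinates, 0 <= x < width, 0 <= y < height
      p: polarity, where values > 0 are ON events and anything else is OFF
    """
    t = np.asarray(t, dtype=np.float64)
    x = np.asarray(x, dtype=np.int64)
    y = np.asarray(y, dtype=np.int64)
    p = np.asarray(p)

    grid = np.zeros((num_bins, height, width), dtype=np.float32)
    if t.size == 0:
        return grid

    # Map each timestamp to a time bin; the last timestamp falls in the final bin.
    span = t.max() - t.min()
    if span == 0:
        bins = np.zeros(t.shape, dtype=np.int64)
    else:
        bins = ((t - t.min()) / span * num_bins).astype(np.int64)
        bins = np.minimum(bins, num_bins - 1)

    # ON events add +1, OFF events add -1.
    signed = np.where(p > 0, 1.0, -1.0).astype(np.float32)

    # np.add.at handles repeated (bin, y, x) indices correctly.
    np.add.at(grid, (bins, y, x), signed)
    return grid
```

For a 640 x 480 sensor such as the one described above, a call might look like `voxelize_events(t, x, y, p, num_bins=5, height=480, width=640)`; the choice of five bins is arbitrary here.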

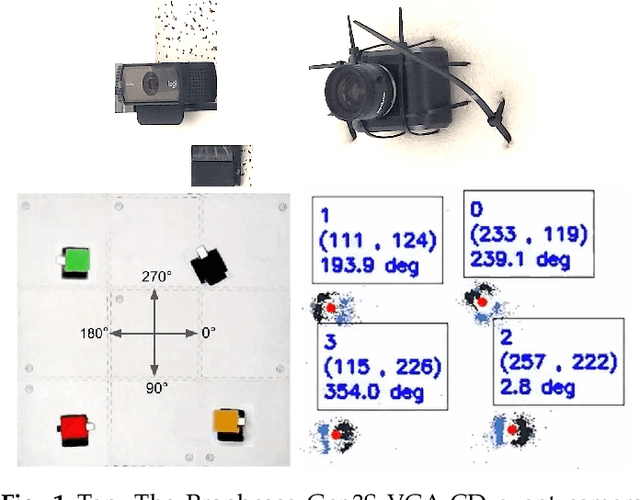

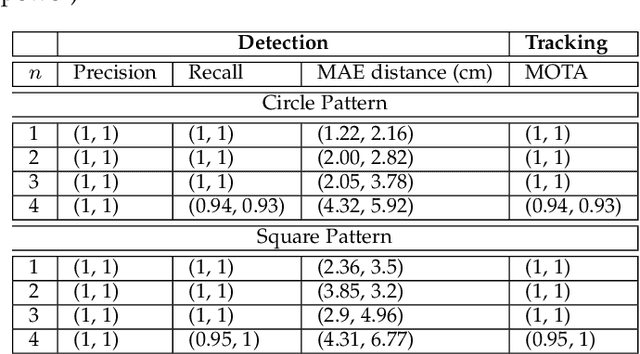

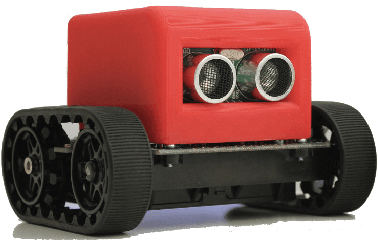

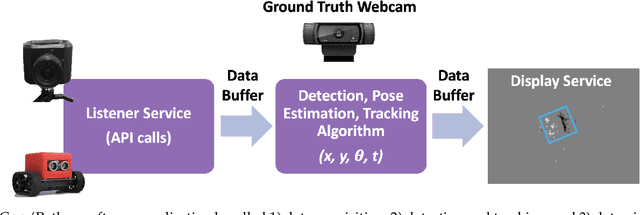

Event Camera Based Real-Time Detection and Tracking of Indoor Ground Robots

Feb 23, 2021

Abstract: This paper presents a real-time method to detect and track multiple mobile ground robots using event cameras. The method uses density-based spatial clustering of applications with noise (DBSCAN) to detect the robots and a single k-dimensional (k-d) tree to accurately keep track of them as they move in an indoor arena. Robust detections and tracks are maintained in the face of event camera noise and lack of events (due to robots moving slowly or stopping). An off-the-shelf RGB camera-based tracking system was used to provide ground truth. Experiments involving up to 4 robots are performed to study the effect of i) varying DBSCAN parameters, ii) the event accumulation time, iii) the number of robots in the arena, and iv) the speed of the robots on the detection and tracking performance. The experimental results showed 100% detection and tracking fidelity in the face of event camera noise and robots stopping for tests involving up to 3 robots (and upwards of 93% for 4 robots).
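The detect-then-track pipeline described in this abstract can be sketched roughly as follows: cluster the accumulated event coordinates with DBSCAN, take each cluster centroid as a detection, then match detections to known track positions with a k-d tree nearest-neighbor query. This is a minimal sketch, not the authors' implementation. The function names (`dbscan`, `detect_centroids`, `associate`), the parameter defaults, and the simple nearest-neighbor matching rule are assumptions for illustration; the only library calls used are `scipy.spatial.cKDTree`, `query_ball_point`, and `query`.

```python
import numpy as np
from scipy.spatial import cKDTree

NOISE = -1


def dbscan(points, eps, min_samples):
    """Minimal DBSCAN (illustrative). Returns one label per point, -1 for noise."""
    points = np.asarray(points, dtype=float)
    if len(points) == 0:
        return np.empty(0, dtype=int)
    tree = cKDTree(points)
    # Neighbor lists within eps; each list includes the point itself.
    neighbors = tree.query_ball_point(points, r=eps)
    labels = np.full(len(points), NOISE, dtype=int)
    visited = np.zeros(len(points), dtype=bool)
    cluster = 0
    for i in range(len(points)):
        if visited[i] or len(neighbors[i]) < min_samples:
            continue
        # i is an unvisited core point: grow a new cluster from it.
        visited[i] = True
        labels[i] = cluster
        stack = list(neighbors[i])
        while stack:
            j = stack.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border or core point joins the cluster
            if visited[j]:
                continue
            visited[j] = True
            if len(neighbors[j]) >= min_samples:
                stack.extend(neighbors[j])  # only core points expand the cluster
        cluster += 1
    return labels


def detect_centroids(events_xy, eps=3.0, min_samples=5):
    """Cluster event coordinates and return one (x, y) centroid per cluster."""
    pts = np.asarray(events_xy, dtype=float).reshape(-1, 2)
    labels = dbscan(pts, eps, min_samples)
    n_clusters = labels.max() + 1 if labels.size else 0
    centroids = [pts[labels == k].mean(axis=0) for k in range(n_clusters)]
    return np.array(centroids, dtype=float).reshape(-1, 2)


def associate(track_positions, centroids, max_dist):
    """For each centroid, return the index of the nearest track within max_dist, else -1."""
    if len(track_positions) == 0 or len(centroids) == 0:
        return np.full(len(centroids), -1, dtype=int)
    tree = cKDTree(track_positions)
    dist, idx = tree.query(centroids, k=1, distance_upper_bound=max_dist)
    # cKDTree reports an infinite distance when no neighbor lies within the bound.
    return np.where(np.isfinite(dist), idx, -1)
```

Note that this matching rule can assign two detections to the same track; a fuller tracker would need to resolve such conflicts and to keep tracks alive when a stopped robot generates no events, which the abstract identifies as a case the method handles.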
