Piotr Płoński

Electron Neutrino Classification in Liquid Argon Time Projection Chamber Detector

May 03, 2015

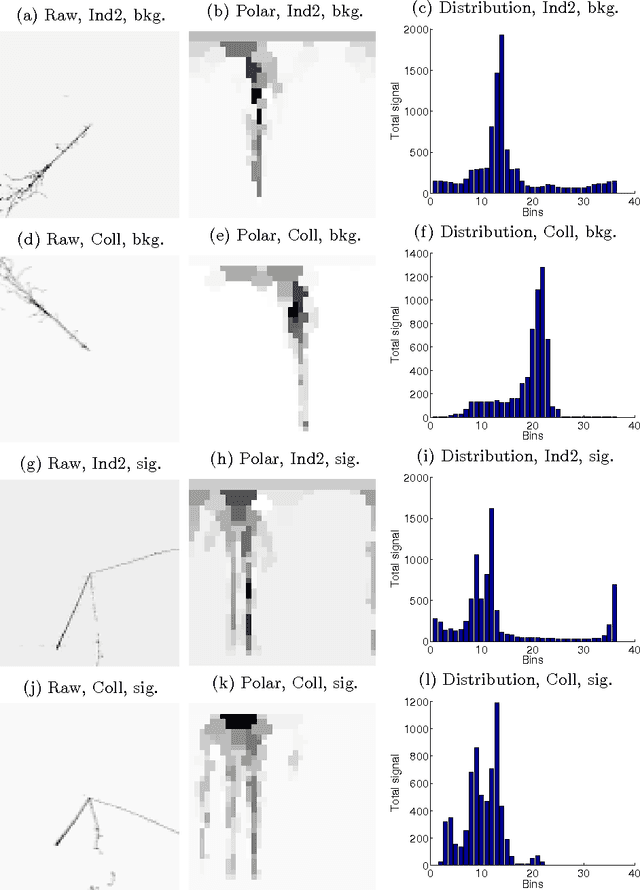

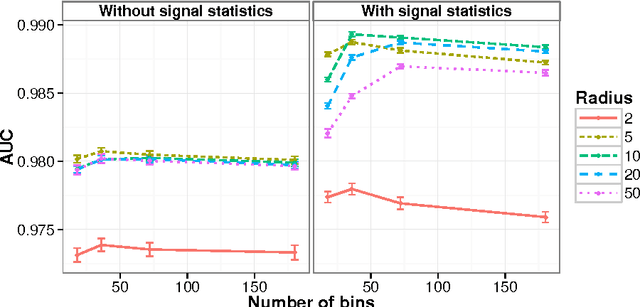

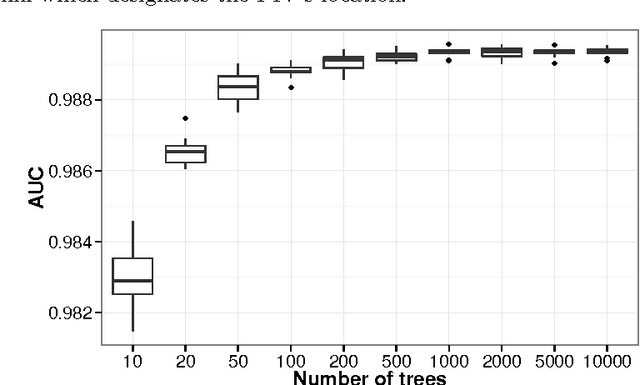

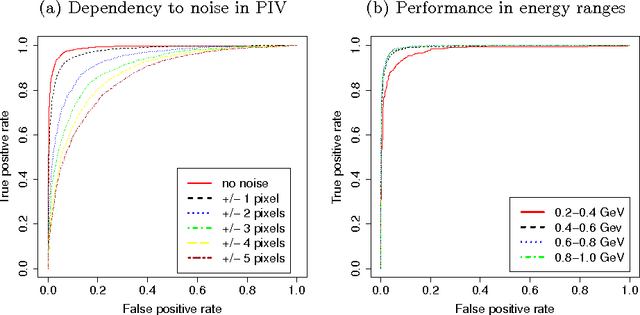

Abstract: Neutrinos are among the least understood elementary particles. Detecting them is extremely difficult because they interact only through the weak force and gravity. Large detectors are therefore built to reveal the properties of neutrinos. Among them, Liquid Argon Time Projection Chamber (LAr-TPC) detectors provide excellent imaging and particle identification capabilities for studying neutrinos. Automated methods for reconstructing and identifying particles are needed to fully exploit the potential of the LAr-TPC technique. Herein, a novel method for electron neutrino classification is presented. The method constructs a feature descriptor from images of an observed event. The descriptor characterizes the signal distribution propagating from the vertex of interest, where the particle interacts with the detector medium. A classifier is trained on the constructed feature descriptors to decide whether the images represent an electron neutrino or a cascade produced by photons. The proposed approach assumes that the position of the primary interaction vertex is known. The method's performance is studied as a function of the noise in the primary vertex position and of the deposited energy of the particles.
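The abstract does not specify how the descriptor is built. As a rough illustration only, the sketch below assumes one possible form: pixel amplitudes summed in concentric rings around a known vertex and normalized to unit sum. The function name `radial_descriptor` and the parameters `n_bins` and `max_radius` are hypothetical and not taken from the paper.

```python
import math


def radial_descriptor(image, vertex, n_bins=4, max_radius=8.0):
    """Sum pixel amplitudes in concentric rings around the vertex.

    Hypothetical descriptor for illustration; not the paper's method.
    `image` is a list of rows, `vertex` is a (row, col) pair.
    """
    vertex_row, vertex_col = vertex
    bins = [0.0] * n_bins
    for r, row in enumerate(image):
        for c, amplitude in enumerate(row):
            dist = math.hypot(r - vertex_row, c - vertex_col)
            if dist < max_radius:
                # Map the distance to a ring index, guarding against rounding.
                idx = min(int(dist / max_radius * n_bins), n_bins - 1)
                bins[idx] += amplitude
    total = sum(bins)
    # Normalize so the descriptor does not depend on the total deposited signal.
    return [b / total for b in bins] if total > 0 else bins
```

A vector of this kind could then be passed to any supervised classifier. Shifting `vertex` by a few pixels is a simple way to probe sensitivity to vertex-position noise, which is the dependence the abstract says was studied.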

Image Segmentation in Liquid Argon Time Projection Chamber Detector

Feb 27, 2015

Abstract: Liquid Argon Time Projection Chamber (LAr-TPC) detectors provide excellent imaging and particle identification capabilities for studying neutrinos. Efficient and automatic reconstruction procedures are required to exploit the potential of this imaging technology. Herein, a novel method for segmentation of images from LAr-TPC detectors is presented. The proposed approach computes a feature descriptor for each pixel in the image, which characterizes the amplitude distribution in the pixel and its neighbourhood. A supervised classifier is employed to distinguish between pixels representing a particle's track and pixels representing noise. The classifier is trained and evaluated on a hand-labeled dataset. The proposed approach can serve as a preprocessing step for reconstruction algorithms that work directly on detector images.
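The exact per-pixel features are not given in the abstract. The sketch below assumes a deliberately simple descriptor (the pixel amplitude plus the mean and maximum over a square neighbourhood) to show the general shape of such a computation. The name `pixel_descriptor` and the `radius` parameter are hypothetical.

```python
def pixel_descriptor(image, row, col, radius=1):
    """Return (amplitude, neighbourhood mean, neighbourhood max) for one pixel.

    Illustrative features only; the window is clipped at the image border.
    """
    n_rows, n_cols = len(image), len(image[0])
    window = [
        image[r][c]
        for r in range(max(0, row - radius), min(n_rows, row + radius + 1))
        for c in range(max(0, col - radius), min(n_cols, col + radius + 1))
    ]
    return (image[row][col], sum(window) / len(window), max(window))
```

Computing this tuple for every pixel gives one feature vector per pixel, which a supervised classifier could then label as track or noise.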

Improving Performance of Self-Organising Maps with Distance Metric Learning Method

Jul 04, 2014

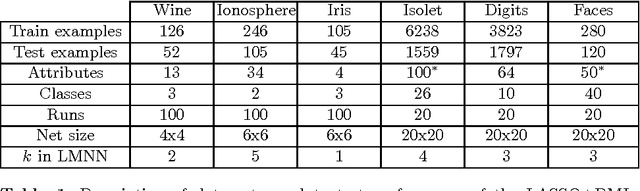

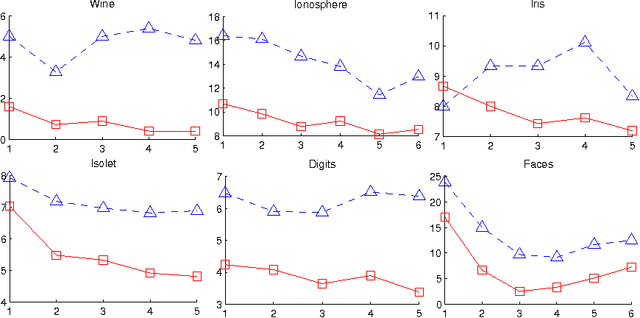

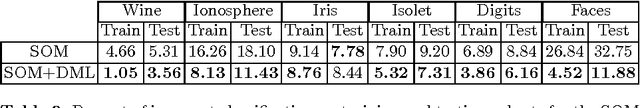

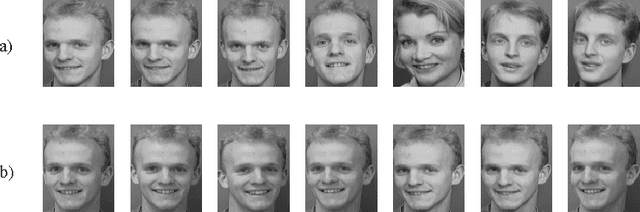

Abstract: Self-Organising Maps (SOM) are Artificial Neural Networks used in Pattern Recognition tasks. Their major advantage over other architectures is the human readability of the model. However, they often achieve lower accuracy. The most commonly used metric in SOM is the Euclidean distance, which is not the best choice for some problems. In this paper, we study the impact of changing the metric on the SOM's performance in classification problems. To change the metric of the SOM, we applied a distance metric learning method called 'Large Margin Nearest Neighbour'. It computes a Mahalanobis matrix that ensures a small distance between nearest-neighbour points from the same class and separates points belonging to different classes by a large margin. Results are presented on several real data sets, including recognition of written digits, spoken letters, and faces.

* 9 pages, 2 figures
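To make the metric change described in the abstract above concrete, the sketch below shows how a SOM's best-matching-unit search could use a squared Mahalanobis distance, d(x, w) = (x - w)^T M (x - w), in place of the Euclidean one. The matrix `M` is assumed to be given (for example, produced by a metric learning step such as Large Margin Nearest Neighbour); learning it is not shown, and the function names are hypothetical.

```python
def mahalanobis_sq(x, w, M):
    """Squared Mahalanobis distance (x - w)^T M (x - w) for a given matrix M."""
    d = [xi - wi for xi, wi in zip(x, w)]
    Md = [sum(M[i][j] * d[j] for j in range(len(d))) for i in range(len(d))]
    return sum(di * mdi for di, mdi in zip(d, Md))


def best_matching_unit(x, weights, M):
    """Index of the SOM unit whose weight vector is closest to x under M."""
    return min(range(len(weights)), key=lambda k: mahalanobis_sq(x, weights[k], M))
```

With `M` set to the identity matrix this reduces to the usual Euclidean search, so the learned matrix is the only difference between the two variants.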

Visualizing Random Forest with Self-Organising Map

May 26, 2014

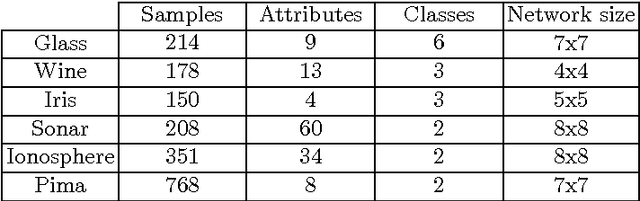

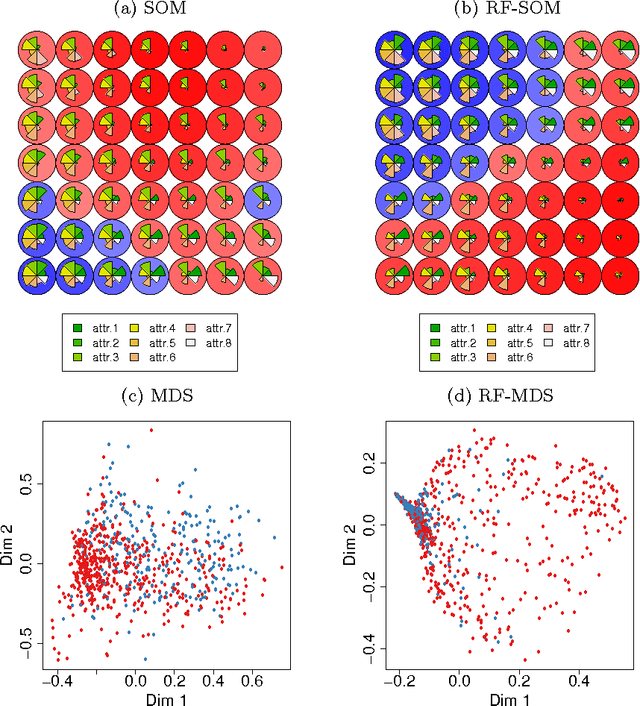

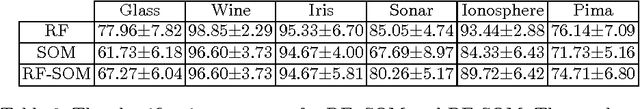

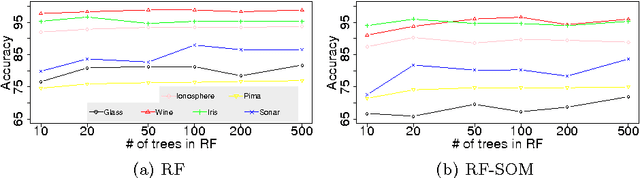

Abstract: Random Forest (RF) is a powerful ensemble method for classification and regression tasks. It consists of a set of decision trees. Although a single tree is easy for a human to interpret, the ensemble of trees is a black-box model. A popular technique for looking inside the RF model is to visualize the RF proximity matrix obtained on data samples with the Multidimensional Scaling (MDS) method. Herein, we present a novel method based on Self-Organising Maps (SOM) for revealing intrinsic relationships in data that lie inside the RF used for classification tasks. We propose an algorithm to train the SOM with the proximity matrix obtained from the RF. The visualizations of the RF proximity matrix with MDS and with SOM are compared. Moreover, the SOM trained with the RF proximity matrix has better classification accuracy than the SOM trained with Euclidean distance. The presented approach enables a better understanding of the RF and additionally improves the accuracy of the SOM.
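For readers unfamiliar with the RF proximity matrix, the sketch below uses the standard definition: the proximity of two samples is the fraction of trees in which they fall into the same leaf. It assumes the leaf index of each sample in each tree is already available as `leaf_ids[t][i]`. That input layout and the function name are hypothetical, and the SOM training step proposed in the paper is not reproduced here.

```python
def proximity_matrix(leaf_ids):
    """Fraction of trees in which each pair of samples shares a leaf.

    leaf_ids[t][i] is the leaf reached by sample i in tree t.
    """
    n_trees = len(leaf_ids)
    n_samples = len(leaf_ids[0])
    counts = [[0] * n_samples for _ in range(n_samples)]
    for leaves in leaf_ids:
        for i in range(n_samples):
            for j in range(n_samples):
                if leaves[i] == leaves[j]:
                    counts[i][j] += 1
    return [[c / n_trees for c in row] for row in counts]
```

A dissimilarity such as `1 - proximity` is a common way to feed this matrix to distance-based methods like MDS.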
