Pinaki Roy Chowdhury

Deep Learning based Automatic Detection of Dicentric Chromosome

Apr 17, 2022

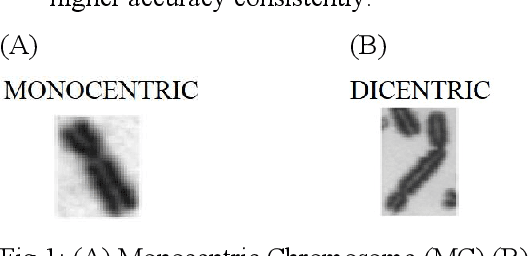

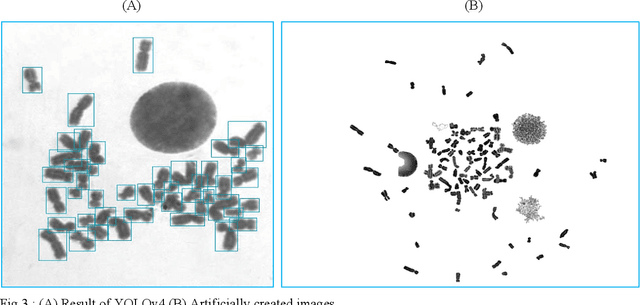

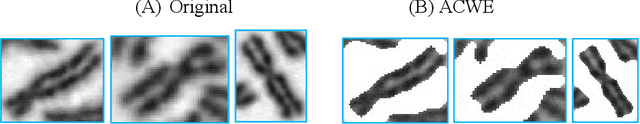

Abstract: Automatic detection of dicentric chromosomes is an essential step in estimating radiation exposure and in developing end-to-end emergency biodosimetry systems. After an accident, large amounts of data must be processed for extensive testing so that a medical treatment plan can be formulated for the affected population, which requires the process to be automated. Current approaches require manual adjustment to the data and therefore need a human expert to calibrate the system. This paper proposes a completely data-driven framework that requires minimal intervention from field experts and can be deployed in emergencies with relative ease. Our approach uses YOLOv4 to detect the chromosomes and remove the debris in each image, followed by a classifier that differentiates between analysable and non-analysable chromosomes. Images are extracted from YOLOv4 based on the protocols described by WHO-BIODOSNET. Each analysable chromosome is classified as Monocentric or Dicentric, and an image is accepted for dose estimation based on its analysable chromosome count. We report an accuracy of 94.33% in dicentric identification on a 1:1 split of Dicentric and Monocentric chromosomes.
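The per-image decision flow described in this abstract can be sketched as follows. This is only an illustrative outline, not the authors' implementation: the detector output is represented as a list of already-cropped chromosomes, `is_analysable` and `is_dicentric` are hypothetical stand-ins for the trained classifiers, and the "at least `min_analysable`" acceptance rule is an assumption, since the abstract says only that acceptance depends on the analysable chromosome count.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, TypeVar

Crop = TypeVar("Crop")  # one detected chromosome crop (type left open)


@dataclass
class ImageResult:
    analysable: int  # crops that passed the analysable/non-analysable classifier
    dicentric: int   # analysable crops labelled dicentric
    accepted: bool   # whether the image is kept for dose estimation


def screen_image(
    crops: Sequence[Crop],
    is_analysable: Callable[[Crop], bool],  # hypothetical classifier stand-in
    is_dicentric: Callable[[Crop], bool],   # hypothetical classifier stand-in
    min_analysable: int,                    # assumed threshold, not from the paper
) -> ImageResult:
    """Filter detected crops, count dicentrics, and apply the acceptance rule."""
    analysable = [c for c in crops if is_analysable(c)]
    dicentric = sum(1 for c in analysable if is_dicentric(c))
    accepted = len(analysable) >= min_analysable  # assumed rule
    return ImageResult(len(analysable), dicentric, accepted)


# Toy usage: strings stand in for image crops.
toy_crops = ["mono", "mono", "di", "junk"]
toy_result = screen_image(
    toy_crops,
    is_analysable=lambda c: c != "junk",
    is_dicentric=lambda c: c == "di",
    min_analysable=3,
)
```

Passing the classifiers in as callables keeps the sketch independent of any particular detection or classification library.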

Brain Inspired Object Recognition System

May 15, 2021

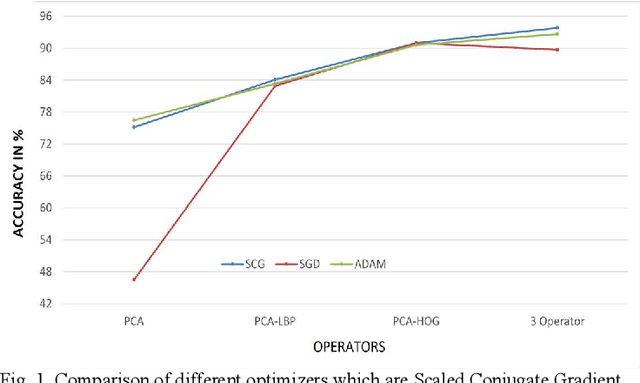

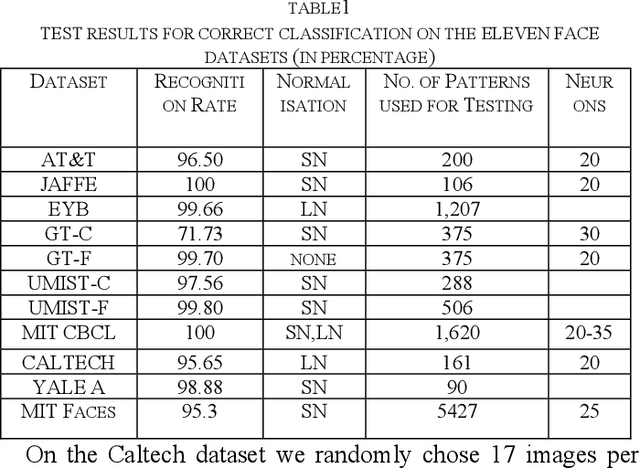

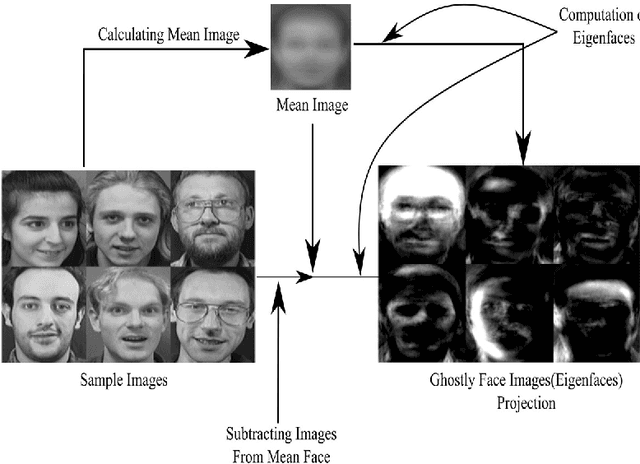

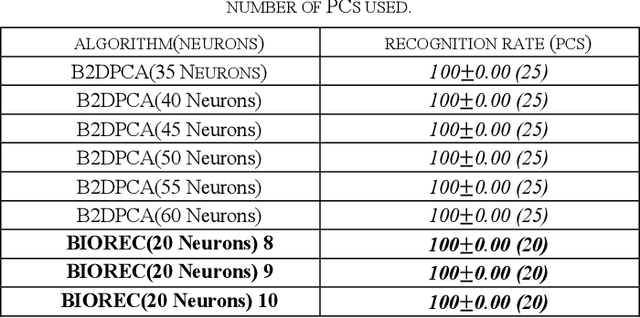

Abstract: This paper proposes an efficient computational model of face and object recognition that draws on cues from the brain's distributed face and object recognition mechanism and on engineering equivalents of these cues gathered from the existing literature. Three distinct and widely used features extracted from target images, Histogram of Oriented Gradients, Local Binary Patterns, and Principal Components, are used in a manner that is simple yet effective. Our model uses multi-layer perceptrons (MLPs) to classify these three features and fuses them at the decision level using the sum rule. A computational theory is first developed using concepts from the information processing mechanism of the brain. Extensive experiments are carried out on fifteen publicly available datasets to validate the performance of the proposed model in recognizing faces and objects under extreme variation in illumination, pose angle, expression, and background. The results are extremely promising when compared with other face and object recognition algorithms, including CNN and deep learning based methods. This highlights that simple computational processes, if combined properly, can achieve performance competitive with the best algorithms.
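Decision-level fusion with the sum rule, as mentioned in this abstract, can be illustrated with a minimal sketch. It assumes each of the three classifiers outputs one score per class (for example, softmax probabilities) and that the fused decision is the class with the largest summed score. The function name and the score values below are hypothetical and are not taken from the paper.

```python
from typing import List, Sequence, Tuple


def sum_rule_fusion(
    scores_per_classifier: Sequence[Sequence[float]],
) -> Tuple[int, List[float]]:
    """Sum per-class scores across classifiers; return (winning class, fused scores)."""
    if not scores_per_classifier:
        raise ValueError("need at least one classifier output")
    n_classes = len(scores_per_classifier[0])
    if any(len(s) != n_classes for s in scores_per_classifier):
        raise ValueError("all classifiers must score the same set of classes")
    fused = [sum(s[c] for s in scores_per_classifier) for c in range(n_classes)]
    best = max(range(n_classes), key=fused.__getitem__)
    return best, fused


# Hypothetical per-class scores from the three feature-specific MLPs.
hog_scores = [0.6, 0.3, 0.1]
lbp_scores = [0.2, 0.5, 0.3]
pca_scores = [0.1, 0.6, 0.3]

predicted_class, fused_scores = sum_rule_fusion([hog_scores, lbp_scores, pca_scores])
```

In this toy case the HOG-based classifier alone would pick class 0, but the summed evidence from all three classifiers favours class 1.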
