Peter Fasogbon

Camera-Agnostic Pruning of 3D Gaussian Splats via Descriptor-Based Beta Evidence

Mar 23, 2026

Abstract: The pruning of 3D Gaussian splats is essential for reducing their complexity to enable efficient storage, transmission, and downstream processing. However, most of the existing pruning strategies depend on camera parameters, rendered images, or view-dependent measures. This dependency becomes a hindrance in emerging camera-agnostic exchange settings, where splats are shared directly as point-based representations (e.g., .ply). In this paper, we propose a camera-agnostic, one-shot, post-training pruning method for 3D Gaussian splats that relies solely on attribute-derived neighbourhood descriptors. As our primary contribution, we introduce a hybrid descriptor framework that captures structural and appearance consistency directly from the splat representation. Building on these descriptors, we formulate pruning as a statistical evidence estimation problem and introduce a Beta evidence model that quantifies per-splat reliability through a probabilistic confidence score. Experiments conducted on standardized test sequences defined by the ISO/IEC MPEG Common Test Conditions (CTC) demonstrate that our approach achieves substantial pruning while preserving reconstruction quality, establishing a practical and generalizable alternative to existing camera-dependent pruning strategies.

Calibration of fisheye camera using entrance pupil

Jul 03, 2019

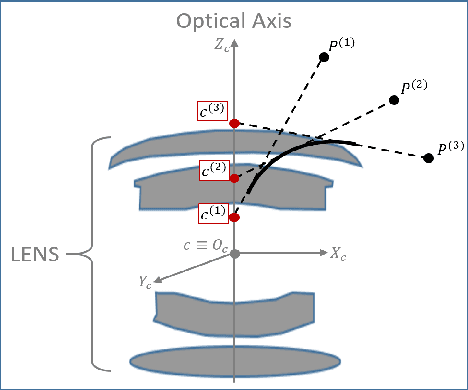

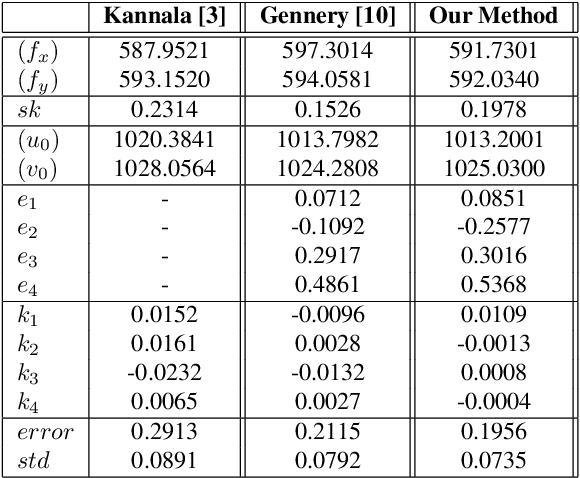

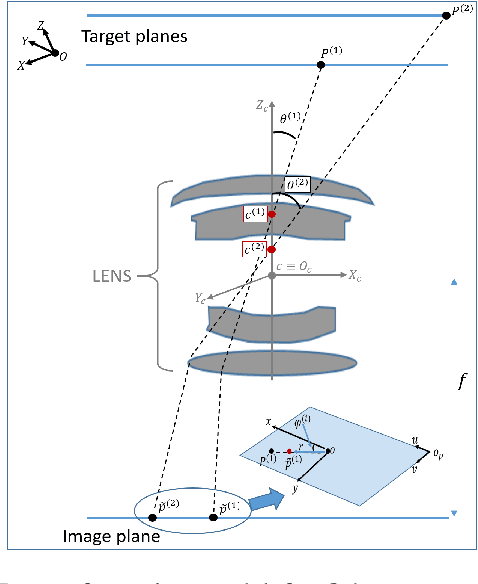

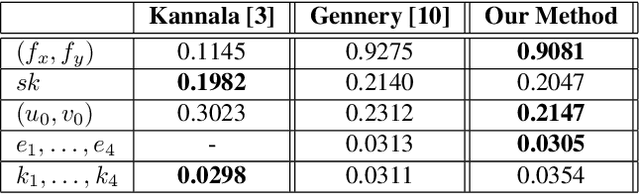

Abstract: Most conventional camera calibration algorithms assume that the imaging device has a Single Viewpoint (SVP). This is not necessarily true for special imaging devices such as fisheye lenses. As a consequence, the intrinsic camera calibration result is not always reliable. In this paper, we propose a new formation model that relaxes this assumption so that a Non-Single Viewpoint (NSVP) system is corrected to always maintain an SVP, by taking into account the variation of the Entrance Pupil (EP) using thin lens modeling. In addition, we present a calibration procedure for the image formation model that estimates these EP parameters using a nonlinear optimization procedure with bundle adjustment. In experiments, we obtain slightly better re-projection error than traditional methods, and the camera parameters are better estimated. The proposed calibration procedure is simple and can easily be integrated into any other thin lens image formation model.
