Patrick Hohenecker

Controlling Text Edition by Changing Answers of Specific Questions

May 23, 2021

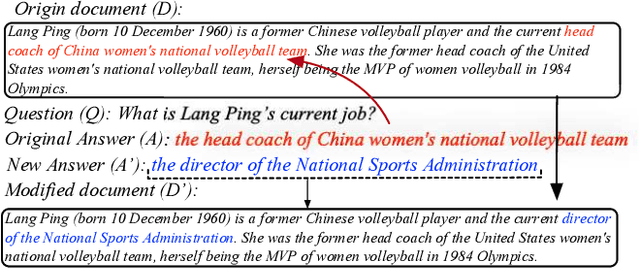

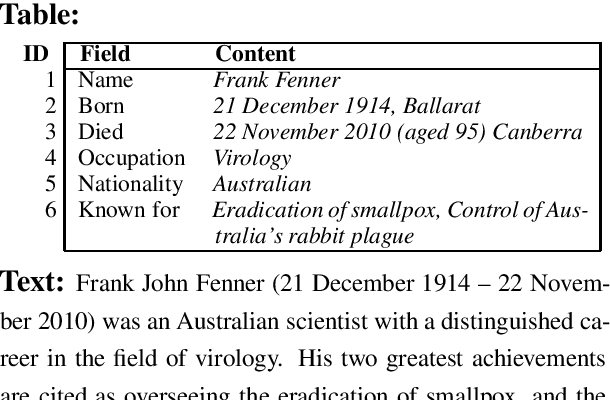

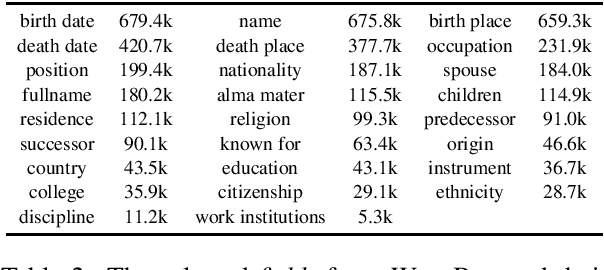

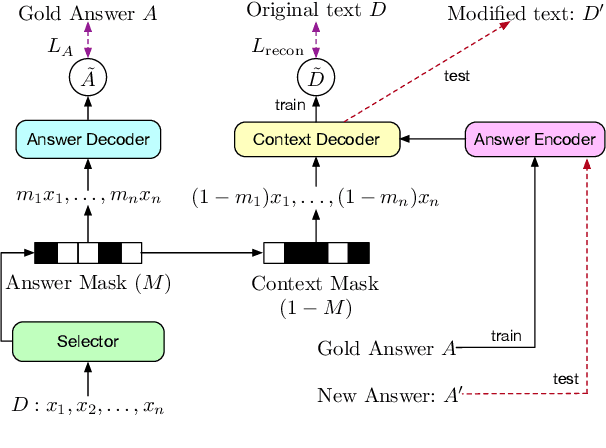

Abstract: In this paper, we introduce the new task of controllable text edition, in which we take as input a long text, a question, and a target answer, and the output is a minimally modified text, so that it fits the target answer. This task is very important in many situations, such as changing some conditions, consequences, or properties in a legal document, or changing some key information of an event in a news text. This is very challenging, as it is hard to obtain a parallel corpus for training, and we need to first find all text positions that should be changed and then decide how to change them. We constructed the new dataset WikiBioCTE for this task based on the existing dataset WikiBio (originally created for table-to-text generation). We use WikiBioCTE for training, and manually labeled a test set for testing. We also propose novel evaluation metrics and a novel method for solving the new task. Experimental results on the test set show that our proposed method is a good fit for this novel NLP task.

Ontology Reasoning with Deep Neural Networks

Sep 04, 2018

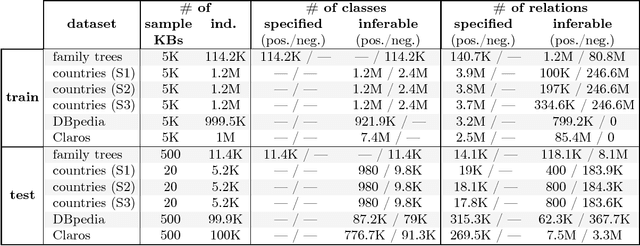

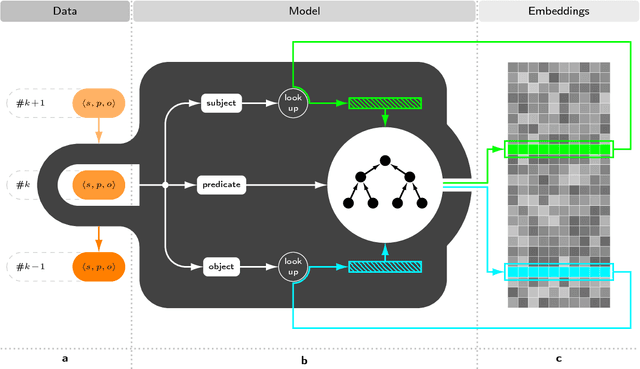

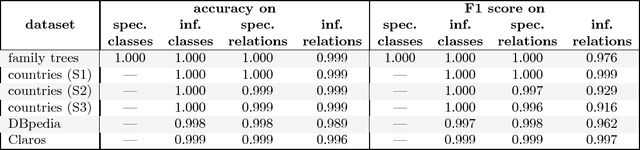

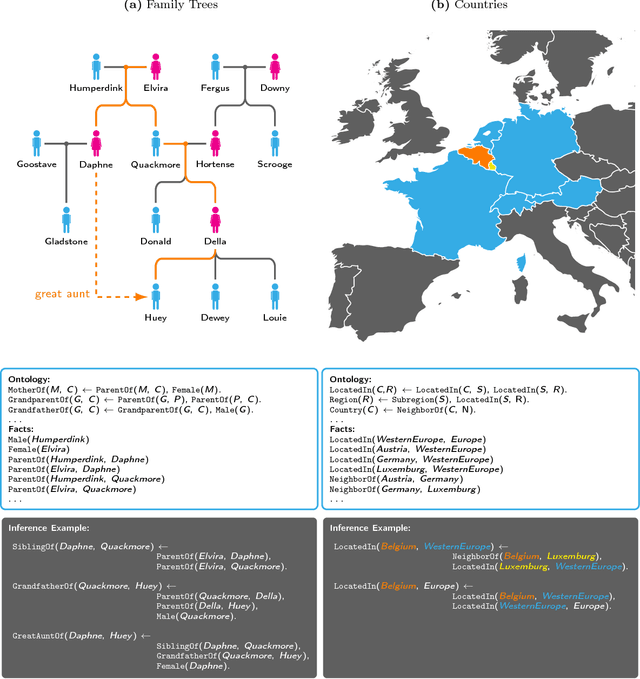

Abstract: The ability to conduct logical reasoning is a fundamental aspect of intelligent behavior, and thus an important problem along the way to human-level artificial intelligence. Traditionally, symbolic methods from the field of knowledge representation and reasoning have been used to equip agents with capabilities that resemble human logical reasoning qualities. More recently, however, there has been an increasing interest in using machine learning rather than logic-based formalisms to tackle these tasks. In this paper, we employ state-of-the-art methods for training deep neural networks to devise a novel model that is able to learn how to effectively perform basic ontology reasoning. This is an important and at the same time very natural reasoning problem, which is why the presented approach is applicable to a plethora of important real-world problems. We present the outcomes of several experiments, which show that our model learned to perform precise reasoning on diverse and challenging tasks. Furthermore, it turned out that the suggested approach suffers much less from different obstacles that prohibit symbolic reasoning, and, at the same time, is surprisingly plausible from a biological point of view.

Deep Learning for Ontology Reasoning

May 29, 2017

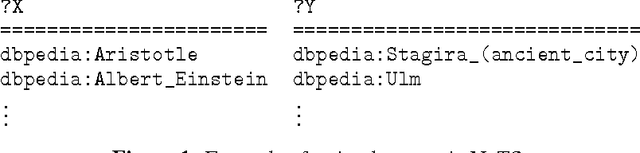

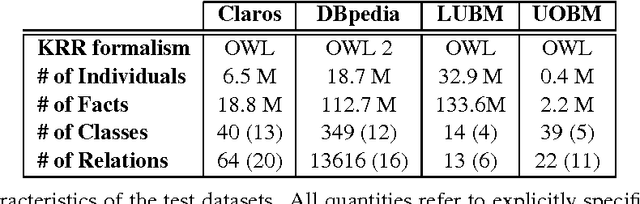

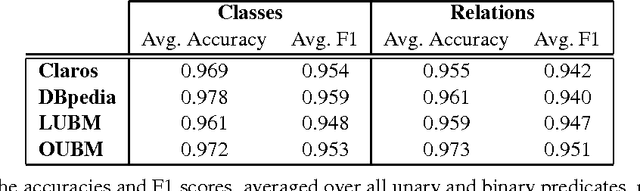

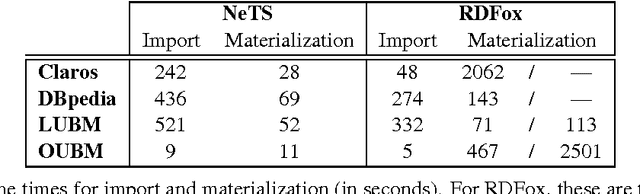

Abstract: In this work, we present a novel approach to ontology reasoning that is based on deep learning rather than logic-based formal reasoning. To this end, we introduce a new model for statistical relational learning that is built upon deep recursive neural networks, and give experimental evidence that it can easily compete with, or even outperform, existing logic-based reasoners on the task of ontology reasoning. More precisely, we compared our implemented system with one of the best logic-based ontology reasoners at present, RDFox, on a number of large standard benchmark datasets, and found that our system attained high reasoning quality, while being up to two orders of magnitude faster.
