Osman Erman Okman

EdgeSpot: Efficient and High-Performance Few-Shot Model for Keyword Spotting

Jan 22, 2026Abstract:We introduce an efficient few-shot keyword spotting model for edge devices, EdgeSpot, that pairs an optimized version of a BC-ResNet-based acoustic backbone with a trainable Per-Channel Energy Normalization frontend and lightweight temporal self-attention. Knowledge distillation is utilized during training by employing a self-supervised teacher model, optimized with Sub-center ArcFace loss. This study demonstrates that the EdgeSpot model consistently provides better accuracy at a fixed false-alarm rate (FAR) than strong BC-ResNet baselines. The largest variant, EdgeSpot-4, improves the 10-shot accuracy at 1% FAR from 73.7% to 82.0%, which requires only 29.4M MACs with 128k parameters.

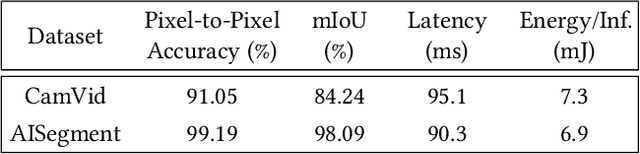

L^3U-net: Low-Latency Lightweight U-net Based Image Segmentation Model for Parallel CNN Processors

Mar 30, 2022

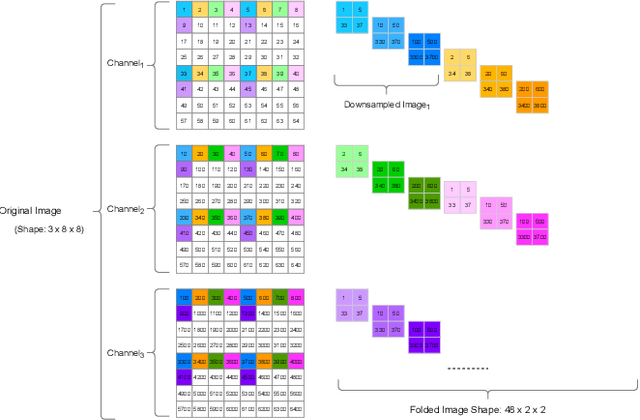

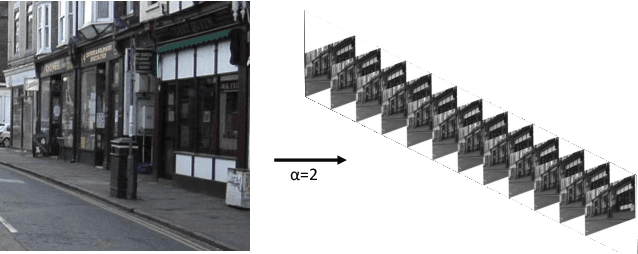

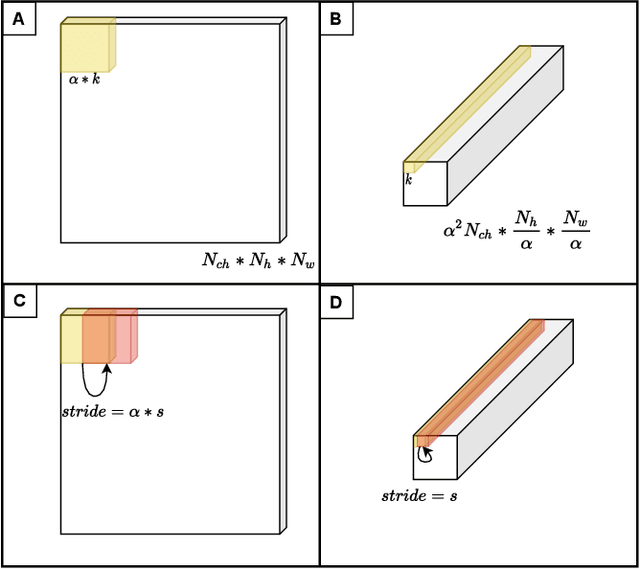

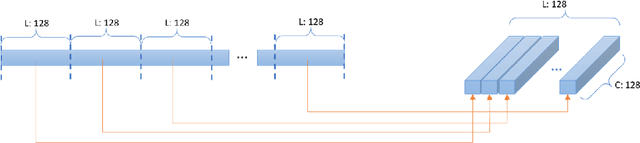

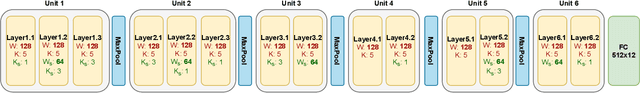

Abstract:In this research, we propose a tiny image segmentation model, L^3U-net, that works on low-resource edge devices in real-time. We introduce a data folding technique that reduces inference latency by leveraging the parallel convolutional layer processing capability of the CNN accelerators. We also deploy the proposed model to such a device, MAX78000, and the results show that L^3U-net achieves more than 90% accuracy over two different segmentation datasets with 10 fps.

Ultra-Low Power Keyword Spotting at the Edge

Nov 09, 2021

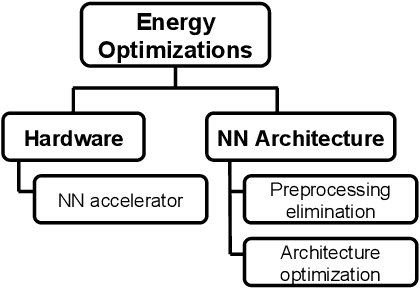

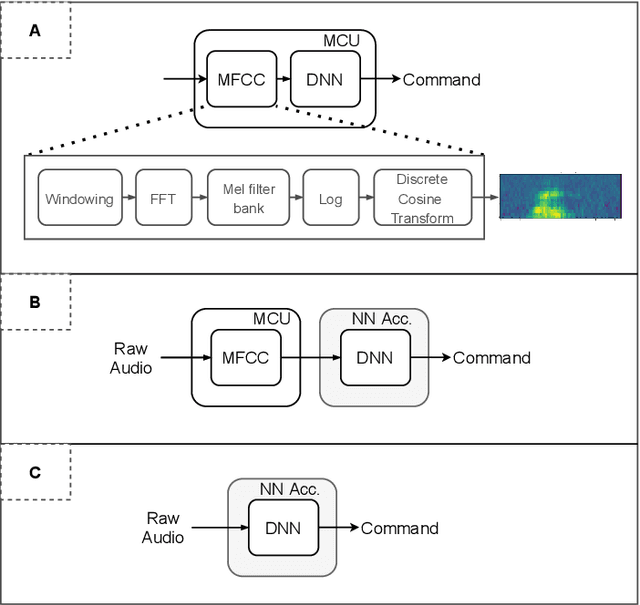

Abstract:Keyword spotting (KWS) has become an indispensable part of many intelligent devices surrounding us, as audio is one of the most efficient ways of interacting with these devices. The accuracy and performance of KWS solutions have been the main focus of the researchers, and thanks to deep learning, substantial progress has been made in this domain. However, as the use of KWS spreads into IoT devices, energy efficiency becomes a very critical requirement besides the performance. We believe KWS solutions that would seek power optimization both in the hardware and the neural network (NN) model architecture are advantageous over many solutions in the literature where mostly the architecture side of the problem is considered. In this work, we designed an optimized KWS CNN model by considering end-to-end energy efficiency for the deployment at MAX78000, an ultra-low-power CNN accelerator. With the combined hardware and model optimization approach, we achieve 96.3\% accuracy for 12 classes while only consuming 251 uJ per inference. We compare our results with other small-footprint neural network-based KWS solutions in the literature. Additionally, we share the energy consumption of our model in power-optimized ARM Cortex-M4F to depict the effectiveness of the chosen hardware for the sake of clarity.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge