O. Michailovich

Optimization of Structural Similarity in Mathematical Imaging

Feb 07, 2020

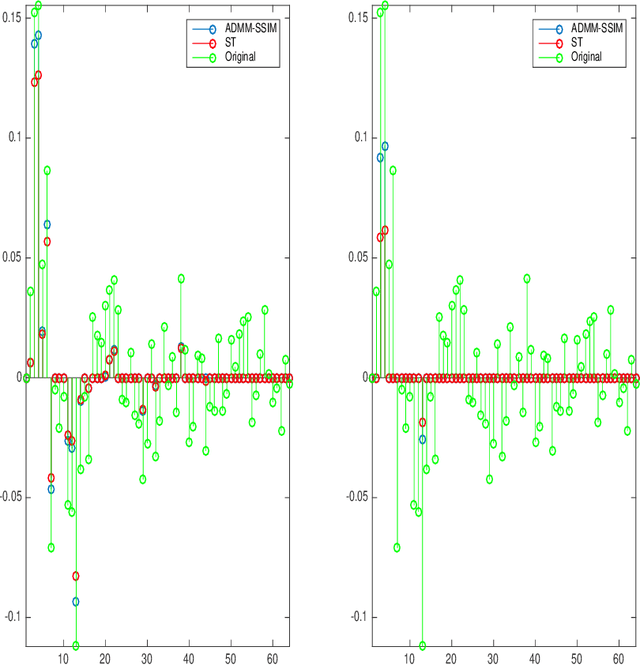

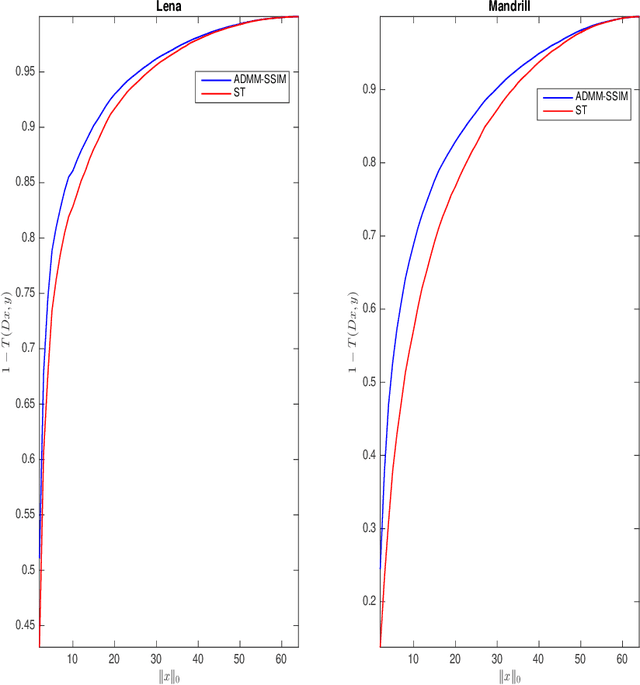

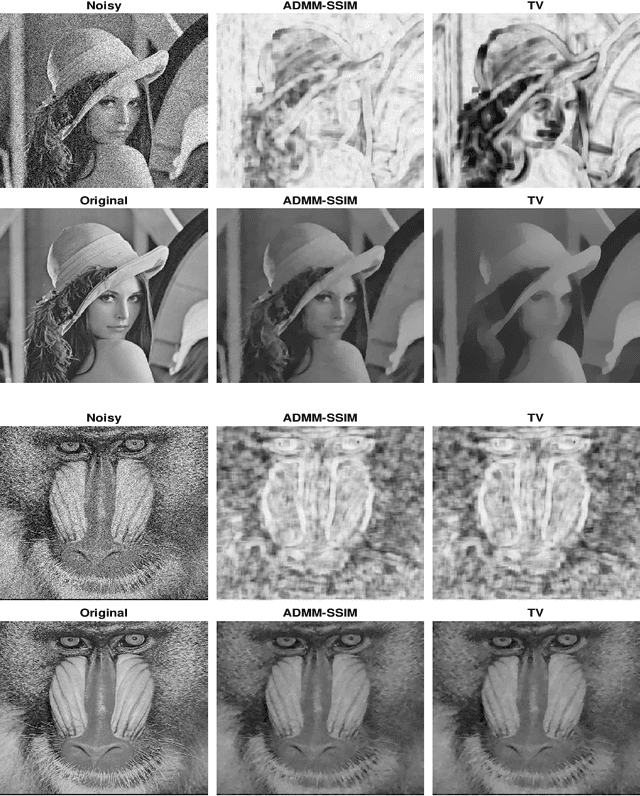

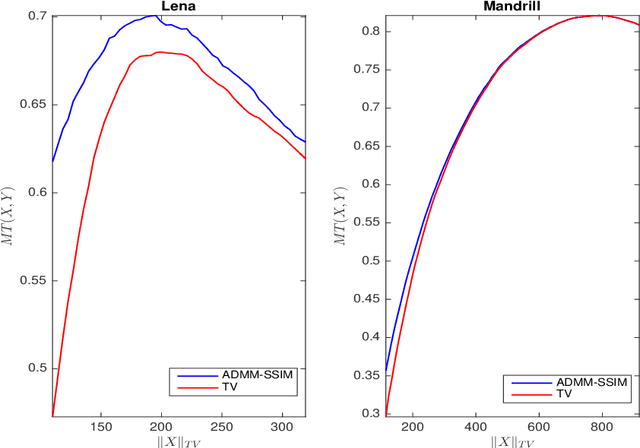

Abstract: It is now generally accepted that Euclidean-based metrics may not always adequately represent the subjective judgement of a human observer. As a result, many image processing methodologies have recently been extended to take advantage of alternative visual quality measures, the most prominent of which is the Structural Similarity Index Measure (SSIM). The superiority of the latter over Euclidean-based metrics has been demonstrated in several studies. However, being focused on specific applications, the findings of such studies often lack generality which, had it been present, could have provided useful guidance for further development of SSIM-based image processing algorithms. Accordingly, instead of focusing on a particular image processing task, in this paper we introduce a general framework that encompasses a wide range of imaging applications in which the SSIM can be employed as a fidelity measure. Subsequently, we show how the framework can be used to cast some standard as well as original imaging tasks into optimization problems, followed by a discussion of a number of novel numerical strategies for their solution.
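For readers unfamiliar with the measure named in the abstract, the following is a minimal sketch of the commonly used SSIM formula evaluated over a single window. It is not taken from the paper: the function name `ssim_global`, the use of one global window instead of a sliding one, and the default constants (0.01 and 0.03 times the dynamic range, squared) are assumptions made for illustration.

```python
from statistics import fmean


def ssim_global(x, y, dynamic_range=255.0):
    """Single-window SSIM between two equal-length sequences of pixel values.

    Hypothetical helper for illustration; practical SSIM implementations
    usually average this quantity over many local windows.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")

    # Small stabilising constants (conventional default choices, assumed here).
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2

    n = len(x)
    mu_x, mu_y = fmean(x), fmean(y)
    # Unbiased sample variances and covariance.
    var_x = sum((a - mu_x) ** 2 for a in x) / (n - 1)
    var_y = sum((b - mu_y) ** 2 for b in y) / (n - 1)
    cov_xy = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / (n - 1)

    luminance = (2 * mu_x * mu_y + c1) / (mu_x ** 2 + mu_y ** 2 + c1)
    contrast_structure = (2 * cov_xy + c2) / (var_x + var_y + c2)
    return luminance * contrast_structure
```

In this sketch, a uniform brightness shift changes only the luminance factor, whereas reversing the order of the pixels changes the covariance term, so the two distortions are scored very differently even though both are plain pixel-wise differences from a Euclidean point of view.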

Spatially regularized reconstruction of fibre orientation distributions in the presence of isotropic diffusion

Jan 23, 2014

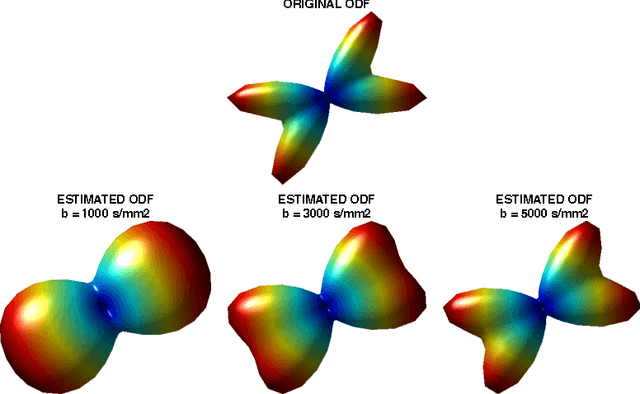

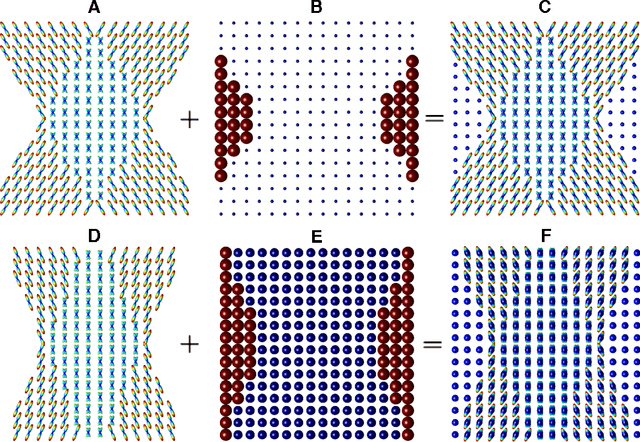

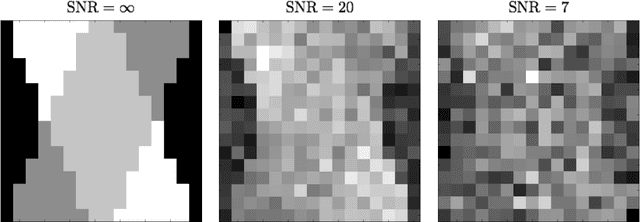

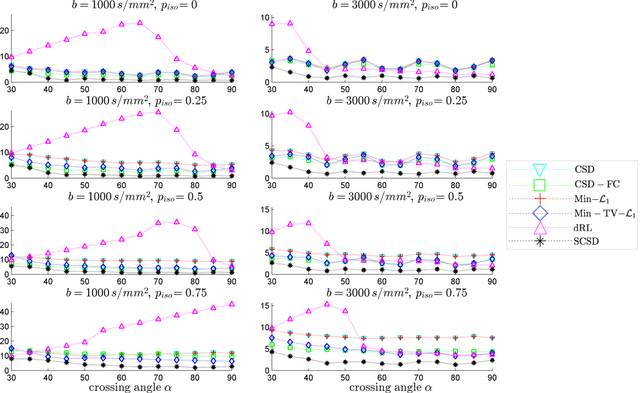

Abstract: The connectivity and structural integrity of the white matter of the brain are now known to be implicated in a wide range of brain-related disorders. However, it was not until the advent of diffusion Magnetic Resonance Imaging (dMRI) that researchers were able to examine the properties of white matter in vivo. Presently, among the various methods of dMRI, high angular resolution diffusion imaging (HARDI) is known to excel in its ability to provide reliable information about the local orientations of neural fasciculi (aka fibre tracts). Moreover, as opposed to the more traditional diffusion tensor imaging (DTI), HARDI is capable of distinguishing the orientations of multiple fibres passing through a given spatial voxel. Unfortunately, the ability of HARDI to discriminate between neural fibres that cross each other at acute angles is always limited, which is the main reason behind the development of numerous post-processing tools aimed at improving the directional resolution of HARDI. Among such tools is spherical deconvolution (SD). Due to its ill-posed nature, however, SD typically relies on a number of a priori assumptions which are meant to render its results unique and stable. In this paper, we propose a different approach to the problem of SD in HARDI, which accounts for the spatial continuity of neural fibres as well as the presence of isotropic diffusion. Subsequently, we demonstrate how the proposed solution can be used to successfully overcome the effect of partial voluming, while preserving the spatial coherency of cerebral diffusion at moderate-to-severe noise levels. In a series of both in silico and in vivo experiments, the performance of the proposed method is compared with that of several available alternatives, with the comparative results clearly supporting the viability and usefulness of our approach.

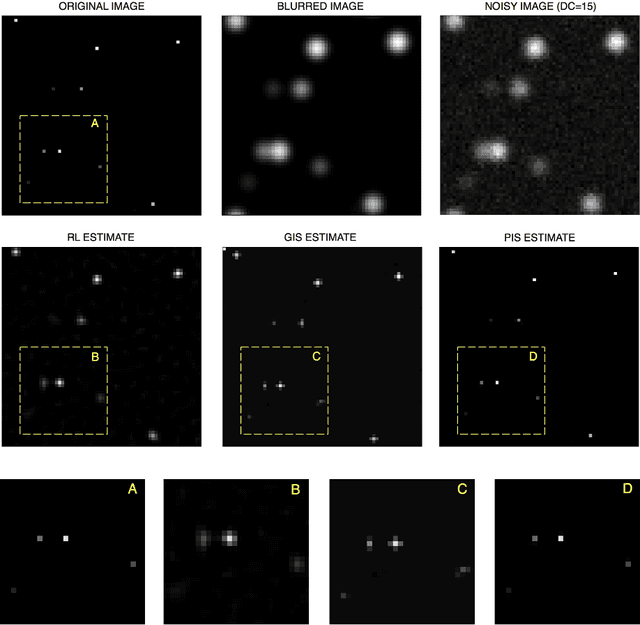

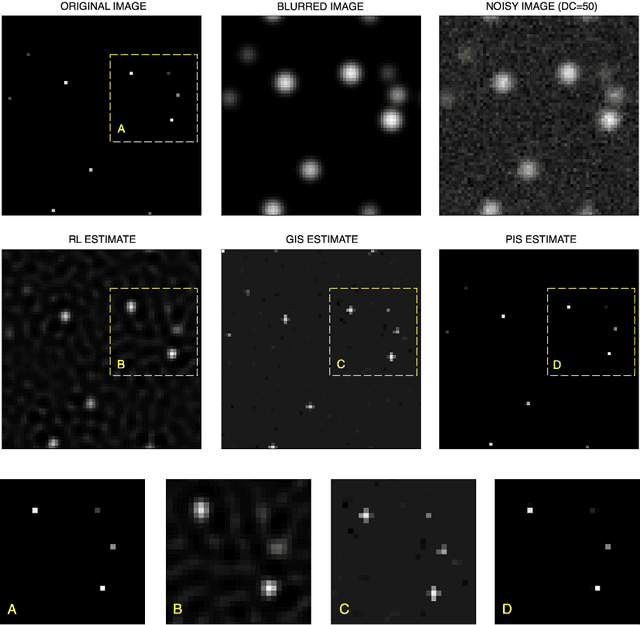

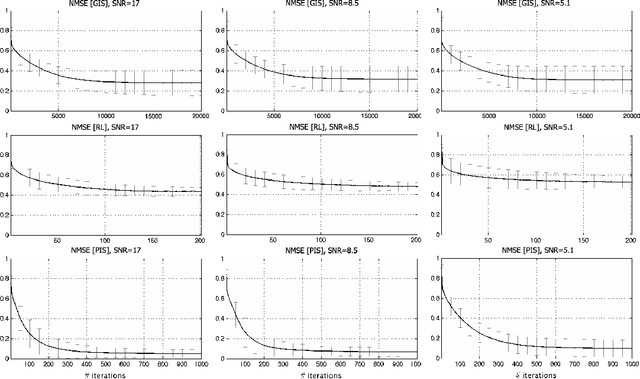

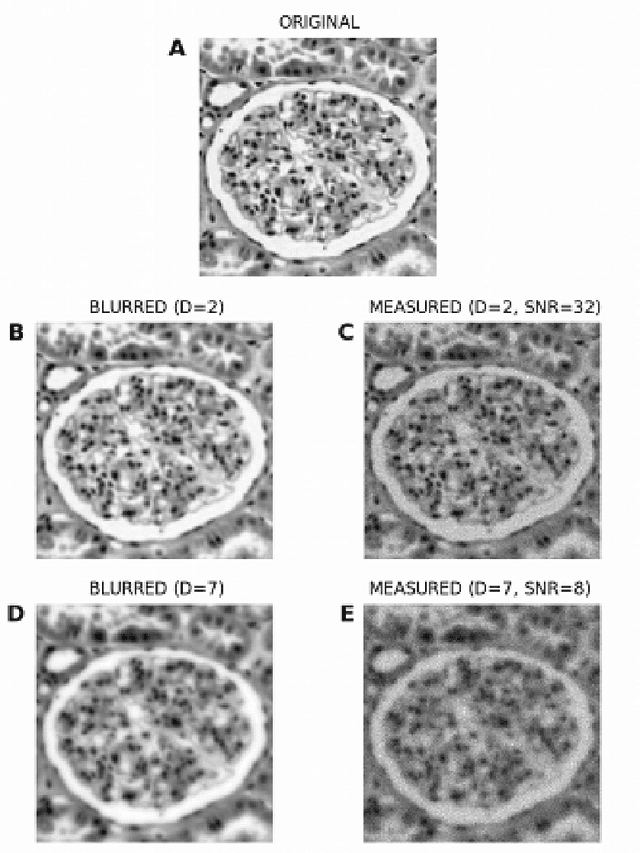

Iterative Shrinkage Approach to Restoration of Optical Imagery

Jan 06, 2010

Abstract: The problem of reconstruction of digital images from their degraded measurements is regarded as a problem of central importance in various fields of engineering and imaging sciences. In such cases, the degradation is typically caused by the resolution limitations of the imaging device in use and/or by the destructive influence of measurement noise. Specifically, when the noise obeys a Poisson probability law, standard approaches to the problem of image reconstruction are based on fixed-point algorithms which follow the methodology first proposed by Richardson and Lucy. Practical experience with these methods, however, shows that their convergence properties tend to deteriorate at relatively high noise levels. Accordingly, in the present paper, a novel method for de-noising and/or de-blurring of digital images corrupted by Poisson noise is introduced. The proposed method is derived under the assumption that the image of interest can be sparsely represented in the domain of a linear transform. Consequently, a shrinkage-based iterative procedure is proposed, which is guaranteed to converge to the global maximizer of an associated maximum-a-posteriori criterion. It is shown in a series of both computer-simulated and real-life experiments that the proposed method outperforms a number of existing alternatives in terms of stability, precision, and computational efficiency.
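As background for the baseline mentioned in the abstract, the following is a minimal one-dimensional sketch of the classical Richardson-Lucy fixed-point iteration. It is not the shrinkage-based method proposed in the paper. The function names, the circular boundary handling, the flat starting estimate, and the requirement of an odd-length kernel that sums to one are all assumptions made for illustration.

```python
def circ_conv(signal, kernel):
    """Circular convolution with an odd-length kernel centred at len(kernel) // 2."""
    n, half = len(signal), len(kernel) // 2
    return [
        sum(kernel[j] * signal[(i - j + half) % n] for j in range(len(kernel)))
        for i in range(n)
    ]


def richardson_lucy(observed, kernel, iterations=50, eps=1e-12):
    """Classical Richardson-Lucy deblurring of a non-negative 1-D signal.

    Assumes (for this sketch only) an odd-length kernel that sums to 1
    and periodic boundaries.
    """
    flipped = kernel[::-1]  # convolving with the flipped kernel applies the adjoint blur
    # Start from a flat, positive estimate with the same mean as the data.
    estimate = [sum(observed) / len(observed)] * len(observed)
    for _ in range(iterations):
        blurred = circ_conv(estimate, kernel)
        # Ratio of the data to the current prediction; eps guards against division by zero.
        ratio = [o / max(b, eps) for o, b in zip(observed, blurred)]
        correction = circ_conv(ratio, flipped)
        # Multiplicative update keeps the estimate non-negative.
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate
```

Because the update is multiplicative, the estimate stays non-negative, and with a normalised kernel the total intensity of the estimate matches that of the observed data after each iteration.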
