Norman Knyazev

A Lightweight Method for Modeling Confidence in Recommendations with Learned Beta Distributions

Aug 06, 2023

Abstract: Most Recommender Systems (RecSys) do not provide an indication of confidence in their decisions. Therefore, they do not distinguish between recommendations of which they are certain and those of which they are not. Existing confidence methods for RecSys are either inaccurate heuristics, conceptually complex, or computationally very expensive. Consequently, real-world RecSys applications rarely adopt these methods and thus provide no confidence insights into their behavior. In this work, we propose learned beta distributions (LBD) as a simple and practical recommendation method with an explicit measure of confidence. Our main insight is that beta distributions predict user preferences as probability distributions that naturally model confidence on a closed interval, yet can be implemented with minimal model complexity. Our results show that LBD maintains accuracy competitive with existing methods while also having a significantly stronger correlation between its accuracy and confidence. Furthermore, LBD has higher performance when applied to a high-precision targeted recommendation task. Our work thus shows that confidence in RecSys is possible without sacrificing simplicity or accuracy, and without introducing heavy computational complexity. Thereby, we hope it enables better insight into real-world RecSys and opens the door for novel future applications.

The Bandwagon Effect: Not Just Another Bias

Jul 01, 2022

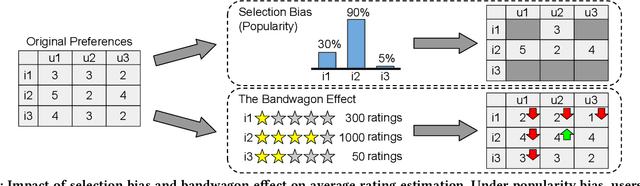

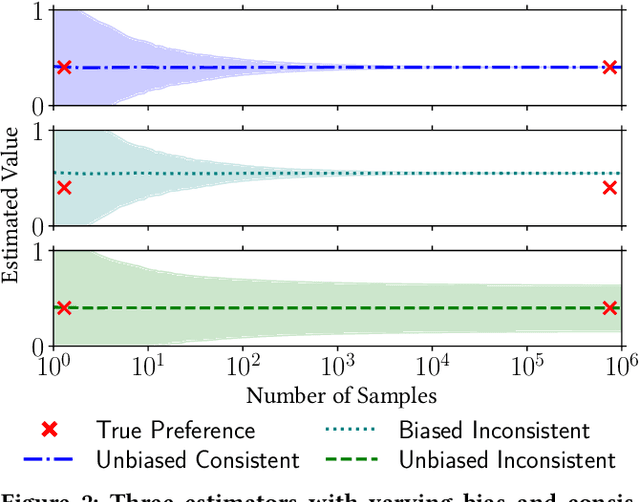

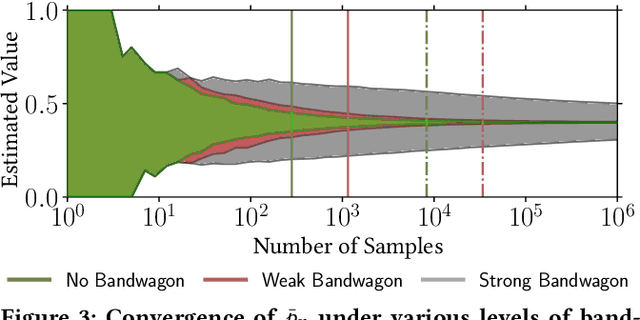

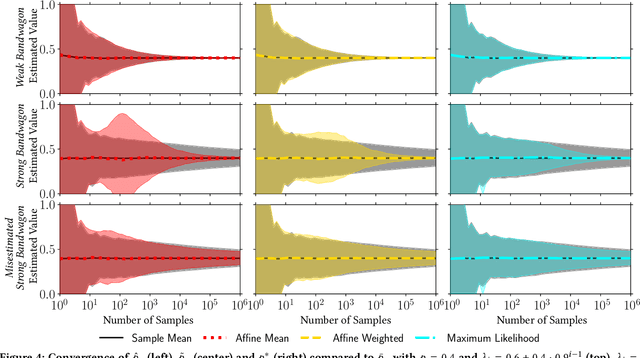

Abstract: Optimizing recommender systems based on user interaction data is mainly seen as a problem of dealing with selection bias, where most existing work assumes that interactions from different users are independent. However, it has been shown that in reality user feedback is often influenced by earlier interactions of other users, e.g., via average ratings, number of views, or sales per item. This phenomenon is known as the bandwagon effect. In contrast with previous literature, we argue that the bandwagon effect should not be seen as a problem of statistical bias. In fact, we prove that this effect leaves both individual interactions and their sample mean unbiased. Nevertheless, we show that it can make estimators inconsistent, introducing a distinct set of problems for convergence in relevance estimation. Our theoretical analysis investigates the conditions under which the bandwagon effect poses a consistency problem and explores several approaches for mitigating these issues. This work aims to show that the bandwagon effect poses an underinvestigated open problem that is fundamentally distinct from the well-studied selection bias in recommendation.
