Ning Qian

Rethinking the Need for Source Models: Source-Free Domain Adaptation from Scratch Guided by a Vision-Language Model

May 04, 2026

Abstract:Source-Free Domain Adaptation (SFDA) adapts source models to target domains without accessing source data, addressing privacy and transmission issues. However, existing methods still initialize from a source pre-trained model and thus are not truly source-free. Recent works have introduced Vision-Language (ViL) models to guide the adaptation process. In these methods, we observe that, for the same target domain, different source models yield minimal variation in final results, indicating that the source model itself has limited impact. Motivated by this, we propose ViL-Only Domain Adaptation (VODA), a stricter setting that eliminates all dependencies on the source domain, relying solely on a randomly initialized model, a ViL model, and unlabeled target data. We analyze the adaptation dynamics of VODA and introduce Two-Stage Denoised-Region Distillation (TS-DRD), a two-stage framework that first warms up the model with ViL guidance, then seeks a Denoised-Region inherent in both the ViL and the adapting model, yielding cleaner supervision for distillation. Experiments on Office-Home, VisDA, and DomainNet-126 show that under VODA, TS-DRD achieves competitive or superior performance to existing SFDA methods that still use source models, demonstrating its effectiveness and the potential of the VODA setting.

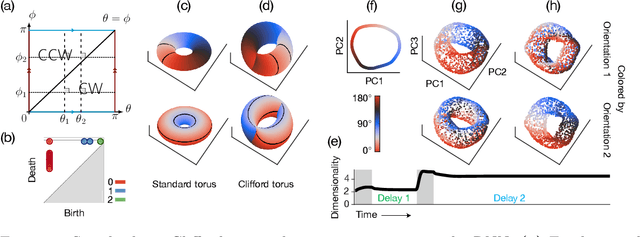

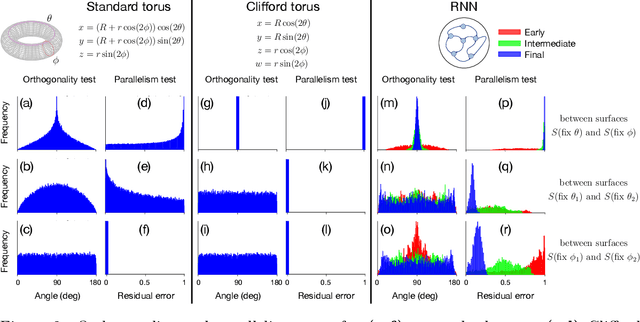

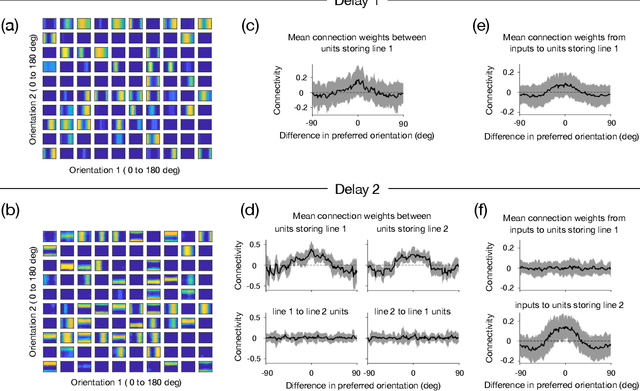

Recurrent neural network models for working memory of continuous variables: activity manifolds, connectivity patterns, and dynamic codes

Nov 01, 2021

Abstract:Many daily activities and psychophysical experiments involve keeping multiple items in working memory. When items take continuous values (e.g., orientation, contrast, length, loudness) they must be stored in a continuous structure of appropriate dimensions. We investigate how this structure is represented in neural circuits by training recurrent networks to report two previously shown stimulus orientations. We find the activity manifold for the two orientations resembles a Clifford torus. Although a Clifford and standard torus (the surface of a donut) are topologically equivalent, they have important functional differences. A Clifford torus treats the two orientations equally and keeps them in orthogonal subspaces, as demanded by the task, whereas a standard torus does not. We find and characterize the connectivity patterns that support the Clifford torus. Moreover, in addition to attractors that store information via persistent activity, our networks also use a dynamic code where units change their tuning to prevent new sensory input from overwriting the previously stored one. We argue that such dynamic codes are generally required whenever multiple inputs enter a memory system via shared connections. Finally, we apply our framework to a human psychophysics experiment in which subjects reported two remembered orientations. By varying the training conditions of the RNNs, we test and support the hypothesis that human behavior is a product of both neural noise and reliance on the more stable and behaviorally relevant memory of the ordinal relationship between the two orientations. This suggests that suitable inductive biases in RNNs are important for uncovering how the human brain implements working memory. Together, these results offer an understanding of the neural computations underlying a class of visual decoding tasks, bridging the scales from human behavior to synaptic connectivity.
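The contrast between the two tori can be made concrete with a small numerical sketch. This is not the paper's code: the function names, the radii `R` and `r`, and the loop-length measure are all illustrative assumptions. It uses the textbook embeddings, in which a Clifford torus places each angle on its own circle in a separate 2-D plane of a 4-D space, while a standard torus wraps one angle around a donut in 3-D. The sketch measures the length of the loop traced by one angle while the other is held fixed.

```python
import numpy as np

def clifford_torus(theta1, theta2):
    """Textbook Clifford torus in R^4: each angle gets its own 2-D plane."""
    return np.stack([np.cos(theta1), np.sin(theta1),
                     np.cos(theta2), np.sin(theta2)], axis=-1) / np.sqrt(2)

def standard_torus(theta1, theta2, R=2.0, r=1.0):
    """Textbook donut surface in R^3; R and r are illustrative radii."""
    x = (R + r * np.cos(theta2)) * np.cos(theta1)
    y = (R + r * np.cos(theta2)) * np.sin(theta1)
    z = r * np.sin(theta2)
    return np.stack([x, y, z], axis=-1)

def loop_length(embed, which, fixed, n=2000):
    """Length of the loop traced by varying one angle with the other held at `fixed`."""
    t = np.linspace(0.0, 2.0 * np.pi, n + 1)
    f = np.full_like(t, fixed)
    pts = embed(t, f) if which == 1 else embed(f, t)
    # Sum of straight segments between consecutive sample points.
    return np.linalg.norm(np.diff(pts, axis=0), axis=1).sum()

if __name__ == "__main__":
    for name, embed in [("clifford", clifford_torus), ("standard", standard_torus)]:
        a = loop_length(embed, which=1, fixed=0.0)
        b = loop_length(embed, which=1, fixed=np.pi)
        c = loop_length(embed, which=2, fixed=0.0)
        print(f"{name}: theta1 loop at theta2=0: {a:.3f}, "
              f"at theta2=pi: {b:.3f}, theta2 loop: {c:.3f}")
```

On the Clifford torus, all three loop lengths agree, so neither angle is privileged and the encoding of one does not depend on the value of the other. On the standard torus, the length of the `theta1` loop changes with `theta2`, which is one way to see why it does not treat the two stored variables equally.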
