Nicolás D'Ippolito

Anonymous-by-Construction: An LLM-Driven Framework for Privacy-Preserving Text

Mar 17, 2026

Abstract: Responsible use of AI demands that we protect sensitive information without undermining the usefulness of data, an imperative that has become acute in the age of large language models. We address this challenge with an on-premise, LLM-driven substitution pipeline that anonymizes text by replacing personally identifiable information (PII) with realistic, type-consistent surrogates. Executed entirely within organizational boundaries using local LLMs, the approach prevents data egress while preserving fluency and task-relevant semantics. We conduct a systematic, multi-metric, cross-technique evaluation on the Action-Based Conversation Dataset, benchmarking against industry standards (Microsoft Presidio and Google DLP) and a state-of-the-art approach (ZSTS, in redaction-only and redaction-plus-substitution variants). Our protocol jointly measures privacy, semantic utility, and trainability under privacy via a lifecycle-ready criterion obtained by fine-tuning a compact encoder (BERT+LoRA) on sanitized text. In addition, we assess agentic Q&A performance by inserting an on-premise anonymization layer before the answering LLM and evaluating the quality of its responses. This intermediate, type-preserving substitution stage ensures that no sensitive content is exposed to third-party APIs, enabling responsible deployment of Q&A agents without compromising confidentiality. Our method attains state-of-the-art privacy, minimal topical drift, strong factual utility, and low trainability loss, outperforming rule-based approaches, named-entity recognition (NER) baselines, and ZSTS variants on the combined privacy-utility-trainability frontier. These results show that local LLM substitution yields anonymized corpora that are both responsible to use and operationally valuable: safe for agentic pipelines and suitable for downstream fine-tuning with limited degradation.

Multi-tier Automated Planning for Adaptive Behavior (Extended Version)

Feb 27, 2020

Abstract: A planning domain, like any model, is never complete and inevitably makes assumptions about the environment's dynamics. By allowing the specification of just one domain model, the knowledge engineer is only able to make one set of assumptions and to specify a single objective-goal. Borrowing from work in Software Engineering, we propose a multi-tier framework for planning that allows the specification of different sets of assumptions and of different corresponding objectives. The framework aims to support the synthesis of adaptive behavior so as to mitigate the intrinsic risk in any planning modeling task. After defining the multi-tier planning task and its solution concept, we show how to solve problem instances by a succinct compilation to a form of non-deterministic planning. In doing so, our technique justifies the applicability of planning with both fair and unfair actions, and the need for more effort in developing planning systems supporting dual fairness assumptions.

Technical Report: Directed Controller Synthesis of Discrete Event Systems

May 31, 2016

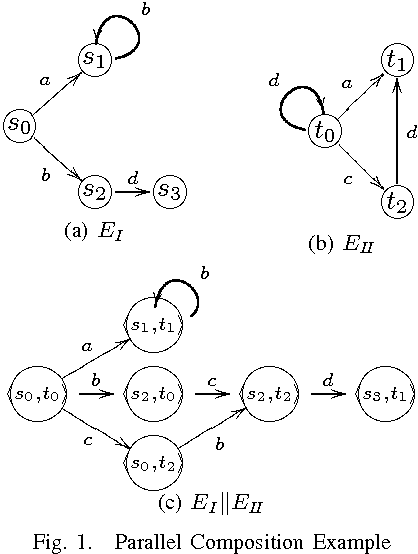

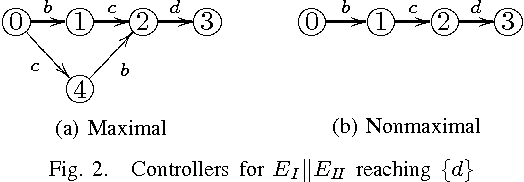

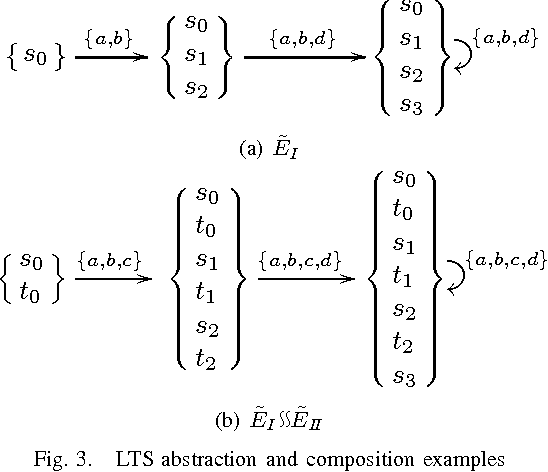

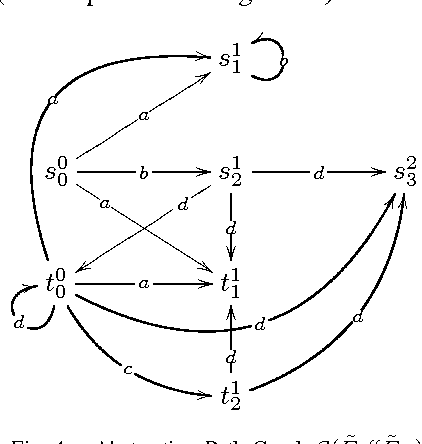

Abstract: This paper presents a Directed Controller Synthesis (DCS) technique for discrete event systems. The DCS method explores the solution space for reactive controllers guided by a domain-independent heuristic. The heuristic is derived from an efficient abstraction of the environment based on the componentized way in which complex environments are described. Then, by building the composition of the components on the fly, DCS obtains a solution by exploring a reduced portion of the state space. This work focuses on untimed discrete event systems with safety and co-safety (i.e., reachability) goals. An evaluation of the technique is presented, comparing it to other well-known approaches to controller synthesis (based on symbolic representation and compositional analyses).
