Natesh Shivakumar

A Deep learning Approach to Generate Contrast-Enhanced Computerised Tomography Angiography without the Use of Intravenous Contrast Agents

Mar 02, 2020

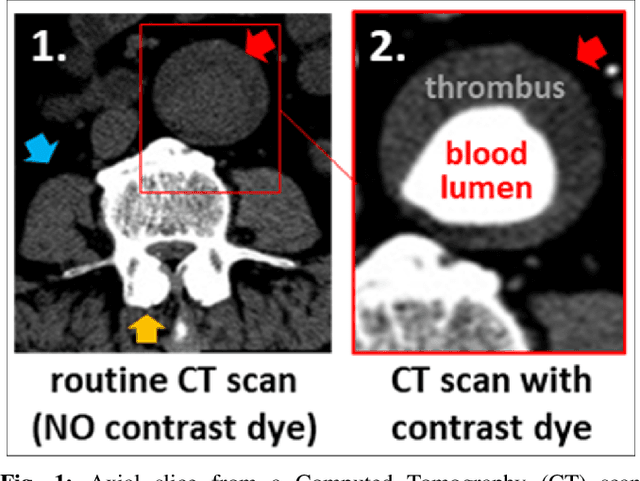

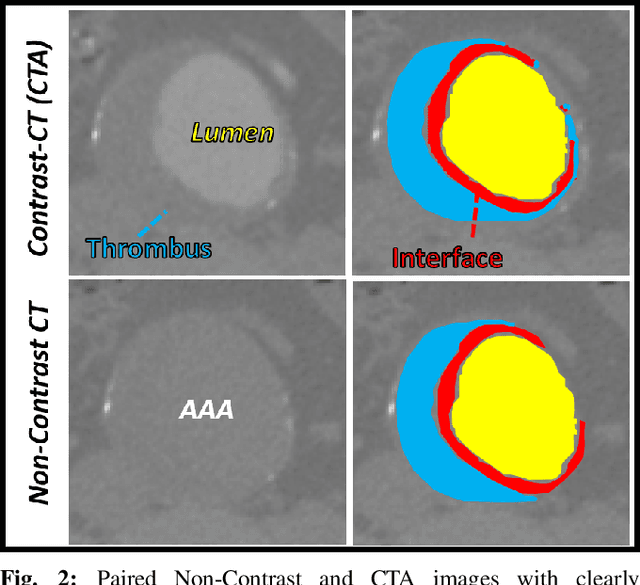

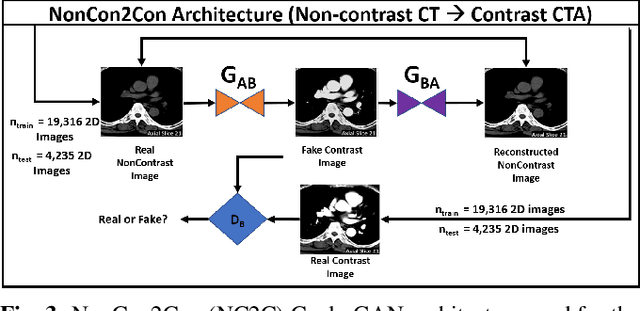

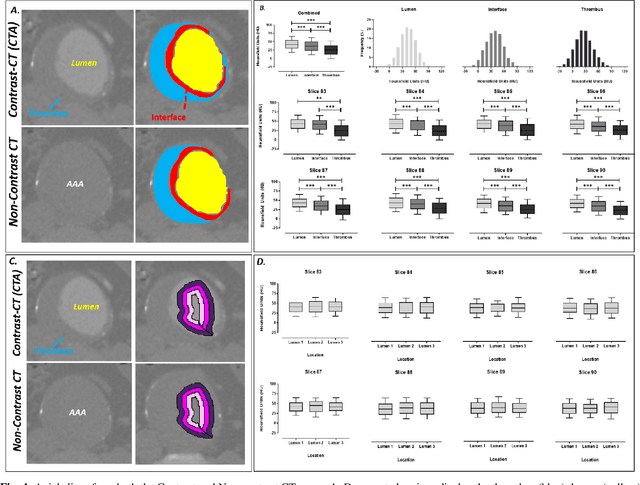

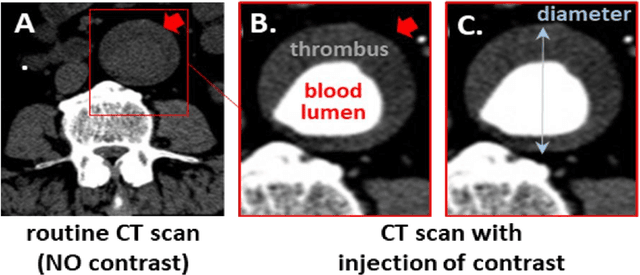

Abstract: Contrast-enhanced computed tomography angiograms (CTAs) are widely used in cardiovascular imaging to obtain a non-invasive view of arterial structures. However, contrast agents are associated with complications at the injection site as well as renal toxicity leading to contrast-induced nephropathy (CIN) and renal failure. We hypothesised that the raw data acquired from a non-contrast CT contains sufficient information to differentiate blood and other soft tissue components. We utilised deep learning methods to define the subtleties between soft tissue components in order to simulate contrast-enhanced CTAs without contrast agents. Twenty-six patients with paired non-contrast and CTA images were randomly selected from an approved clinical study. Non-contrast axial slices within the abdominal aortic aneurysm (AAA) from 10 patients (n = 100) were sampled for the underlying Hounsfield unit (HU) distribution at the lumen, intra-luminal thrombus and interface locations. Sampling of HUs in these regions revealed significant differences between all regions (p < 0.001 for all comparisons), confirming the intrinsic differences in the radiomic signatures between these regions. To generate a large training dataset, paired axial slices from the training set (n = 13) were augmented to produce a total of 23,551 2-D images. We trained a 2-D Cycle Generative Adversarial Network (cycleGAN) for this non-contrast to contrast (NC2C) transformation task. The accuracy of the cycleGAN output was assessed by comparison to the contrast image. This pipeline is able to differentiate between visually incoherent soft tissue regions in non-contrast CT images. The CTAs generated from the non-contrast images bear strong resemblance to the ground truth. Here we describe a novel application of a Generative Adversarial Network for CT image processing. This approach is poised to disrupt clinical pathways requiring contrast-enhanced CT imaging.
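The abstract names a cycleGAN but does not give its loss terms. As a rough illustration only, the sketch below shows the cycle-consistency idea that defines a cycleGAN in general: translating a non-contrast slice to contrast and back should recover the original slice. The generators `toy_g` and `toy_f`, the constant intensity offsets, and the sample pixel values are hypothetical stand-ins, not the authors' networks or data.

```python
from typing import Callable, List

Image = List[List[float]]  # a 2-D slice as nested lists of intensities


def l1_distance(a: Image, b: Image) -> float:
    """Mean absolute difference between two equally sized images."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def cycle_consistency_loss(
    x: Image,
    y: Image,
    g: Callable[[Image], Image],  # maps non-contrast -> contrast
    f: Callable[[Image], Image],  # maps contrast -> non-contrast
) -> float:
    """L1 cycle loss: |F(G(x)) - x| + |G(F(y)) - y|."""
    return l1_distance(f(g(x)), x) + l1_distance(g(f(y)), y)


# Hypothetical toy "generators": constant intensity shifts, not real networks.
def toy_g(img: Image) -> Image:
    return [[p + 100.0 for p in row] for row in img]


def toy_f(img: Image) -> Image:
    return [[p - 100.0 for p in row] for row in img]


def toy_f_biased(img: Image) -> Image:
    # An imperfect inverse of toy_g, off by 10 intensity units.
    return [[p - 90.0 for p in row] for row in img]


# Made-up pixel values for illustration only.
non_contrast = [[40.0, 45.0], [50.0, 55.0]]
contrast = [[300.0, 310.0], [320.0, 330.0]]

perfect = cycle_consistency_loss(non_contrast, contrast, toy_g, toy_f)
imperfect = cycle_consistency_loss(non_contrast, contrast, toy_g, toy_f_biased)
```

When the two mappings invert each other exactly the loss is zero, and it grows as the round trip drifts from the input. In a full cycleGAN this term is combined with adversarial losses from two discriminators, which are omitted here.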

A Deep Learning Approach to Automate High-Resolution Blood Vessel Reconstruction on Computerized Tomography Images With or Without the Use of Contrast Agent

Feb 09, 2020

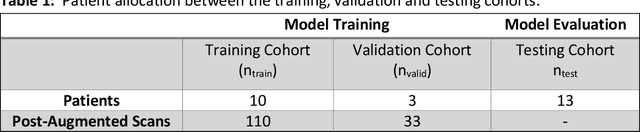

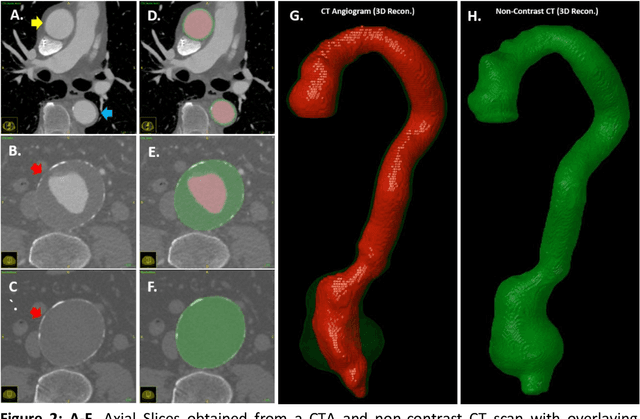

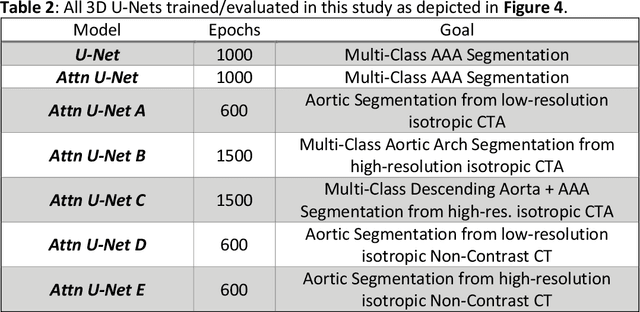

Abstract: Existing methods to reconstruct vascular structures from a computed tomography (CT) angiogram rely on injection of intravenous contrast to enhance the radio-density within the vessel lumen. However, pathological changes can be present in the blood lumen, vessel wall or a combination of both that prevent accurate reconstruction. In the example of aortic aneurysmal disease, a blood clot or thrombus adherent to the aortic wall within the expanding aneurysmal sac is present in 70-80% of cases. These deformations prevent the automatic extraction of vital clinically relevant information by current methods. In this study, we implemented a modified U-Net architecture with attention-gating to establish a high-throughput and automated segmentation pipeline of pathological blood vessels in CT images acquired with or without the use of a contrast agent. Twenty-six patients with paired non-contrast and contrast-enhanced CT images within the ongoing Oxford Abdominal Aortic Aneurysm (OxAAA) study were randomly selected, manually annotated and used for model training and evaluation (13/13). Data augmentation methods were implemented to diversify the training data set in a ratio of 10:1. The performance of our Attention-based U-Net in extracting both the inner lumen and the outer wall of the aortic aneurysm from CT angiograms (CTA) was compared against a generic 3-D U-Net and displayed superior results. Subsequent implementation of this network architecture within the aortic segmentation pipeline from both contrast-enhanced CTA and non-contrast CT images has allowed for accurate and efficient extraction of the entire aortic volume. This extracted volume can be used to standardize current methods of aneurysmal disease management and sets the foundation for subsequent complex geometric and morphological analysis. Furthermore, the proposed pipeline can be extended to other vascular pathologies.
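The abstract does not specify how the attention-gating was modified. As a minimal sketch, the code below shows a generic additive attention gate reduced to a single channel, in which a gating signal from a deeper layer rescales each pixel of a skip connection by a coefficient between 0 and 1. The scalar weights `w_x`, `w_g`, `psi` and the biases are hypothetical placeholders for learned convolution parameters, and the gating signal is assumed to be already resampled to the skip connection's resolution.

```python
import math
from typing import List, Tuple

FeatureMap = List[List[float]]  # single-channel 2-D feature map


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def attention_gate(
    skip: FeatureMap,
    gating: FeatureMap,
    w_x: float,
    w_g: float,
    psi: float,
    bias: float = 0.0,
    psi_bias: float = 0.0,
) -> Tuple[FeatureMap, FeatureMap]:
    """Additive attention gate, per pixel:
    alpha = sigmoid(psi * relu(w_x * x + w_g * g + bias) + psi_bias)
    Returns (alpha * skip, alpha).
    """
    gated: FeatureMap = []
    alphas: FeatureMap = []
    for row_x, row_g in zip(skip, gating):
        alpha_row = []
        for x, g in zip(row_x, row_g):
            hidden = max(0.0, w_x * x + w_g * g + bias)  # ReLU
            alpha_row.append(sigmoid(psi * hidden + psi_bias))
        alphas.append(alpha_row)
        gated.append([a * x for a, x in zip(alpha_row, row_x)])
    return gated, alphas


# Hypothetical values: equal skip features, but only the second pixel
# receives a strong gating signal from the deeper layer.
skip = [[1.0, 1.0]]
gating = [[0.0, 5.0]]
gated, alphas = attention_gate(
    skip, gating, w_x=1.0, w_g=1.0, psi=1.0, psi_bias=-2.0
)
```

Here the pixel with the strong gating signal keeps most of its skip feature, while the other is suppressed. This is the mechanism by which an attention-gated U-Net can focus on a target structure such as the aortic wall.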
