Naoto Mitsume

Graph Neural PDE Solvers with Conservation and Similarity-Equivariance

May 25, 2024

Abstract: Utilizing machine learning to address partial differential equations (PDEs) presents significant challenges due to the diversity of spatial domains and their corresponding state configurations, which makes it difficult to cover all potential scenarios through data-driven methodologies alone. Moreover, there are legitimate concerns regarding the generalization and reliability of such approaches, as they often overlook inherent physical constraints. In response to these challenges, this study introduces a novel machine-learning architecture that is highly generalizable and adheres to conservation laws and physical symmetries, thereby ensuring greater reliability. The foundation of this architecture is graph neural networks (GNNs), which can accommodate a wide variety of shapes. Additionally, we explore the parallels between GNNs and traditional numerical solvers, which allow conservation principles and symmetries to be integrated seamlessly into machine learning models. Our experiments demonstrate that incorporating physical laws into the model significantly enhances its generalizability: its accuracy does not degrade significantly on unseen spatial domains, whereas that of other models does. The code is available at https://github.com/yellowshippo/fluxgnn-icml2024.
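The abstract ties conservation to the parallel between GNNs and classical numerical solvers. One standard way to see that parallel, sketched here as an assumption rather than as the paper's architecture, is a finite-volume update on a graph: each edge carries a flux that is subtracted from one vertex and added to the other, so the volume-weighted total cannot change no matter how the flux is computed. The names `conservative_step` and `diffusive_flux` are hypothetical; `diffusive_flux` stands in for a learned flux model.

```python
import numpy as np


def conservative_step(u, edges, volumes, areas, flux, dt):
    """One explicit finite-volume-style update on a graph (illustrative sketch).

    u       : (n,) cell-averaged quantity at each vertex
    edges   : (m, 2) int array; each undirected edge (i, j) listed once
    volumes : (n,) control-volume size of each vertex
    areas   : (m,) face area shared by the two vertices of each edge
    flux    : callable (u_i, u_j) -> flux from i to j per unit area
    """
    i, j = edges[:, 0], edges[:, 1]
    f = flux(u[i], u[j]) * areas  # amount moving from i to j through each face
    du = np.zeros_like(u)
    np.add.at(du, i, -f)  # what leaves i ...
    np.add.at(du, j, f)   # ... enters j, so each edge contributes zero in total
    return u + dt * du / volumes


def diffusive_flux(u_i, u_j, k=0.5):
    # Hypothetical stand-in for a learned flux: transport from high to low values.
    return k * (u_i - u_j)


rng = np.random.default_rng(0)
n = 6
edges = np.array([[k, (k + 1) % n] for k in range(n)])  # a ring of 6 cells
volumes = rng.uniform(0.5, 2.0, n)
areas = np.ones(n)
u0 = rng.random(n)
u1 = conservative_step(u0, edges, volumes, areas, diffusive_flux, dt=0.1)
print(np.sum(volumes * u0), np.sum(volumes * u1))  # equal up to rounding
```

Because conservation comes from the antisymmetric scatter rather than from the flux values, replacing `diffusive_flux` with any function of `(u_i, u_j)`, learned or not, keeps the volume-weighted total fixed to floating-point precision.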

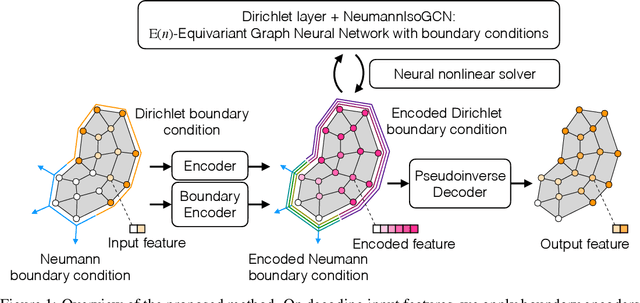

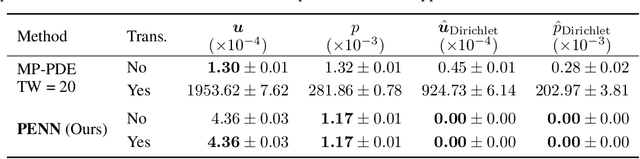

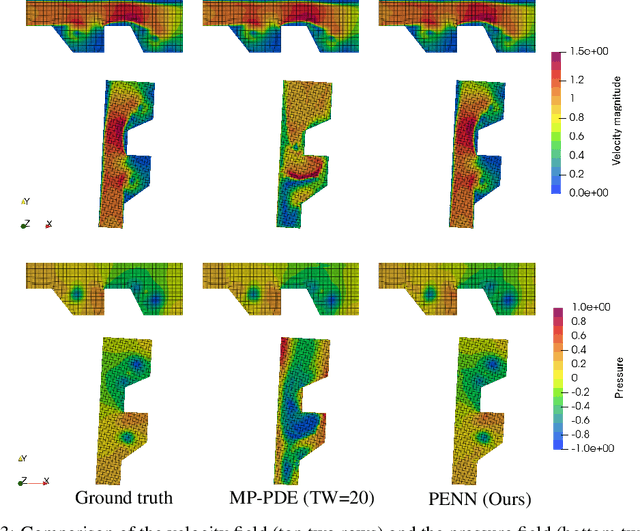

Physics-Embedded Neural Networks: $\boldsymbol{\mathrm{E}(n)}$-Equivariant Graph Neural PDE Solvers

May 24, 2022

Abstract: Graph neural networks (GNNs) are a promising approach to learning and predicting physical phenomena described by boundary value problems, such as partial differential equations (PDEs) with boundary conditions. However, existing models inadequately treat boundary conditions, which are essential for the reliable prediction of such problems. In addition, because of the locally connected nature of GNNs, it is difficult to accurately predict the state after a long time, when interactions between vertices tend to be global. We present our approach, termed physics-embedded neural networks, which considers boundary conditions and predicts the state after a long time using an implicit method. It is built on an $\mathrm{E}(n)$-equivariant GNN, resulting in high generalization performance on various shapes. We demonstrate that our model learns flow phenomena in complex shapes and outperforms a well-optimized classical solver and a state-of-the-art machine learning model in the speed-accuracy trade-off. Therefore, our model can serve as a useful standard for realizing reliable, fast, and accurate GNN-based PDE solvers.
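The abstract attributes the difficulty of long-time prediction to the local connectivity of GNNs and addresses it with an implicit method. The generic sketch below, which is not the paper's solver, illustrates why implicit stepping helps. A graph Laplacian `L` stands in (as an assumption) for a learned operator, and a backward-Euler step solves $(I + \Delta t\,L)\,u_{n+1} = u_n$. On a connected graph the inverse of that matrix is dense, so a single step spreads information to every vertex, and it remains stable at a step size where forward Euler diverges. The helper names `ring_laplacian`, `explicit_step`, and `implicit_step` are hypothetical.

```python
import numpy as np


def ring_laplacian(n):
    """Graph Laplacian L = D - A of a cycle graph with n vertices."""
    A = np.zeros((n, n))
    for k in range(n):
        A[k, (k + 1) % n] = A[(k + 1) % n, k] = 1.0
    return np.diag(A.sum(axis=1)) - A


def explicit_step(u, L, dt):
    # Forward Euler: u_{n+1} = u_n - dt * L u_n (only direct neighbors interact per step)
    return u - dt * L @ u


def implicit_step(u, L, dt):
    # Backward Euler: solve (I + dt L) u_{n+1} = u_n (all vertices coupled in one step)
    return np.linalg.solve(np.eye(len(u)) + dt * L, u)


n, dt, steps = 8, 2.0, 20
L = ring_laplacian(n)
u0 = np.zeros(n)
u0[0] = 1.0  # a single spike at vertex 0

u_exp, u_imp = u0.copy(), u0.copy()
for _ in range(steps):
    u_exp = explicit_step(u_exp, L, dt)
    u_imp = implicit_step(u_imp, L, dt)

print(np.max(np.abs(u_exp)))  # explicit iterate has blown up
print(u_imp)                  # implicit iterate has relaxed toward the mean 1/n
```

With `dt = 2.0`, the largest Laplacian eigenvalue of this ring (4) gives a forward-Euler amplification factor of 1 - 8 = -7, so the explicit iterate grows without bound, while the implicit factors 1/(1 + 2λ) never exceed 1 and the state relaxes to the mean.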

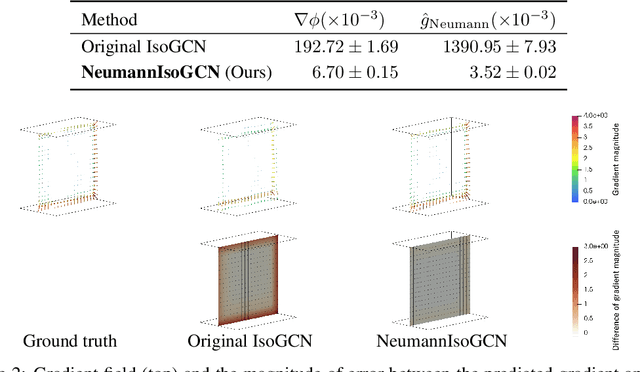

Isometric Transformation Invariant and Equivariant Graph Convolutional Networks

May 13, 2020

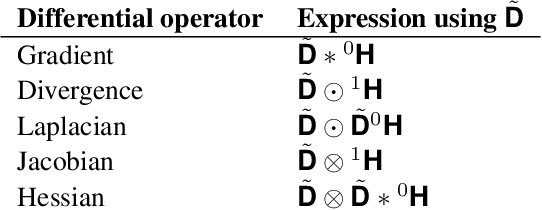

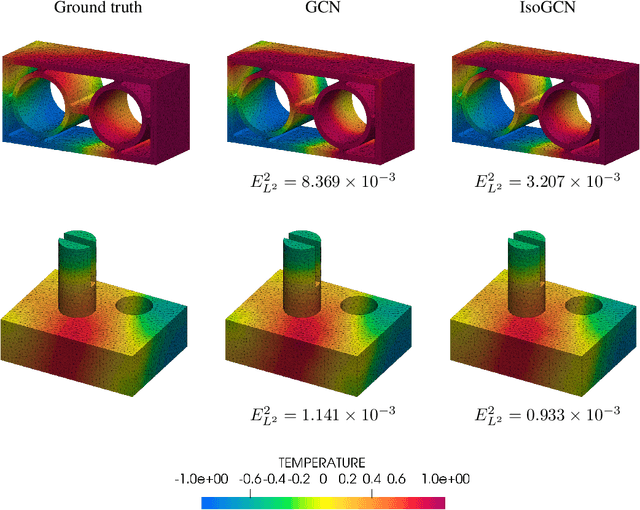

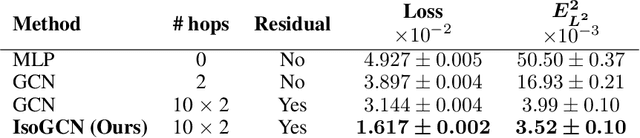

Abstract: Graphs are one of the most important data structures for representing pairwise relations between objects. In particular, graphs embedded in Euclidean space are essential for solving real problems, such as object detection, structural chemistry analysis, and physical simulation. A crucial requirement for using graphs in Euclidean space is learning features that are invariant and equivariant under isometric transformations. In the present paper, we propose a set of transformation-invariant and -equivariant models, called IsoGCNs, based on graph convolutional networks. We discuss an example of IsoGCNs that corresponds to differential equations. We also demonstrate that the proposed model achieves high prediction performance on the finite element analysis dataset considered and can scale up to graphs with 1M vertices.
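The abstract does not spell out the IsoGCN layer, so the following is only a minimal sketch of the underlying idea under our own assumptions: a gradient-like graph operator that combines scalar vertex features with relative positions $x_j - x_i$. Because it never uses absolute coordinates, translating the input leaves the output unchanged, and rotating or reflecting the input rotates or reflects the output in the same way. The function name `graph_gradient` and the $1/\lVert x_j - x_i \rVert^2$ weighting are hypothetical choices for illustration.

```python
import numpy as np


def graph_gradient(x, h, edges):
    """Vector feature per vertex built from scalar features (illustrative sketch).

    x     : (n, d) vertex positions
    h     : (n,) scalar feature per vertex
    edges : iterable of undirected pairs (i, j)
    Only relative positions x[j] - x[i] are used, so the result is
    translation invariant and equivariant under orthogonal transformations.
    """
    out = np.zeros(x.shape, dtype=float)
    for i, j in edges:
        r = x[j] - x[i]
        w = (h[j] - h[i]) / np.dot(r, r)
        out[i] += w * r  # vertex i sees neighbor j along +r
        out[j] += w * r  # vertex j sees -r with difference -(h[j] - h[i]): same product
    return out


rng = np.random.default_rng(1)
x = rng.normal(size=(5, 3))
h = rng.normal(size=5)
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0), (0, 2)]

Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))  # random orthogonal matrix
t = rng.normal(size=3)                         # random translation

g = graph_gradient(x, h, edges)
g_moved = graph_gradient(x @ Q.T + t, h, edges)  # transform the input graph
print(np.allclose(g_moved, g @ Q.T))  # checks that the output transforms the same way
```

Scalar quantities derived from the output, such as the per-vertex norms of `g`, are then invariant under the same transformations.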