N. S. Lakshmiprabha

Fusing Face and Periocular biometrics using Canonical correlation analysis

Mar 29, 2016

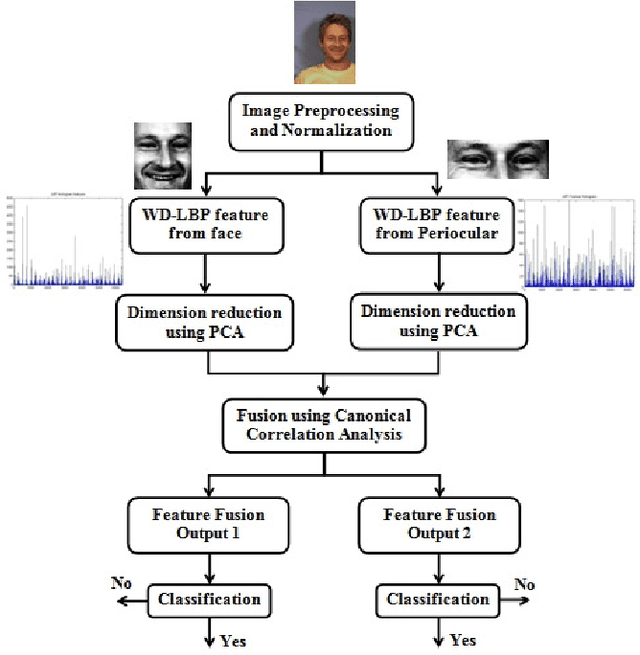

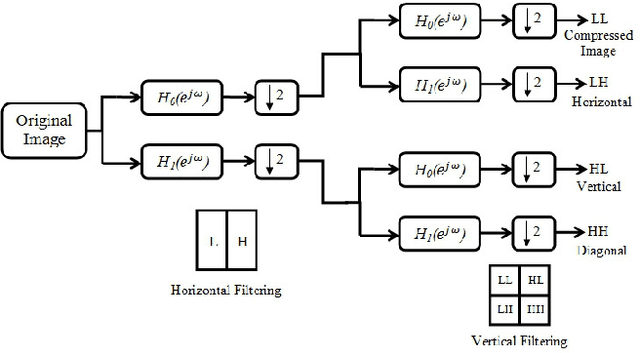

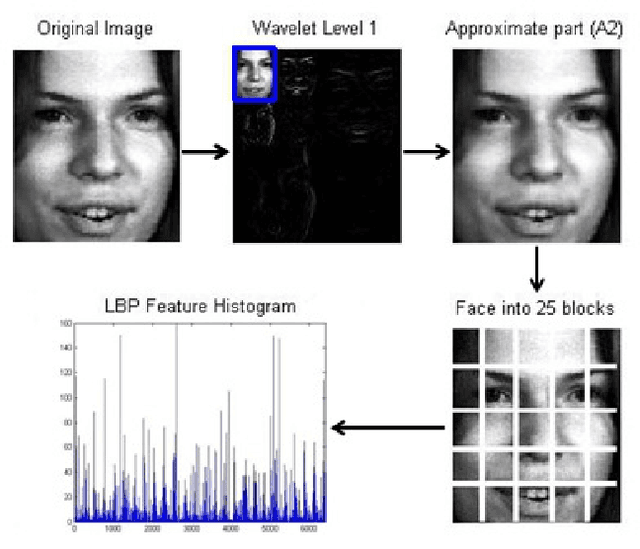

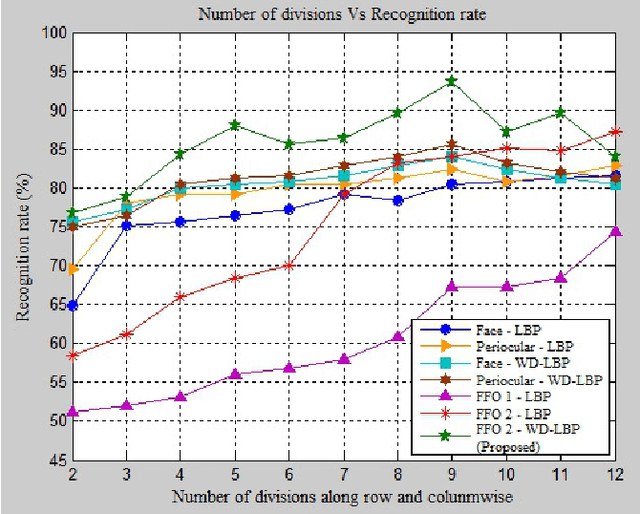

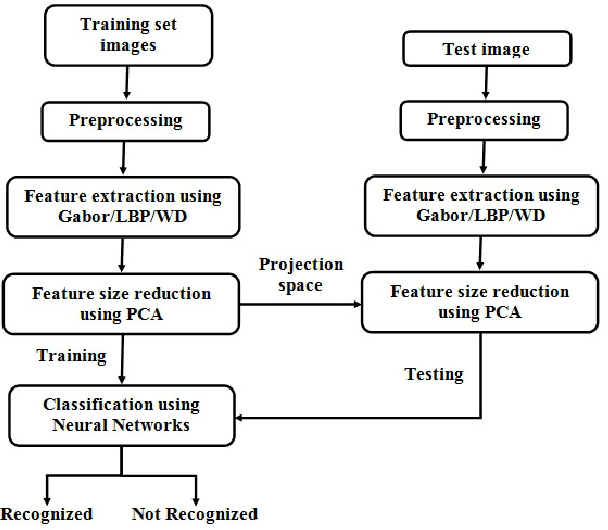

Abstract: This paper presents a novel feature-level fusion of face and periocular biometrics using canonical correlation analysis (CCA). Face recognition on its own is limited by variations in illumination, pose, expression and occlusion, while periocular biometrics is affected by spectacles, head angle, hair and expression. A unimodal biometric system cannot overcome all of these limitations. Recognition accuracy can instead be increased by using CCA to fuse two kinds of information (face and periocular) extracted from a single source, the face image. This work also proposes a new wavelet decomposed local binary pattern (WD-LBP) feature extractor, which provides sufficient features for fusion. A detailed analysis of face and periocular biometrics shows that WD-LBP features are more accurate and faster than local binary pattern (LBP) and Gabor wavelet features. Experimental results on the MUCT face database reveal that the proposed multimodal biometric system performs better than the unimodal systems.
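The abstract does not spell out the fusion rule, so the following is only a minimal sketch of generic CCA feature-level fusion in Python with NumPy. It assumes two feature matrices whose rows are paired samples (one face vector and one periocular vector per image). The names `cca_fit` and `cca_fuse`, the ridge term `reg`, and the choice between concatenating (`"concat"`) or summing (`"sum"`) the canonical variates are illustrative assumptions, not the author's implementation; the synthetic data merely stands in for real face and periocular features.

```python
import numpy as np


def _inv_sqrt(S):
    # Inverse square root of a symmetric positive-definite matrix via eigendecomposition.
    vals, vecs = np.linalg.eigh(S)
    return (vecs / np.sqrt(vals)) @ vecs.T


def cca_fit(X, Y, d, reg=1e-3):
    """Learn CCA projections for X (n x p) and Y (n x q), keeping d <= min(p, q) components.

    `reg` is an assumed ridge term that keeps the covariance matrices invertible.
    Returns (mean_x, mean_y, W_x, W_y, canonical_correlations).
    """
    mx, my = X.mean(axis=0), Y.mean(axis=0)
    Xc, Yc = X - mx, Y - my
    n = X.shape[0]
    Sxx = Xc.T @ Xc / (n - 1) + reg * np.eye(X.shape[1])
    Syy = Yc.T @ Yc / (n - 1) + reg * np.eye(Y.shape[1])
    Sxy = Xc.T @ Yc / (n - 1)
    # Whiten both views; the SVD of the whitened cross-covariance gives the canonical pairs.
    Kx, Ky = _inv_sqrt(Sxx), _inv_sqrt(Syy)
    U, s, Vt = np.linalg.svd(Kx @ Sxy @ Ky)
    Wx = Kx @ U[:, :d]
    Wy = Ky @ Vt.T[:, :d]
    return mx, my, Wx, Wy, s[:d]


def cca_fuse(X, Y, model, mode="concat"):
    """Project both views onto the canonical directions and fuse them (hypothetical helper)."""
    mx, my, Wx, Wy, _ = model
    Zx = (X - mx) @ Wx
    Zy = (Y - my) @ Wy
    if mode == "concat":
        return np.hstack([Zx, Zy])  # 2d fused features
    if mode == "sum":
        return Zx + Zy  # d fused features
    raise ValueError("mode must be 'concat' or 'sum'")


# Synthetic demo: both "modalities" share a 2-D latent signal plus small noise.
rng = np.random.default_rng(0)
n = 200
latent = rng.standard_normal((n, 2))
X_face = latent @ rng.standard_normal((2, 6)) + 0.1 * rng.standard_normal((n, 6))
Y_peri = latent @ rng.standard_normal((2, 4)) + 0.1 * rng.standard_normal((n, 4))

model = cca_fit(X_face, Y_peri, d=2)
fused = cca_fuse(X_face, Y_peri, model, mode="concat")
print(fused.shape)  # (200, 4)
print(model[-1])    # leading canonical correlations
```

Concatenation keeps both projected views (2d features), while summation yields d features; the abstract does not state which one the paper uses. The small `reg` term matters in practice because histogram-style descriptors such as LBP often have more dimensions than there are training images, which would otherwise make the covariance matrices singular.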

Face Image Analysis using AAM, Gabor, LBP and WD features for Gender, Age, Expression and Ethnicity Classification

Mar 29, 2016

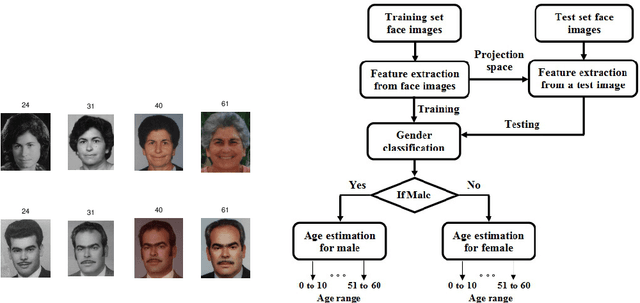

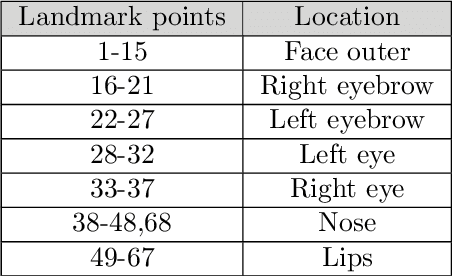

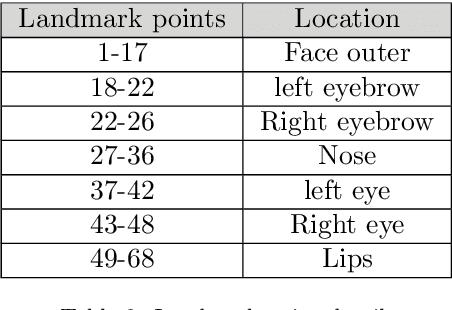

Abstract: The growth in electronic transactions and human-machine interaction relies on information such as gender, age, expression and ethnicity provided by the face image, and feature extraction plays a major role in obtaining this information. In this paper, the retrieval of age, gender, expression and race information from an individual face image is analysed using different feature extraction methods. Four major feature extraction methods, Active Appearance Model (AAM), Gabor wavelets, Local Binary Pattern (LBP) and Wavelet Decomposition (WD), are evaluated for gender recognition, age estimation, expression recognition and racial recognition in terms of accuracy (recognition rate), feature extraction time, neural network training time and the time to test an image. Each recognition system is evaluated with all four feature extractors on the same dataset (training and validation sets) to give a better understanding of its performance. Experiments on the FG-NET, Cohn-Kanade and PAL face databases show that each method has its own merits and demerits. Hence it is practically impossible to identify a single method that is best in all circumstances while also having low computational complexity. Further, a detailed comparison of age estimation with and without gender information is provided, along with a solution to overcome the aging effect in gender recognition. An attempt is also made to obtain all of this information (gender, age range, expression and ethnicity) from a test image in a single pass.
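LBP appears in both abstracts as a compared feature extractor. As a rough illustration, the sketch below shows the basic 8-neighbour, radius-1 LBP operator in plain Python: each neighbour is thresholded against the centre pixel to form an 8-bit code, and the codes are pooled into a 256-bin histogram. The papers may use a different variant (for example uniform patterns or per-block histograms), and the function names `lbp_code` and `lbp_histogram` are hypothetical.

```python
from typing import List

# Clockwise neighbour offsets (row, col), starting at the top-left pixel; bit k <-> OFFSETS[k].
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_code(img: List[List[int]], r: int, c: int) -> int:
    """8-bit LBP code for interior pixel (r, c): bit k is set when neighbour k >= centre."""
    centre = img[r][c]
    code = 0
    for k, (dr, dc) in enumerate(OFFSETS):
        if img[r + dr][c + dc] >= centre:
            code |= 1 << k
    return code


def lbp_histogram(img: List[List[int]]) -> List[int]:
    """256-bin histogram of LBP codes over all interior pixels (the 1-pixel border is skipped)."""
    hist = [0] * 256
    for r in range(1, len(img) - 1):
        for c in range(1, len(img[0]) - 1):
            hist[lbp_code(img, r, c)] += 1
    return hist


patch = [[5, 9, 1],
         [4, 6, 7],
         [2, 6, 3]]
print(lbp_code(patch, 1, 1))  # 42: neighbours 9, 7 and 6 are >= 6 (bits 1, 3 and 5)
```

In face recognition, such histograms are commonly computed over a grid of sub-regions and concatenated so that the descriptor retains some spatial layout; the grid size would be a free parameter of the method.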
