Mohammad Ali Masnadi-Shirazi

Online Multi-Object Tracking with delta-GLMB Filter based on Occlusion and Identity Switch Handling

Nov 19, 2020

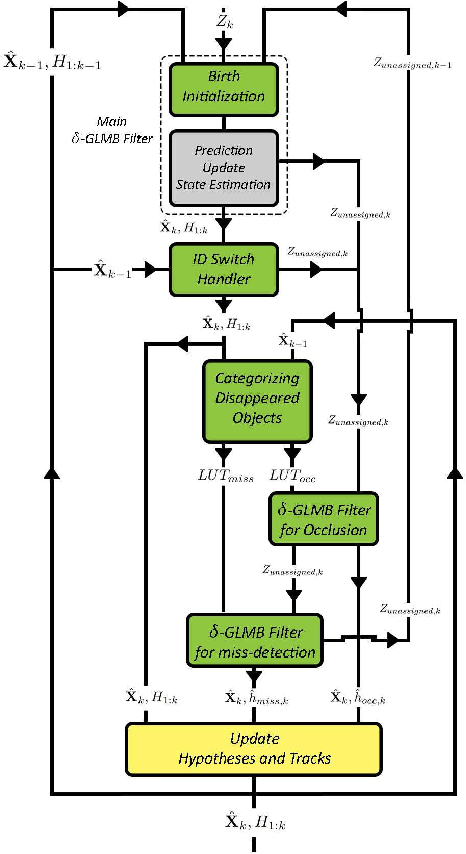

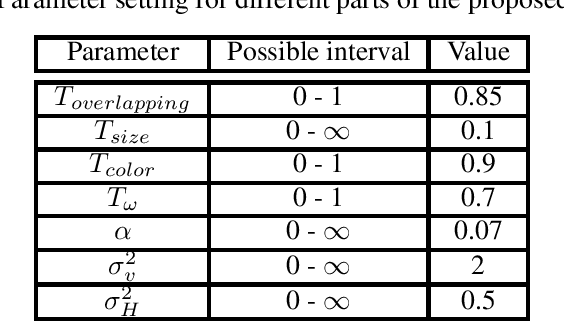

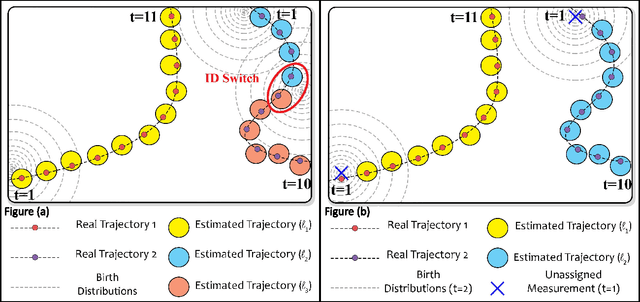

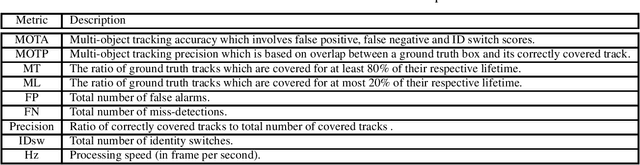

Abstract: In this paper, we propose an online multi-object tracking (MOT) method in a delta Generalized Labeled Multi-Bernoulli (delta-GLMB) filter framework to address occlusion and miss-detection issues, reduce false alarms, and recover from identity switches (ID switches). To handle occlusion and miss-detection, we propose a measurement-to-disappeared-track association method based on a one-step delta-GLMB filter, which makes it possible to manage these difficulties by jointly processing occluded and miss-detected objects. This part of the proposed method relies on a proposed similarity metric that defines the weight of hypothesized reappeared tracks. We also extend the delta-GLMB filter to efficiently recover switched IDs using the cardinality density, size, and color features of the hypothesized tracks, and we propose a novel birth model to achieve more effective clutter removal. In both the occlusion/miss-detection handler and the newly-birthed object detector of the proposed method, unassigned measurements play a significant role, since they serve as the candidates for reappeared or newly born objects. In addition, we perform an ablation study that confirms the effectiveness of our contributions in comparison with the baseline method. We evaluate the proposed method on the well-known, publicly available MOT15 and MOT17 test datasets, which focus on pedestrian tracking. Experimental results show that the proposed tracker performs better than, or at least on par with, state-of-the-art online and offline MOT methods. It effectively handles occlusion and ID switches and also reduces false alarms.

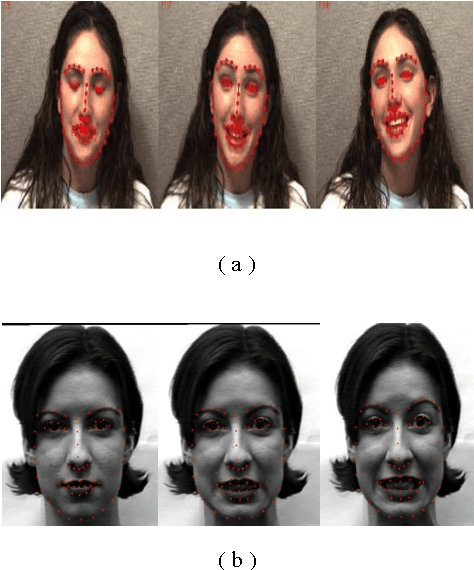

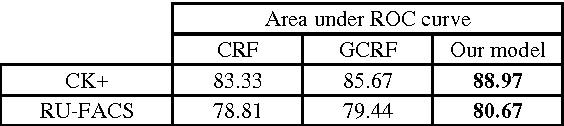

Facial Expression Recognition Using Sparse Gaussian Conditional Random Field

Nov 06, 2015

Abstract: Expression analysis and facial Action Unit (AU) detection are very important tasks in the fields of computer vision and Human-Computer Interaction (HCI) due to their wide range of applications in human life. Much work has been done over the past few years, and each approach has its own advantages and disadvantages. In this work, we present a new model based on a Gaussian Conditional Random Field. We solve the resulting objective using ADMM and show how well the proposed model works. We train and test our model on two facial expression datasets, CK+ and RU-FACS. Experimental evaluation shows that the proposed approach outperforms state-of-the-art expression recognition methods.
