Minhaz Zibran

When AI Teammates Meet Code Review: Collaboration Signals Shaping the Integration of Agent-Authored Pull Requests

Feb 23, 2026

Abstract: Autonomous coding agents increasingly contribute to software development by submitting pull requests on GitHub; yet, little is known about how these contributions integrate into human-driven review workflows. We present a large empirical study of agent-authored pull requests using the public AIDev dataset, examining integration outcomes, resolution speed, and review-time collaboration signals. Using logistic regression with repository-clustered standard errors, we find that reviewer engagement has the strongest correlation with successful integration, whereas larger change sizes and coordination-disrupting actions, such as force pushes, are associated with a lower likelihood of merging. In contrast, iteration intensity alone provides limited explanatory power once collaboration signals are considered. A qualitative analysis further shows that successful integration occurs when agents engage in actionable review loops that converge toward reviewer expectations. Overall, our results highlight that the effective integration of agent-authored pull requests depends not only on code quality but also on alignment with established review and coordination practices.

An Empirical Study of the Relationships between Code Readability and Software Complexity

Aug 30, 2019

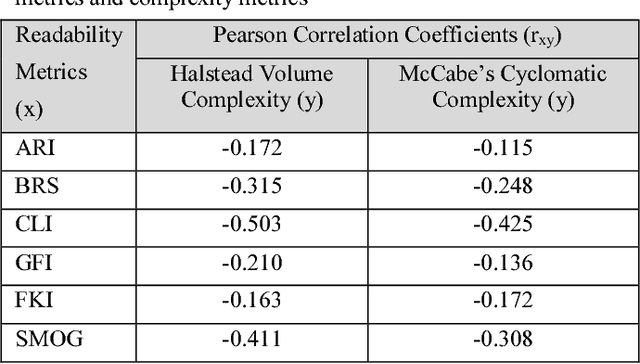

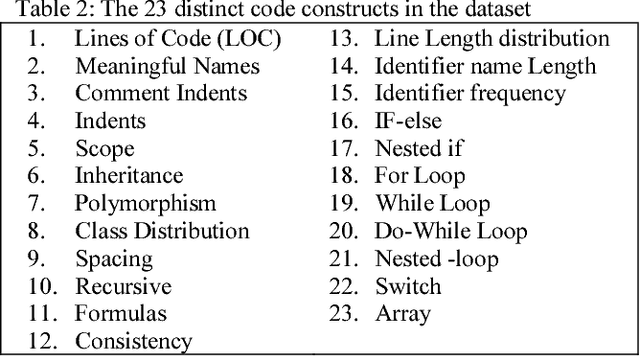

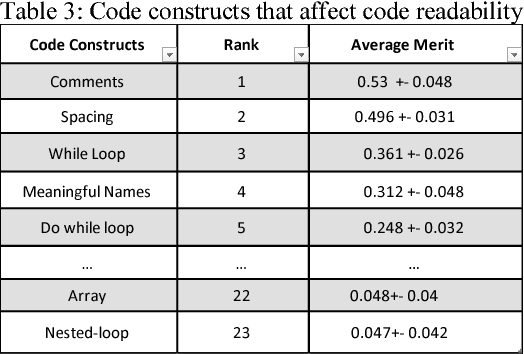

Abstract: Code readability and software complexity are important software quality metrics that impact other software metrics such as maintainability, reusability, portability and reliability. This paper presents an empirical study of the relationships between code readability and program complexity. The results are derived from an analysis of 35 Java programs that cover 23 distinct code constructs. The analysis includes six readability metrics and two complexity metrics. Our study empirically confirms the existing wisdom that readability and complexity are negatively correlated. Applying a machine learning technique, we also identify and rank those code constructs that substantially affect code readability.

* 7 pages, 2 figures, 3 tables
