Miguel Faria

AMALIA Technical Report: A Fully Open Source Large Language Model for European Portuguese

Mar 27, 2026

Abstract: Despite rapid progress in open large language models (LLMs), European Portuguese (pt-PT) remains underrepresented in both training data and native evaluation, with machine-translated benchmarks likely missing the variant's linguistic and cultural nuances. We introduce AMALIA, a fully open LLM that prioritizes pt-PT by using more high-quality pt-PT data during both the mid- and post-training stages. To evaluate pt-PT more faithfully, we release a suite of pt-PT benchmarks that includes translated standard tasks and four new datasets targeting pt-PT generation, linguistic competence, and pt-PT/pt-BR bias. Experiments show that AMALIA matches strong baselines on translated benchmarks while substantially improving performance on pt-PT-specific evaluations, supporting the case for targeted training and native benchmarking for European Portuguese.

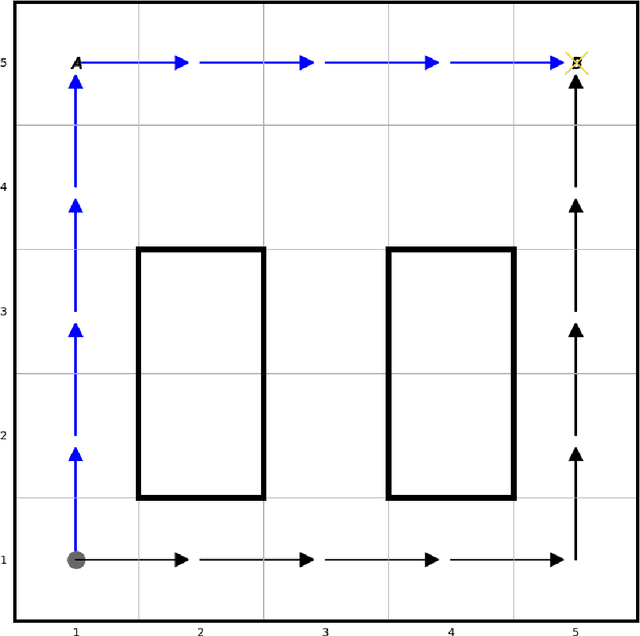

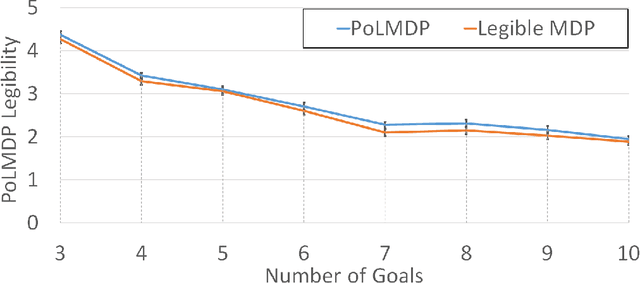

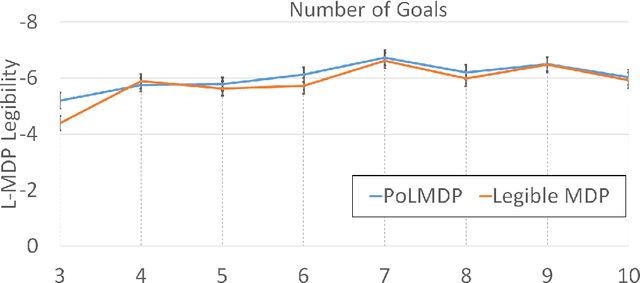

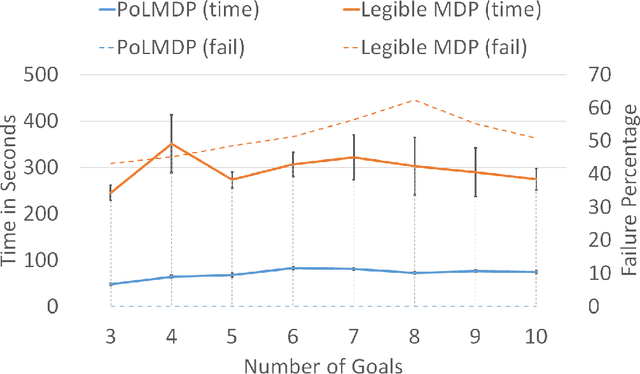

"Guess what I'm doing": Extending legibility to sequential decision tasks

Sep 19, 2022

Abstract: In this paper, we investigate the notion of legibility in sequential decision tasks under uncertainty. Previous works that extend legibility to scenarios beyond robot motion either focus on deterministic settings or are computationally too expensive. Our proposed approach, dubbed PoL-MDP, is able to handle uncertainty while remaining computationally tractable. We establish the advantages of our approach against state-of-the-art approaches in several simulated scenarios of different complexity. We also showcase the use of our legible policies as demonstrations for an inverse reinforcement learning agent, establishing their superiority against the commonly used demonstrations based on the optimal policy. Finally, we assess the legibility of our computed policies through a user study where people are asked to infer the goal of a mobile robot following a legible policy by observing its actions.
