Mei-Chen Yeh

Zero-Shot Recognition through Image-Guided Semantic Classification

Jul 23, 2020

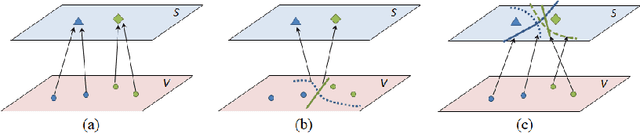

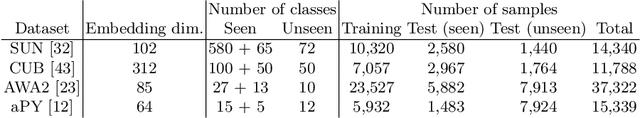

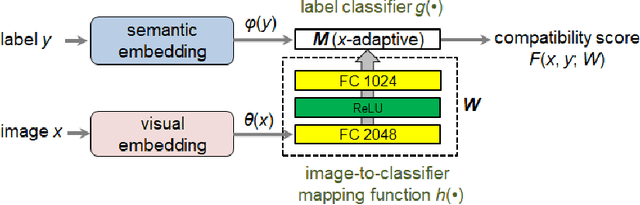

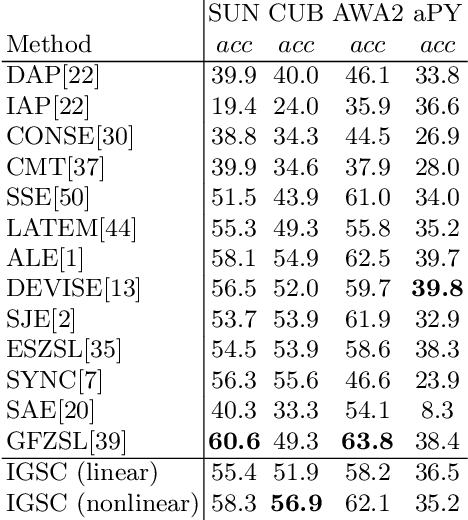

Abstract: We present a new embedding-based framework for zero-shot learning (ZSL). Most embedding-based methods aim to learn the correspondence between an image classifier (visual representation) and its class prototype (semantic representation) for each class. Motivated by the binary relevance method for multi-label classification, we propose to learn the inverse mapping, from an image to a semantic classifier. Given an input image, the proposed Image-Guided Semantic Classification (IGSC) method creates a label classifier that is applied to all label embeddings to determine whether each label belongs to the input image. The semantic classifiers are therefore image-adaptive and are generated during inference. IGSC is conceptually simple and can be realized by a slight enhancement of an existing deep architecture for classification, yet it is effective and outperforms state-of-the-art embedding-based generalized ZSL approaches on standard benchmarks.
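The idea of generating a label classifier from the image can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the single linear generator, the sigmoid scoring, the dimensions, and the names `W_gen`, `b_gen`, `generate_semantic_classifier`, and `score_labels` are all assumptions made for illustration, and the random weights stand in for parameters that would be learned.

```python
import numpy as np

rng = np.random.default_rng(0)
d_visual, d_semantic, n_labels = 8, 5, 4  # illustrative sizes (assumed)

# Hypothetical generator parameters: random stand-ins for learned weights.
W_gen = rng.normal(size=(d_semantic, d_visual))
b_gen = rng.normal(size=d_semantic)


def generate_semantic_classifier(image_feature):
    """Map an image feature to the weight vector of a label classifier."""
    return W_gen @ image_feature + b_gen


def score_labels(image_feature, label_embeddings):
    """Apply the image-specific classifier to every label embedding."""
    w = generate_semantic_classifier(image_feature)
    logits = label_embeddings @ w          # one logit per label
    return 1.0 / (1.0 + np.exp(-logits))   # per-label relevance in [0, 1]


image_feature = rng.normal(size=d_visual)
label_embeddings = rng.normal(size=(n_labels, d_semantic))

scores = score_labels(image_feature, label_embeddings)
predicted_label = int(np.argmax(scores))
```

Because the classifier weights depend on `image_feature`, a different image yields a different classifier over the same label embeddings. Labels of unseen classes can be scored the same way by appending their embeddings as extra rows of `label_embeddings`.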

Learning Image Conditioned Label Space for Multilabel Classification

Feb 21, 2018

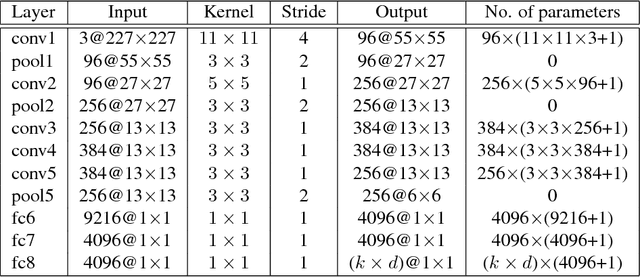

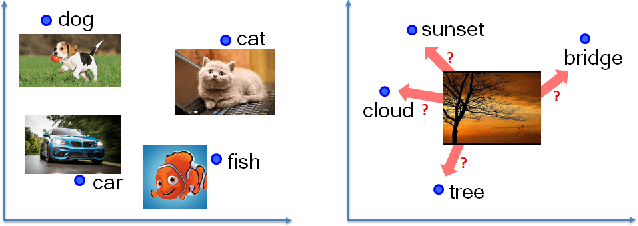

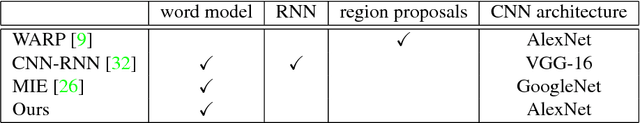

Abstract: This work addresses the task of multilabel image classification. Inspired by the great success of deep convolutional neural networks (CNNs) for single-label visual-semantic embedding, we explore extending these models to multilabel images. Specifically, we propose an image-dependent ranking model, which returns a list of labels ranked by their relevance to the input image. In contrast to conventional CNN models that learn an image representation (i.e., the image embedding vector), the developed model learns a mapping (i.e., a transformation matrix) from an image in an attempt to differentiate between its relevant and irrelevant labels. Despite the conceptual simplicity of our approach, experimental results on a public benchmark dataset demonstrate that the proposed model achieves state-of-the-art performance while using fewer training images than other multilabel classification methods.
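A small NumPy sketch may help make the image-dependent ranking concrete. The abstract does not specify how the transformation matrix is produced or how labels are scored, so everything below is an assumption for illustration: the linear generator `W_map`, the helper names `image_to_transform` and `rank_labels`, the dimensions, and in particular the scoring rule, which treats labels whose transformed embeddings lie closer to the origin as more relevant.

```python
import numpy as np

rng = np.random.default_rng(0)
d_img, d_label, d_out, num_labels = 6, 4, 3, 5  # illustrative sizes (assumed)

# Hypothetical generator weights: random stand-ins for learned parameters.
W_map = rng.normal(size=(d_out * d_label, d_img))


def image_to_transform(image_feature):
    """Produce an image-specific transformation matrix of shape (d_out, d_label)."""
    return (W_map @ image_feature).reshape(d_out, d_label)


def rank_labels(image_feature, label_vectors):
    """Rank labels for one image; returns (order, scores), best label first."""
    M = image_to_transform(image_feature)
    projected = label_vectors @ M.T              # shape (num_labels, d_out)
    # Assumed scoring rule: a smaller norm after the transform means more relevant.
    scores = -np.linalg.norm(projected, axis=1)
    order = np.argsort(-scores)                  # indices sorted by descending score
    return order, scores


image_feature = rng.normal(size=d_img)
label_vectors = rng.normal(size=(num_labels, d_label))
order, scores = rank_labels(image_feature, label_vectors)
```

The point of the sketch is only that the model's output for an image is a matrix acting on label vectors rather than a single embedding vector, so the same label set is ranked differently for each image.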
