Meenu Ajith

A Novel Indoor Positioning System for unprepared firefighting scenarios

Aug 04, 2020

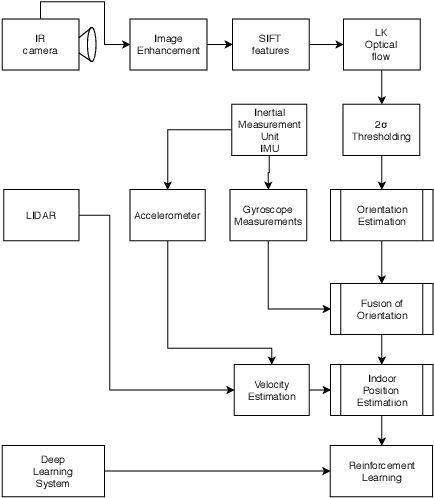

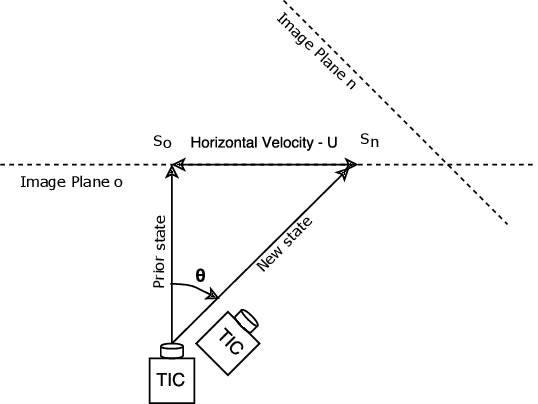

Abstract: Situational awareness and indoor location tracking for firefighters are tasks of paramount importance in search and rescue operations. GPS is not well suited to indoor positioning systems (IPS). A few other indoor positioning techniques exist, such as dead reckoning, Wi-Fi and Bluetooth based triangulation, and Structure from Motion (SFM) based scene reconstruction. However, because of high temperatures, the rapidly changing environment of a fire, and the low parallax in thermal images, these techniques cannot provide the information needed to increase situational awareness in real time in a firefighting environment. Because smoke and low visibility force firefighters to rely on thermal imaging cameras, obtaining relative orientation from vanishing point estimation is also very difficult. This research implements a novel optical flow based video compass for orientation estimation, together with activity recognition based on fused IMU data, for IPS. The technique helps first responders enter unprepared, unknown environments while still maintaining situational awareness, such as the orientation and position of victim firefighters.
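The abstract does not detail how the video compass or the IMU fusion works, so the following Python sketch only illustrates the general idea under simplifying assumptions: the camera motion between consecutive thermal frames is a pure yaw rotation, a dense horizontal optical flow field `u` is already available from some dense optical flow method, the pinhole focal length in pixels is known, and IMU samples have already been aligned to video frames. A simple accelerometer-peak step detector stands in for the paper's IMU-based activity recognition, and the fixed stride length is a hypothetical value. All function names are illustrative, not the authors' code.

```python
import math

import numpy as np


def yaw_change_from_flow(u: np.ndarray, focal_px: float, center_frac: float = 0.25) -> float:
    """Estimate the camera yaw change (radians) between two frames.

    Assumption: pure rotation about the vertical axis, so a point on the
    optical axis shifts horizontally by u = -f * tan(dpsi). Positive dpsi
    means the camera turned toward +x (to the right in the image).
    """
    h, w = u.shape
    dh, dw = int(h * center_frac), int(w * center_frac)
    # Use only a window around the image centre, where the approximation holds best.
    centre = u[h // 2 - dh : h // 2 + dh + 1, w // 2 - dw : w // 2 + dw + 1]
    # The median is robust to flow that does not follow the camera (e.g. moving flames).
    return -math.atan(float(np.median(centre)) / focal_px)


def detect_steps(acc_norm: np.ndarray, threshold: float, min_gap: int) -> list[int]:
    """Indices of accelerometer-magnitude peaks above `threshold`, at least
    `min_gap` samples apart. A simple stand-in for activity recognition."""
    steps: list[int] = []
    for i in range(1, len(acc_norm) - 1):
        is_peak = acc_norm[i] >= acc_norm[i - 1] and acc_norm[i] > acc_norm[i + 1]
        if is_peak and acc_norm[i] > threshold:
            if not steps or i - steps[-1] >= min_gap:
                steps.append(i)
    return steps


def dead_reckon(yaw_changes, step_flags, step_length: float = 0.6, heading0: float = 0.0):
    """Integrate per-frame yaw changes and advance one stride on frames flagged as steps.

    Positions are in a local frame where heading 0 points along +x and headings
    increase in the same rotational sense as dpsi. `step_length` (metres) is a
    hypothetical fixed stride.
    """
    x = y = 0.0
    heading = heading0
    track = [(x, y)]
    for dpsi, stepped in zip(yaw_changes, step_flags):
        heading += dpsi
        if stepped:
            x += step_length * math.cos(heading)
            y += step_length * math.sin(heading)
        track.append((x, y))
    return track
```

In use, `yaw_change_from_flow` would be called once per frame pair and its outputs passed to `dead_reckon` together with per-frame step flags derived from `detect_steps`. Taking the median over a central window keeps the estimate close to the principal point, where the pure-rotation relation is most accurate, and reduces the influence of regions whose motion is independent of the camera.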

Time accelerated image super-resolution using shallow residual feature representative network

Apr 08, 2020

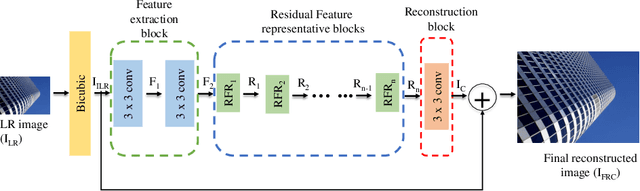

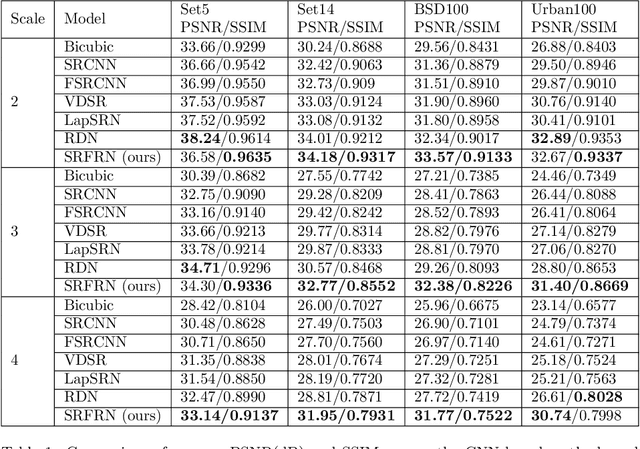

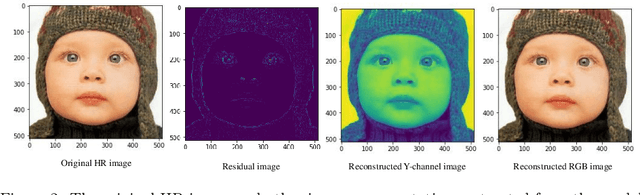

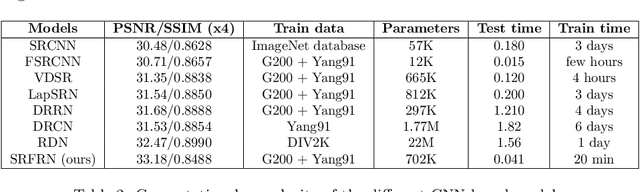

Abstract: Recent advances in deep learning have brought significant progress in single image super-resolution. With these techniques, high-resolution images with a high peak signal-to-noise ratio (PSNR) and excellent perceptual quality can be reconstructed. The major challenges of existing deep convolutional neural networks are their computational complexity and running time; their increasing depth also often results in high space complexity. To alleviate these issues, we developed a shallow residual feature representative network (SRFRN) that takes a bicubic-interpolated low-resolution (LR) image as input and uses residual feature representative (RFR) units, which consist of serially stacked residual non-linear convolutions. The high-resolution image is then reconstructed by combining the output of the RFR units with the residual output from the bicubic-interpolated LR image. Multiple experiments on benchmark datasets show that the proposed model performs better at higher upscaling factors. The model also has a faster execution time than all the existing approaches it was compared with.
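To make the two quantities in this abstract concrete, the Python sketch below computes PSNR, defined as $10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$, and performs the final reconstruction step. It assumes that "combining" means element-wise addition of a predicted residual to the bicubic-upsampled image, the usual global residual learning formulation; the RFR units that would predict that residual are not reproduced, and both function names are hypothetical.

```python
import numpy as np


def psnr(reference: np.ndarray, estimate: np.ndarray, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE)."""
    ref = reference.astype(np.float64)
    est = estimate.astype(np.float64)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))


def reconstruct_hr(bicubic_up: np.ndarray, predicted_residual: np.ndarray,
                   max_value: float = 255.0) -> np.ndarray:
    """Assumed global residual reconstruction: the network predicts only the
    detail that bicubic interpolation misses, and the HR estimate is the sum,
    clipped to the valid intensity range."""
    if bicubic_up.shape != predicted_residual.shape:
        raise ValueError("residual must match the upsampled image shape")
    return np.clip(bicubic_up.astype(np.float64) + predicted_residual, 0.0, max_value)
```

Under this formulation the training target for the residual branch would be the ground-truth HR image minus the bicubic-upsampled input, which is typically a sparse, low-magnitude signal that a shallow network can learn.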

Unsupervised Segmentation of Fire and Smoke from Infra-Red Videos

Sep 18, 2019

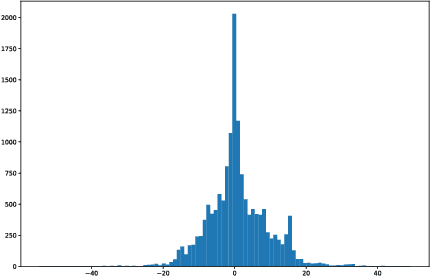

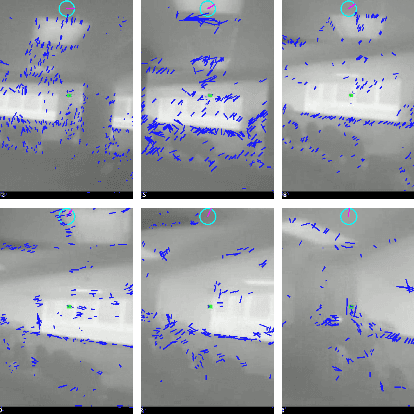

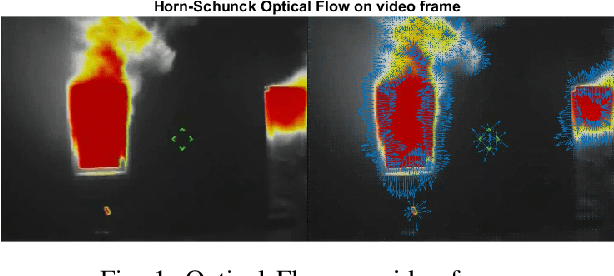

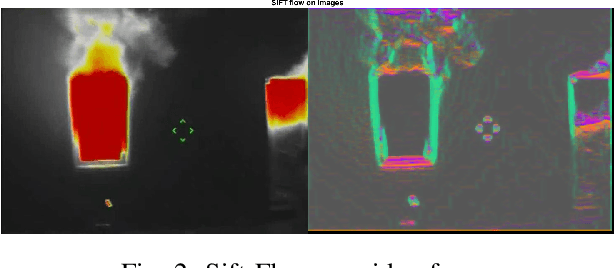

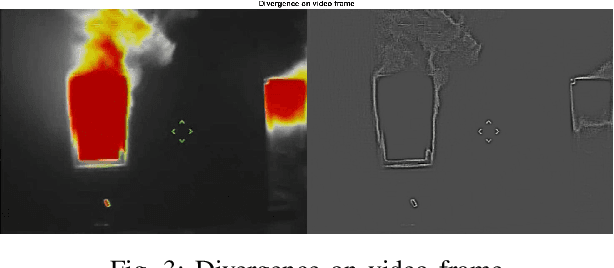

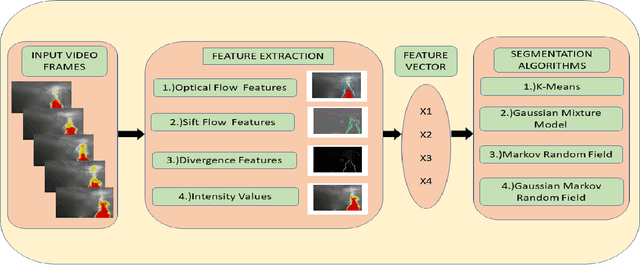

Abstract: This paper proposes a vision-based fire and smoke segmentation system that uses spatial, temporal, and motion information to extract the desired regions from video frames. The information is fused using multiple features, such as optical flow, divergence, and intensity values. These features are used to segment the pixels into different classes in an unsupervised way. A comparative analysis of multiple clustering algorithms for segmentation is carried out. The Markov Random Field (MRF) performs more accurately than the other segmentation algorithms because it characterizes the spatial interactions of pixels using a finite number of parameters. It builds a probabilistic image model that selects the most likely labeling using maximum a posteriori (MAP) estimation. This unsupervised approach was tested on various images and achieves a frame-wise fire detection rate of 95.39%. Hence, the method can be used for early fire detection in real time and can be incorporated into indoor or outdoor surveillance systems.
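The abstract does not specify the MRF energy or the MAP solver, so the Python sketch below uses one common, assumed formulation: per-pixel features (intensity, flow magnitude, and flow divergence), diagonal Gaussian class likelihoods, a Potts smoothness prior over 4-neighbours weighted by a hypothetical parameter `beta`, and Iterated Conditional Modes (ICM) as an approximate MAP solver. The dense flow fields `u` and `v` are assumed to come from any optical flow method, and all function names are illustrative.

```python
import numpy as np


def flow_divergence(u: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Divergence du/dx + dv/dy of a dense flow field (rows = y, columns = x)."""
    return np.gradient(u, axis=1) + np.gradient(v, axis=0)


def build_features(intensity, u, v) -> np.ndarray:
    """Stack intensity, flow magnitude and flow divergence into an (H, W, 3) array."""
    return np.dstack([intensity, np.hypot(u, v), flow_divergence(u, v)]).astype(np.float64)


def icm_segment(features: np.ndarray, n_classes: int = 2, beta: float = 1.5,
                n_iter: int = 10) -> np.ndarray:
    """Approximate MAP labelling of a Potts MRF with Gaussian likelihoods via ICM."""
    h, w, d = features.shape
    x = features.reshape(-1, d).astype(np.float64)
    # Deterministic initialisation: per-feature quantiles, refined by a few k-means steps.
    means = np.quantile(x, (np.arange(n_classes) + 0.5) / n_classes, axis=0)
    for _ in range(10):
        flat = np.argmin(((x[:, None, :] - means[None, :, :]) ** 2).sum(axis=-1), axis=1)
        means = np.array([x[flat == k].mean(axis=0) if np.any(flat == k) else means[k]
                          for k in range(n_classes)])
    labels = flat.reshape(h, w)
    feats = x.reshape(h, w, d)
    checker = (np.arange(h)[:, None] + np.arange(w)[None, :]) % 2
    for _ in range(n_iter):
        # Re-estimate diagonal Gaussian parameters for each class from the current labels.
        mu = np.empty((n_classes, d))
        var = np.empty((n_classes, d))
        for k in range(n_classes):
            pts = x[labels.ravel() == k]
            if len(pts) == 0:
                pts = x  # empty class: fall back to global statistics
            mu[k] = pts.mean(axis=0)
            var[k] = pts.var(axis=0) + 1e-6
        # Unary energy: negative Gaussian log-likelihood up to a constant, shape (H, W, K).
        unary = (((feats[:, :, None, :] - mu) ** 2) / (2.0 * var) + 0.5 * np.log(var)).sum(axis=-1)
        # Checkerboard sweep: pixels of one parity only have neighbours of the other parity.
        for parity in (0, 1):
            onehot = (labels[..., None] == np.arange(n_classes)).astype(np.float64)
            p = np.pad(onehot, ((1, 1), (1, 1), (0, 0)))
            agree = p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            disagree = agree.sum(axis=-1, keepdims=True) - agree
            new = np.argmin(unary + beta * disagree, axis=-1)
            labels = np.where(checker == parity, new, labels)
    return labels
```

ICM only reaches a local optimum of the posterior, so its result depends on the initial labelling (here, a few k-means steps); graph cuts or simulated annealing are common alternatives when a better optimum is needed.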
