Matthew Mattina

Euphrates: Algorithm-SoC Co-Design for Low-Power Mobile Continuous Vision

Mar 29, 2018

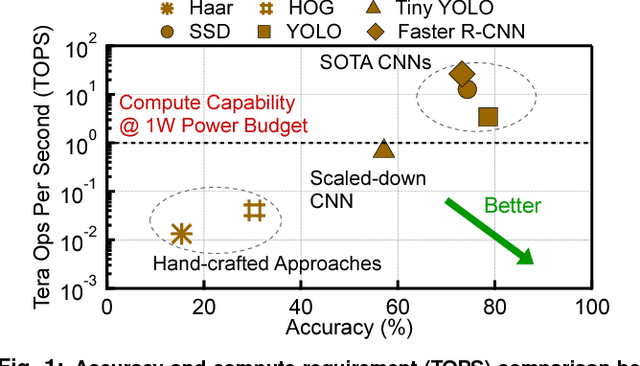

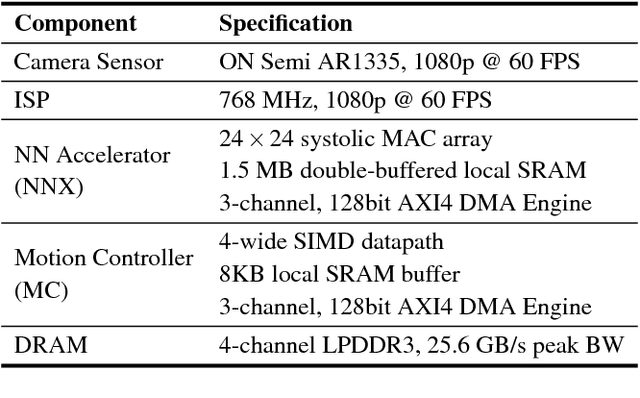

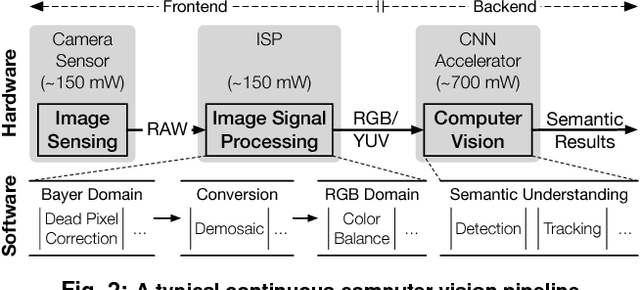

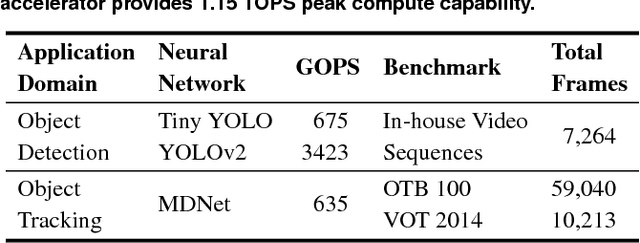

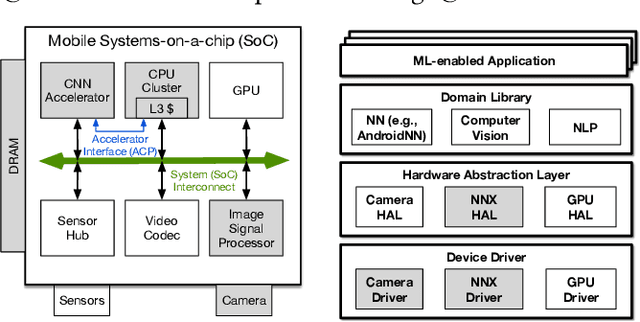

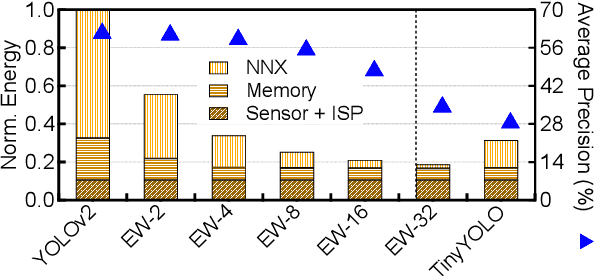

Abstract: Continuous computer vision (CV) tasks increasingly rely on convolutional neural networks (CNNs). However, CNNs have massive compute demands that far exceed the performance and energy constraints of mobile devices. In this paper, we propose and develop an algorithm-architecture co-designed system, Euphrates, that simultaneously improves the energy efficiency and performance of continuous vision tasks. Our key observation is that changes in pixel data between consecutive frames represent visual motion. We first propose an algorithm that leverages this motion information to reduce the number of expensive CNN inferences required by continuous vision applications. We then co-design a mobile System-on-a-Chip (SoC) architecture to maximize the efficiency of the new algorithm. The key to our architectural augmentation is to co-optimize the different SoC IP blocks in the vision pipeline collectively. Specifically, we propose to expose the motion data that is naturally generated by the Image Signal Processor (ISP) early in the vision pipeline to the CNN engine. Measurement and synthesis results show that Euphrates achieves up to 66% SoC-level energy savings (4 times for the vision computations), with only 1% accuracy loss.
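The idea in the Euphrates abstract, using motion data to skip some CNN inferences, can be illustrated with a minimal Python sketch. This is not the paper's implementation: the abstract does not specify the algorithm's details, so the block-based motion field, the averaging rule, the fixed `window` between inferences, and all names (`Box`, `average_motion`, `extrapolate`, `track`, `run_cnn`) are assumptions made for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

# Assumption: the ISP exposes one (dx, dy) vector in pixels per square block.
MotionVector = Tuple[float, float]
MotionField = Sequence[Sequence[MotionVector]]  # indexed as [row][col]


@dataclass
class Box:
    x: float  # left edge in pixels
    y: float  # top edge in pixels
    w: float
    h: float


def average_motion(box: Box, field: MotionField, block_size: int) -> MotionVector:
    """Mean motion vector over the blocks that the box overlaps."""
    rows, cols = len(field), len(field[0])
    r0 = max(0, int(box.y // block_size))
    r1 = min(rows - 1, int((box.y + box.h - 1) // block_size))
    c0 = max(0, int(box.x // block_size))
    c1 = min(cols - 1, int((box.x + box.w - 1) // block_size))
    vectors = [field[r][c] for r in range(r0, r1 + 1) for c in range(c0, c1 + 1)]
    if not vectors:  # box lies entirely outside the frame
        return (0.0, 0.0)
    dx = sum(v[0] for v in vectors) / len(vectors)
    dy = sum(v[1] for v in vectors) / len(vectors)
    return (dx, dy)


def extrapolate(box: Box, field: MotionField, block_size: int) -> Box:
    """Shift the previous box by the average motion inside it (no CNN call)."""
    dx, dy = average_motion(box, field, block_size)
    return Box(box.x + dx, box.y + dy, box.w, box.h)


def track(
    frames: Sequence[object],
    fields: Sequence[MotionField],
    run_cnn: Callable[[object], Box],
    window: int,
    block_size: int = 16,
) -> List[Box]:
    """Run the CNN on every `window`-th frame; extrapolate on the others.

    fields[i] is assumed to describe motion from frame i-1 to frame i.
    """
    results: List[Box] = []
    box = None
    for i, frame in enumerate(frames):
        if i % window == 0:
            box = run_cnn(frame)  # expensive inference
        else:
            box = extrapolate(box, fields[i], block_size)  # cheap update
        results.append(box)
    return results
```

With `window = 2`, for example, the hypothetical `run_cnn` is invoked on only half of the frames, and the remaining frames reuse the previous result shifted by the motion vectors. A real system would also need to decide when extrapolation has drifted too far and an inference must be forced, which this sketch does not attempt.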

Mobile Machine Learning Hardware at ARM: A Systems-on-Chip Perspective

Feb 01, 2018

Abstract: Machine learning is playing an increasingly significant role in emerging mobile application domains such as AR/VR and ADAS. Accordingly, hardware architects have designed customized hardware for machine learning algorithms, especially neural networks, to improve compute efficiency. However, machine learning is typically just one processing stage in complex end-to-end applications, which involve multiple components in a mobile System-on-a-Chip (SoC). Focusing only on ML accelerators misses larger optimization opportunities at the system (SoC) level. This paper argues that hardware architects should expand the optimization scope to the entire SoC. We demonstrate one case study in the domain of continuous computer vision, where the camera sensor, image signal processor (ISP), memory, and NN accelerator are synergistically co-designed to achieve optimal system-level efficiency.
