Martino Banchio

Artificial Intelligence and Auction Design

Feb 12, 2022

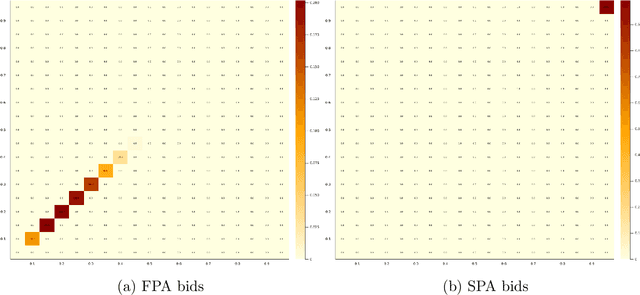

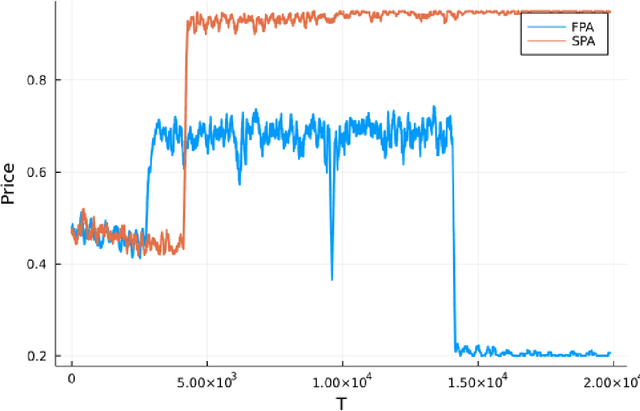

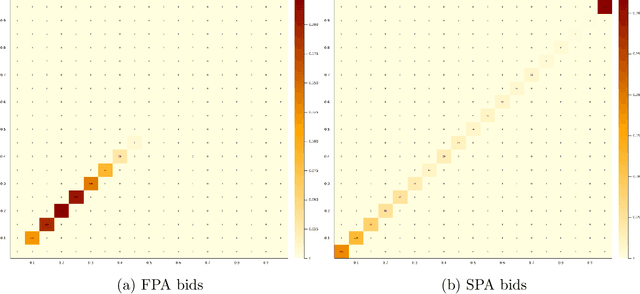

Abstract: Motivated by online advertising auctions, we study auction design in repeated auctions played by simple Artificial Intelligence algorithms (Q-learning). We find that first-price auctions with no additional feedback lead to tacitly collusive outcomes (bids lower than values), while second-price auctions do not. We show that the difference is driven by the incentive in first-price auctions to outbid opponents by just one bid increment. This facilitates re-coordination on low bids after a phase of experimentation. We also show that providing information about the lowest bid needed to win, as introduced by Google at the time of its switch to first-price auctions, increases the competitiveness of auctions.
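To make the setting in this abstract concrete, here is a minimal sketch of two stateless Q-learning bidders in a repeated auction. It is not the paper's code: the bid grid, the common value of 1, the learning rate `alpha`, the discount factor `gamma`, the linear exploration schedule, and the names `payoffs` and `simulate` are all assumptions chosen for illustration.

```python
import random


def payoffs(b0, b1, value=1.0, auction="first"):
    """Per-round payoffs for two bidders who share the same value (assumed).

    Ties are resolved in expectation: each bidder gets half the surplus.
    """
    if b0 == b1:
        share = 0.5 * (value - b0)  # price equals the common bid in both formats
        return share, share
    high, low = max(b0, b1), min(b0, b1)
    # First-price: winner pays own bid. Second-price: winner pays the losing bid.
    price = high if auction == "first" else low
    surplus = value - price
    return (surplus, 0.0) if b0 > b1 else (0.0, surplus)


def simulate(auction="first", n_rounds=20000, n_bids=11,
             alpha=0.1, gamma=0.9, seed=0):
    """Run two stateless epsilon-greedy Q-learners; return their greedy bids.

    All hyperparameters are illustrative assumptions, not the paper's values.
    """
    rng = random.Random(seed)
    grid = [i / (n_bids - 1) for i in range(n_bids)]  # bids 0.0, 0.1, ..., 1.0
    q = [[0.0] * n_bids for _ in range(2)]            # one Q-value per bid

    for t in range(n_rounds):
        eps = max(0.01, 1.0 - t / n_rounds)  # exploration decays linearly
        acts = []
        for i in range(2):
            if rng.random() < eps:
                acts.append(rng.randrange(n_bids))  # explore
            else:
                acts.append(max(range(n_bids), key=lambda a: q[i][a]))  # exploit
        rewards = payoffs(grid[acts[0]], grid[acts[1]], auction=auction)
        for i in range(2):
            a = acts[i]
            # Standard Q-learning update with a single (empty) state.
            target = rewards[i] + gamma * max(q[i])
            q[i][a] += alpha * (target - q[i][a])

    return [grid[max(range(n_bids), key=lambda a: q[i][a])] for i in range(2)]


if __name__ == "__main__":
    for fmt in ("first", "second"):
        print(fmt, simulate(auction=fmt))
```

Running the script prints the bid each learner would choose greedily at the end of training under each format. Outcomes depend on the seed and the assumed hyperparameters, so a single run should not be read as reproducing the paper's findings.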

Games of Artificial Intelligence: A Continuous-Time Approach

Feb 12, 2022

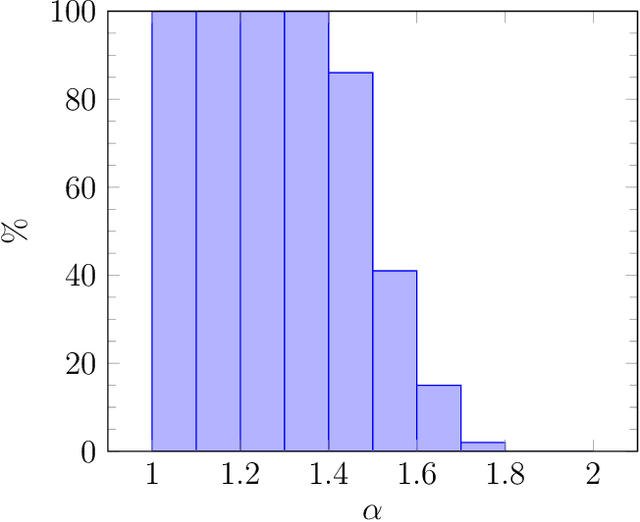

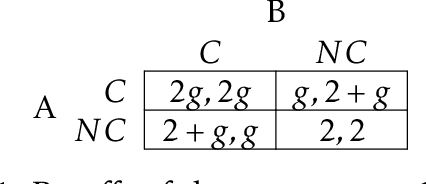

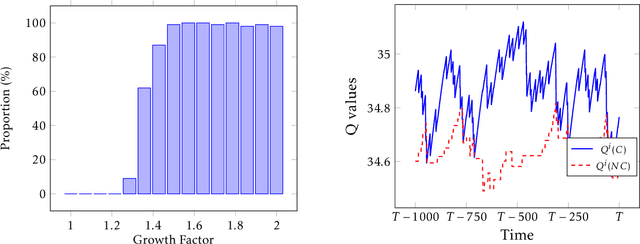

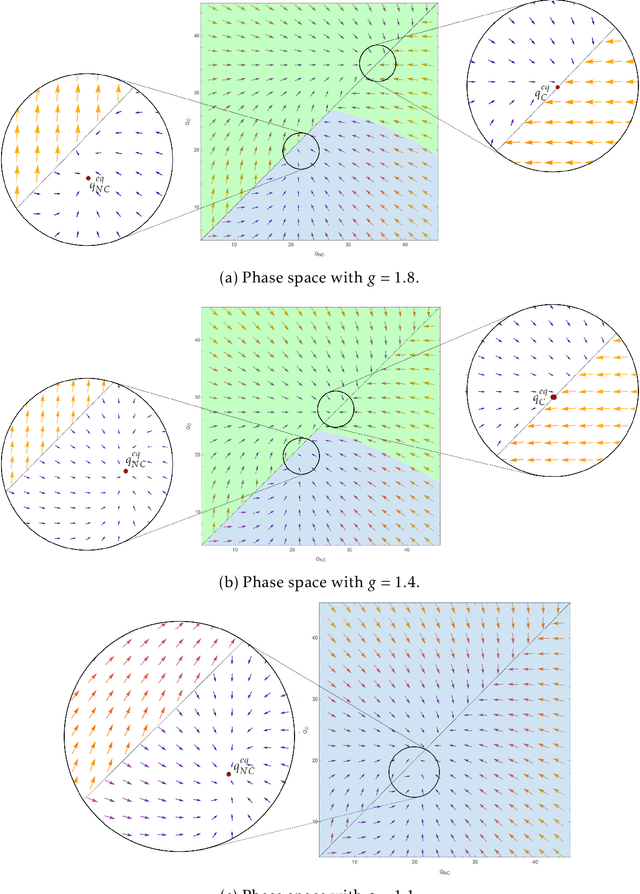

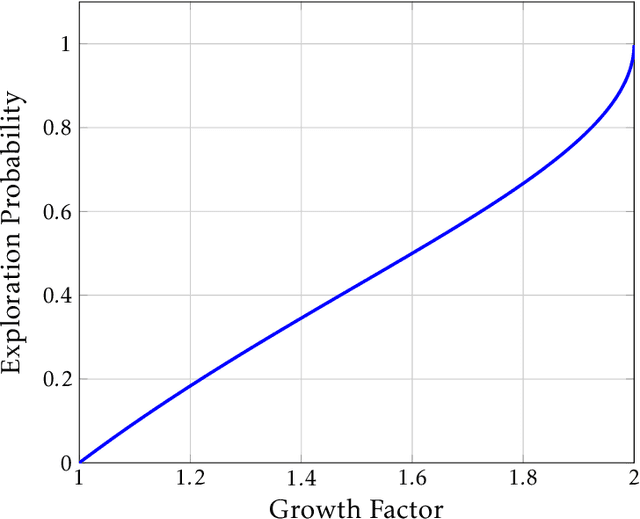

Abstract: This paper studies the strategic interaction of algorithms in economic games. We analyze games where learning algorithms play against each other while searching for the best strategy. We first establish a fluid approximation technique that enables us to characterize the learning outcomes in continuous time. This tool allows us to identify the equilibria of games played by Artificial Intelligence algorithms and to perform comparative statics analysis. Thus, our results bridge a gap between traditional learning theory and applied models, allowing quantitative analysis of traditionally experimental systems. We describe the outcomes of a social dilemma, and we provide analytical guidance for the design of pricing algorithms in a Bertrand game. We uncover a new phenomenon, the coordination bias, which explains how algorithms may fail to learn dominant strategies.
