Marcus Weber

Effective Dynamics and Transition Pathways from Koopman-Inspired Neural Learning of Collective Variables

Apr 07, 2026

Abstract: The ISOKANN (Invariant Subspaces of Koopman Operators Learned by Artificial Neural Networks) framework provides a data-driven route to extract collective variables (CVs) and effective dynamics from complex molecular systems. In this work, we integrate the theoretical foundation of Koopman operators with Krylov-like subspace algorithms and reduced dynamical modeling to build a coherent picture of how metastable transitions in high-dimensional systems can be described in terms of CVs. Starting from the identification of CVs from dominant invariant subspaces, we derive the corresponding effective dynamics on the latent space and connect them to transition rates and times, committor functions, and transition pathways. The combination of Koopman-based learning and reduced-dimensional effective dynamics yields a principled framework for computing transition rates and pathways from simulation data. Numerical experiments on one-, two-, and three-dimensional benchmark potentials illustrate the ability of ISOKANN to reconstruct the coarse-grained kinetics and reproduce transition times across enthalpic and entropic barriers.
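To make the fixed-point idea behind ISOKANN concrete, here is a minimal sketch under stated assumptions: a toy Metropolis random walk on a 1D grid with the double-well potential V(x) = (x^2 - 1)^2 stands in for the molecular system, the lag-time transition matrix plays the role of the Koopman operator K, and a plain vector replaces the neural network. The iteration chi <- S(K chi), with S a min-max shift-scale onto [0, 1], converges to the dominant non-constant invariant subspace; the slope a of the affine relation K chi = a chi + b then yields an implied transition timescale. The potential, beta, grid, lag time and all function names are illustrative choices, not the setup used in the paper.

```python
import numpy as np

# Toy model (assumption): Metropolis random walk on a 1D grid in the
# double-well potential V(x) = (x^2 - 1)^2 at inverse temperature beta.
beta = 5.0
x = np.linspace(-1.75, 1.75, 71)
V = (x**2 - 1.0) ** 2
n = x.size

# Nearest-neighbour Metropolis chain: propose left/right with probability 1/2,
# accept with min(1, exp(-beta * dV)); proposals off the grid are rejected.
P = np.zeros((n, n))
for i in range(n):
    for j in (i - 1, i + 1):
        if 0 <= j < n:
            P[i, j] = 0.5 * min(1.0, np.exp(-beta * (V[j] - V[i])))
    P[i, i] = 1.0 - P[i].sum()  # rejected moves stay put

# Lazy chain (I + P) / 2 so that all eigenvalues lie in [0, 1].
P = 0.5 * (np.eye(n) + P)

tau = 100                               # lag time in steps (assumed)
K = np.linalg.matrix_power(P, tau)      # discrete stand-in for the Koopman operator


def shift_scale(f):
    """Affine map S onto [0, 1]: removes the constant mode and fixes the scale."""
    return (f - f.min()) / (f.max() - f.min())


# ISOKANN-style iteration chi <- S(K chi), here on a plain vector instead of a
# neural network, starting from a linear guess in x.
chi = shift_scale(x)
for _ in range(200):
    chi = shift_scale(K @ chi)

# At the fixed point K chi = a * chi + b, and since chi spans [0, 1] the range
# of K chi equals a = lambda_2^tau.
Kchi = K @ chi
a = Kchi.max() - Kchi.min()
timescale = -tau / np.log(a)            # implied timescale, in single steps

# Cross-check: second eigenvalue of the pi-symmetrized (reversible) chain.
pi = np.exp(-beta * V)
pi /= pi.sum()
s = np.sqrt(pi)
A = s[:, None] * P / s[None, :]
lam = np.sort(np.linalg.eigvalsh(0.5 * (A + A.T)))[::-1]
print(f"a = {a:.8f}, lambda_2^tau = {lam[1] ** tau:.8f}, t_impl = {timescale:.1f}")
```

In a neural-network setting one would typically replace the exact product K @ chi by Monte Carlo averages of chi over short trajectory bursts started at sampled points, and then fit the network to the shift-scaled targets; that variant is not shown here.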

Towards a FAIR Documentation of Workflows and Models in Applied Mathematics

Mar 26, 2024

Abstract: Modeling-Simulation-Optimization workflows play a fundamental role in applied mathematics. The Mathematical Research Data Initiative, MaRDI, responded to this by developing a FAIR and machine-interpretable template for the comprehensive documentation of such workflows. MaRDMO, a plugin for the Research Data Management Organiser, enables scientists from diverse fields to seamlessly document and publish their workflows on the MaRDI Portal using the MaRDI template. Central to these workflows are mathematical models. MaRDI addresses them with the MathModDB ontology, which offers a structured formal model description. Here, we showcase the interaction between MaRDMO and the MathModDB Knowledge Graph through an algebraic modeling workflow from the Digital Humanities. This demonstration underscores the versatility of both services beyond their original numerical domain.

The Mathematics of Comparing Objects

Jan 14, 2022

Abstract: "After reading two different crime stories, an artificial intelligence concludes that in both stories the police have found the murderer purely by chance." To what extent, and under which assumptions, is this a description of a realistic scenario?

The Complexity of Comparative Text Analysis -- "The Gardener is always the Murderer" says the Fourth Machine

Dec 11, 2020

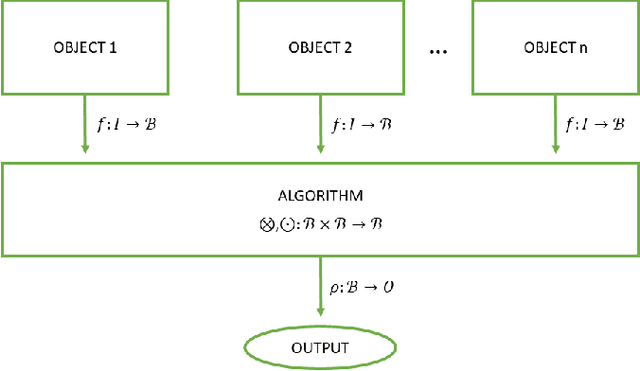

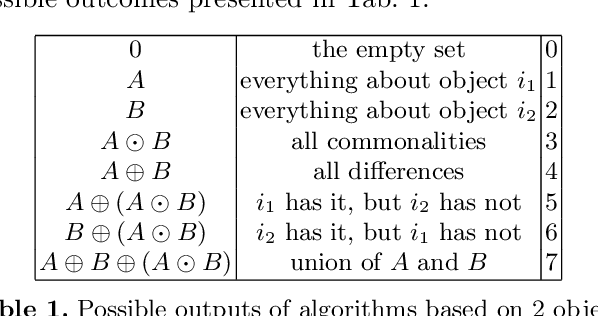

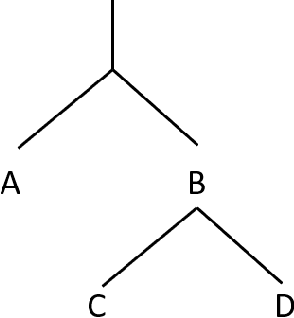

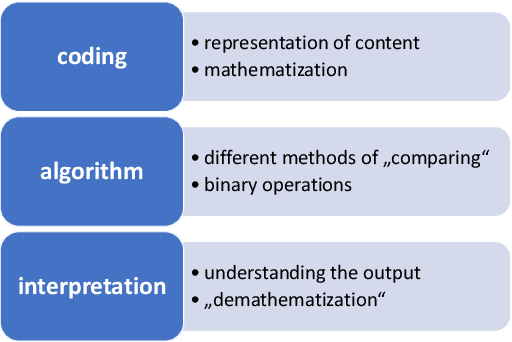

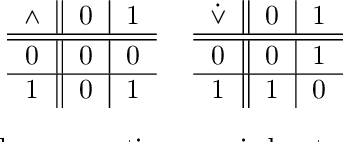

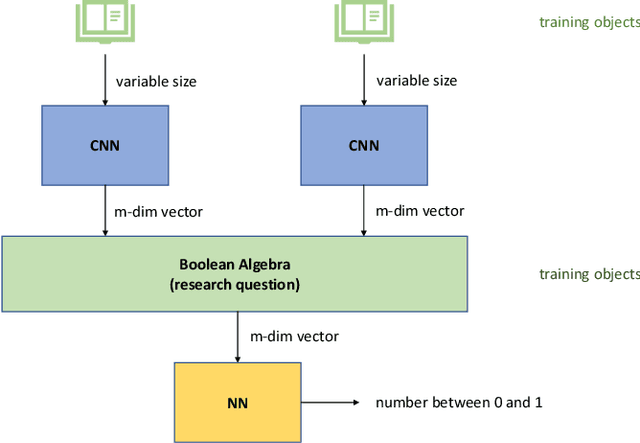

Abstract: There is a heated debate about how far computers can capture the complexity of text analysis compared to the abilities of a whole team of human researchers. A "deep" analysis of a given text is still beyond the capabilities of modern computers. At the heart of existing computational text analysis algorithms are operations with real numbers, such as additions and multiplications according to the rules of algebraic fields. However, the process of "comparing" has a very precise mathematical structure, which differs from the structure of an algebraic field. This structure can be expressed using Boolean rings. We build on it and define the corresponding algebraic equations, lifting algorithms of comparative text analysis onto the "correct" algebraic basis. From this point of view, we can investigate the question of the *computational* complexity of comparative text analysis.
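The algebraic point about "comparing" can be illustrated with a small sketch, assuming (hypothetically) that each text has already been reduced to a set of binary features. Sets under symmetric difference (XOR) and intersection (AND) form a Boolean ring: XOR collects where two texts differ, AND collects what they share, and every element satisfies a·a = a and a + a = 0, which no field of real numbers does. The feature names, the bitmask encoding and the helper functions are invented for illustration; this is not the paper's construction of the algebraic equations.

```python
from itertools import product

# Hypothetical encoding: each text is reduced to a set of binary features,
# stored as the bits of an integer (one element of the Boolean ring).
FEATURES = ["murderer_found", "found_by_chance", "gardener_guilty", "confession"]
ONE = (1 << len(FEATURES)) - 1  # multiplicative unit: all features present


def encode(features):
    """Map a set of feature names to a bitmask."""
    mask = 0
    for k, name in enumerate(FEATURES):
        if name in features:
            mask |= 1 << k
    return mask


def decode(mask):
    """Map a bitmask back to the set of feature names it contains."""
    return {name for k, name in enumerate(FEATURES) if (mask >> k) & 1}


# Boolean ring operations on bitmasks:
#   addition       a + b = a XOR b  (symmetric difference: where the texts differ)
#   multiplication a * b = a AND b  (intersection: what the texts share)
def add(a, b):
    return a ^ b


def mul(a, b):
    return a & b


story_a = encode({"murderer_found", "found_by_chance", "confession"})
story_b = encode({"murderer_found", "found_by_chance", "gardener_guilty"})

common = mul(story_a, story_b)
differ = add(story_a, story_b)
print("shared:", sorted(decode(common)))
print("differ:", sorted(decode(differ)))

# Check the Boolean-ring laws exhaustively on all 2^4 elements.
elements = range(1 << len(FEATURES))
for a, b, c in product(elements, repeat=3):
    assert mul(a, a) == a                                  # idempotent: a*a = a
    assert add(a, a) == 0                                  # characteristic 2: a + a = 0
    assert mul(a, ONE) == a                                # unit element
    assert mul(a, add(b, c)) == add(mul(a, b), mul(a, c))  # distributivity
    assert add(a, b) == add(b, a) and mul(a, b) == mul(b, a)
```

Encoding features as bits keeps both ring operations as single integer instructions, which is one reason Boolean rings are convenient when asking about computational complexity.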
